Optimal Representations for Covariate Shift

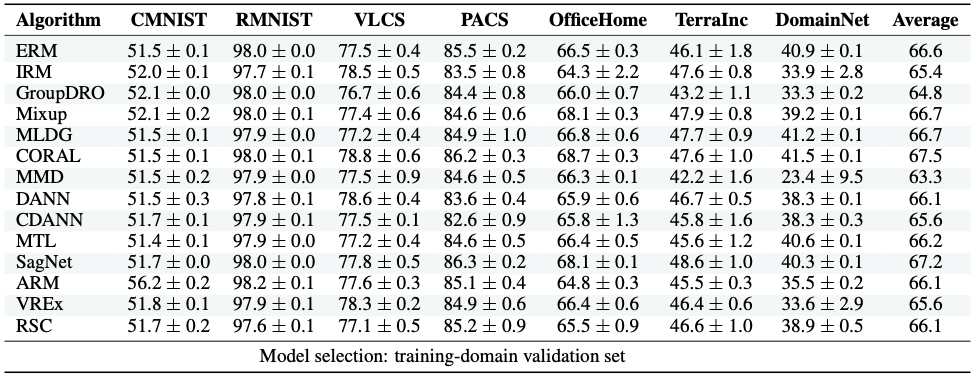

Machine learning systems often experience a distribution shift between training and testing. In this paper, we introduce a simple variational objective whose optima are exactly the set of all representations on which risk minimizers are guaranteed to be robust to any distribution shift that preserves the Bayes predictor, e.g., covariate shifts. Our objective has two components. First, a representation must remain discriminative for the task, i.e., some predictor must be able to simultaneously minimize the source and target risk. Second, the representation's marginal support needs to be the same across source and target. We make this practical by designing self-supervised objectives that only use unlabelled data and augmentations to train robust representations. Our objectives give insights into the robustness of CLIP, and further improve CLIP's representations to achieve state-of-the-art results on DomainBed.
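To make the two components concrete, the following is a toy sketch, not the paper's variational objective. It assumes a finite set of inputs with deterministic labels, so "some predictor minimizes both risks" reduces to "no representation value is paired with two different labels", and "same marginal support" reduces to set equality. The data, the encoders, and the helper names `supports_match` and `shared_predictor_exists` are all hypothetical and chosen only for illustration.

```python
# Toy check of the two conditions from the abstract on a finite dataset.
# Assumptions (not from the paper): deterministic labels, discrete representations.

def supports_match(encode, source, target):
    """Second component: the set of representation values is identical across domains."""
    source_support = {encode(x) for x, _ in source}
    target_support = {encode(x) for x, _ in target}
    return source_support == target_support

def shared_predictor_exists(encode, source, target):
    """First component: one predictor z -> y fits both domains with zero error.

    With deterministic labels this holds iff each representation value
    is associated with a single label across source and target together.
    """
    label_of = {}
    for x, y in source + target:
        z = encode(x)
        if label_of.setdefault(z, y) != y:
            return False
    return True

# Hypothetical inputs are (style, shape) pairs; the label is the shape.
source = [(("photo", "cat"), "cat"), (("photo", "dog"), "dog")]
target = [(("sketch", "cat"), "cat"), (("sketch", "dog"), "dog")]

def keep_all(x):    # keeps the domain-specific style
    return x

def shape_only(x):  # drops style, keeps the label-relevant part
    return x[1]

def constant(x):    # discards everything
    return 0

results = {
    enc.__name__: (shared_predictor_exists(enc, source, target),
                   supports_match(enc, source, target))
    for enc in (keep_all, shape_only, constant)
}
print(results)
```

In this toy setting only `shape_only` satisfies both conditions: `keep_all` stays discriminative but its source and target supports are disjoint, while `constant` matches supports but cannot separate the labels.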

ICLR 2022

Results from the Paper

Ranked #38 on Image Classification on ObjectNet (using extra training data)

PACS

ObjectNet
