PC-DARTS: Partial Channel Connections for Memory-Efficient Architecture Search

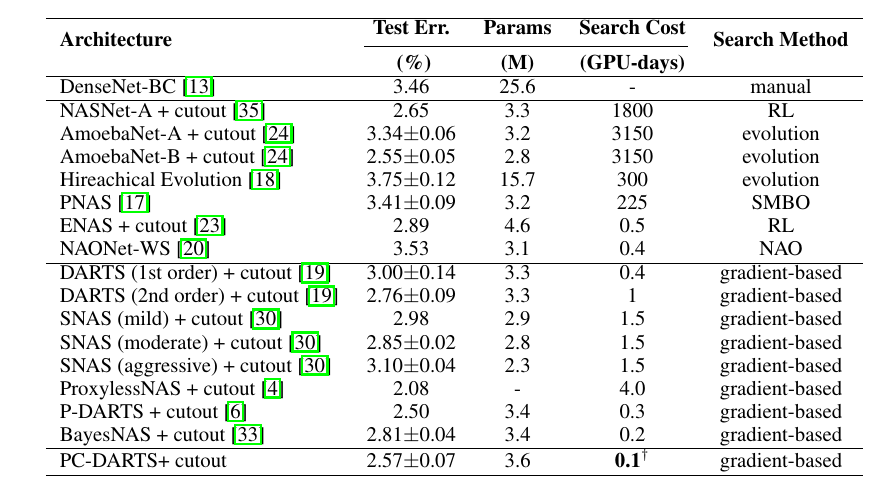

Differentiable architecture search (DARTS) provided a fast solution in finding effective network architectures, but suffered from large memory and computing overheads in jointly training a super-network and searching for an optimal architecture. In this paper, we present a novel approach, namely, Partially-Connected DARTS, by sampling a small part of super-network to reduce the redundancy in exploring the network space, thereby performing a more efficient search without comprising the performance. In particular, we perform operation search in a subset of channels while bypassing the held out part in a shortcut. This strategy may suffer from an undesired inconsistency on selecting the edges of super-net caused by sampling different channels. We alleviate it using edge normalization, which adds a new set of edge-level parameters to reduce uncertainty in search. Thanks to the reduced memory cost, PC-DARTS can be trained with a larger batch size and, consequently, enjoys both faster speed and higher training stability. Experimental results demonstrate the effectiveness of the proposed method. Specifically, we achieve an error rate of 2.57% on CIFAR10 with merely 0.1 GPU-days for architecture search, and a state-of-the-art top-1 error rate of 24.2% on ImageNet (under the mobile setting) using 3.8 GPU-days for search. Our code has been made available at: https://github.com/yuhuixu1993/PC-DARTS.

PDF Abstract ICLR 2020 PDF ICLR 2020 Abstract

CIFAR-10

CIFAR-10

ImageNet

ImageNet

MS COCO

MS COCO

CIFAR-100

CIFAR-100

ssd

ssd