Private, fair and accurate: Training large-scale, privacy-preserving AI models in medical imaging

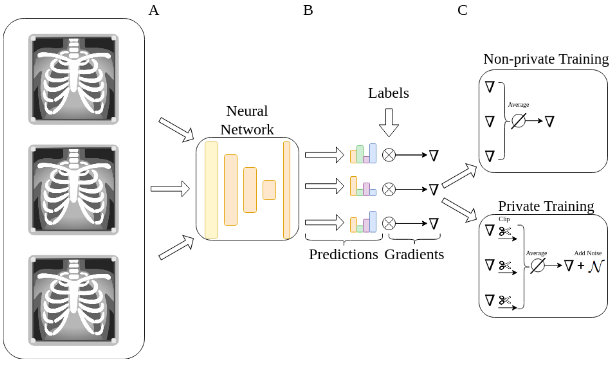

Artificial intelligence (AI) models are increasingly used in the medical domain. However, as medical data is highly sensitive, special precautions to ensure its protection are required. The gold standard for privacy preservation is the introduction of differential privacy (DP) to model training. Prior work indicates that DP has negative implications on model accuracy and fairness, which are unacceptable in medicine and represent a main barrier to the widespread use of privacy-preserving techniques. In this work, we evaluated the effect of privacy-preserving training of AI models regarding accuracy and fairness compared to non-private training. For this, we used two datasets: (1) A large dataset (N=193,311) of high quality clinical chest radiographs, and (2) a dataset (N=1,625) of 3D abdominal computed tomography (CT) images, with the task of classifying the presence of pancreatic ductal adenocarcinoma (PDAC). Both were retrospectively collected and manually labeled by experienced radiologists. We then compared non-private deep convolutional neural networks (CNNs) and privacy-preserving (DP) models with respect to privacy-utility trade-offs measured as area under the receiver-operator-characteristic curve (AUROC), and privacy-fairness trade-offs, measured as Pearson's r or Statistical Parity Difference. We found that, while the privacy-preserving trainings yielded lower accuracy, they did largely not amplify discrimination against age, sex or co-morbidity. Our study shows that -- under the challenging realistic circumstances of a real-life clinical dataset -- the privacy-preserving training of diagnostic deep learning models is possible with excellent diagnostic accuracy and fairness.

PDF Abstract