Text-based RL Agents with Commonsense Knowledge: New Challenges, Environments and Baselines

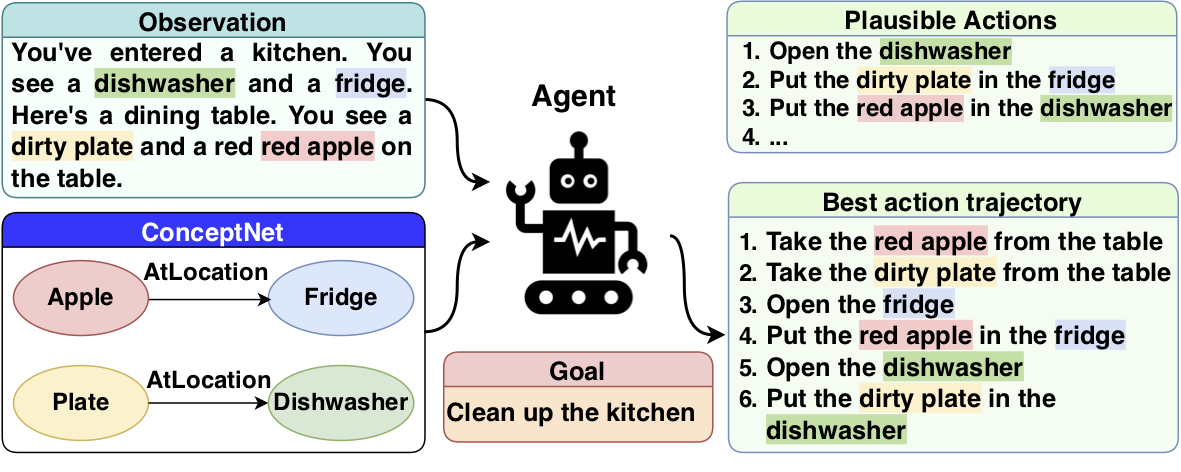

Text-based games have emerged as an important test-bed for Reinforcement Learning (RL) research, requiring RL agents to combine grounded language understanding with sequential decision making. In this paper, we examine the problem of infusing RL agents with commonsense knowledge. Such knowledge would allow agents to act efficiently in the world by pruning out implausible actions, and to perform look-ahead planning to determine how current actions might affect future world states. We design a new text-based gaming environment called TextWorld Commonsense (TWC) for training and evaluating RL agents with a specific kind of commonsense knowledge about objects, their attributes, and affordances. We also introduce several baseline RL agents which track the sequential context and dynamically retrieve the relevant commonsense knowledge from ConceptNet. We show that agents which incorporate commonsense knowledge in TWC perform better while acting more efficiently. We conduct user studies to estimate human performance on TWC and show that there is ample room for future improvement.
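The abstract describes two uses of commonsense knowledge: retrieving the facts relevant to the current game state from ConceptNet, and using them to prune implausible actions. The sketch below illustrates that idea under simplifying assumptions. The knowledge graph is a tiny hand-written list of ConceptNet-style `AtLocation` triples (not real ConceptNet data), the entities are assumed to be already extracted from the observation text, and implausible `put X in Y` actions are removed with a hard rule. The helper names (`retrieve_subgraph`, `is_plausible`, `prune_actions`) are hypothetical. This is not the paper's method: its baselines are RL agents, not rule-based filters.

```python
import re
from typing import NamedTuple


class Triple(NamedTuple):
    head: str
    relation: str
    tail: str


# Hypothetical, hand-written ConceptNet-style triples (illustrative, not real ConceptNet data).
KNOWLEDGE = [
    Triple("dirty dish", "AtLocation", "dishwasher"),
    Triple("milk", "AtLocation", "refrigerator"),
    Triple("shirt", "AtLocation", "wardrobe"),
    Triple("dishwasher", "AtLocation", "kitchen"),
]

# Matches placement commands such as "put milk in refrigerator" or "put cup on table".
PUT_PATTERN = re.compile(r"^put (.+?) (?:in|on) (.+)$")


def retrieve_subgraph(entities: list[str], knowledge: list[Triple]) -> list[Triple]:
    """Keep only triples whose head and tail are both entities seen in the game."""
    seen = set(entities)
    return [t for t in knowledge if t.head in seen and t.tail in seen]


def is_plausible(action: str, subgraph: list[Triple]) -> bool:
    """Accept a placement only if the subgraph says the object belongs there.

    Non-placement actions are not judged by this sketch and are always accepted.
    """
    match = PUT_PATTERN.match(action)
    if match is None:
        return True
    obj, container = match.groups()
    return Triple(obj, "AtLocation", container) in subgraph


def prune_actions(actions: list[str], entities: list[str], knowledge: list[Triple]) -> list[str]:
    """Retrieve the relevant subgraph, then drop placements it does not support."""
    subgraph = retrieve_subgraph(entities, knowledge)
    return [a for a in actions if is_plausible(a, subgraph)]


if __name__ == "__main__":
    entities = ["dirty dish", "milk", "dishwasher", "refrigerator", "kitchen"]
    actions = [
        "put dirty dish in dishwasher",
        "put dirty dish in refrigerator",
        "put milk in refrigerator",
        "put milk in dishwasher",
        "open refrigerator",
    ]
    print(prune_actions(actions, entities, KNOWLEDGE))
```

With these example entities, only the placements backed by a retrieved triple survive. Actions that are not placements, such as `open refrigerator`, pass through untouched.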


Results from the Paper

| Task | Dataset | Model | Metric Name | Metric Value | Global Rank |
|---|---|---|---|---|---|
| Commonsense Reasoning for RL | commonsense-rl | Human | Avg #Steps | 15.00 ± 3.29 | #1 |
| Commonsense Reasoning for RL | commonsense-rl | Optimal | Avg #Steps | 15.00 ± 2.00 | #1 |
| Commonsense Reasoning for RL | commonsense-rl | TNC-A2C | Avg #Steps | 43.27 ± 0.70 | #3 |
| Commonsense Reasoning for RL | commonsense-rl | LSTM-A2C | Avg #Steps | 49.21 ± 0.58 | #4 |
| Commonsense Reasoning for RL | commonsense-rl | KG-A2C | Avg #Steps | 49.36 ± 7.50 | #5 |
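One reading of the Global Rank column is standard competition ranking on the mean Avg #Steps, where fewer steps is better. Under that reading, tied entries share a rank and the next entry skips ahead, which would explain why Human and Optimal are both #1 and the next model is #3. This is an assumption, since the leaderboard does not state its ranking rule. The sketch below uses only the means from the table and ignores the ± spread. `competition_rank` is a hypothetical helper.

```python
# Mean Avg #Steps copied from the results table (the ± spread is ignored here).
AVG_STEPS = {
    "Human": 15.00,
    "Optimal": 15.00,
    "TNC-A2C": 43.27,
    "LSTM-A2C": 49.21,
    "KG-A2C": 49.36,
}


def competition_rank(scores: dict[str, float]) -> dict[str, int]:
    """Rank by ascending score; ties share the best rank and the next rank is skipped."""
    ordered = sorted(scores.items(), key=lambda kv: kv[1])
    ranks: dict[str, int] = {}
    for position, (name, value) in enumerate(ordered, start=1):
        if position > 1 and ordered[position - 2][1] == value:
            # Same score as the previous entry: reuse its rank.
            ranks[name] = ranks[ordered[position - 2][0]]
        else:
            ranks[name] = position
    return ranks


if __name__ == "__main__":
    for name, rank in competition_rank(AVG_STEPS).items():
        print(f"#{rank} {name}")
```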

Datasets

ConceptNet