Toward DNN of LUTs: Learning Efficient Image Restoration with Multiple Look-Up Tables

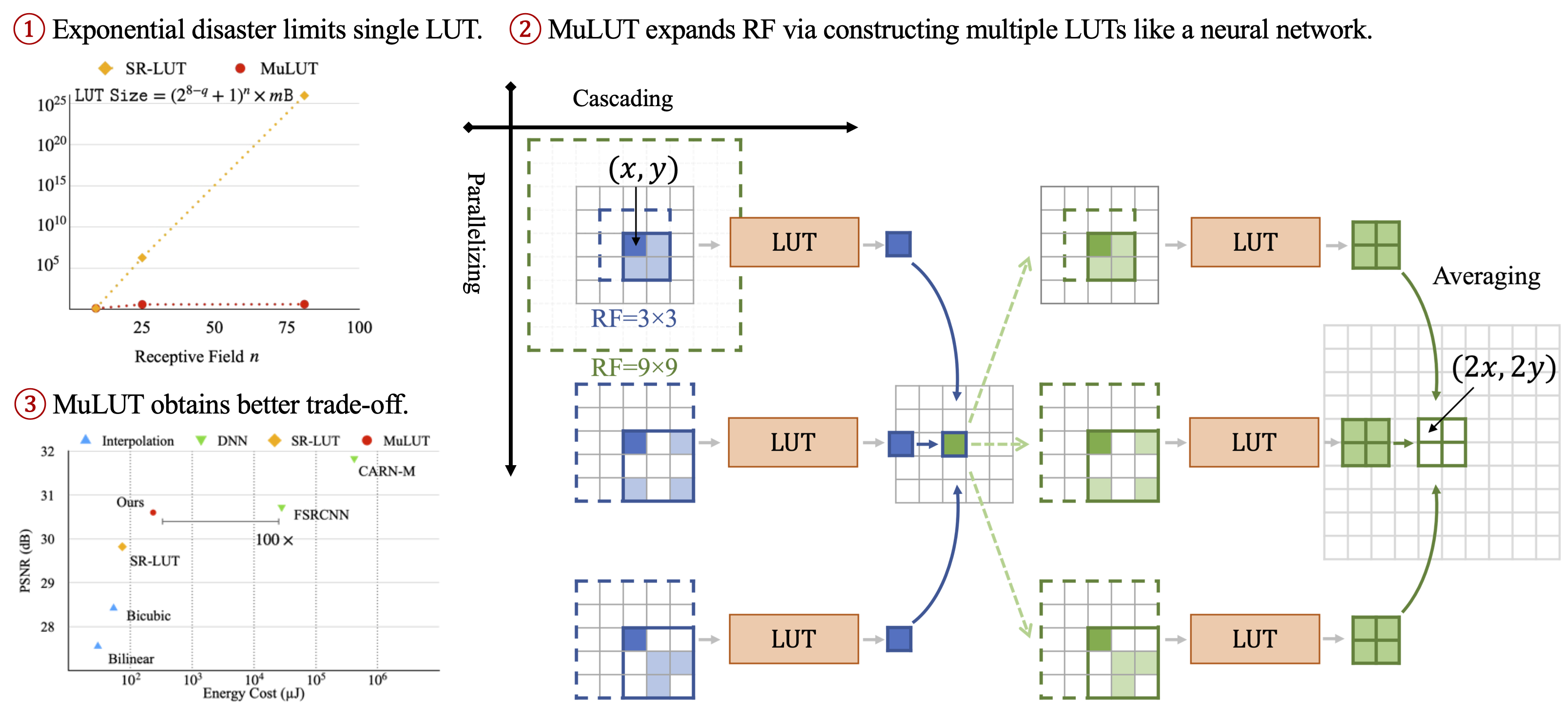

The widespread use of high-definition screens on edge devices creates a strong demand for efficient image restoration algorithms. Caching deep learning models in a look-up table (LUT) has recently been introduced to meet this demand. However, the size of a single LUT grows exponentially with its indexing capacity, which restricts its receptive field and thus its performance. To overcome this intrinsic limitation of the single-LUT solution, we propose a universal method, termed MuLUT, that constructs multiple LUTs like a neural network. First, we devise novel complementary indexing patterns, together with a general implementation for arbitrary patterns, to construct multiple LUTs in parallel. Second, we propose a re-indexing mechanism that enables hierarchical indexing between cascaded LUTs. Finally, we introduce channel indexing to allow cross-channel interaction, enabling LUTs to process color channels jointly. With these principled designs, the total size of MuLUT grows linearly with its indexing capacity, yielding a practical way to obtain superior performance with an enlarged receptive field. We examine the advantages of MuLUT on various image restoration tasks, including super-resolution, demosaicing, denoising, and deblocking. MuLUT achieves significant improvements over the single-LUT solution, e.g., up to 1.1 dB PSNR for super-resolution and up to 2.8 dB PSNR for grayscale denoising, while preserving its efficiency: it consumes 100× less energy than lightweight deep neural networks. Our code and trained models are publicly available at https://github.com/ddlee-cn/MuLUT.
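The contrast between exponential growth for a single LUT and linear growth for multiple LUTs can be made concrete with rough arithmetic. The sketch below is a simplified sizing model, not the MuLUT code. It assumes SR-LUT-style settings: 8-bit inputs sampled every 16 levels, which gives 17 levels per indexed pixel, and ×4 super-resolution storing 16 int8 output values per entry. The function names are hypothetical. Under these assumptions, one LUT indexed by 4 pixels takes 17^4 × 16 = 1,336,336 bytes (about 1.27 MiB). Each extra indexed pixel multiplies that by 17, while adding another 4-pixel LUT only adds one more copy of that size.

```python
# Simplified LUT sizing model (illustrative assumptions, not MuLUT's exact configuration).

def lut_entries(num_index_pixels: int, samples_per_dim: int = 17) -> int:
    """Entries in one LUT indexed by `num_index_pixels` inputs, each quantized
    to `samples_per_dim` levels (assumed: 8-bit values sampled every 16 levels
    -> 256 // 16 + 1 = 17 levels)."""
    return samples_per_dim ** num_index_pixels


def lut_bytes(num_index_pixels: int, outputs_per_entry: int,
              samples_per_dim: int = 17, bytes_per_value: int = 1) -> int:
    """Storage of one LUT: entries x outputs per entry x bytes per value."""
    return (lut_entries(num_index_pixels, samples_per_dim)
            * outputs_per_entry * bytes_per_value)


def multi_lut_bytes(num_luts: int, num_index_pixels: int, outputs_per_entry: int,
                    samples_per_dim: int = 17, bytes_per_value: int = 1) -> int:
    """Storage of several same-shaped LUTs: grows linearly with `num_luts`."""
    return num_luts * lut_bytes(num_index_pixels, outputs_per_entry,
                                samples_per_dim, bytes_per_value)


if __name__ == "__main__":
    scale = 4                   # assumed x4 super-resolution
    outputs = scale * scale     # each entry stores an r x r output patch
    # Enlarging a single LUT's index: size is multiplied by 17 per extra pixel.
    for n in (4, 6, 9):
        size = lut_bytes(n, outputs)
        print(f"single LUT, {n} indexed pixels: {size / 2**20:,.1f} MiB")
    # Adding more 4-pixel LUTs instead: size grows by one LUT's worth each time.
    for k in (1, 2, 4):
        size = multi_lut_bytes(k, 4, outputs)
        print(f"{k} LUT(s) x 4 indexed pixels: {size / 2**20:,.2f} MiB")
```

This model ignores details such as interpolation between sampled entries, rotation ensembles, and the specific indexing patterns or cascade depth used by MuLUT. It only illustrates why spreading the receptive field over several small LUTs keeps storage practical, while a single LUT with a larger index quickly becomes infeasible.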


BSD

DIV2K

Urban100

Set14

Manga109

Set12

McMaster