Search Results for author: Charles Y. Zheng

Found 7 papers, 1 paper with code

Distributed Weight Consolidation: A Brain Segmentation Case Study

no code implementations • NeurIPS 2018 • Patrick McClure, Charles Y. Zheng, Jakub R. Kaczmarzyk, John A. Lee, Satrajit S. Ghosh, Dylan Nielson, Peter Bandettini, Francisco Pereira

Collecting the large datasets needed to train deep neural networks can be very difficult, particularly for the many applications for which sharing and pooling data is complicated by practical, ethical, or legal concerns.

Estimating mutual information in high dimensions via classification error

no code implementations • 16 Jun 2016 • Charles Y. Zheng, Yuval Benjamini

Multivariate pattern analysis approaches in neuroimaging are fundamentally concerned with investigating the quantity and type of information processed by various regions of the human brain; typically, estimates of classification accuracy are used to quantify information.

How many faces can be recognized? Performance extrapolation for multi-class classification

no code implementations • 16 Jun 2016 • Charles Y. Zheng, Rakesh Achanta, Yuval Benjamini

Using data from a subset of the classes, can we predict how well a classifier will scale with an increased number of classes?

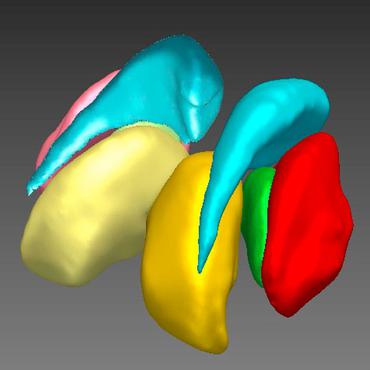

Deconvolution of High Dimensional Mixtures via Boosting, with Application to Diffusion-Weighted MRI of Human Brain

no code implementations • NeurIPS 2014 • Charles Y. Zheng, Franco Pestilli, Ariel Rokem

Diffusion-weighted magnetic resonance imaging (DWI) and fiber tractography are the only methods to measure the structure of the white matter in the living human brain.

Revealing interpretable object representations from human behavior

no code implementations • ICLR 2019 • Charles Y. Zheng, Francisco Pereira, Chris I. Baker, Martin N. Hebart

To study how mental object representations are related to behavior, we estimated sparse, non-negative representations of objects using human behavioral judgments on images representative of 1,854 object categories.

Testing for context-dependent changes in neural encoding in naturalistic experiments

no code implementations • 17 Nov 2022 • Yenho Chen, Carl W. Harris, Xiaoyu Ma, Zheng Li, Francisco Pereira, Charles Y. Zheng

We propose a decoding-based approach to detect context effects on neural codes in longitudinal neural recording data.

VICE: Variational Interpretable Concept Embeddings

1 code implementation • 2 May 2022 • Lukas Muttenthaler, Charles Y. Zheng, Patrick McClure, Robert A. Vandermeulen, Martin N. Hebart, Francisco Pereira

This paper introduces Variational Interpretable Concept Embeddings (VICE), an approximate Bayesian method for embedding object concepts in a vector space using data collected from humans in a triplet odd-one-out task.