Search Results for author: Zhilei Liu

Found 20 papers, 2 papers with code

Teacher-Student Network for Real-World Face Super-Resolution with Progressive Embedding of Edge Information

no code implementations • 8 May 2024 • Zhilei Liu, Chenggong Zhang

Moreover, because of the existence of a domain gap, the semantic feature information of the target domain may be affected when synthetic data and real data are utilized to train super-resolution models simultaneously.

NeRF-AD: Neural Radiance Field with Attention-based Disentanglement for Talking Face Synthesis

no code implementations • 23 Jan 2024 • Chongke Bi, Xiaoxing Liu, Zhilei Liu

However, most existing NeRF-based methods either burden NeRF with complex learning tasks while lacking methods for supervised multimodal feature fusion, or cannot precisely map audio to the facial region related to speech movements.

Self-supervised Facial Action Unit Detection with Region and Relation Learning

no code implementations • 10 Mar 2023 • Juan Song, Zhilei Liu

Facial action unit (AU) detection is a challenging task due to the scarcity of manual annotations.

Face Super-Resolution with Progressive Embedding of Multi-scale Face Priors

no code implementations • 12 Oct 2022 • Chenggong Zhang, Zhilei Liu

In this paper, we propose a novel recurrent convolutional network based framework for face super-resolution, which progressively introduces both global shape and local texture information.

Cross-subject Action Unit Detection with Meta Learning and Transformer-based Relation Modeling

no code implementations • 18 May 2022 • Jiyuan Cao, Zhilei Liu, Yong Zhang

Ablation study and visualization show that our MARL can eliminate identity-caused differences, thus obtaining a robust and generalized AU discriminative embedding representation.

Talking Head Generation Driven by Speech-Related Facial Action Units and Audio- Based on Multimodal Representation Fusion

no code implementations • 27 Apr 2022 • Sen Chen, Zhilei Liu, Jiaxing Liu, Longbiao Wang

We utilize a pre-trained AU classifier to ensure that the generated images contain correct AU information.

Talking Head Generation with Audio and Speech Related Facial Action Units

no code implementations • 19 Oct 2021 • Sen Chen, Zhilei Liu, Jiaxing Liu, Zhengxiang Yan, Longbiao Wang

Quantitative and qualitative experiments demonstrate that our method outperforms existing methods in both image quality and lip-sync accuracy.

Action Unit Detection with Joint Adaptive Attention and Graph Relation

no code implementations • 9 Jul 2021 • Chenggong Zhang, Juan Song, Qingyang Zhang, Weilong Dong, Ruomeng Ding, Zhilei Liu

This paper describes an approach to facial action unit (AU) detection.

Teacher-Student Competition for Unsupervised Domain Adaptation

no code implementations • 19 Oct 2020 • Ruixin Xiao, Zhilei Liu, Baoyuan Wu

With supervision from the source domain only at the class level, existing unsupervised domain adaptation (UDA) methods mainly learn domain-invariant representations from a shared feature extractor, which causes the source-bias problem.

JÂA-Net: Joint Facial Action Unit Detection and Face Alignment via Adaptive Attention

1 code implementation • 18 Mar 2020 • Zhiwen Shao, Zhilei Liu, Jianfei Cai, Lizhuang Ma

Moreover, to extract precise local features, we propose an adaptive attention learning module to refine the attention map of each AU adaptively.

Joint Face Completion and Super-resolution using Multi-scale Feature Relation Learning

no code implementations • 29 Feb 2020 • Zhilei Liu, Yunpeng Wu, Le Li, Cuicui Zhang, Baoyuan Wu

This paper proposes a multi-scale feature graph generative adversarial network (MFG-GAN) to restore face images in which both degradation modes coexist, as well as images with a single type of degradation.

Controllable Descendant Face Synthesis

no code implementations • 26 Feb 2020 • Yong Zhang, Le Li, Zhilei Liu, Baoyuan Wu, Yanbo Fan, Zhifeng Li

Most of the existing methods train models for one-versus-one kin relation, which only consider one parent face and one child face by directly using an auto-encoder without any explicit control over the resemblance of the synthesized face to the parent face.

Facial Expression Restoration Based on Improved Graph Convolutional Networks

no code implementations • 23 Oct 2019 • Zhilei Liu, Le Li, Yunpeng Wu, Cuicui Zhang

Facial expression analysis in the wild is challenging when the facial image has low resolution or partial occlusion.

Relation Modeling with Graph Convolutional Networks for Facial Action Unit Detection

no code implementations • 23 Oct 2019 • Zhilei Liu, Jiahui Dong, Cuicui Zhang, Longbiao Wang, Jianwu Dang

Most existing AU detection works that consider AU relationships rely on probabilistic graphical models with manually extracted features.

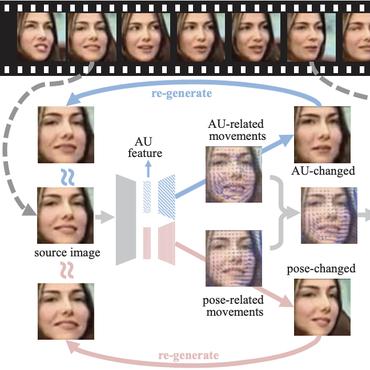

Region Based Adversarial Synthesis of Facial Action Units

no code implementations • 23 Oct 2019 • Zhilei Liu, Diyi Liu, Yunpeng Wu

Facial expression synthesis or editing has recently received increasing attention in the field of affective computing and facial expression modeling.

Facial Action Unit Detection Using Attention and Relation Learning

no code implementations • 10 Aug 2018 • Zhiwen Shao, Zhilei Liu, Jianfei Cai, Yunsheng Wu, Lizhuang Ma

By finding the region of interest of each AU with the attention mechanism, AU-related local features can be captured.

Speech Emotion Recognition Considering Local Dynamic Features

no code implementations • 21 Mar 2018 • Haotian Guan, Zhilei Liu, Longbiao Wang, Jianwu Dang, Ruiguo Yu

Recently, increasing attention has been directed to the study of speech emotion recognition, in which global acoustic features of an utterance are mostly used to eliminate content differences.

Deep Adaptive Attention for Joint Facial Action Unit Detection and Face Alignment

1 code implementation • ECCV 2018 • Zhiwen Shao, Zhilei Liu, Jianfei Cai, Lizhuang Ma

Facial action unit (AU) detection and face alignment are two highly correlated tasks since facial landmarks can provide precise AU locations to facilitate the extraction of meaningful local features for AU detection.

Ranked #5 on Facial Action Unit Detection on DISFA

Conditional Adversarial Synthesis of 3D Facial Action Units

no code implementations • 21 Feb 2018 • Zhilei Liu, Guoxian Song, Jianfei Cai, Tat-Jen Cham, Juyong Zhang

Employing deep learning-based approaches for fine-grained facial expression analysis, such as those involving the estimation of Action Unit (AU) intensities, is difficult due to the lack of a large-scale dataset of real faces with sufficiently diverse AU labels for training.

Updating the silent speech challenge benchmark with deep learning

no code implementations • 20 Sep 2017 • Yan Ji, Licheng Liu, Hongcui Wang, Zhilei Liu, Zhibin Niu, Bruce Denby

The 2010 Silent Speech Challenge benchmark is updated with new results obtained using a deep learning strategy, with the same input features and decoding strategy as in the original article.