Depth Estimation

803 papers with code • 14 benchmarks • 70 datasets

Depth Estimation is the task of estimating, for each pixel, the distance from the camera to the scene point it depicts. Depth is estimated from either monocular (single-image) or stereo (multiple views of a scene) input. Traditional methods use multi-view geometry to relate the images to one another. Newer methods estimate depth directly by minimizing a regression loss, or by learning to synthesize a novel view from an image sequence. The most popular benchmarks are KITTI and NYUv2. Models are typically evaluated using a root mean square (RMS) error metric.
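As a rough illustration of two ideas in this description, the sketch below converts a rectified stereo disparity map to depth using the standard relation Z = f · B / d, then scores a prediction against ground truth with an RMS error. The function names, the tiny 2x2 maps, and the camera parameters are hypothetical and chosen only for readability; the convention of skipping pixels without valid ground truth is an assumption, and each benchmark defines its own evaluation protocol.

```python
import math


def disparity_to_depth(disparity_px, focal_px, baseline_m):
    """Rectified stereo geometry: depth Z = f * B / d.

    Pixels with non-positive disparity have no valid depth and become None.
    """
    return [
        [focal_px * baseline_m / d if d > 0 else None for d in row]
        for row in disparity_px
    ]


def rmse(pred, target):
    """Root mean square error over pixels that are valid in both maps.

    Assumption: a ground-truth value of 0 (or None) marks a missing measurement.
    """
    squared_errors = [
        (p - t) ** 2
        for pred_row, target_row in zip(pred, target)
        for p, t in zip(pred_row, target_row)
        if p is not None and t is not None and t > 0
    ]
    if not squared_errors:
        raise ValueError("no valid pixels to evaluate")
    return math.sqrt(sum(squared_errors) / len(squared_errors))


# Hypothetical 2x2 disparity map (pixels) and camera parameters.
disparity = [[64.0, 32.0], [0.0, 16.0]]
pred = disparity_to_depth(disparity, focal_px=640.0, baseline_m=0.5)

# Hypothetical ground-truth depth in metres; 0.0 marks a missing value.
ground_truth = [[5.0, 12.0], [8.0, 0.0]]
error = rmse(pred, ground_truth)
print(pred, round(error, 4))
```

Only two pixels contribute to the error here, because one prediction has no valid disparity and one ground-truth value is missing. Real depth maps are normally handled as arrays rather than nested lists; plain lists are used only to keep the sketch dependency-free.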

Libraries

Use these libraries to find Depth Estimation models and implementations

Subtasks

Latest papers

NeSLAM: Neural Implicit Mapping and Self-Supervised Feature Tracking With Depth Completion and Denoising

Second, the occupancy scene representation is replaced with Signed Distance Field (SDF) hierarchical scene representation for high-quality reconstruction and view synthesis.

UniDepth: Universal Monocular Metric Depth Estimation

However, the remarkable accuracy of recent MMDE methods is confined to their training domains.

ECoDepth: Effective Conditioning of Diffusion Models for Monocular Depth Estimation

We argue that the embedding vector from a ViT model, pre-trained on a large dataset, captures greater relevant information for SIDE than the usual route of generating pseudo image captions, followed by CLIP based text embeddings.

ModaLink: Unifying Modalities for Efficient Image-to-PointCloud Place Recognition

Experimental results on the KITTI dataset show that our proposed methods achieve state-of-the-art performance while running in real time.

DN-Splatter: Depth and Normal Priors for Gaussian Splatting and Meshing

3D Gaussian splatting, a novel differentiable rendering technique, has achieved state-of-the-art novel view synthesis results with high rendering speeds and relatively low training times.

Physical 3D Adversarial Attacks against Monocular Depth Estimation in Autonomous Driving

Deep learning-based monocular depth estimation (MDE), extensively applied in autonomous driving, is known to be vulnerable to adversarial attacks.

Metric3D v2: A Versatile Monocular Geometric Foundation Model for Zero-shot Metric Depth and Surface Normal Estimation

For metric depth estimation, we show that the key to a zero-shot single-view model lies in resolving the metric ambiguity from various camera models and large-scale data training.

When Do We Not Need Larger Vision Models?

Our results show that a multi-scale smaller model has comparable learning capacity to a larger model, and pre-training smaller models with S$^2$ can match or even exceed the advantage of larger models.

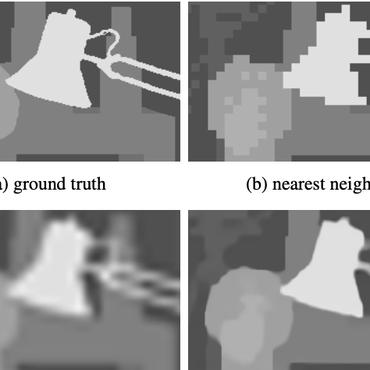

FeatUp: A Model-Agnostic Framework for Features at Any Resolution

Deep features are a cornerstone of computer vision research, capturing image semantics and enabling the community to solve downstream tasks even in the zero- or few-shot regime.

Robust Shape Fitting for 3D Scene Abstraction

A RANSAC estimator guided by a neural network fits these primitives to a depth map.

Datasets

Cityscapes

KITTI

ScanNet

NYUv2

Matterport3D

Middlebury

TUM RGB-D

SUNCG

Taskonomy

2D-3D-S