Facial Action Unit Detection

17 papers with code • 2 benchmarks • 4 datasets

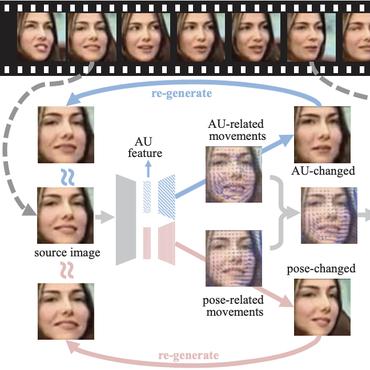

Facial action unit detection is the task of detecting facial action units, such as lip tightening and cheek raising, from a video of a face.

(Image credit: Self-supervised Representation Learning from Videos for Facial Action Unit Detection)
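As a rough illustration of what the task output looks like, the sketch below treats detection as a per-frame, multi-label decision: each action unit (AU) gets a score, and an AU counts as active when its score reaches a threshold. The scores are made-up placeholders standing in for a model's output, and the function names and the 0.5 threshold are assumptions for illustration, not taken from any of the papers listed here. The AU numbers follow the FACS convention (AU6 is the cheek raiser, AU23 the lip tightener).

```python
# Hypothetical sketch: AU detection viewed as per-frame multi-label thresholding.
# The scores below are invented placeholders, not the output of a real model.

AU_NAMES = {6: "cheek raiser", 23: "lip tightener"}  # FACS numbering


def detect_action_units(frame_scores, threshold=0.5):
    """Return the sorted AU numbers whose score reaches the threshold."""
    return sorted(au for au, score in frame_scores.items() if score >= threshold)


def detect_video(video_scores, threshold=0.5):
    """Apply the per-frame decision to every frame of a video."""
    return [detect_action_units(scores, threshold) for scores in video_scores]


# One dict of {AU number: score} per frame (assumed model output).
video_scores = [
    {6: 0.91, 23: 0.12},
    {6: 0.40, 23: 0.77},
    {6: 0.05, 23: 0.08},
]

for index, active in enumerate(detect_video(video_scores)):
    labels = [f"AU{au} ({AU_NAMES[au]})" for au in active]
    print(index, labels)
```

Several AUs can be active in the same frame, which is why each AU is thresholded independently rather than picking a single best class.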

Libraries

Use these libraries to find Facial Action Unit Detection models and implementations.

Latest papers

GPT as Psychologist? Preliminary Evaluations for GPT-4V on Visual Affective Computing

In conclusion, this paper provides valuable insights into the potential applications and challenges of MLLMs in human-centric computing.

AUFormer: Vision Transformers are Parameter-Efficient Facial Action Unit Detectors

Facial Action Units (AUs) are a vital concept in the realm of affective computing, and AU detection has always been a hot research topic.

Learning Contrastive Feature Representations for Facial Action Unit Detection

To address the challenge posed by noisy AU labels, we augment the supervised signal through the introduction of a self-supervised signal.

FG-Net: Facial Action Unit Detection with Generalizable Pyramidal Features

The proposed FG-Net achieves a strong generalization ability for heatmap-based AU detection thanks to the generalizable and semantic-rich features extracted from the pre-trained generative model.

Multi-scale Promoted Self-adjusting Correlation Learning for Facial Action Unit Detection

Anatomically, there are innumerable correlations between AUs, which contain rich information and are vital for AU detection.

Learning Multi-dimensional Edge Feature-based AU Relation Graph for Facial Action Unit Recognition

While the relationship between a pair of AUs can be complex and unique, existing approaches fail to specifically and explicitly represent such cues for each pair of AUs in each facial display.

Pre-training strategies and datasets for facial representation learning

Recent work on Deep Learning in the area of face analysis has focused on supervised learning for specific tasks of interest (e.g., face recognition, facial landmark localization, etc.).

Automated Detection of Equine Facial Action Units

To automate parts of this process, we propose a Deep Learning-based method to detect EquiFACS units automatically from images.

J$\hat{\text{A}}$A-Net: Joint Facial Action Unit Detection and Face Alignment via Adaptive Attention

Moreover, to extract precise local features, we propose an adaptive attention learning module to refine the attention map of each AU adaptively.

Multitask Emotion Recognition with Incomplete Labels

We use the soft labels and the ground truth to train the student model.

DISFA

BP4D

CANDOR Corpus

Thermal Face Database
