Search Results for author: Hideitsu Hino

Found 24 papers, 6 papers with code

Duality induced by an embedding structure of determinantal point process

no code implementations • 17 Apr 2024 • Hideitsu Hino, Keisuke Yano

This paper investigates the information geometrical structure of a determinantal point process (DPP).

A Short Survey on Importance Weighting for Machine Learning

no code implementations • 15 Mar 2024 • Masanari Kimura, Hideitsu Hino

Importance weighting is a fundamental procedure in statistics and machine learning that weights the objective function or probability distribution based on the importance of the instance in some sense.
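
As a minimal illustration of the general idea, the sketch below weights each sample by w(x) = p(x)/q(x). It is not code from the survey. The goal is to estimate an expectation under a target distribution p using samples drawn from a different distribution q. The discrete distributions, the function `f`, and the helper name `importance_weighted_mean` are hypothetical choices made for the example.

```python
import random


def importance_weighted_mean(samples, f, p, q):
    """Estimate E_p[f(X)] using samples drawn from q.

    p and q map each outcome to its probability. q must be positive
    wherever p is positive, otherwise the weight p(x) / q(x) is undefined.
    """
    total = 0.0
    for x in samples:
        w = p[x] / q[x]  # importance weight: how much more likely x is under p than under q
        total += w * f(x)
    return total / len(samples)


# Hypothetical distributions over the outcomes 0, 1, 2.
q = {0: 0.5, 1: 0.3, 2: 0.2}  # distribution the samples come from (e.g. training)
p = {0: 0.2, 1: 0.3, 2: 0.5}  # distribution of interest (e.g. test)


def f(x):
    return float(x)


exact = sum(p[x] * f(x) for x in p)  # E_p[X] = 0.3 + 1.0 = 1.3
random.seed(0)
samples = random.choices(list(q), weights=list(q.values()), k=100_000)
print(exact, importance_weighted_mean(samples, f, p, q))
```

In covariate-shift settings the same weights multiply per-sample losses instead of f(x). In practice the density ratio p/q is usually unknown and must itself be estimated.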

Scalable Counterfactual Distribution Estimation in Multivariate Causal Models

no code implementations • 2 Nov 2023 • Thong Pham, Shohei Shimizu, Hideitsu Hino, Tam Le

We consider the problem of estimating the counterfactual joint distribution of multiple quantities of interest (e.g., outcomes) in a multivariate causal model that extends the classical difference-in-differences design.

Information Geometrically Generalized Covariate Shift Adaptation

1 code implementation • 19 Apr 2023 • Masanari Kimura, Hideitsu Hino

In particular, the phenomenon in which the marginal distribution of the input data changes is called covariate shift and is one of the most important research topics in machine learning.

Active Learning by Query by Committee with Robust Divergences

no code implementations • 18 Nov 2022 • Hideitsu Hino, Shinto Eguchi

In this paper, the measure of disagreement is defined by the Bregman divergence, which includes the Kullback-Leibler divergence as a special case, and by the dual $\gamma$-power divergence.
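
The claim that the Kullback-Leibler divergence is an instance of the Bregman divergence can be checked numerically. The following is a minimal sketch and does not reproduce the paper's query-by-committee procedure or the dual $\gamma$-power divergence. It uses the standard definition $D_\phi(x, y) = \phi(x) - \phi(y) - \langle \nabla\phi(y), x - y \rangle$ with the negative-entropy generator $\phi(v) = \sum_i v_i \log v_i$. The helper names are hypothetical.

```python
import math


def bregman(phi, grad_phi, x, y):
    """Bregman divergence D_phi(x, y) = phi(x) - phi(y) - <grad phi(y), x - y>."""
    inner = sum(g * (xi - yi) for g, xi, yi in zip(grad_phi(y), x, y))
    return phi(x) - phi(y) - inner


def neg_entropy(v):
    # phi(v) = sum_i v_i log v_i, convex on the positive orthant
    return sum(vi * math.log(vi) for vi in v)


def grad_neg_entropy(v):
    # d/dv_i (v_i log v_i) = log v_i + 1
    return [math.log(vi) + 1.0 for vi in v]


def kl(p, q):
    """Kullback-Leibler divergence KL(p || q) for strictly positive vectors."""
    return sum(pi * math.log(pi / qi) for pi, qi in zip(p, q))


p = [0.2, 0.3, 0.5]
q = [0.4, 0.4, 0.2]
print(bregman(neg_entropy, grad_neg_entropy, p, q), kl(p, q))
```

Expanding the definition gives $\sum_i p_i \log(p_i/q_i) - \sum_i p_i + \sum_i q_i$. The last two sums cancel when p and q are both normalized, so the two printed values agree.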

Unsupervised Domain Adaptation for Extra Features in the Target Domain Using Optimal Transport

no code implementations • 10 Sep 2022 • Toshimitsu Aritake, Hideitsu Hino

In this paper, it is assumed that common features exist in both domains and that extra (additional) features are observed only in the target domain; hence, the dimensionality of the target domain is higher than that of the source domain.

Gaussian Process Koopman Mode Decomposition

no code implementations • 9 Sep 2022 • Takahiro Kawashima, Hideitsu Hino

In this paper, we propose a nonlinear probabilistic generative model of Koopman mode decomposition based on an unsupervised Gaussian process.

Geometry of EM and related iterative algorithms

no code implementations • 3 Sep 2022 • Hideitsu Hino, Shotaro Akaho, Noboru Murata

The Expectation-Maximization (EM) algorithm is a simple meta-algorithm that has been used for many years as a methodology for statistical inference when there are missing measurements in the observed data or when the data is composed of observables and unobservables.

Gradual Domain Adaptation via Normalizing Flows

1 code implementation • 23 Jun 2022 • Shogo Sagawa, Hideitsu Hino

In previous work, it is assumed that the number of intermediate domains is large and the distance between adjacent domains is small; hence, the gradual domain adaptation algorithm, involving self-training with unlabeled datasets, is applicable.

Information Geometry of Dropout Training

no code implementations • 22 Jun 2022 • Masanari Kimura, Hideitsu Hino

Dropout is one of the most popular regularization techniques in neural network training.

One-bit Submission for Locally Private Quasi-MLE: Its Asymptotic Normality and Limitation

no code implementations • 15 Feb 2022 • Hajime Ono, Kazuhiro Minami, Hideitsu Hino

Local differential privacy (LDP) is an information-theoretic privacy definition suitable for statistical surveys that involve an untrusted data curator.

Cost-effective Framework for Gradual Domain Adaptation with Multifidelity

1 code implementation • 9 Feb 2022 • Shogo Sagawa, Hideitsu Hino

In practice, the cost of samples varies across intermediate domains, and it is natural to assume that the closer an intermediate domain is to the target domain, the more costly its samples are to obtain.

Fast symplectic integrator for Nesterov-type acceleration method

1 code implementation • 1 Jun 2021 • Shin-itiro Goto, Hideitsu Hino

In this paper, explicit stable integrators based on symplectic and contact geometries are proposed for a non-autonomous ordinary differential equation (ODE) that arises in improving the convergence rate of Nesterov's accelerated gradient method.
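
The snippet does not state the ODE. A commonly studied continuous-time limit of Nesterov's method is $\ddot{x} + \frac{3}{t}\dot{x} + \nabla f(x) = 0$, and this sketch assumes that equation. It discretizes the equation with plain semi-implicit Euler, which is the basic explicit scheme that symplectic integrators build on. It is **not** the symplectic/contact integrator proposed in the paper, and the function name and step parameters are hypothetical.

```python
def integrate_nesterov_ode(grad_f, x0, t0=1.0, h=0.1, steps=1000):
    """Approximate x(t0 + steps * h) for x'' + (3 / t) x' + grad_f(x) = 0.

    Scalar version, started from rest (x'(t0) = 0). Each step uses the
    semi-implicit Euler pattern: update the velocity from the current
    position, then move the position with the *new* velocity.
    """
    x, v, t = float(x0), 0.0, float(t0)
    for _ in range(steps):
        v = v - h * ((3.0 / t) * v + grad_f(x))  # time-dependent friction 3/t plus force -grad f
        x = x + h * v                            # position update uses the updated velocity
        t = t + h
    return x


# Minimize f(x) = x**2 / 2, whose gradient is x; the minimizer is x = 0.
x_final = integrate_nesterov_ode(lambda x: x, x0=1.0)
print(x_final)
```

Starting at t0 = 1 rather than 0 avoids the singular friction coefficient 3/t at t = 0.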

Stopping Criterion for Active Learning Based on Error Stability

1 code implementation • 5 Apr 2021 • Hideaki Ishibashi, Hideitsu Hino

Active learning is a framework for supervised learning that improves predictive performance by adaptively selecting a small number of samples to annotate.

$α$-Geodesical Skew Divergence

1 code implementation • 31 Mar 2021 • Masanari Kimura, Hideitsu Hino

The asymmetric skew divergence smooths one of the distributions by mixing it, to a degree determined by the parameter $\lambda$, with the other distribution.
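
The mixing step can be written as $S_\lambda(p \,\|\, q) = \mathrm{KL}(p \,\|\, \lambda q + (1 - \lambda) p)$. This sketch assumes that convention, which places $\lambda$ on q; conventions differ in the literature. It covers only the ordinary (arithmetic-mixture) skew divergence. The paper's $\alpha$-geodesical generalization of the mixing step is not implemented. The helper names are hypothetical.

```python
import math


def kl(p, q):
    """KL(p || q) for discrete distributions; terms with p_i = 0 contribute 0."""
    total = 0.0
    for pi, qi in zip(p, q):
        if pi > 0:
            if qi == 0:
                return math.inf  # p puts mass where q has none
            total += pi * math.log(pi / qi)
    return total


def skew_divergence(p, q, lam):
    """KL(p || lam * q + (1 - lam) * p) with lam in [0, 1]."""
    mixed = [lam * qi + (1.0 - lam) * pi for pi, qi in zip(p, q)]
    return kl(p, mixed)


p = [0.5, 0.5, 0.0]
q = [1.0, 0.0, 0.0]
print(kl(p, q))                     # inf: q assigns zero mass to an outcome p can produce
print(skew_divergence(p, q, 0.99))  # finite, because the mixture keeps some of p's mass
```

With lam = 1 the mixture is q itself and the ordinary KL divergence is recovered. With lam = 0 the divergence is zero. Any lam < 1 keeps the value finite.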

Active Learning: Problem Settings and Recent Developments

no code implementations • 8 Dec 2020 • Hideitsu Hino

In supervised learning, acquiring labeled training data for a predictive model can be very costly, but acquiring a large amount of unlabeled data is often quite easy.

Modal Principal Component Analysis

no code implementations • 7 Aug 2020 • Keishi Sando, Hideitsu Hino

Thus, this study proposes a modal principal component analysis (MPCA), which is a robust PCA method based on mode estimation.

Stopping criterion for active learning based on deterministic generalization bounds

no code implementations • 15 May 2020 • Hideaki Ishibashi, Hideitsu Hino

Active learning is a framework in which the learning machine can select the samples to be used for training.

Fast and robust multiplane single molecule localization microscopy using deep neural network

no code implementations • 7 Jan 2020 • Toshimitsu Aritake, Hideitsu Hino, Shigeyuki Namiki, Daisuke Asanuma, Kenzo Hirose, Noboru Murata

Single molecule localization microscopy is widely used in biological research for measuring the nanostructures of samples smaller than the diffraction limit.

On a convergence property of a geometrical algorithm for statistical manifolds

no code implementations • 27 Sep 2019 • Shotaro Akaho, Hideitsu Hino, Noboru Murata

In this paper, we examine a geometrical projection algorithm for statistical inference.

Bayesian Optimization for Multi-objective Optimization and Multi-point Search

no code implementations • 7 May 2019 • Takashi Wada, Hideitsu Hino

Analytically maximizing the acquisition function is difficult because its computational cost is prohibitive even when approximations such as sampling are used; we therefore propose an accurate and computationally efficient method for estimating the gradient of the acquisition function and develop a Bayesian optimization algorithm for multi-objective optimization with multi-point search.

Classification of volcanic ash particles using a convolutional neural network and probability

no code implementations • 31 May 2018 • Daigo Shoji, Rina Noguchi, Shizuka Otsuki, Hideitsu Hino

Using the trained network, we classified ash particles composed of multiple basal shapes based on the output of the network, which can be interpreted as a mixing ratio of the four basal shapes.

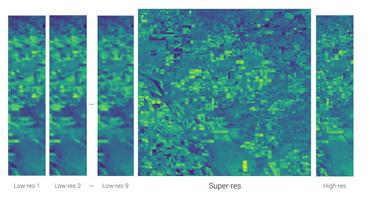

Double Sparse Multi-Frame Image Super Resolution

no code implementations • 2 Dec 2015 • Toshiyuki Kato, Hideitsu Hino, Noboru Murata

Many image super-resolution algorithms based on sparse coding have been proposed, and some of them achieve multi-frame super-resolution.

Sparse Coding Approach for Multi-Frame Image Super Resolution

no code implementations • 17 Feb 2014 • Toshiyuki Kato, Hideitsu Hino, Noboru Murata

Relative displacements of small patches of observed low-resolution images are accurately estimated by a computationally efficient block matching method.
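
Block matching can be illustrated with a minimal integer-pixel sketch. It compares a reference patch against every candidate position in a small search window of another frame and keeps the displacement with the smallest sum of absolute differences (SAD). This is the textbook exhaustive-search variant, assumed here for illustration. The snippet does not describe the paper's computationally efficient method, and the function name and parameters are hypothetical.

```python
def block_match(ref, target, top, left, size, radius):
    """Return the (dy, dx) that minimizes the SAD between the size x size
    block of `ref` at (top, left) and the block of `target` at
    (top + dy, left + dx), searching |dy|, |dx| <= radius.
    Images are lists of rows of numbers.
    """
    best = None
    h, w = len(target), len(target[0])
    for dy in range(-radius, radius + 1):
        for dx in range(-radius, radius + 1):
            r0, c0 = top + dy, left + dx
            if r0 < 0 or c0 < 0 or r0 + size > h or c0 + size > w:
                continue  # candidate block would fall outside the target image
            sad = 0
            for i in range(size):
                for j in range(size):
                    sad += abs(ref[top + i][left + j] - target[r0 + i][c0 + j])
            if best is None or sad < best[0]:
                best = (sad, dy, dx)
    return best[1], best[2]


# Hypothetical test frames: `target` is `ref` shifted 2 rows down and 1 column left.
ref = [[3 * i * i + 5 * j * j + i * j for j in range(12)] for i in range(12)]
target = [[ref[i - 2][j + 1] if i >= 2 and j + 1 < 12 else 0 for j in range(12)]
          for i in range(12)]
print(block_match(ref, target, top=4, left=4, size=4, radius=3))
```

Exhaustive search costs O(radius² · size²) per patch. Practical systems usually reduce this with coarse-to-fine search or faster candidate pruning, and they add sub-pixel refinement.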