Search Results for author: Tianyi Luo

Found 10 papers, 1 paper with code

To Aggregate or Not? Learning with Separate Noisy Labels

no code implementations • 14 Jun 2022 • Jiaheng Wei, Zhaowei Zhu, Tianyi Luo, Ehsan Amid, Abhishek Kumar, Yang Liu

The rawly collected training data often comes with separate noisy labels collected from multiple imperfect annotators (e.g., via crowdsourcing).

Interpretable Research Replication Prediction via Variational Contextual Consistency Sentence Masking

no code implementations • Findings (ACL) 2022 • Tianyi Luo, Rui Meng, Xin Eric Wang, Yang Liu

Research Replication Prediction (RRP) is the task of predicting whether a published research result can be replicated or not.

Compressed Predictive Information Coding

no code implementations • 3 Mar 2022 • Rui Meng, Tianyi Luo, Kristofer Bouchard

The key insight of our framework is to learn representations by minimizing the compression complexity and maximizing the predictive information in latent space.

The Rich Get Richer: Disparate Impact of Semi-Supervised Learning

1 code implementation • ICLR 2022 • Zhaowei Zhu, Tianyi Luo, Yang Liu

Semi-supervised learning (SSL) has demonstrated its potential to improve the model accuracy for a variety of learning tasks when the high-quality supervised data is severely limited.

Research Replication Prediction Using Weakly Supervised Learning

no code implementations • Findings of the Association for Computational Linguistics 2020 • Tianyi Luo, Xingyu Li, Hainan Wang, Yang Liu

In this paper, we propose two weakly supervised learning approaches that use automatically extracted text information of research papers to improve the prediction accuracy of research replication using both labeled and unlabeled datasets.

Machine Truth Serum

no code implementations • 28 Sep 2019 • Tianyi Luo, Yang Liu

In this paper, we extend the idea proposed in Bayesian Truth Serum that "a surprisingly more popular answer is more likely the true answer" to classification problems.

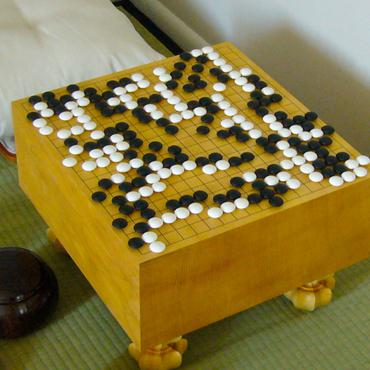

Can Machine Generate Traditional Chinese Poetry? A Feigenbaum Test

no code implementations • 19 Jun 2016 • Qixin Wang, Tianyi Luo, Dong Wang

Recent progress in neural learning demonstrated that machines can do well in regularized tasks, e.g., the game of Go.

Chinese Song Iambics Generation with Neural Attention-based Model

no code implementations • 21 Apr 2016 • Qixin Wang, Tianyi Luo, Dong Wang, Chao Xing

Learning and generating Chinese poems is a charming yet challenging task.

Stochastic Top-k ListNet

no code implementations • EMNLP 2015 • Tianyi Luo, Dong Wang, Rong Liu, Yiqiao Pan

ListNet is a well-known listwise learning to rank model and has gained much attention in recent years.

Learning from LDA using Deep Neural Networks

no code implementations • 5 Aug 2015 • Dongxu Zhang, Tianyi Luo, Dong Wang, Rong Liu

Latent Dirichlet Allocation (LDA) is a three-level hierarchical Bayesian model for topic inference.