PyMAF: 3D Human Pose and Shape Regression with Pyramidal Mesh Alignment Feedback Loop

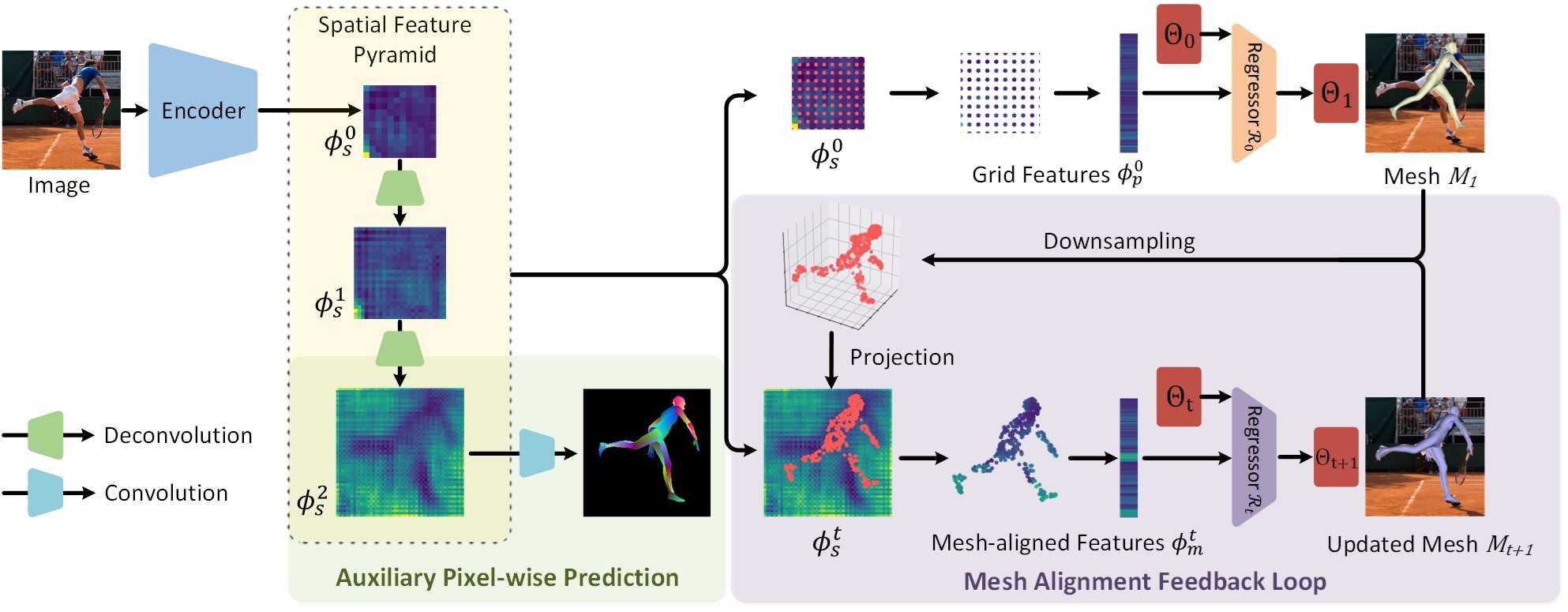

Regression-based methods have recently shown promising results in reconstructing human meshes from monocular images. By directly mapping raw pixels to model parameters, these methods can produce parametric models in a feed-forward manner via neural networks. However, minor deviations in the parameters can lead to noticeable misalignment between the estimated meshes and the image evidence. To address this issue, we propose a Pyramidal Mesh Alignment Feedback (PyMAF) loop that leverages a feature pyramid and explicitly rectifies the predicted parameters based on the mesh-image alignment status in our deep regressor. In PyMAF, given the currently predicted parameters, mesh-aligned evidence is extracted from finer-resolution features and fed back for parameter rectification. To reduce noise and enhance the reliability of this evidence, an auxiliary pixel-wise supervision is imposed on the feature encoder, which provides mesh-image correspondence guidance so that the network preserves the most relevant information in its spatial features. The efficacy of our approach is validated on several benchmarks, including Human3.6M, 3DPW, LSP, and COCO, where experimental results show that our approach consistently improves the mesh-image alignment of the reconstruction. The project page with code and video results can be found at https://hongwenzhang.github.io/pymaf.
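The feedback loop described in the abstract can be illustrated with a toy sketch. This is not the authors' implementation. It makes several assumptions: the "mesh" is a hypothetical four-point 2D template, the parameters are reduced to a weak-perspective scale and translation `[s, tx, ty]`, the feature pyramid is random single-channel grids, and each level's regressor is a random linear map. All function names are illustrative. Only the control flow follows the text: project the current estimate, sample mesh-aligned features from the next finer level, and regress a correction to the parameters.

```python
import random

def sample_bilinear(grid, x, y):
    """Bilinearly sample a 2D grid at normalized coordinates x, y in [-1, 1]."""
    h, w = len(grid), len(grid[0])
    # Map normalized coordinates to pixel coordinates and clamp to the grid.
    u = min(max((x + 1.0) / 2.0 * (w - 1), 0.0), w - 1.0)
    v = min(max((y + 1.0) / 2.0 * (h - 1), 0.0), h - 1.0)
    u0, v0 = int(u), int(v)
    u1, v1 = min(u0 + 1, w - 1), min(v0 + 1, h - 1)
    du, dv = u - u0, v - v0
    top = grid[v0][u0] * (1 - du) + grid[v0][u1] * du
    bottom = grid[v1][u0] * (1 - du) + grid[v1][u1] * du
    return top * (1 - dv) + bottom * dv

def project(template, params):
    """Toy stand-in for mesh projection: scale and translate template points."""
    s, tx, ty = params
    return [(s * px + tx, s * py + ty) for px, py in template]

def feedback_loop(pyramid, template, params, regressors):
    """Refine params once per pyramid level, from coarse to fine."""
    history = [list(params)]
    for grid, weights in zip(pyramid, regressors):
        # 1. Project the current estimate onto the image plane.
        points = project(template, params)
        # 2. Extract mesh-aligned features at the projected points.
        feats = [sample_bilinear(grid, x, y) for x, y in points]
        # 3. Feed the features and current params to this level's regressor.
        inputs = feats + list(params)
        delta = [sum(w * v for w, v in zip(row, inputs)) for row in weights]
        # 4. Rectify the parameters with the predicted residual.
        params = [p + d for p, d in zip(params, delta)]
        history.append(list(params))
    return params, history

rng = random.Random(0)
# Hypothetical template: the corners of a square.
template = [(-0.5, -0.5), (0.5, -0.5), (0.5, 0.5), (-0.5, 0.5)]
# Random single-channel feature maps at resolutions 4, 8, and 16.
pyramid = [[[rng.random() for _ in range(r)] for _ in range(r)] for r in (4, 8, 16)]
n_in = len(template) + 3  # sampled features plus the three parameters
# One random (untrained) linear regressor per pyramid level.
regressors = [
    [[rng.uniform(-0.05, 0.05) for _ in range(n_in)] for _ in range(3)]
    for _ in pyramid
]
final_params, history = feedback_loop(pyramid, template, [1.0, 0.0, 0.0], regressors)
```

Because the regressors here are untrained, the corrections are arbitrary. In a trained system the residuals would be learned so that each pass moves the projection closer to the image evidence.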

ICCV 2021

Results from the Paper

Ranked #5 on 3D Human Pose Estimation on AGORA (using extra training data)


Datasets: MS COCO, Human3.6M, 3DPW, DensePose, LSP, AGORA, EMDB