Distract Your Attention: Multi-head Cross Attention Network for Facial Expression Recognition

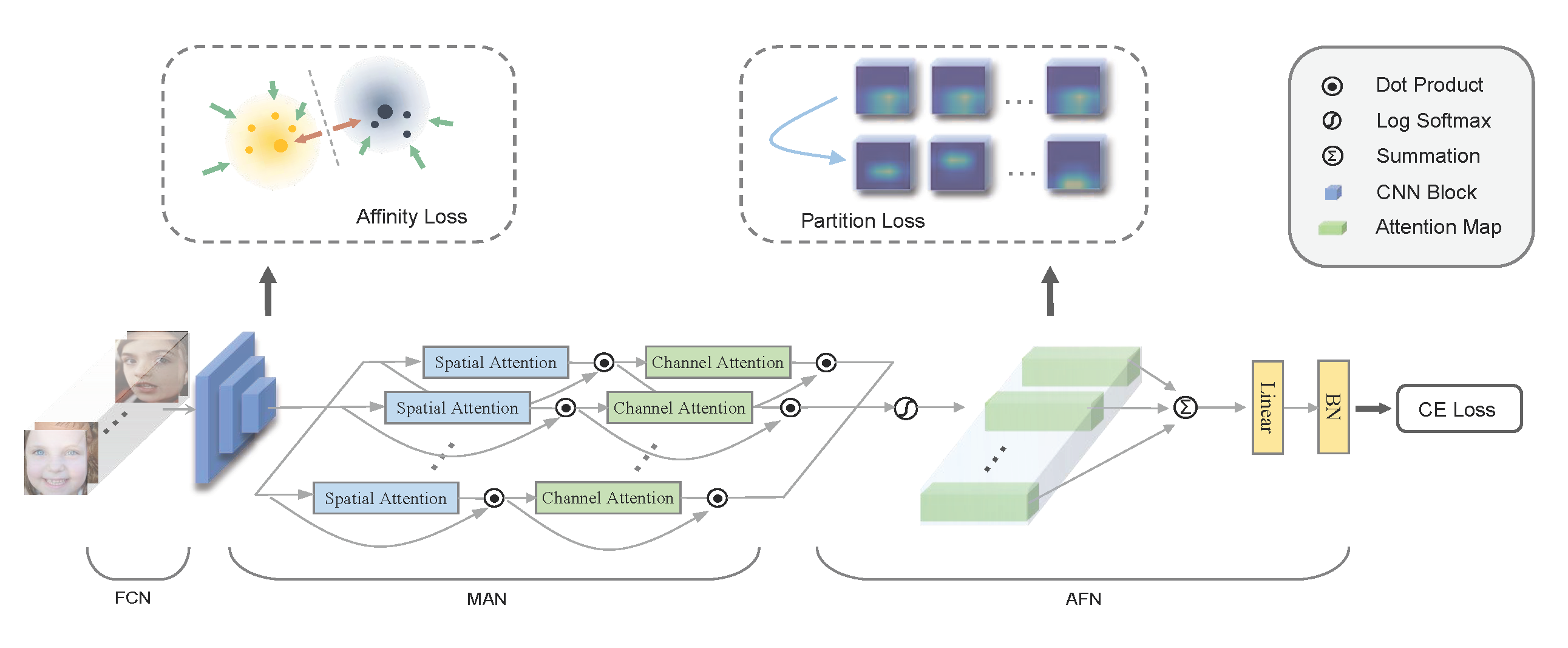

We present a novel facial expression recognition network, called Distract your Attention Network (DAN). Our method is based on two key observations. Firstly, multiple classes share inherently similar underlying facial appearance, and their differences could be subtle. Secondly, facial expressions exhibit themselves through multiple facial regions simultaneously, and the recognition requires a holistic approach by encoding high-order interactions among local features. To address these issues, we propose our DAN with three key components: Feature Clustering Network (FCN), Multi-head cross Attention Network (MAN), and Attention Fusion Network (AFN). The FCN extracts robust features by adopting a large-margin learning objective to maximize class separability. In addition, the MAN instantiates a number of attention heads to simultaneously attend to multiple facial areas and build attention maps on these regions. Further, the AFN distracts these attentions to multiple locations before fusing the attention maps to a comprehensive one. Extensive experiments on three public datasets (including AffectNet, RAF-DB, and SFEW 2.0) verified that the proposed method consistently achieves state-of-the-art facial expression recognition performance. Code will be made available at https://github.com/yaoing/DAN.

PDF Abstract

AffectNet

AffectNet

RAF-DB

RAF-DB

SFEW

SFEW