Florence: A New Foundation Model for Computer Vision

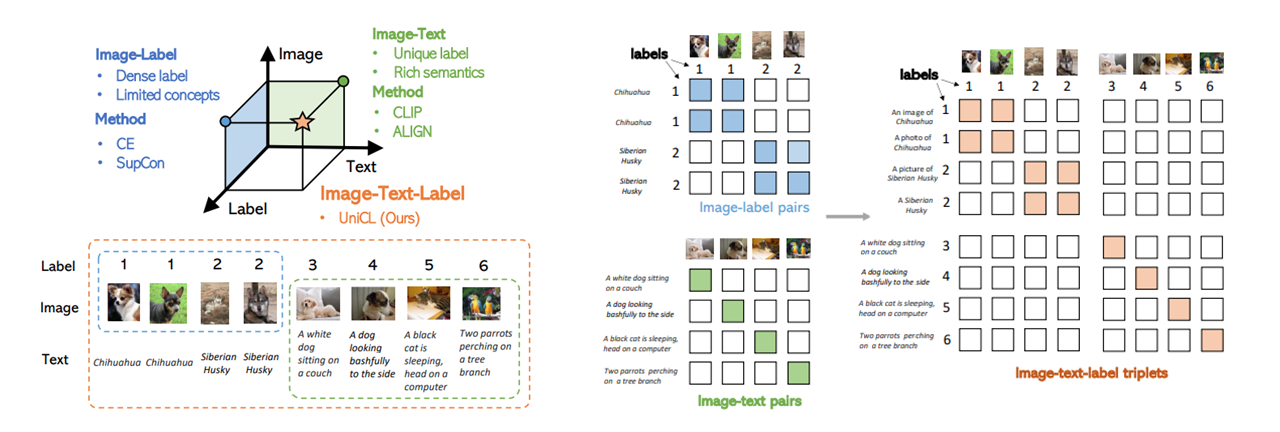

Automated visual understanding of our diverse and open world requires computer vision models that generalize well with minimal customization for specific tasks, similar to human vision. Computer vision foundation models, which are trained on diverse, large-scale datasets and can be adapted to a wide range of downstream tasks, are critical for this mission to solve real-world computer vision applications. While existing vision foundation models such as CLIP, ALIGN, and Wu Dao 2.0 focus mainly on mapping image and textual representations to a cross-modal shared representation, we introduce a new computer vision foundation model, Florence, to expand the representations from coarse (scene) to fine (object), from static (images) to dynamic (videos), and from RGB to multiple modalities (caption, depth). By incorporating universal visual-language representations from Web-scale image-text data, our Florence model can be easily adapted for various computer vision tasks, such as classification, retrieval, object detection, VQA, image captioning, video retrieval, and action recognition. Moreover, Florence demonstrates outstanding performance in many types of transfer learning: fully sampled fine-tuning, linear probing, few-shot transfer, and zero-shot transfer for novel images and objects. All of these properties are critical for our vision foundation model to serve general-purpose vision tasks. Florence achieves new state-of-the-art results in the majority of 44 representative benchmarks, e.g., ImageNet-1K zero-shot classification with a top-1 accuracy of 83.74 and a top-5 accuracy of 97.18, 62.4 mAP on COCO fine-tuning, 80.36 on VQA, and 87.8 on Kinetics-600.
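To make the zero-shot classification metrics quoted above concrete, the following is a minimal sketch of how top-1 and top-5 style accuracy can be computed once images and class-name prompts live in a shared embedding space. It is not Florence's code: the embeddings are hand-made toy vectors, and the function names (`cosine_scores`, `top_k_classes`, `top_k_accuracy`) are hypothetical helpers introduced only for illustration.

```python
import math


def l2_normalize(v):
    """Scale a vector to unit length so dot products become cosine similarities."""
    norm = math.sqrt(sum(x * x for x in v))
    return [x / norm for x in v]


def cosine_scores(image_emb, class_text_embs):
    """Cosine similarity between one image embedding and each class text embedding."""
    img = l2_normalize(image_emb)
    return [
        sum(a * b for a, b in zip(img, l2_normalize(text_emb)))
        for text_emb in class_text_embs
    ]


def top_k_classes(scores, k):
    """Indices of the k highest-scoring classes, best first."""
    return sorted(range(len(scores)), key=lambda i: scores[i], reverse=True)[:k]


def top_k_accuracy(image_embs, labels, class_text_embs, k):
    """Percentage of images whose true label is among the k best-matching classes."""
    hits = 0
    for emb, label in zip(image_embs, labels):
        if label in top_k_classes(cosine_scores(emb, class_text_embs), k):
            hits += 1
    return 100.0 * hits / len(labels)


# Toy data (assumption): three classes and three images in a 2-D shared space.
class_text_embs = [[1.0, 0.0], [0.0, 1.0], [-1.0, 0.0]]
image_embs = [[0.9, 0.1], [0.2, 0.8], [0.6, 0.5]]
labels = [0, 1, 1]

top1 = top_k_accuracy(image_embs, labels, class_text_embs, k=1)
top2 = top_k_accuracy(image_embs, labels, class_text_embs, k=2)
print(f"top-1: {top1:.2f}  top-2: {top2:.2f}")
```

In this toy setup the third image sits closer to class 0 than to its true class 1, so it counts as a miss at k=1 but as a hit at k=2. The same effect is why a top-5 figure is always at least as high as the corresponding top-1 figure.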

Tasks

Action Classification

Action Recognition

Action Recognition In Videos

Cross-Modal Retrieval

Image Classification

object-detection

Object Detection

Retrieval

Transfer Learning

Video Retrieval

Visual Question Answering (VQA)

Zero-Shot Cross-Modal Retrieval

Zero-Shot Learning

Zero-Shot Transfer Image Classification

Zero-Shot Video Retrieval

Datasets

ImageNet

MS COCO

Kinetics

Visual Genome

Flickr30k

Kinetics 400

AudioSet

MSR-VTT

EuroSAT

Visual Question Answering v2.0

HowTo100M

WebVid

Kinetics-600