From Multi-View to Hollow-3D: Hallucinated Hollow-3D R-CNN for 3D Object Detection

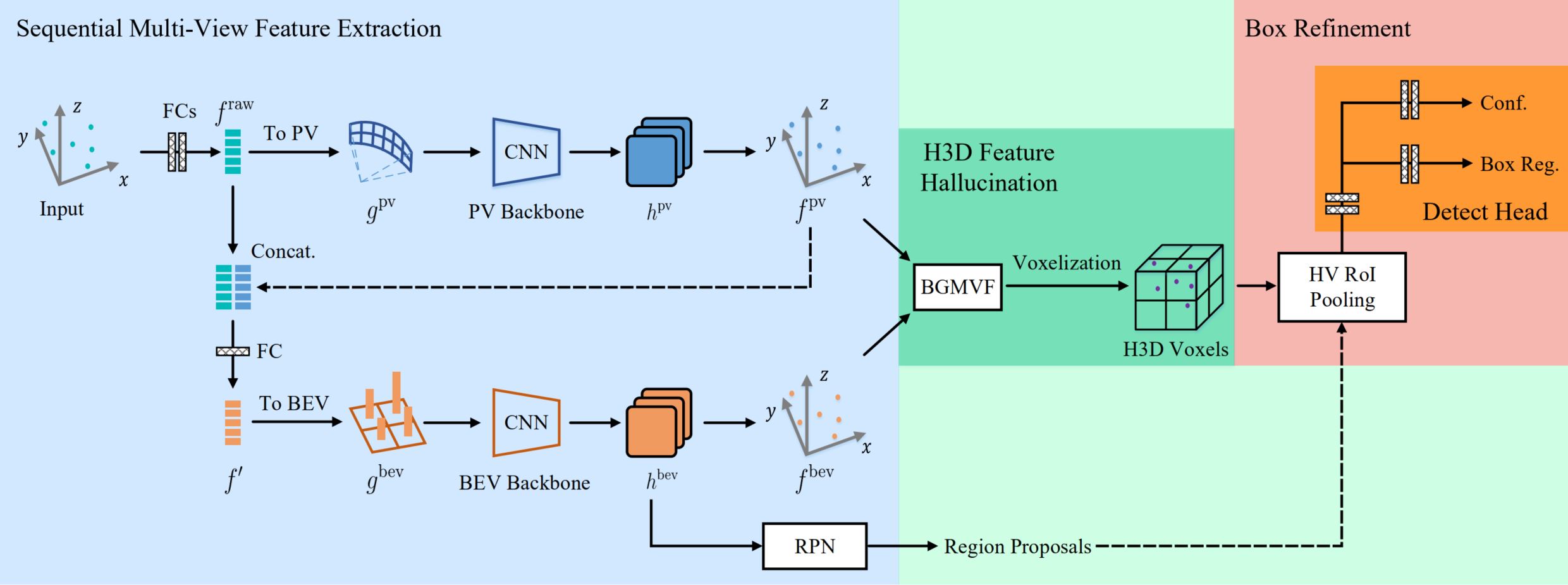

As an emerging data modal with precise distance sensing, LiDAR point clouds have been placed great expectations on 3D scene understanding. However, point clouds are always sparsely distributed in the 3D space, and with unstructured storage, which makes it difficult to represent them for effective 3D object detection. To this end, in this work, we regard point clouds as hollow-3D data and propose a new architecture, namely Hallucinated Hollow-3D R-CNN ($\text{H}^2$3D R-CNN), to address the problem of 3D object detection. In our approach, we first extract the multi-view features by sequentially projecting the point clouds into the perspective view and the bird-eye view. Then, we hallucinate the 3D representation by a novel bilaterally guided multi-view fusion block. Finally, the 3D objects are detected via a box refinement module with a novel Hierarchical Voxel RoI Pooling operation. The proposed $\text{H}^2$3D R-CNN provides a new angle to take full advantage of complementary information in the perspective view and the bird-eye view with an efficient framework. We evaluate our approach on the public KITTI Dataset and Waymo Open Dataset. Extensive experiments demonstrate the superiority of our method over the state-of-the-art algorithms with respect to both effectiveness and efficiency. The code will be made available at \url{https://github.com/djiajunustc/H-23D_R-CNN}.

PDF Abstract

Waymo Open Dataset

Waymo Open Dataset