Holistic 3D Scene Parsing and Reconstruction from a Single RGB Image

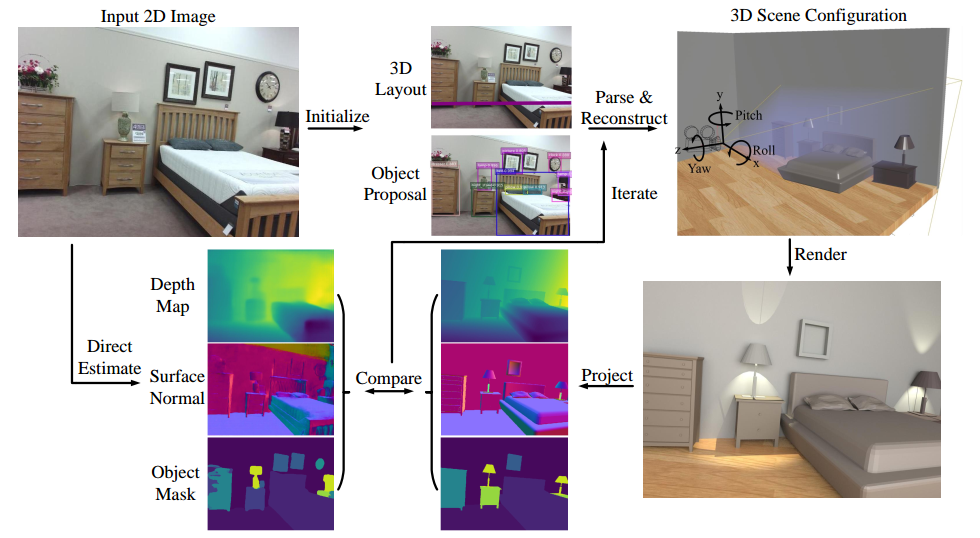

We propose a computational framework to jointly parse a single RGB image and reconstruct a holistic 3D configuration composed of a set of CAD models using a stochastic grammar model. Specifically, we introduce a Holistic Scene Grammar (HSG) to represent the 3D scene structure, which characterizes a joint distribution over the functional and geometric space of indoor scenes. The proposed HSG captures three essential and often latent dimensions of indoor scenes: i) latent human context, describing the affordance and functionality of a room arrangement, ii) geometric constraints over the scene configurations, and iii) physical constraints that guarantee physically plausible parsing and reconstruction. We solve this joint parsing and reconstruction problem in an analysis-by-synthesis fashion, seeking to minimize the differences between the input image and the rendered images generated by our 3D representation, over the space of depth, surface normal, and object segmentation maps. The optimal configuration, represented by a parse graph, is inferred using Markov chain Monte Carlo (MCMC), which efficiently traverses the non-differentiable solution space, jointly optimizing object localization, 3D layout, and hidden human context. Experimental results demonstrate that the proposed algorithm improves generalization ability and significantly outperforms prior methods on 3D layout estimation, 3D object detection, and holistic scene understanding.
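The analysis-by-synthesis loop described in the abstract can be illustrated with a deliberately small toy. The sketch below is not the paper's method: it assumes a hypothetical 1D "renderer" (`render_depth`) that draws a single box in front of a wall, compares only depth (no surface normals or segmentation), and uses a plain Metropolis sampler with a symmetric random-walk proposal. All function names, the temperature, and the step count are illustrative assumptions.

```python
import math
import random

def render_depth(box, width=32, wall_depth=5.0):
    """Toy renderer (assumption): 1D depth map of a wall with one box in front."""
    start, size, depth = box
    return [depth if start <= x < start + size else wall_depth for x in range(width)]

def energy(box, observed):
    """Sum of absolute depth differences between rendering and observation."""
    rendered = render_depth(box, width=len(observed))
    return sum(abs(r - o) for r, o in zip(rendered, observed))

def is_valid(box, width):
    """A box must lie inside the image and have positive size and depth."""
    start, size, depth = box
    return start >= 0 and size >= 1 and start + size <= width and depth > 0.0

def propose(box, rng):
    """Symmetric random walk: shift the box, resize it, or perturb its depth."""
    start, size, depth = box
    move = rng.choice(("start", "size", "depth"))
    if move == "start":
        start += rng.choice((-1, 1))
    elif move == "size":
        size += rng.choice((-1, 1))
    else:
        depth += rng.gauss(0.0, 0.2)
    return (start, size, depth)

def mcmc_fit(observed, init, steps=5000, temperature=0.5, seed=0):
    """Metropolis sampling from p(box) proportional to exp(-energy / temperature)."""
    rng = random.Random(seed)
    current, e_cur = init, energy(init, observed)
    best, e_best = current, e_cur
    for _ in range(steps):
        cand = propose(current, rng)
        if not is_valid(cand, len(observed)):
            continue  # invalid configurations have zero probability: reject
        e_cand = energy(cand, observed)
        # Always accept downhill moves; accept uphill moves with Boltzmann probability.
        if e_cand <= e_cur or rng.random() < math.exp((e_cur - e_cand) / temperature):
            current, e_cur = cand, e_cand
            if e_cur < e_best:
                best, e_best = current, e_cur
    return best, e_best

# Synthetic "input image": a box at x=10, 6 pixels wide, at depth 2.0.
observed = render_depth((10, 6, 2.0))
init = (0, 3, 4.0)
best, e_best = mcmc_fit(observed, init)
print("best configuration:", best, "energy:", e_best)
```

The rendering step is piecewise constant in the box position and size, so the energy has no useful gradient with respect to those variables. That is the property that makes a sampling-based search a natural fit here, and it mirrors, in miniature, the non-differentiable solution space the abstract mentions.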

ECCV 2018

Results from the Paper

Ranked #4 on Monocular 3D Object Detection on SUN RGB-D (AP@0.15 (10 / PNet-30) metric)

Datasets

SUN RGB-D

3D Chairs

Watch-n-Patch