Learning and Reasoning with the Graph Structure Representation in Robotic Surgery

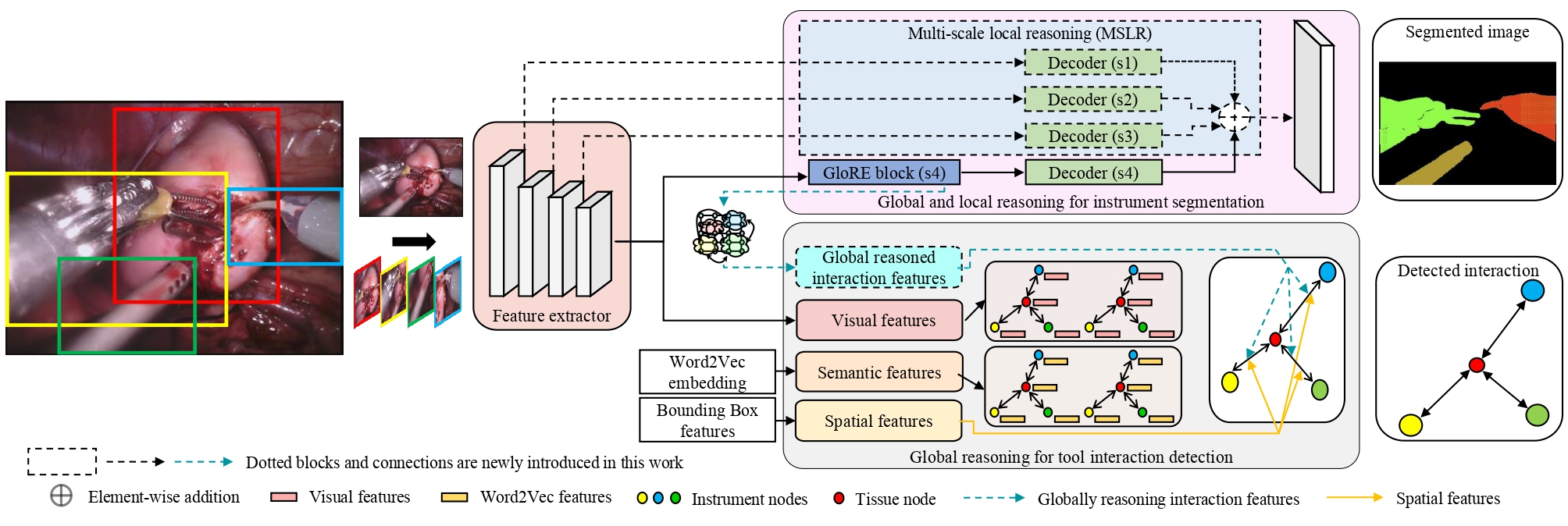

Learning to infer graph representations and performing spatial reasoning in a complex surgical environment can play a vital role in surgical scene understanding in robotic surgery. For this purpose, we develop an approach to generate the scene graph and predict surgical interactions between instruments and surgical region of interest (ROI) during robot-assisted surgery. We design an attention link function and integrate with a graph parsing network to recognize the surgical interactions. To embed each node with corresponding neighbouring node features, we further incorporate SageConv into the network. The scene graph generation and active edge classification mostly depend on the embedding or feature extraction of node and edge features from complex image representation. Here, we empirically demonstrate the feature extraction methods by employing label smoothing weighted loss. Smoothing the hard label can avoid the over-confident prediction of the model and enhances the feature representation learned by the penultimate layer. To obtain the graph scene label, we annotate the bounding box and the instrument-ROI interactions on the robotic scene segmentation challenge 2018 dataset with an experienced clinical expert in robotic surgery and employ it to evaluate our propositions.

PDF Abstract

ssd

ssd