Manifold Alignment for Semantically Aligned Style Transfer

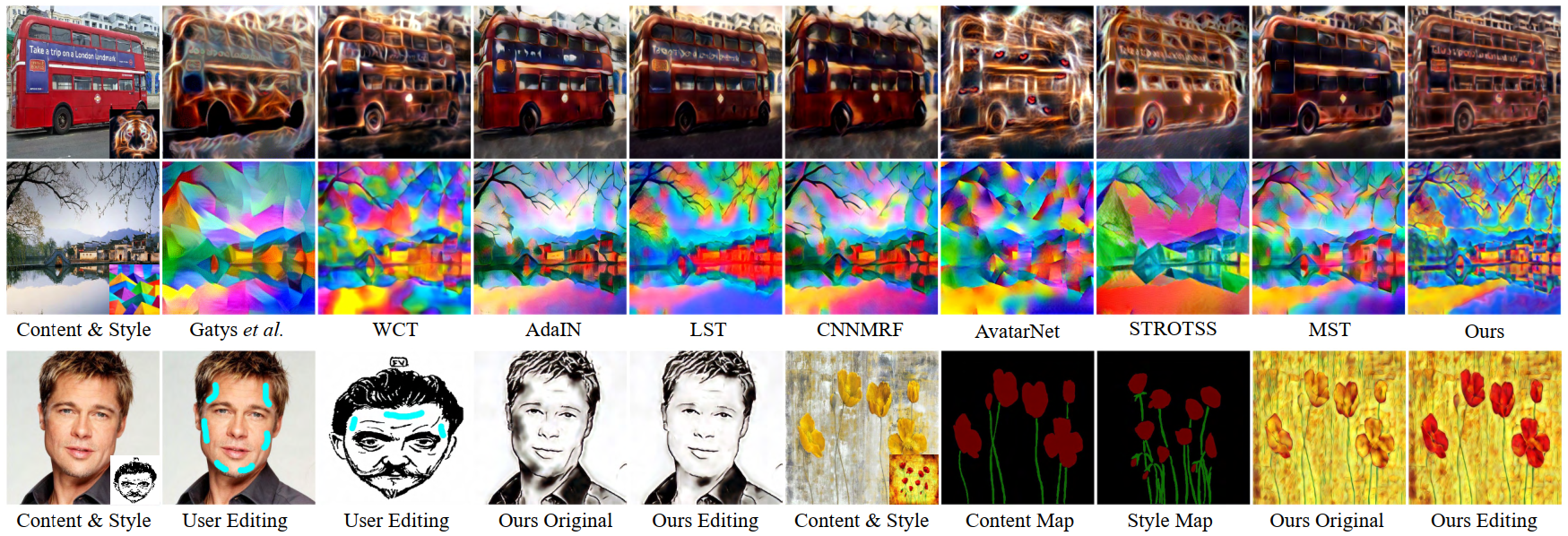

Most existing style transfer methods follow the assumption that styles can be represented with global statistics (e.g., Gram matrices or covariance matrices), and thus address the problem by forcing the output and style images to have similar global statistics. An alternative is the assumption of local style patterns, where algorithms are designed to swap similar local features of content and style images. However, the limitation of these existing methods is that they neglect the semantic structure of the content image which may lead to corrupted content structure in the output. In this paper, we make a new assumption that image features from the same semantic region form a manifold and an image with multiple semantic regions follows a multi-manifold distribution. Based on this assumption, the style transfer problem is formulated as aligning two multi-manifold distributions and a Manifold Alignment based Style Transfer (MAST) framework is proposed. The proposed framework allows semantically similar regions between the output and the style image share similar style patterns. Moreover, the proposed manifold alignment method is flexible to allow user editing or using semantic segmentation maps as guidance for style transfer. To allow the method to be applicable to photorealistic style transfer, we propose a new adaptive weight skip connection network structure to preserve the content details. Extensive experiments verify the effectiveness of the proposed framework for both artistic and photorealistic style transfer. Code is available at https://github.com/NJUHuoJing/MAST.

PDF Abstract ICCV 2021 PDF ICCV 2021 Abstract

MS COCO

MS COCO