Depth Estimation

799 papers with code • 14 benchmarks • 70 datasets

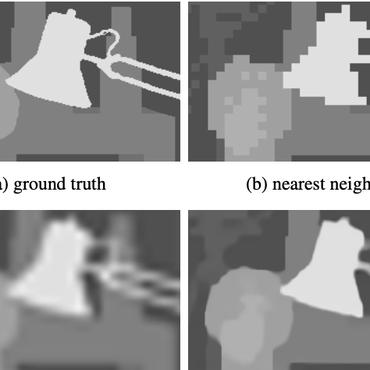

Depth Estimation is the task of measuring the distance of each pixel relative to the camera. Depth is extracted from either monocular (single-view) or stereo (multiple views of a scene) images. Traditional methods use multi-view geometry to find the relationship between the images. Newer methods estimate depth directly by minimizing a regression loss, or by learning to generate a novel view from a sequence. The most popular benchmarks are KITTI and NYUv2. Models are typically evaluated with a root-mean-square (RMS) error metric.
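As a rough illustration of the RMS metric mentioned above, the sketch below computes the root-mean-square error between a predicted and a ground-truth depth map. The function name `depth_rmse`, the `min_depth` threshold, and the convention of skipping pixels whose ground truth is at or below that threshold are assumptions made for this example, not part of any benchmark's official evaluation code. Plain Python lists are used to keep the example dependency-free; an array library would normally vectorize the loop.

```python
import math


def depth_rmse(pred, target, min_depth=1e-3):
    """Root-mean-square error over pixels that have valid ground truth.

    pred and target are 2D lists (rows of per-pixel depths, same shape).
    Pixels whose ground-truth depth is <= min_depth are treated as missing
    and skipped (an assumption made for this sketch).
    """
    squared_error_sum = 0.0
    valid_count = 0
    for pred_row, target_row in zip(pred, target):
        for p, t in zip(pred_row, target_row):
            if t > min_depth:
                squared_error_sum += (p - t) ** 2
                valid_count += 1
    if valid_count == 0:
        raise ValueError("no valid ground-truth pixels")
    return math.sqrt(squared_error_sum / valid_count)


# Toy 2x2 depth maps in meters; the 0.0 marks a pixel without ground truth.
pred = [[2.0, 4.0], [6.0, 9.0]]
target = [[1.0, 4.0], [0.0, 7.0]]
print(depth_rmse(pred, target))
```

Masking out pixels without ground truth matters in practice because some datasets provide only sparse depth measurements, so averaging over every pixel would distort the error.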

Libraries

Use these libraries to find Depth Estimation models and implementations

Subtasks

Latest papers

Metric3D v2: A Versatile Monocular Geometric Foundation Model for Zero-shot Metric Depth and Surface Normal Estimation

Our method benefits various applications including in-the-wild metrology, monocular-SLAM, and 3D reconstruction, which highlight the versatility of Metric3D v2 models as geometric foundation models.

Virtually Enriched NYU Depth V2 Dataset for Monocular Depth Estimation: Do We Need Artificial Augmentation?

We present ANYU, a new virtually augmented version of the NYU depth v2 dataset, designed for monocular depth estimation.

On the Robustness of Language Guidance for Low-Level Vision Tasks: Findings from Depth Estimation

Recent advances in monocular depth estimation have been made by incorporating natural language as additional guidance.

Boosting Self-Supervision for Single-View Scene Completion via Knowledge Distillation

In this work, we propose to fuse the scene reconstruction from multiple images and distill this knowledge into a more accurate single-view scene reconstruction.

RoadBEV: Road Surface Reconstruction in Bird's Eye View

This paper uniformly proposes two simple yet effective models for road elevation reconstruction in BEV named RoadBEV-mono and RoadBEV-stereo, which estimate road elevation with monocular and stereo images, respectively.

Know Your Neighbors: Improving Single-View Reconstruction via Spatial Vision-Language Reasoning

We propose KYN, a novel method for single-view scene reconstruction that reasons about semantic and spatial context to predict each point's density.

WorDepth: Variational Language Prior for Monocular Depth Estimation

To test this, we focus on monocular depth estimation, the problem of predicting a dense depth map from a single image, but with an additional text caption describing the scene.

MonoCD: Monocular 3D Object Detection with Complementary Depths

Monocular 3D object detection has attracted widespread attention due to its potential to accurately obtain object 3D localization from a single image at a low cost.

VSRD: Instance-Aware Volumetric Silhouette Rendering for Weakly Supervised 3D Object Detection

In the auto-labeling stage, we represent the surface of each instance as a signed distance field (SDF) and render its silhouette as an instance mask through our proposed instance-aware volumetric silhouette rendering.

NeSLAM: Neural Implicit Mapping and Self-Supervised Feature Tracking With Depth Completion and Denoising

Second, the occupancy scene representation is replaced with Signed Distance Field (SDF) hierarchical scene representation for high-quality reconstruction and view synthesis.

Cityscapes

KITTI

ScanNet

NYUv2

Matterport3D

Middlebury

TUM RGB-D

SUNCG

Taskonomy

2D-3D-S
