ST-MoE: Designing Stable and Transferable Sparse Expert Models

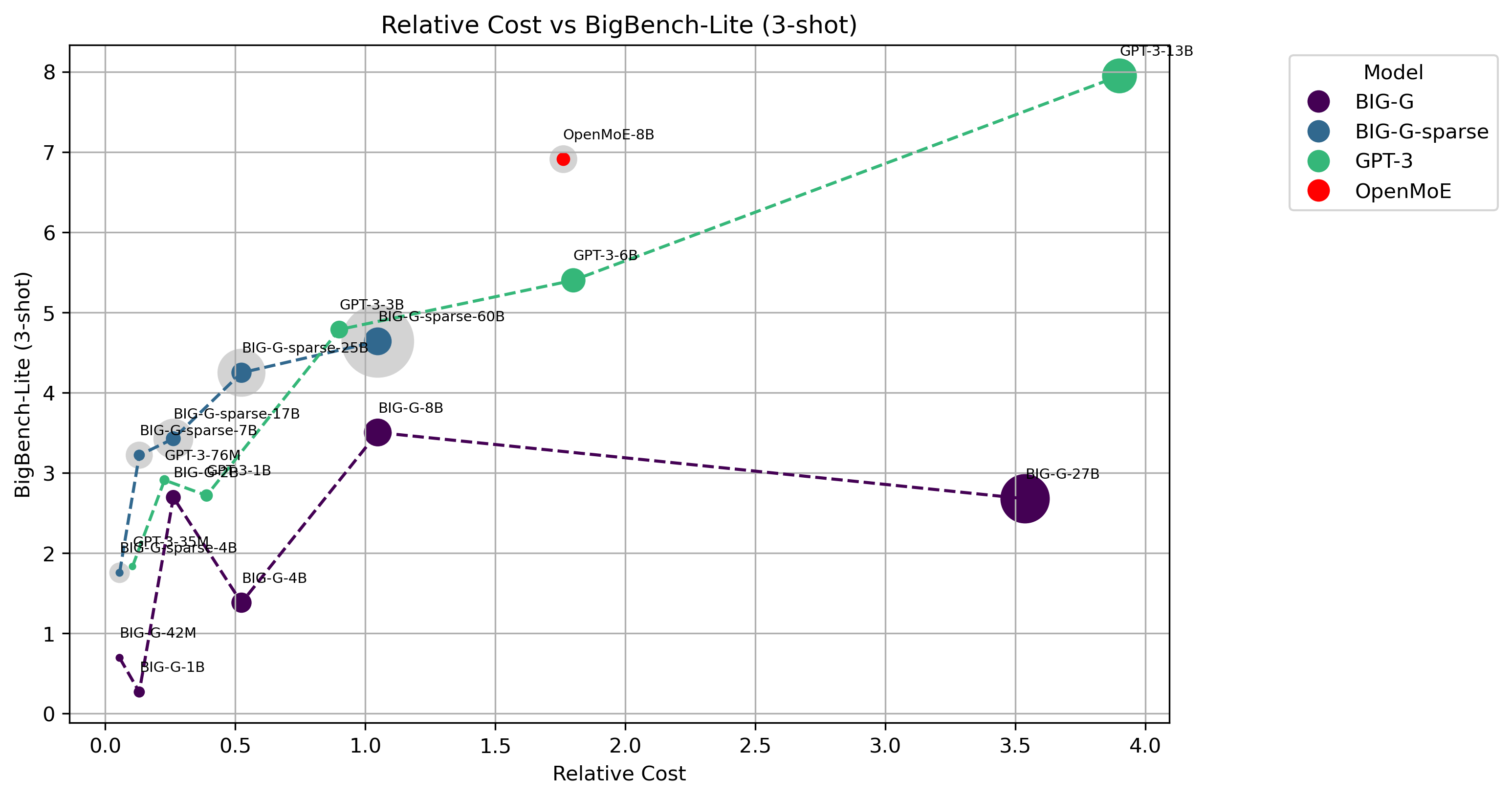

Scale has opened new frontiers in natural language processing -- but at a high cost. In response, Mixture-of-Experts (MoE) and Switch Transformers have been proposed as an energy efficient path to even larger and more capable language models. But advancing the state-of-the-art across a broad set of natural language tasks has been hindered by training instabilities and uncertain quality during fine-tuning. Our work focuses on these issues and acts as a design guide. We conclude by scaling a sparse model to 269B parameters, with a computational cost comparable to a 32B dense encoder-decoder Transformer (Stable and Transferable Mixture-of-Experts or ST-MoE-32B). For the first time, a sparse model achieves state-of-the-art performance in transfer learning, across a diverse set of tasks including reasoning (SuperGLUE, ARC Easy, ARC Challenge), summarization (XSum, CNN-DM), closed book question answering (WebQA, Natural Questions), and adversarially constructed tasks (Winogrande, ANLI R3).

PDF Abstract

GLUE

GLUE

SQuAD

SQuAD

Natural Questions

Natural Questions

TriviaQA

TriviaQA

C4

C4

BoolQ

BoolQ

SuperGLUE

SuperGLUE

WinoGrande

WinoGrande

WSC

WSC

COPA

COPA

ANLI

ANLI

MultiRC

MultiRC

ReCoRD

ReCoRD

ARC (AI2 Reasoning Challenge)

ARC (AI2 Reasoning Challenge)