Neural Prompt Search

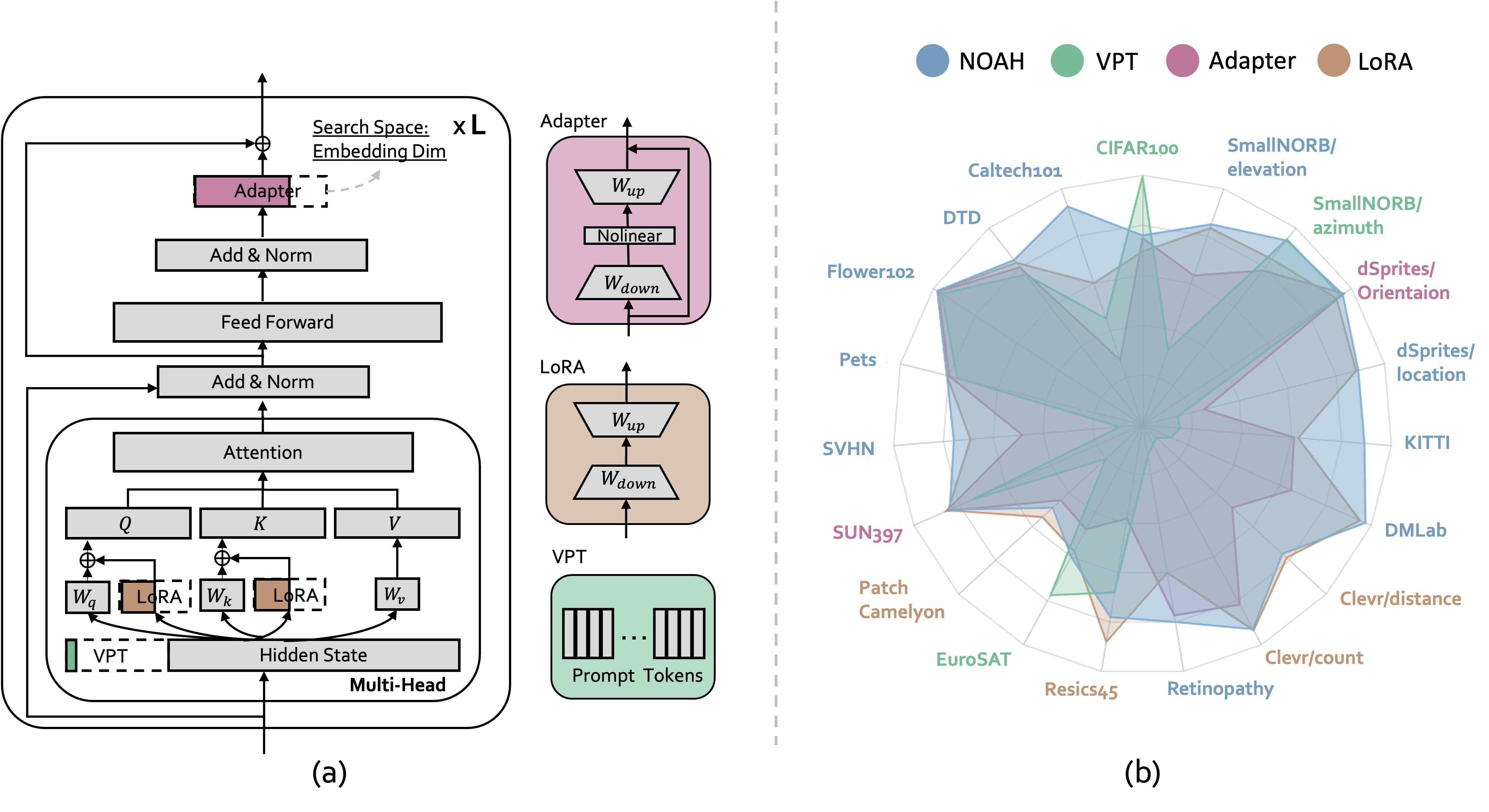

The size of vision models has grown exponentially over the last few years, especially since the emergence of the Vision Transformer. This has motivated the development of parameter-efficient tuning methods, such as learning adapter layers or visual prompt tokens, which train only a tiny portion of the model parameters while the vast majority, obtained from pre-training, stay frozen. However, designing a proper tuning method is non-trivial: one might need to try out a lengthy list of design choices, and each downstream dataset often requires a custom design. In this paper, we view the existing parameter-efficient tuning methods as "prompt modules" and propose Neural prOmpt seArcH (NOAH), a novel approach that uses a neural architecture search algorithm to learn, for large vision models, the optimal design of prompt modules specifically for each downstream dataset. Through extensive experiments on over 20 vision datasets, we demonstrate that NOAH (i) is superior to individual prompt modules, (ii) has good few-shot learning ability, and (iii) is domain-generalizable. The code and models are available at https://github.com/Davidzhangyuanhan/NOAH.
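To make the idea of "searching over prompt modules" concrete, here is a minimal sketch under stated assumptions, not NOAH's implementation. It assumes a ViT-B/16-sized backbone (hidden size 768, 12 blocks, roughly 86M parameters), assumes three module types (adapter, LoRA, and VPT tokens; LoRA is included here as an assumption, since the abstract names only adapters and prompt tokens), and uses a hypothetical per-module search space. In place of NOAH's neural architecture search, it runs a plain exhaustive grid search under a trainable-parameter budget. `PromptConfig`, `SEARCH_SPACE`, `trainable_params`, `search`, and `score_fn` are all hypothetical names; in practice `score_fn` would be something like validation accuracy on the downstream dataset.

```python
import itertools
from dataclasses import dataclass
from typing import Callable, Optional, Tuple

# Assumed ViT-B/16-like backbone sizes (illustrative, not taken from the paper).
EMBED_DIM = 768
NUM_LAYERS = 12
BACKBONE_PARAMS = 86_000_000  # approximate; all of these stay frozen


@dataclass(frozen=True)
class PromptConfig:
    """Hypothetical per-block choice of prompt modules; 0 disables a module."""
    adapter_dim: int  # bottleneck width of an adapter layer
    lora_rank: int    # rank of LoRA updates on the query and value projections
    vpt_tokens: int   # number of visual prompt tokens prepended per block


# Hypothetical search space; NOAH's actual choices may differ.
SEARCH_SPACE = {
    "adapter_dim": [0, 8, 16],
    "lora_rank": [0, 4, 8],
    "vpt_tokens": [0, 10, 50],
}


def trainable_params(cfg: PromptConfig, d: int = EMBED_DIM, layers: int = NUM_LAYERS) -> int:
    """Count parameters added by cfg across all blocks (biases ignored)."""
    adapter = 2 * d * cfg.adapter_dim    # down-projection d->r plus up-projection r->d
    lora = 2 * (2 * d * cfg.lora_rank)   # A (r x d) and B (d x r), for both q and v
    vpt = cfg.vpt_tokens * d             # one d-dimensional embedding per prompt token
    return layers * (adapter + lora + vpt)


def search(
    score_fn: Callable[[PromptConfig], float], budget: int
) -> Tuple[Optional[PromptConfig], float]:
    """Exhaustive grid search (a stand-in for NAS) for the best config within budget."""
    keys = list(SEARCH_SPACE)
    best: Optional[PromptConfig] = None
    best_score = float("-inf")
    for values in itertools.product(*(SEARCH_SPACE[k] for k in keys)):
        cfg = PromptConfig(**dict(zip(keys, values)))
        if trainable_params(cfg) > budget:
            continue  # skip configurations that train too many parameters
        score = score_fn(cfg)
        if score > best_score:
            best, best_score = cfg, score
    return best, best_score


if __name__ == "__main__":
    # Toy score: prefer larger configurations; a real run would train and evaluate each.
    cfg, score = search(trainable_params, budget=500_000)
    print(cfg, f"{trainable_params(cfg) / BACKBONE_PARAMS:.3%} of backbone")
```

Even the largest configuration in this toy space adds about 1.05M parameters, roughly 1.2% of the assumed backbone, which illustrates why such methods count as parameter-efficient. Grid search is used only because the toy space has 27 points. A realistic per-block search space grows combinatorially, which is what motivates a NAS-style approach.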

Results from the Paper

Ranked #1 on Image Classification on OmniBenchmark (using extra training data)

Datasets

ImageNet, CIFAR-100, SVHN, DTD, Food-101, Caltech-101, EuroSAT, ImageNet-A, RESISC45, smallNORB, OmniBenchmark