SepTr: Separable Transformer for Audio Spectrogram Processing

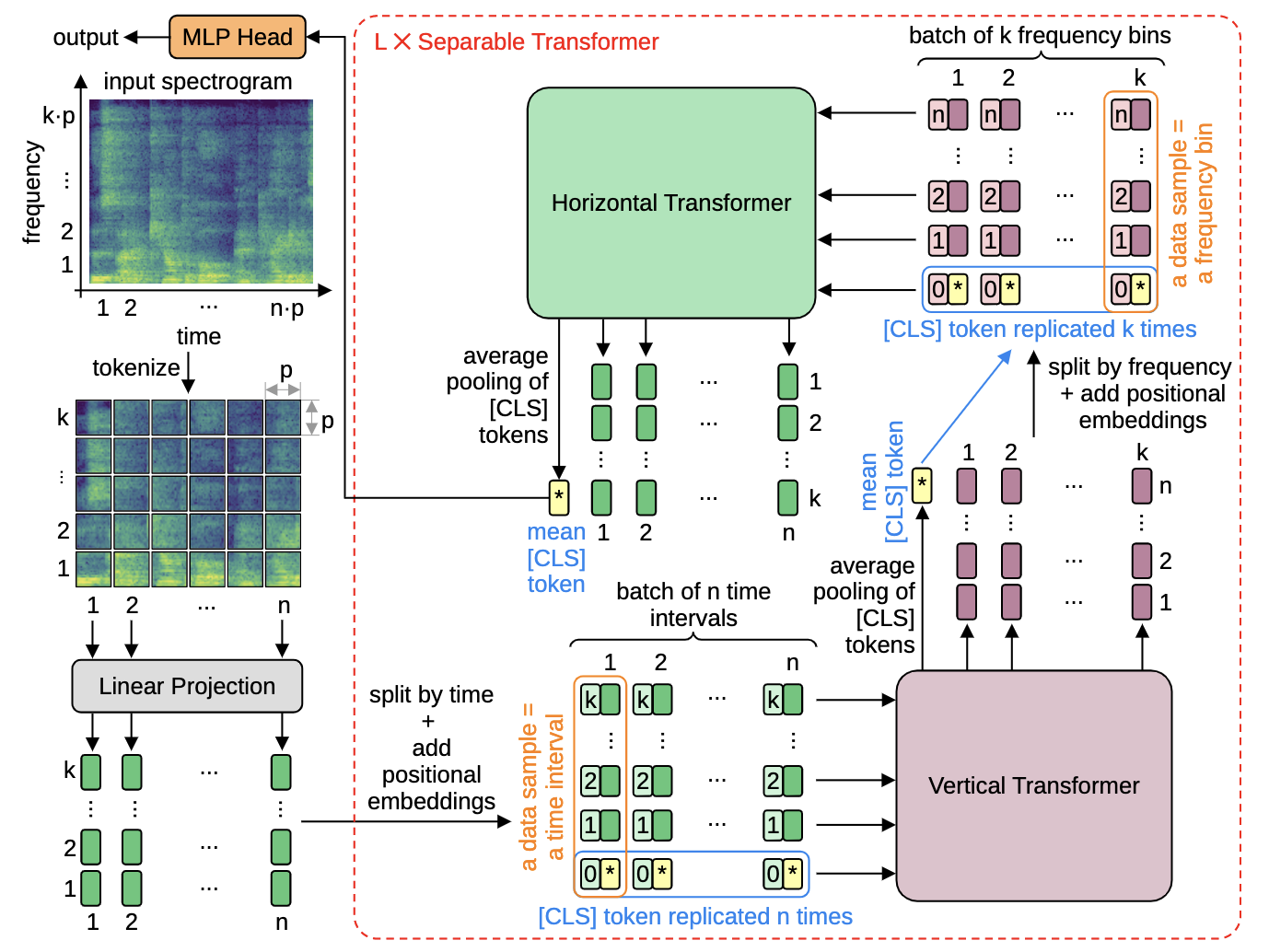

Following the successful application of vision transformers in multiple computer vision tasks, these models have drawn the attention of the signal processing community. This is because signals are often represented as spectrograms (e.g. through Discrete Fourier Transform) which can be directly provided as input to vision transformers. However, naively applying transformers to spectrograms is suboptimal. Since the axes represent distinct dimensions, i.e. frequency and time, we argue that a better approach is to separate the attention dedicated to each axis. To this end, we propose the Separable Transformer (SepTr), an architecture that employs two transformer blocks in a sequential manner, the first attending to tokens within the same time interval, and the second attending to tokens within the same frequency bin. We conduct experiments on three benchmark data sets, showing that our separable architecture outperforms conventional vision transformers and other state-of-the-art methods. Unlike standard transformers, SepTr linearly scales the number of trainable parameters with the input size, thus having a lower memory footprint. Our code is available as open source at https://github.com/ristea/septr.

PDF Abstract

Speech Commands

Speech Commands

ESC-50

ESC-50