Stacked Hybrid-Attention and Group Collaborative Learning for Unbiased Scene Graph Generation

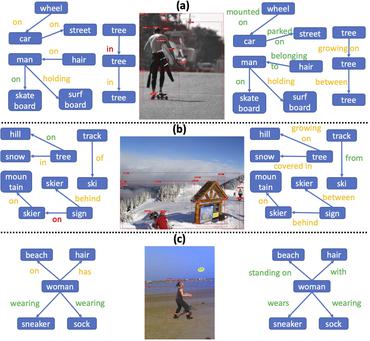

Scene Graph Generation (SGG), which generally follows a regular encoder-decoder pipeline, aims to first encode the visual contents of a given image and then parse them into a compact summary graph. Existing SGG approaches generally neglect the insufficient modality fusion between vision and language, and they also fail to provide informative predicates because of biased relationship predictions, which leaves SGG far from practical. To this end, we first present a novel Stacked Hybrid-Attention network, which facilitates both intra-modal refinement and inter-modal interaction, to serve as the encoder. We then devise a Group Collaborative Learning strategy to optimize the decoder. In particular, based on the observation that the recognition capability of a single classifier is limited on an extremely unbalanced dataset, we first deploy a group of classifiers, each expert in distinguishing a different subset of classes, and then cooperatively optimize them from two aspects to promote unbiased SGG. Experiments conducted on the Visual Genome (VG) and GQA datasets demonstrate that we not only establish a new state of the art on the unbiased metric, but also nearly double the performance compared with two baselines.
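The idea of a group of classifiers, each responsible for a different subset of classes, can be illustrated with a small sketch. The abstract does not say how the subsets are formed, so everything below is an assumption made for illustration: the frequency-ratio rule, the `max_ratio` threshold, the cumulative label spaces, the function names, and the toy predicate counts are all hypothetical and should not be read as the authors' implementation.

```python
def group_classes_by_frequency(counts, max_ratio=4.0):
    """Split classes into groups of comparable frequency.

    Classes are sorted from most to least frequent. A new group starts
    whenever the first (most frequent) class of the current group has more
    than `max_ratio` times the samples of the next class.
    Assumption: this rule is illustrative, not taken from the abstract.
    """
    ordered = sorted(counts, key=counts.get, reverse=True)
    groups, current = [], []
    for name in ordered:
        if current and counts[current[0]] > max_ratio * counts[name]:
            groups.append(current)
            current = []
        current.append(name)
    if current:
        groups.append(current)
    return groups


def classifier_label_spaces(groups):
    """Give classifier k the union of the first k+1 groups (an assumption)."""
    spaces, seen = [], []
    for group in groups:
        seen = seen + group
        spaces.append(list(seen))
    return spaces


# Toy, made-up predicate counts that mimic a long-tailed distribution.
toy_counts = {
    "on": 1000, "has": 600, "near": 300,
    "holding": 90, "riding": 40, "parked on": 8,
}
groups = group_classes_by_frequency(toy_counts)
spaces = classifier_label_spaces(groups)

for index, space in enumerate(spaces):
    print(f"classifier {index}: {space}")
```

Under these assumptions, each classifier is trained on a label space whose classes are relatively balanced against each other, which is one way to read the abstract's remark that a single classifier struggles on an extremely unbalanced dataset.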

CVPR 2022

Results from the Paper

Ranked #1 on Unbiased Scene Graph Generation on Visual Genome (mR@20 metric)
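For readers unfamiliar with the metric, mean recall at K (mR@K) averages recall over predicate classes, so rare predicates count as much as frequent ones. The sketch below is a simplified, single-image illustration: it assumes exact triplet matching instead of box-overlap matching, and the function names and toy triplets are hypothetical rather than taken from any benchmark toolkit.

```python
def recall_at_k(gt_triplets, ranked_predictions, k):
    """Fraction of ground-truth triplets found in the top-k predictions."""
    if not gt_triplets:
        return 0.0
    top_k = set(ranked_predictions[:k])
    return sum(1 for t in gt_triplets if t in top_k) / len(gt_triplets)


def mean_recall_at_k(gt_triplets, ranked_predictions, k):
    """Average of per-predicate recalls over the top-k predictions.

    Triplets are (subject, predicate, object) tuples.
    Simplification: exact tuple matching on a single image.
    """
    top_k = set(ranked_predictions[:k])
    hits, totals = {}, {}
    for triplet in gt_triplets:
        predicate = triplet[1]
        totals[predicate] = totals.get(predicate, 0) + 1
        if triplet in top_k:
            hits[predicate] = hits.get(predicate, 0) + 1
    recalls = [hits.get(p, 0) / n for p, n in totals.items()]
    return sum(recalls) / len(recalls) if recalls else 0.0


# Toy ground truth: three frequent "on" relations and one rare "riding".
gt = [
    ("cup", "on", "table"),
    ("book", "on", "table"),
    ("lamp", "on", "desk"),
    ("man", "riding", "horse"),
]
# A biased model that predicts "on" everywhere, sorted by confidence.
preds = [
    ("cup", "on", "table"),
    ("book", "on", "table"),
    ("lamp", "on", "desk"),
    ("man", "on", "horse"),
]

plain = recall_at_k(gt, preds, k=3)
mean = mean_recall_at_k(gt, preds, k=3)
print(plain, mean)
```

In this toy case the biased model scores well on plain recall but is penalized on mean recall because it misses the only "riding" relation, which is why mR@K is used as the unbiased metric.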

Visual Genome

GQA
