Search Results for author: Siyang Song

Found 26 papers, 17 papers with code

Multi-scale Dynamic and Hierarchical Relationship Modeling for Facial Action Units Recognition

1 code implementation • 9 Apr 2024 • Zihan Wang, Siyang Song, Cheng Luo, Songhe Deng, Weicheng Xie, Linlin Shen

Human facial action units (AUs) are mutually related in a hierarchical manner: not only are they associated with each other in both the spatial and temporal domains, but AUs located in the same or nearby facial regions also show stronger relationships than those located in different facial regions.

Boosting Adversarial Transferability across Model Genus by Deformation-Constrained Warping

2 code implementations • 6 Feb 2024 • Qinliang Lin, Cheng Luo, Zenghao Niu, Xilin He, Weicheng Xie, Yuanbo Hou, Linlin Shen, Siyang Song

Adversarial examples generated by a surrogate model typically exhibit limited transferability to unknown target systems.

REACT 2024: the Second Multiple Appropriate Facial Reaction Generation Challenge

1 code implementation • 10 Jan 2024 • Siyang Song, Micol Spitale, Cheng Luo, Cristina Palmero, German Barquero, Hengde Zhu, Sergio Escalera, Michel Valstar, Tobias Baur, Fabien Ringeval, Elisabeth Andre, Hatice Gunes

In dyadic interactions, humans communicate their intentions and state of mind using verbal and non-verbal cues, where multiple different facial reactions might be appropriate in response to a specific speaker behaviour.

Multi-level graph learning for audio event classification and human-perceived annoyance rating prediction

1 code implementation • 15 Dec 2023 • Yuanbo Hou, Qiaoqiao Ren, Siyang Song, Yuxin Song, Wenwu Wang, Dick Botteldooren

Specifically, this paper proposes a lightweight multi-level graph learning (MLGL) model based on local and global semantic graphs to simultaneously perform audio event classification (AEC) and human annoyance rating prediction (ARP).

Audio Event-Relational Graph Representation Learning for Acoustic Scene Classification

1 code implementation • 5 Oct 2023 • Yuanbo Hou, Siyang Song, Chuang Yu, Wenwu Wang, Dick Botteldooren

The results show the feasibility of recognizing diverse acoustic scenes based on the audio event-relational graph.

Acoustic Scene Classification

Graph Representation Learning

Joint Prediction of Audio Event and Annoyance Rating in an Urban Soundscape by Hierarchical Graph Representation Learning

1 code implementation • 23 Aug 2023 • Yuanbo Hou, Siyang Song, Cheng Luo, Andrew Mitchell, Qiaoqiao Ren, Weicheng Xie, Jian Kang, Wenwu Wang, Dick Botteldooren

Sound events in daily life carry rich information about the objective world.

MRecGen: Multimodal Appropriate Reaction Generator

no code implementations • 5 Jul 2023 • Jiaqi Xu, Cheng Luo, Weicheng Xie, Linlin Shen, Xiaofeng Liu, Lu Liu, Hatice Gunes, Siyang Song

Verbal and non-verbal human reaction generation is a challenging task, as different reactions could be appropriate for responding to the same behaviour.

REACT2023: the first Multi-modal Multiple Appropriate Facial Reaction Generation Challenge

1 code implementation • 11 Jun 2023 • Siyang Song, Micol Spitale, Cheng Luo, German Barquero, Cristina Palmero, Sergio Escalera, Michel Valstar, Tobias Baur, Fabien Ringeval, Elisabeth Andre, Hatice Gunes

The Multi-modal Multiple Appropriate Facial Reaction Generation Challenge (REACT2023) is the first competition event focused on evaluating multimedia processing and machine learning techniques for generating human-appropriate facial reactions in various dyadic interaction scenarios, with all participants competing strictly under the same conditions.

ReactFace: Multiple Appropriate Facial Reaction Generation in Dyadic Interactions

1 code implementation • 25 May 2023 • Cheng Luo, Siyang Song, Weicheng Xie, Micol Spitale, Linlin Shen, Hatice Gunes

ReactFace generates multiple different but appropriate photo-realistic human facial reactions by (i) learning an appropriate facial reaction distribution representing multiple appropriate facial reactions; and (ii) synchronizing the generated facial reactions with the speaker's verbal and non-verbal behaviours at each time stamp, resulting in realistic 2D facial reaction sequences.

Reversible Graph Neural Network-based Reaction Distribution Learning for Multiple Appropriate Facial Reactions Generation

1 code implementation • 24 May 2023 • Tong Xu, Micol Spitale, Hao Tang, Lu Liu, Hatice Gunes, Siyang Song

This means that we approach this problem by generating a distribution of the listener's appropriate facial reactions instead of multiple different appropriate facial reactions, i.e., 'many' appropriate facial reaction labels are summarised as 'one' distribution label during training.
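The "many labels summarised as one distribution label" idea can be illustrated with a minimal sketch, assuming each appropriate reaction is a fixed-length vector of facial attributes and that an independent Gaussian per dimension is an adequate summary. This is not the authors' method (the paper learns the distribution with a reversible graph neural network); the functions `summarise_reactions` and `sample_reaction` are hypothetical names used only for illustration.

```python
import math
import random
from statistics import fmean, pvariance


def summarise_reactions(reactions):
    """Collapse several appropriate reaction vectors into one distribution label.

    Hypothetical illustration: each feature dimension is modelled as an
    independent Gaussian and returned as (means, variances).
    """
    if not reactions:
        raise ValueError("need at least one reaction")
    dim = len(reactions[0])
    if any(len(r) != dim for r in reactions):
        raise ValueError("all reactions must have the same length")
    columns = list(zip(*reactions))  # one tuple per feature dimension
    means = [fmean(c) for c in columns]
    variances = [pvariance(c) for c in columns]  # population variance
    return means, variances


def sample_reaction(means, variances, seed=None):
    """Draw one new reaction vector from the summarised Gaussian label."""
    rng = random.Random(seed)
    return [rng.gauss(m, math.sqrt(v)) for m, v in zip(means, variances)]


if __name__ == "__main__":
    labels = [[0.0, 1.0], [2.0, 1.0], [4.0, 1.0]]  # three "appropriate" reactions
    mu, var = summarise_reactions(labels)
    print(mu, var)
    print(sample_reaction(mu, var, seed=0))
```

Training against one distribution label rather than several conflicting targets avoids averaging them into a single blurred reaction; sampling from the distribution then yields different reactions for the same input.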

Spatio-Temporal AU Relational Graph Representation Learning For Facial Action Units Detection

1 code implementation • 19 Mar 2023 • Zihan Wang, Siyang Song, Cheng Luo, Yuzhi Zhou, Shiling Wu, Weicheng Xie, Linlin Shen

This paper presents our Facial Action Units (AUs) detection submission to the fifth Affective Behavior Analysis in-the-wild Competition (ABAW).

Multiple Appropriate Facial Reaction Generation in Dyadic Interaction Settings: What, Why and How?

1 code implementation • 13 Feb 2023 • Siyang Song, Micol Spitale, Yiming Luo, Batuhan Bal, Hatice Gunes

However, none has attempted to automatically generate multiple appropriate reactions in the context of dyadic interactions and to evaluate the appropriateness of those reactions using objective measures.

Shift from Texture-bias to Shape-bias: Edge Deformation-based Augmentation for Robust Object Recognition

no code implementations • ICCV 2023 • Xilin He, Qinliang Lin, Cheng Luo, Weicheng Xie, Siyang Song, Feng Liu, Linlin Shen

Recent studies have shown the vulnerability of CNNs to perturbation noise, which is partially because well-trained CNNs are too biased toward object texture, i.e., they make predictions mainly based on texture cues.

Fourier-Net: Fast Image Registration with Band-limited Deformation

1 code implementation • 29 Nov 2022 • Xi Jia, Joseph Bartlett, Wei Chen, Siyang Song, Tianyang Zhang, Xinxing Cheng, Wenqi Lu, Zhaowen Qiu, Jinming Duan

Specifically, instead of having our Fourier-Net output a full-resolution displacement field in the spatial domain, we learn its low-dimensional representation in a band-limited Fourier domain.
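The band-limited idea can be illustrated with a 1D toy sketch: a small, centred block of low-frequency coefficients is zero-padded to the full spectrum and inverted to recover a full-resolution displacement field. It assumes the predicted block has odd length and is Hermitian-symmetric (so the result is real); Fourier-Net itself works on 2D/3D fields, and its actual decoder may differ. The helper names `idft` and `band_limited_to_full` are hypothetical, and the naive O(N²) inverse DFT is used only to keep the example dependency-free.

```python
import cmath


def idft(coeffs):
    """Naive inverse DFT of a length-N complex sequence (O(N^2), for illustration)."""
    n = len(coeffs)
    return [
        sum(c * cmath.exp(2j * cmath.pi * k * t / n) for k, c in enumerate(coeffs)) / n
        for t in range(n)
    ]


def band_limited_to_full(low_freq, full_len):
    """Zero-pad a centred band of low-frequency coefficients and invert it.

    low_freq is ordered by frequency [-half, ..., 0, ..., +half]; all higher
    frequencies are assumed to be zero (the band-limited assumption).
    """
    m = len(low_freq)
    if m % 2 == 0 or m > full_len:
        raise ValueError("expected an odd-length band no longer than full_len")
    half = m // 2
    spectrum = [0j] * full_len
    for i, c in enumerate(low_freq):
        k = i - half                      # signed frequency of this coefficient
        spectrum[k % full_len] += c       # map to standard DFT index order
    return [v.real for v in idft(spectrum)]  # real part = spatial displacements


if __name__ == "__main__":
    # 3 coefficients describe an 8-sample displacement field.
    field = band_limited_to_full([0.5, 2.0, 0.5], 8)
    print([round(v, 3) for v in field])
```

The point of the design is that the network only has to regress `m` numbers per axis instead of `full_len`, while the inverse transform guarantees a smooth full-resolution field.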

Ranked #3 on Medical Image Registration on OASIS (val DSC metric)

GRATIS: Deep Learning Graph Representation with Task-specific Topology and Multi-dimensional Edge Features

1 code implementation • 19 Nov 2022 • Siyang Song, Yuxin Song, Cheng Luo, Zhiyuan Song, Selim Kuzucu, Xi Jia, Zhijiang Guo, Weicheng Xie, Linlin Shen, Hatice Gunes

Our framework is effective, robust and flexible, and is a plug-and-play module that can be combined with different backbones and Graph Neural Networks (GNNs) to generate a task-specific graph representation from various graph and non-graph data.

Multi-dimensional Edge-based Audio Event Relational Graph Representation Learning for Acoustic Scene Classification

1 code implementation • 27 Oct 2022 • Yuanbo Hou, Siyang Song, Chuang Yu, Yuxin Song, Wenwu Wang, Dick Botteldooren

Experiments on a polyphonic acoustic scene dataset show that the proposed ERGL achieves competitive performance on ASC using only a limited number of audio event embeddings, without any data augmentation.

Acoustic Scene Classification

Graph Representation Learning

An Open-source Benchmark of Deep Learning Models for Audio-visual Apparent and Self-reported Personality Recognition

1 code implementation • 17 Oct 2022 • Rongfan Liao, Siyang Song, Hatice Gunes

Personality determines a wide variety of human behaviours in daily life and at work, and is crucial for understanding human internal and external states.

Scale-free and Task-agnostic Attack: Generating Photo-realistic Adversarial Patterns with Patch Quilting Generator

no code implementations • 12 Aug 2022 • Xiangbo Gao, Cheng Luo, Qinliang Lin, Weicheng Xie, Minmin Liu, Linlin Shen, Keerthy Kusumam, Siyang Song

Traditional L_p-norm-restricted image attack algorithms suffer from poor transferability to black-box scenarios and poor robustness to defense algorithms.

Learning Multi-dimensional Edge Feature-based AU Relation Graph for Facial Action Unit Recognition

2 code implementations • 2 May 2022 • Cheng Luo, Siyang Song, Weicheng Xie, Linlin Shen, Hatice Gunes

While the relationship between a pair of AUs can be complex and unique, existing approaches fail to specifically and explicitly represent such cues for each pair of AUs in each facial display.

Ranked #3 on Facial Action Unit Detection on DISFA

Two-stage Temporal Modelling Framework for Video-based Depression Recognition using Graph Representation

no code implementations • 30 Nov 2021 • Jiaqi Xu, Siyang Song, Keerthy Kusumam, Hatice Gunes, Michel Valstar

The short-term depressive behaviour modelling stage first uses deep learning to extract depression-related facial behavioural features at multiple short temporal scales, where a Depression Feature Enhancement (DFE) module is proposed to enhance the depression-related cues at all temporal scales and remove depression-irrelevant noise.

Inferring User Facial Affect in Work-like Settings

no code implementations • 22 Nov 2021 • Chaudhary Muhammad Aqdus Ilyas, Siyang Song, Hatice Gunes

Unlike the six basic emotions of happiness, sadness, fear, anger, disgust and surprise, modelling and predicting dimensional affect in terms of valence (positivity - negativity) and arousal (intensity) has proven to be more flexible, applicable and useful for naturalistic and real-world settings.

Learning Graph Representation of Person-specific Cognitive Processes from Audio-visual Behaviours for Automatic Personality Recognition

no code implementations • 26 Oct 2021 • Siyang Song, Zilong Shao, Shashank Jaiswal, Linlin Shen, Michel Valstar, Hatice Gunes

This approach builds on the following two findings in cognitive science: (i) human cognition partially determines expressed behaviour and is directly linked to true personality traits; and (ii) in dyadic interactions, individuals' nonverbal behaviours are influenced by their conversational partners' behaviours.

EMOPAIN Challenge 2020: Multimodal Pain Evaluation from Facial and Bodily Expressions

no code implementations • 21 Jan 2020 • Joy O. Egede, Siyang Song, Temitayo A. Olugbade, Chongyang Wang, Amanda Williams, Hongying Meng, Min Aung, Nicholas D. Lane, Michel Valstar, Nadia Bianchi-Berthouze

The EmoPain 2020 Challenge is the first international competition aimed at creating a uniform platform for the comparison of machine learning and multimedia processing methods of automatic chronic pain assessment from human expressive behaviour, and also the identification of pain-related behaviours.

AVEC 2019 Workshop and Challenge: State-of-Mind, Detecting Depression with AI, and Cross-Cultural Affect Recognition

no code implementations • 10 Jul 2019 • Fabien Ringeval, Björn Schuller, Michel Valstar, Nicholas Cummins, Roddy Cowie, Leili Tavabi, Maximilian Schmitt, Sina Alisamir, Shahin Amiriparian, Eva-Maria Messner, Siyang Song, Shuo Liu, Ziping Zhao, Adria Mallol-Ragolta, Zhao Ren, Mohammad Soleymani, Maja Pantic

The Audio/Visual Emotion Challenge and Workshop (AVEC 2019) "State-of-Mind, Detecting Depression with AI, and Cross-cultural Affect Recognition" is the ninth competition event aimed at the comparison of multimedia processing and machine learning methods for automatic audiovisual health and emotion analysis, with all participants competing strictly under the same conditions.

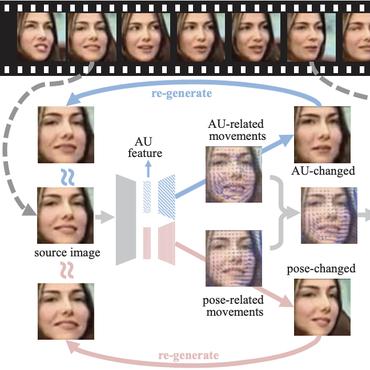

Inferring Dynamic Representations of Facial Actions from a Still Image

no code implementations • 4 Apr 2019 • Siyang Song, Enrique Sánchez-Lozano, Linlin Shen, Alan Johnston, Michel Valstar

We present a novel approach to capture multiple scales of such temporal dynamics, with an application to facial Action Unit (AU) intensity estimation and dimensional affect estimation.

Noise Invariant Frame Selection: A Simple Method to Address the Background Noise Problem for Text-independent Speaker Verification

1 code implementation • 3 May 2018 • Siyang Song, Shuimei Zhang, Björn Schuller, Linlin Shen, Michel Valstar

The performance of speaker-related systems usually degrades heavily in practical applications largely due to the presence of background noise.