Search Results for author: Xun Huang

Found 18 papers, 10 papers with code

Commonsense Prototype for Outdoor Unsupervised 3D Object Detection

1 code implementation • 25 Apr 2024 • Hai Wu, Shijia Zhao, Xun Huang, Chenglu Wen, Xin Li, Cheng Wang

The prevalent approaches to unsupervised 3D object detection follow cluster-based pseudo-label generation and iterative self-training processes.
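
The cluster-based pseudo-label step mentioned above can be sketched roughly as follows. This is a minimal illustration of the generic baseline the abstract contrasts against, not the paper's commonsense-prototype method. It assumes single-linkage clustering with a fixed distance threshold and axis-aligned boxes. The function names `cluster_points` and `pseudo_boxes` and the `radius`/`min_points` defaults are hypothetical; practical pipelines usually fit oriented boxes and then refine them through self-training.

```python
import math
from typing import Dict, List, Tuple

Point = Tuple[float, float, float]
Box = Tuple[Point, Point]  # (min corner, max corner), axis-aligned


def cluster_points(points: List[Point], radius: float) -> List[List[int]]:
    """Single-linkage clustering: points closer than `radius` share a cluster."""
    parent = list(range(len(points)))

    def find(i: int) -> int:
        while parent[i] != i:
            parent[i] = parent[parent[i]]  # path halving keeps trees shallow
            i = parent[i]
        return i

    for i in range(len(points)):
        for j in range(i + 1, len(points)):
            if math.dist(points[i], points[j]) < radius:
                parent[find(i)] = find(j)  # union the two clusters

    groups: Dict[int, List[int]] = {}
    for i in range(len(points)):
        groups.setdefault(find(i), []).append(i)
    return list(groups.values())


def pseudo_boxes(points: List[Point], radius: float = 0.5, min_points: int = 3) -> List[Box]:
    """Hypothetical helper: fit an axis-aligned box to every large enough cluster."""
    boxes = []
    for idx in cluster_points(points, radius):
        if len(idx) < min_points:
            continue  # drop tiny clusters as likely noise
        xs, ys, zs = zip(*(points[i] for i in idx))
        boxes.append(((min(xs), min(ys), min(zs)), (max(xs), max(ys), max(zs))))
    return boxes
```

The resulting boxes would serve as initial pseudo-labels for training a detector, which is then used to relabel the data in later self-training rounds.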

Sunshine to Rainstorm: Cross-Weather Knowledge Distillation for Robust 3D Object Detection

1 code implementation • 28 Feb 2024 • Xun Huang, Hai Wu, Xin Li, Xiaoliang Fan, Chenglu Wen, Cheng Wang

LiDAR-based 3D object detection models have traditionally struggled under rainy conditions due to the degraded and noisy scanning signals.

DiffCollage: Parallel Generation of Large Content with Diffusion Models

no code implementations • CVPR 2023 • Qinsheng Zhang, Jiaming Song, Xun Huang, Yongxin Chen, Ming-Yu Liu

We present DiffCollage, a compositional diffusion model that can generate large content by leveraging diffusion models trained on generating pieces of the large content.
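
To illustrate why models trained on pieces can be composed, consider the simplest case: a large sample split into two pieces $(x_1, x_2)$ and $(x_2, x_3)$ that overlap in $x_2$. Assuming $x_1$ and $x_3$ are conditionally independent given $x_2$, the joint density factorizes, and its score becomes a sum and difference of piece-level scores that can each be evaluated independently (and hence in parallel). The notation here is illustrative and not taken from the paper.

```latex
p(x_1, x_2, x_3) = \frac{p(x_1, x_2)\, p(x_2, x_3)}{p(x_2)}
\quad\Longrightarrow\quad
\nabla \log p(x_1, x_2, x_3) = \nabla \log p(x_1, x_2) + \nabla \log p(x_2, x_3) - \nabla \log p(x_2)
```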

Magic3D: High-Resolution Text-to-3D Content Creation

1 code implementation • CVPR 2023 • Chen-Hsuan Lin, Jun Gao, Luming Tang, Towaki Takikawa, Xiaohui Zeng, Xun Huang, Karsten Kreis, Sanja Fidler, Ming-Yu Liu, Tsung-Yi Lin

DreamFusion has recently demonstrated the utility of a pre-trained text-to-image diffusion model to optimize Neural Radiance Fields (NeRF), achieving remarkable text-to-3D synthesis results.

Ranked #2 on Text to 3D on T³Bench

eDiff-I: Text-to-Image Diffusion Models with an Ensemble of Expert Denoisers

2 code implementations • 2 Nov 2022 • Yogesh Balaji, Seungjun Nah, Xun Huang, Arash Vahdat, Jiaming Song, Qinsheng Zhang, Karsten Kreis, Miika Aittala, Timo Aila, Samuli Laine, Bryan Catanzaro, Tero Karras, Ming-Yu Liu

Therefore, in contrast to existing works, we propose to train an ensemble of text-to-image diffusion models specialized for different synthesis stages.

Ranked #14 on Text-to-Image Generation on MS COCO
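
One way to picture an ensemble of denoisers "specialized for different synthesis stages" is to route each denoising call by the current noise level. The sketch below assumes that stages correspond to noise-level intervals. The class names, the three interval boundaries, and `toy_denoiser` are all hypothetical placeholders, not eDiff-I's actual configuration; in a real system each expert would be a full diffusion network.

```python
from dataclasses import dataclass
from typing import Callable, List

# A denoiser maps (noisy sample, noise level sigma) -> denoised estimate.
Denoiser = Callable[[List[float], float], List[float]]


@dataclass
class Expert:
    name: str
    sigma_min: float  # inclusive lower bound of the noise interval it handles
    sigma_max: float  # exclusive upper bound
    denoise: Denoiser


class ExpertEnsemble:
    """Routes each denoising call to the expert that owns the current noise level."""

    def __init__(self, experts: List[Expert]):
        self.experts = sorted(experts, key=lambda e: e.sigma_min)

    def select(self, sigma: float) -> Expert:
        for expert in self.experts:
            if expert.sigma_min <= sigma < expert.sigma_max:
                return expert
        raise ValueError(f"no expert covers sigma={sigma}")

    def __call__(self, x: List[float], sigma: float) -> List[float]:
        return self.select(sigma).denoise(x, sigma)


def toy_denoiser(x: List[float], sigma: float) -> List[float]:
    # Hypothetical placeholder: shrink toward zero, more strongly at high noise.
    return [v / (1.0 + sigma ** 2) for v in x]


ensemble = ExpertEnsemble([
    Expert("high-noise", 10.0, float("inf"), toy_denoiser),
    Expert("mid-noise", 1.0, 10.0, toy_denoiser),
    Expert("low-noise", 0.0, 1.0, toy_denoiser),
])
```

During sampling, early (high-noise) steps would hit the first expert and late (low-noise) steps the last, so each network only needs to learn the behavior of its own stage.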

Multimodal Conditional Image Synthesis with Product-of-Experts GANs

no code implementations • 9 Dec 2021 • Xun Huang, Arun Mallya, Ting-Chun Wang, Ming-Yu Liu

Existing conditional image synthesis frameworks generate images based on user inputs in a single modality, such as text, segmentation, sketch, or style reference.
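
A product of experts lets a model condition on any subset of modalities. A standard way to see this is with Gaussian experts: multiplying Gaussian densities gives another Gaussian whose precision is the sum of the experts' precisions and whose mean is the precision-weighted average of their means. The sketch below is a 1-D illustration of that general identity under that assumption, not PoE-GAN's exact parameterization; the function name and the expert values in the usage note are hypothetical.

```python
from typing import List, Tuple

Gaussian = Tuple[float, float]  # (mean, variance) of a 1-D Gaussian expert


def product_of_gaussians(experts: List[Gaussian]) -> Gaussian:
    """Multiply Gaussian densities: precisions add, means are precision-weighted."""
    if not experts:
        raise ValueError("need at least one expert")
    precision = sum(1.0 / var for _, var in experts)
    mean = sum(mu / var for mu, var in experts) / precision
    return mean, 1.0 / precision
```

For example, combining a prior expert `(0.0, 1.0)` with a text expert `(2.0, 1.0)` yields `(1.0, 0.5)`. Modalities the user does not supply are simply left out of the list, and keeping a prior expert in the list means the product is still defined when no modality is given.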

Implementing an Improved Test of Matrix Rank in Stata

no code implementations • 1 Aug 2021 • Qihui Chen, Zheng Fang, Xun Huang

We develop a Stata command, bootranktest, for implementing the matrix rank test of Chen and Fang (2019) in linear instrumental variable regression models.

Generative Adversarial Networks for Image and Video Synthesis: Algorithms and Applications

no code implementations • 6 Aug 2020 • Ming-Yu Liu, Xun Huang, Jiahui Yu, Ting-Chun Wang, Arun Mallya

The generative adversarial network (GAN) framework has emerged as a powerful tool for various image and video synthesis tasks, allowing the synthesis of visual content in an unconditional or input-conditional manner.

PointFlow: 3D Point Cloud Generation with Continuous Normalizing Flows

12 code implementations • ICCV 2019 • Guandao Yang, Xun Huang, Zekun Hao, Ming-Yu Liu, Serge Belongie, Bharath Hariharan

Specifically, we learn a two-level hierarchy of distributions where the first level is the distribution of shapes and the second level is the distribution of points given a shape.

Ranked #4 on Point Cloud Generation on ShapeNet Car
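
The two-level hierarchy described above amounts to a specific sampling order: first draw a shape, then draw points independently given that shape. PointFlow models both levels with continuous normalizing flows; the sketch below swaps them for hand-written toy distributions (noisy spheres) purely to show that order. `Shape`, `sample_shape`, and `sample_points` are hypothetical names.

```python
import math
import random
from dataclasses import dataclass
from typing import List, Tuple

Point = Tuple[float, float, float]


@dataclass
class Shape:
    center: Point
    radius: float


def sample_shape(rng: random.Random) -> Shape:
    """Level 1: draw a shape from a toy shape distribution (random spheres)."""
    center = (rng.gauss(0, 1), rng.gauss(0, 1), rng.gauss(0, 1))
    return Shape(center, rng.uniform(0.5, 2.0))


def sample_points(shape: Shape, n: int, rng: random.Random, noise: float = 0.01) -> List[Point]:
    """Level 2: draw n i.i.d. points from the distribution of points given the shape."""
    points = []
    for _ in range(n):
        # Uniform direction on the unit sphere via a normalized Gaussian vector.
        d = [rng.gauss(0, 1) for _ in range(3)]
        norm = math.sqrt(sum(c * c for c in d)) or 1.0
        r = shape.radius + rng.gauss(0, noise)  # small radial jitter
        points.append(tuple(shape.center[i] + r * d[i] / norm for i in range(3)))
    return points
```

Because points are conditionally independent given the shape, the same shape can be resampled at any point count, which is one practical benefit of separating the two levels.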

Ranked #4 on

Point Cloud Generation

on ShapeNet Car

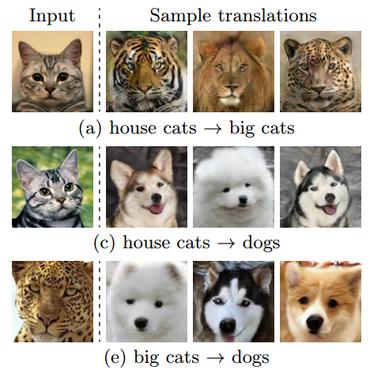

Few-Shot Unsupervised Image-to-Image Translation

10 code implementations • ICCV 2019 • Ming-Yu Liu, Xun Huang, Arun Mallya, Tero Karras, Timo Aila, Jaakko Lehtinen, Jan Kautz

Unsupervised image-to-image translation methods learn to map images in a given class to an analogous image in a different class, drawing on unstructured (non-registered) datasets of images.

Learning to Evaluate Image Captioning

1 code implementation • CVPR 2018 • Yin Cui, Guandao Yang, Andreas Veit, Xun Huang, Serge Belongie

To address these two challenges, we propose a novel learning-based discriminative evaluation metric that is directly trained to distinguish between human and machine-generated captions.

Controllable Video Generation With Sparse Trajectories

no code implementations • CVPR 2018 • Zekun Hao, Xun Huang, Serge Belongie

Video generation and manipulation is an important yet challenging task in computer vision.

Multimodal Unsupervised Image-to-Image Translation

14 code implementations • ECCV 2018 • Xun Huang, Ming-Yu Liu, Serge Belongie, Jan Kautz

To translate an image to another domain, we recombine its content code with a random style code sampled from the style space of the target domain.
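
The recombination step can be sketched as follows: the content code is held fixed, and each translation pairs it with a freshly sampled style code, so repeated sampling gives multiple outputs for one input. The sketch assumes the content code has already been produced by a content encoder and that the target style prior is a standard normal. `sample_style`, `translate`, and `toy_decoder` are hypothetical; in particular, the toy decoder's scale-and-shift merely stands in for a learned neural decoder.

```python
import random
from typing import Callable, List

Code = List[float]
Decoder = Callable[[Code, Code], Code]


def sample_style(dim: int, rng: random.Random) -> Code:
    """Draw a style code from the target domain's prior, assumed N(0, I)."""
    return [rng.gauss(0.0, 1.0) for _ in range(dim)]


def translate(content: Code, decode: Decoder, style_dim: int,
              rng: random.Random, n: int = 1) -> List[Code]:
    """Keep the content code fixed; pair it with n random target-domain styles."""
    return [decode(content, sample_style(style_dim, rng)) for _ in range(n)]


def toy_decoder(content: Code, style: Code) -> Code:
    # Hypothetical stand-in for the target-domain decoder: the style code
    # sets a scale and shift applied to every entry of the content code.
    scale, shift = 1.0 + style[0], style[1]
    return [scale * c + shift for c in content]
```

Calling `translate(content, toy_decoder, 8, random.Random(42), n=3)` returns three different outputs for the same content code, which is the sense in which the translation is multimodal.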

Arbitrary Style Transfer in Real-time with Adaptive Instance Normalization

28 code implementations • ICCV 2017 • Xun Huang, Serge Belongie

Gatys et al. recently introduced a neural algorithm that renders a content image in the style of another image, achieving so-called style transfer.

Stacked Generative Adversarial Networks

2 code implementations • CVPR 2017 • Xun Huang, Yixuan Li, Omid Poursaeed, John Hopcroft, Serge Belongie

In this paper, we propose a novel generative model named Stacked Generative Adversarial Networks (SGAN), which is trained to invert the hierarchical representations of a bottom-up discriminative network.

Ranked #11 on Conditional Image Generation on CIFAR-10 (Inception score metric)

SALICON: Reducing the Semantic Gap in Saliency Prediction by Adapting Deep Neural Networks

no code implementations • ICCV 2015 • Xun Huang, Chengyao Shen, Xavier Boix, Qi Zhao

Saliency in Context (SALICON) is an ongoing effort that aims at understanding and predicting visual attention.

Top-Down Learning for Structured Labeling with Convolutional Pseudoprior

no code implementations • 23 Nov 2015 • Saining Xie, Xun Huang, Zhuowen Tu

Current practice in convolutional neural networks (CNNs) remains largely bottom-up, and the role of top-down processes in CNNs for pattern analysis and visual inference is not very clear.

Learning of Proto-object Representations via Fixations on Low Resolution

no code implementations • 23 Dec 2014 • Chengyao Shen, Xun Huang, Qi Zhao

Visualizations also show that these features are selective to potential objects in the scene and the responses of these features work well in predicting eye fixations on the images when combined with learned weights.