DenseMamba: State Space Models with Dense Hidden Connection for Efficient Large Language Models

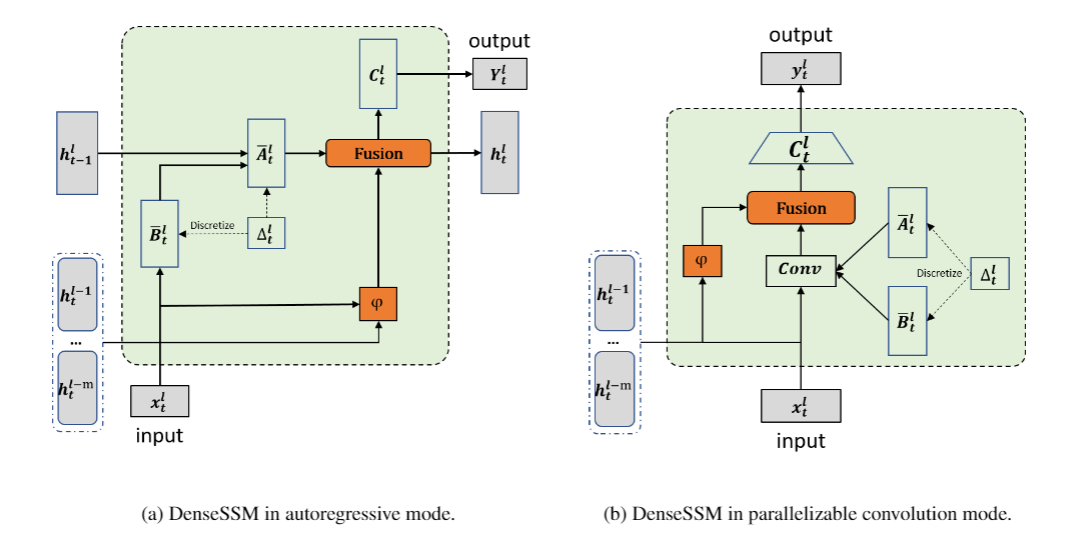

Large language models (LLMs) face a daunting challenge due to the excessive computational and memory requirements of the commonly used Transformer architecture. While state space model (SSM) is a new type of foundational network architecture offering lower computational complexity, their performance has yet to fully rival that of Transformers. This paper introduces DenseSSM, a novel approach to enhance the flow of hidden information between layers in SSMs. By selectively integrating shallowlayer hidden states into deeper layers, DenseSSM retains fine-grained information crucial for the final output. Dense connections enhanced DenseSSM still maintains the training parallelizability and inference efficiency. The proposed method can be widely applicable to various SSM types like RetNet and Mamba. With similar model size, DenseSSM achieves significant improvements, exemplified by DenseRetNet outperforming the original RetNet with up to 5% accuracy improvement on public benchmarks. code is avalaible at https://github.com/WailordHe/DenseSSM

PDF Abstract

HellaSwag

HellaSwag

BoolQ

BoolQ

PIQA

PIQA

OpenBookQA

OpenBookQA

WinoGrande

WinoGrande

SciQ

SciQ