DNABERT-2: Efficient Foundation Model and Benchmark For Multi-Species Genome

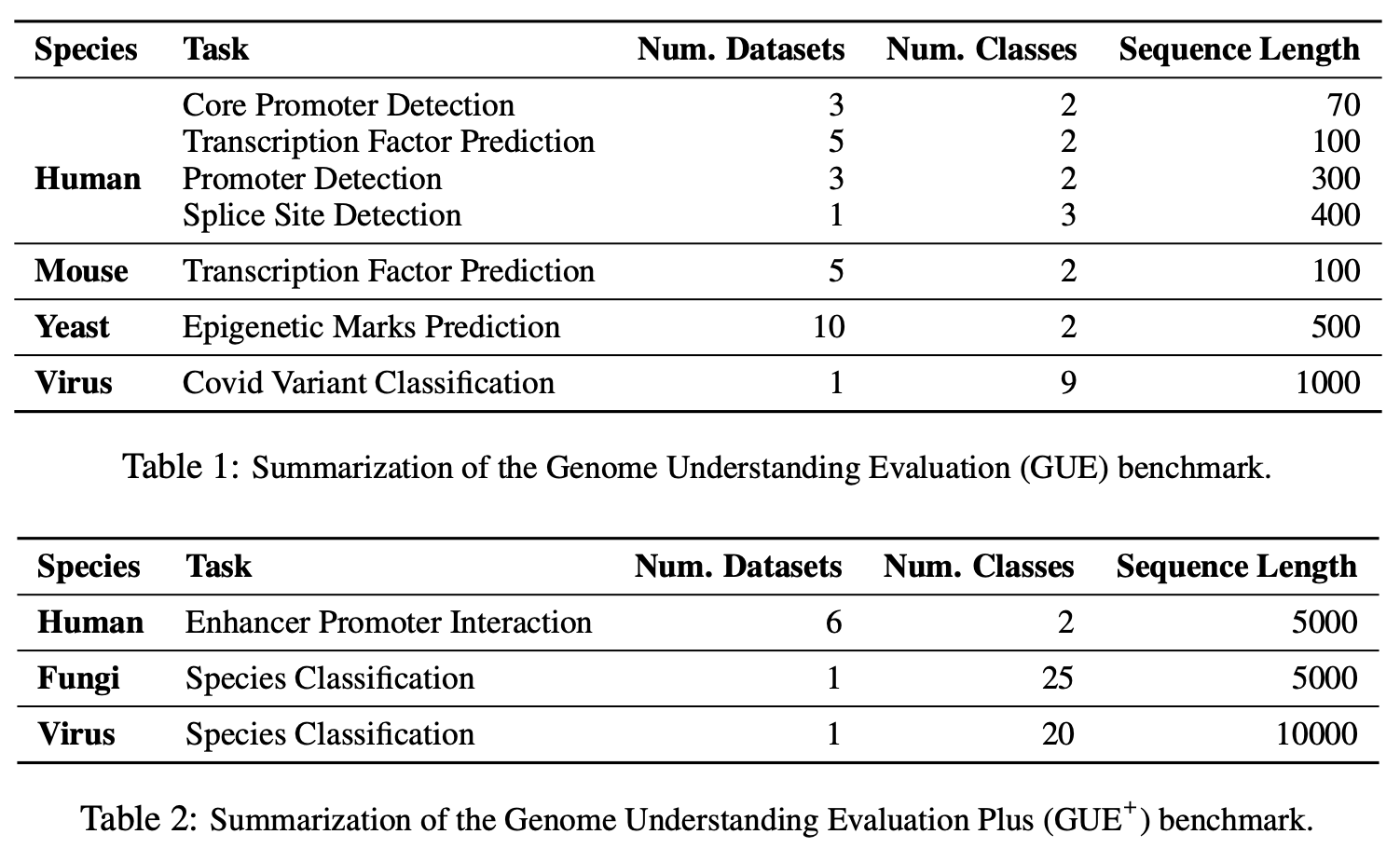

Decoding the linguistic intricacies of the genome is a crucial problem in biology, and pre-trained foundational models such as DNABERT and Nucleotide Transformer have made significant strides in this area. Existing works have largely hinged on k-mers, fixed-length strings of A, T, C, and G, as the tokens of the genome language due to their simplicity. However, we argue that the computation and sample inefficiencies introduced by k-mer tokenization are primary obstacles in developing large genome foundational models. We provide conceptual and empirical insights into genome tokenization, building on which we propose to replace k-mer tokenization with Byte Pair Encoding (BPE), a statistics-based data compression algorithm that constructs tokens by iteratively merging the most frequent co-occurring genome segments in the corpus. We demonstrate that BPE not only overcomes the limitations of k-mer tokenization but also benefits from the computational efficiency of non-overlapping tokenization. Based on these insights, we introduce DNABERT-2, a refined genome foundation model that adopts an efficient tokenizer and employs multiple strategies to overcome input length constraints, reduce time and memory expenditure, and enhance model capability. Furthermore, we identify the absence of a comprehensive and standardized benchmark for genome understanding as another significant impediment to fair comparative analysis. In response, we propose the Genome Understanding Evaluation (GUE), a comprehensive multi-species genome classification dataset that amalgamates $36$ distinct datasets across $9$ tasks, with input lengths ranging from $70$ to $10000$. Through comprehensive experiments on the GUE benchmark, we demonstrate that DNABERT-2 achieves comparable performance to the state-of-the-art model with $21 \times$ fewer parameters and approximately $92 \times$ less GPU time in pre-training.
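To make the tokenization contrast concrete, here is a minimal sketch of overlapping k-mers, non-overlapping k-mers, and a toy BPE learner that repeatedly merges the most frequent adjacent pair of tokens. It is not DNABERT-2's actual tokenizer, which is trained on a large multi-species corpus. The function names (`overlapping_kmers`, `non_overlapping_kmers`, `train_bpe`, `merge_pair`, `bpe_tokenize`) and the two-sequence toy corpus are hypothetical, chosen only for illustration.

```python
from collections import Counter


def overlapping_kmers(seq: str, k: int) -> list[str]:
    """Overlapping k-mer tokenization (stride 1): roughly one token per base."""
    return [seq[i:i + k] for i in range(len(seq) - k + 1)]


def non_overlapping_kmers(seq: str, k: int) -> list[str]:
    """Non-overlapping k-mer tokenization (stride k); a short tail is kept as-is."""
    return [seq[i:i + k] for i in range(0, len(seq), k)]


def merge_pair(tokens: list[str], pair: tuple[str, str]) -> list[str]:
    """Replace every left-to-right occurrence of `pair` with one fused token."""
    merged, i = [], 0
    while i < len(tokens):
        if i + 1 < len(tokens) and (tokens[i], tokens[i + 1]) == pair:
            merged.append(tokens[i] + tokens[i + 1])
            i += 2
        else:
            merged.append(tokens[i])
            i += 1
    return merged


def train_bpe(corpus: list[str], num_merges: int) -> list[tuple[str, str]]:
    """Learn BPE merges by repeatedly fusing the most frequent adjacent pair."""
    # Start from single nucleotides.
    sequences = [list(seq) for seq in corpus]
    merges: list[tuple[str, str]] = []
    for _ in range(num_merges):
        pair_counts: Counter = Counter()
        for tokens in sequences:
            pair_counts.update(zip(tokens, tokens[1:]))
        if not pair_counts:
            break  # every sequence is already a single token
        # Most frequent pair; ties go to the pair counted first.
        best = max(pair_counts, key=pair_counts.get)
        merges.append(best)
        sequences = [merge_pair(tokens, best) for tokens in sequences]
    return merges


def bpe_tokenize(seq: str, merges: list[tuple[str, str]]) -> list[str]:
    """Tokenize a new sequence by replaying the learned merges in order."""
    tokens = list(seq)
    for pair in merges:
        tokens = merge_pair(tokens, pair)
    return tokens


if __name__ == "__main__":
    merges = train_bpe(["ATATATGC", "ATATCG"], num_merges=2)
    print(merges)                               # [('A', 'T'), ('AT', 'AT')]
    print(bpe_tokenize("ATATATGC", merges))      # ['ATAT', 'AT', 'G', 'C']
    print(overlapping_kmers("ATATATGC", 3))      # 6 tokens for 8 bases
    print(non_overlapping_kmers("ATATATGC", 3))  # ['ATA', 'TAT', 'GC']
```

On this toy input, overlapping 3-mers produce 6 tokens for 8 bases. Non-overlapping 3-mers and the learned BPE vocabulary both produce shorter sequences. Unlike fixed-length k-mers, BPE assigns longer tokens to frequently recurring segments.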

Tasks

Computational Efficiency

Core Promoter Detection

Covid Variant Prediction

Data Compression

DNA analysis

Epigenetic Marks Prediction

Genome Understanding

Promoter Detection

Splice Site Prediction

Transcription Factor Binding Site Prediction

Transcription Factor Binding Site Prediction (Human)

Transcription Factor Binding Site Prediction (Mouse)

Datasets

Introduced in the Paper:

GUE