nuScenes: A multimodal dataset for autonomous driving

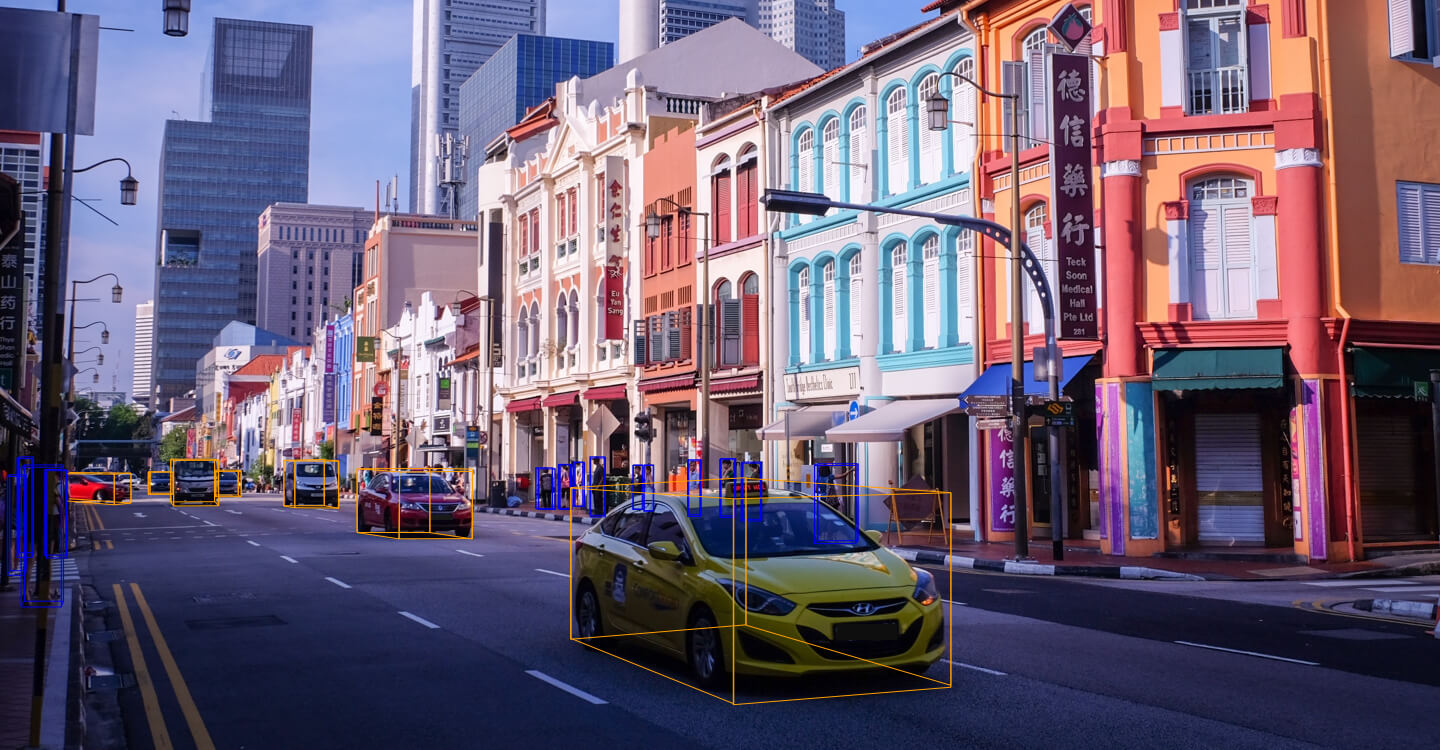

Robust detection and tracking of objects is crucial for the deployment of autonomous vehicle technology. Image-based benchmark datasets have driven development in computer vision tasks such as object detection, tracking and segmentation of agents in the environment. Most autonomous vehicles, however, carry a combination of cameras and range sensors such as lidar and radar. As machine-learning-based methods for detection and tracking become more prevalent, there is a need to train and evaluate such methods on datasets containing range sensor data along with images. In this work we present nuTonomy scenes (nuScenes), the first dataset to carry the full autonomous vehicle sensor suite: 6 cameras, 5 radars and 1 lidar, all with full 360-degree field of view. nuScenes comprises 1000 scenes, each 20 s long and fully annotated with 3D bounding boxes for 23 classes and 8 attributes. It has 7x as many annotations and 100x as many images as the pioneering KITTI dataset. We define novel 3D detection and tracking metrics. We also provide careful dataset analysis as well as baselines for lidar- and image-based detection and tracking. Data, development kit and more information are available online.
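To make the headline figures in the abstract concrete, the following is a minimal sketch that records them in a small data structure and derives two totals by simple arithmetic. The class name `DatasetSummary` and its field names are hypothetical and chosen only for illustration; they are not part of the nuScenes development kit. The numbers themselves are taken directly from the abstract.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DatasetSummary:
    """Headline figures quoted in the abstract (illustrative names only)."""
    num_scenes: int
    scene_length_s: int
    num_cameras: int
    num_radars: int
    num_lidars: int
    num_classes: int
    num_attributes: int

    @property
    def total_sensors(self) -> int:
        # Cameras, radars and lidars together form the sensor suite.
        return self.num_cameras + self.num_radars + self.num_lidars

    @property
    def total_duration_s(self) -> int:
        # Every scene has the same nominal length.
        return self.num_scenes * self.scene_length_s

    @property
    def total_duration_h(self) -> float:
        return self.total_duration_s / 3600


# Values as stated in the abstract.
NUSCENES = DatasetSummary(
    num_scenes=1000,
    scene_length_s=20,
    num_cameras=6,
    num_radars=5,
    num_lidars=1,
    num_classes=23,
    num_attributes=8,
)

if __name__ == "__main__":
    print(f"sensors: {NUSCENES.total_sensors}")             # sensors: 12
    print(f"duration: {NUSCENES.total_duration_s} s")       # duration: 20000 s
    print(f"duration: {NUSCENES.total_duration_h:.1f} h")   # duration: 5.6 h
```

Under these figures, the 1000 scenes of 20 s each amount to 20000 s, or roughly 5.6 hours of annotated driving, captured by 12 sensors in total.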

CVPR 2020

Datasets

Introduced in the Paper:

nuScenes

nuScenes LiDAR only

Used in the Paper:

ImageNet

Cityscapes

KITTI

ssd

ApolloScape

A*3D

H3D

Results from the Paper

Ranked #312 on 3D Object Detection on nuScenes (using extra training data)
