Image-to-Image Translation

491 papers with code • 37 benchmarks • 29 datasets

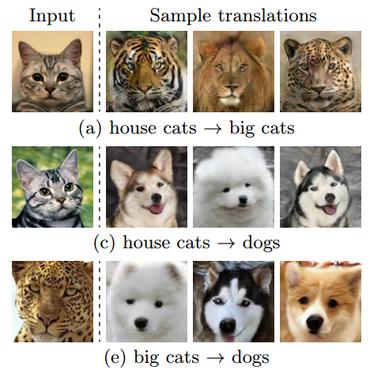

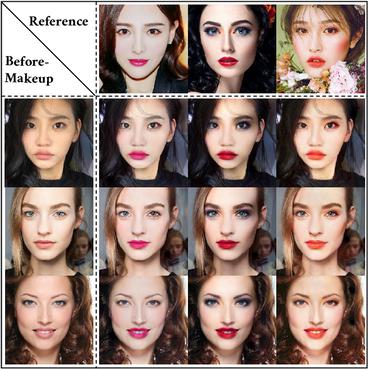

Image-to-Image Translation is a computer vision task in which a model learns a mapping from an input image to an output image, so that the output serves a specific purpose such as style transfer, data augmentation, or image restoration.

(Image credit: Unpaired Image-to-Image Translation using Cycle-Consistent Adversarial Networks)
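To make the idea of "learning a mapping between an input image and an output image" concrete, here is a minimal sketch under strong assumptions: the training images come in aligned pairs, and the mapping is just a per-channel gain and offset fitted by least squares. This is a toy stand-in for illustration only, not the cycle-consistent adversarial method credited above or any model listed on this page. The function names (`fit_channel_affine`, `fit_translation`, `translate`) and the image representation (a flat list of RGB tuples in [0, 1]) are hypothetical choices made for this example.

```python
from statistics import fmean


def fit_channel_affine(src, dst):
    """Least-squares fit of dst ≈ gain * src + offset for one color channel."""
    mean_s, mean_d = fmean(src), fmean(dst)
    var_s = sum((s - mean_s) ** 2 for s in src)
    if var_s == 0:
        # Constant input channel: only an offset can be estimated.
        return 1.0, mean_d - mean_s
    cov = sum((s - mean_s) * (d - mean_d) for s, d in zip(src, dst))
    gain = cov / var_s
    return gain, mean_d - gain * mean_s


def fit_translation(pairs):
    """Fit one (gain, offset) per RGB channel from paired images.

    pairs: list of (input_image, target_image), where each image is a
    flat list of (r, g, b) tuples and paired images have equal length.
    """
    params = []
    for c in range(3):
        src = [px[c] for inp, _ in pairs for px in inp]
        dst = [px[c] for _, tgt in pairs for px in tgt]
        params.append(fit_channel_affine(src, dst))
    return params


def translate(image, params):
    """Apply the fitted mapping to a new image, clamping values to [0, 1]."""
    return [
        tuple(min(1.0, max(0.0, g * px[c] + b)) for c, (g, b) in enumerate(params))
        for px in image
    ]


# Usage: learn the mapping from one paired example, then apply it to an unseen image.
source = [(0.0, 0.2, 0.4), (0.5, 0.6, 0.8), (1.0, 0.1, 0.3)]
target = [(0.5 * r + 0.1, 0.8 * g, 0.5 * b + 0.25) for r, g, b in source]
learned = fit_translation([(source, target)])
restyled = translate([(0.4, 0.5, 0.6)], learned)
```

Real image-to-image translation models replace the per-channel affine map with a deep network and, in the unpaired setting, replace the paired least-squares objective with adversarial and consistency losses. The fit-then-apply structure is the part this sketch is meant to show.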

Libraries

Use these libraries to find Image-to-Image Translation models and implementations.

Datasets

Subtasks

Latest papers

WB LUTs: Contrastive Learning for White Balancing Lookup Tables

Automatic white balancing (AWB), one of the first steps in an integrated signal processing (ISP) pipeline, aims to correct the color cast induced by the scene illuminant.

Implicit Multi-Spectral Transformer: An Lightweight and Effective Visible to Infrared Image Translation Model

Initially, the Texture Mapping Module and Color Perception Adapter collaborate to extract texture and color features from the visible light image.

Anatomical Conditioning for Contrastive Unpaired Image-to-Image Translation of Optical Coherence Tomography Images

For a unified analysis of medical images from different modalities, data harmonization using image-to-image (I2I) translation is desired.

MoMA: Multimodal LLM Adapter for Fast Personalized Image Generation

This approach effectively synergizes reference image and text prompt information to produce valuable image features, facilitating an image diffusion model.

SDXS: Real-Time One-Step Latent Diffusion Models with Image Conditions

Recent advancements in diffusion models have positioned them at the forefront of image generation.

Shadow Generation for Composite Image Using Diffusion model

In the realm of image composition, generating realistic shadow for the inserted foreground remains a formidable challenge.

Generalized Consistency Trajectory Models for Image Manipulation

Diffusion-based generative models excel in unconditional generation, as well as on applied tasks such as image editing and restoration.

Multiscale Low-Frequency Memory Network for Improved Feature Extraction in Convolutional Neural Networks

Responding to these complexities, we introduce a novel framework, the Multiscale Low-Frequency Memory (MLFM) Network, with the goal to harness the full potential of CNNs while keeping their complexity unchanged.

Spectrum Translation for Refinement of Image Generation (STIG) Based on Contrastive Learning and Spectral Filter Profile

We evaluate our framework across eight fake image datasets and various cutting-edge models to demonstrate the effectiveness of STIG.

NocPlace: Nocturnal Visual Place Recognition via Generative and Inherited Knowledge Transfer

Visual Place Recognition (VPR) is crucial in computer vision, aiming to retrieve database images similar to a query image from an extensive collection of known images.

Cityscapes

KITTI

ADE20K

CelebA-HQ

SYNTHIA

GTA5

DeepFashion

Perceptual Similarity

AFHQ

COCO-Stuff