Image-to-Image Translation

492 papers with code • 37 benchmarks • 29 datasets

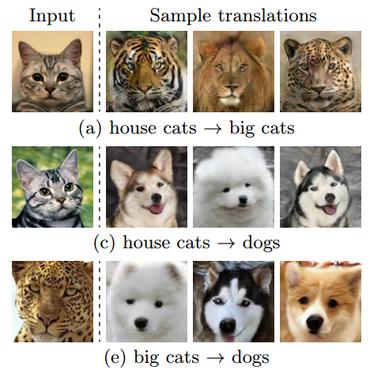

Image-to-Image Translation is a computer vision and machine learning task in which the goal is to learn a mapping from an input image to an output image. Typical applications include style transfer, data augmentation, and image restoration.

(Image credit: Unpaired Image-to-Image Translation using Cycle-Consistent Adversarial Networks)
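To make "learning a mapping between an input image and an output image" concrete, here is a minimal toy sketch. It assumes paired training images and replaces the deep networks used in practice (GANs, diffusion models) with a single per-pixel affine map `y = a * x + b` fitted by least squares. The function names `fit_affine_mapping` and `translate`, and the toy data, are hypothetical and chosen only for illustration.

```python
def fit_affine_mapping(inputs, targets):
    """Least-squares fit of y = a * x + b over all paired pixels.

    inputs/targets: lists of grayscale images, each a list of rows of floats.
    This is a toy stand-in for a learned translation model, not a real method.
    """
    xs = [p for img in inputs for row in img for p in row]
    ys = [p for img in targets for row in img for p in row]
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    var_x = sum((x - mean_x) ** 2 for x in xs)
    cov_xy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    a = cov_xy / var_x          # slope (contrast change)
    b = mean_y - a * mean_x     # intercept (brightness shift)
    return a, b


def translate(image, a, b):
    """Apply the learned mapping to every pixel of a new input image."""
    return [[a * p + b for p in row] for row in image]


# Toy paired data (assumption): each target is a darker, shifted source image.
source_images = [
    [[0.0, 0.2], [0.4, 0.6]],
    [[0.8, 1.0], [0.1, 0.3]],
]
target_images = [
    [[0.5 * p + 0.2 for p in row] for row in img] for img in source_images
]

a, b = fit_affine_mapping(source_images, target_images)
output = translate([[0.0, 1.0]], a, b)
```

The same structure (fit a mapping on example images, then apply it to unseen inputs) carries over to real systems, where the affine map is replaced by a neural network and the least-squares fit by adversarial, cycle-consistency, or diffusion training objectives.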

Latest papers

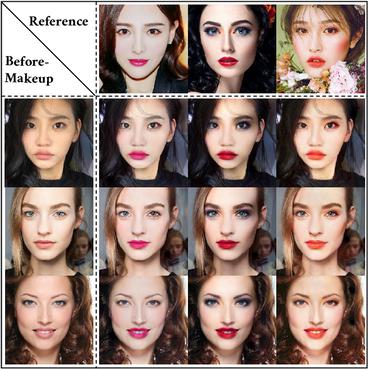

Fine-grained Appearance Transfer with Diffusion Models

A pivotal aspect of our approach is the strategic use of the predicted $x_0$ space by diffusion models within the latent space of diffusion processes.

A deep learning approach for marine snow synthesis and removal

Marine snow, the floating particles in underwater images, severely degrades the visibility and performance of human and machine vision systems.

H-Packer: Holographic Rotationally Equivariant Convolutional Neural Network for Protein Side-Chain Packing

Accurately modeling protein 3D structure is essential for the design of functional proteins.

Optimal Transport-Guided Conditional Score-Based Diffusion Models

The conditional score-based diffusion model (SBDM) generates target data conditioned on paired data and has achieved great success in image translation.

TPSeNCE: Towards Artifact-Free Realistic Rain Generation for Deraining and Object Detection in Rain

We first introduce a Triangular Probability Similarity (TPS) constraint to guide the generated images toward clear and rainy images in the discriminator manifold, thereby minimizing artifacts and distortions during rain generation.

Adaptive Latent Diffusion Model for 3D Medical Image to Image Translation: Multi-modal Magnetic Resonance Imaging Study

Our model exhibited successful image synthesis across different source-target modality scenarios and surpassed other models in quantitative evaluations tested on multi-modal brain magnetic resonance imaging datasets of four different modalities and an independent IXI dataset.

WAIT: Feature Warping for Animation to Illustration video Translation using GANs

Current state-of-the-art video-to-video translation models rely on having a video sequence or a single style image to stylize an input video.

VI-Diff: Unpaired Visible-Infrared Translation Diffusion Model for Single Modality Labeled Visible-Infrared Person Re-identification

In this paper, we propose VI-Diff, a diffusion model that effectively addresses the task of Visible-Infrared person image translation.

Image-to-Image Translation with Deep Reinforcement Learning

The key feature of the RL-I2IT framework is to decompose a monolithic learning process into small steps, using a lightweight model to progressively transform a source image into a target image.

Masked Discriminators for Content-Consistent Unpaired Image-to-Image Translation

In this work, we show that masking the inputs of a global discriminator for both domains with a content-based mask is sufficient to reduce content inconsistencies significantly.

Datasets

Cityscapes

KITTI

ADE20K

CelebA-HQ

SYNTHIA

GTA5

DeepFashion

Perceptual Similarity

AFHQ

COCO-Stuff