Image-to-Image Translation

491 papers with code • 37 benchmarks • 29 datasets

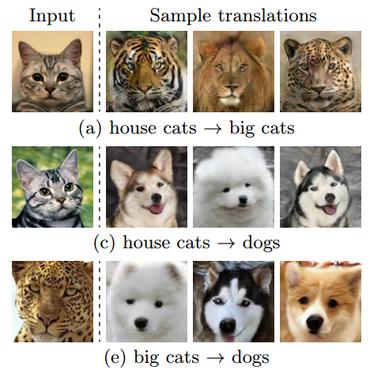

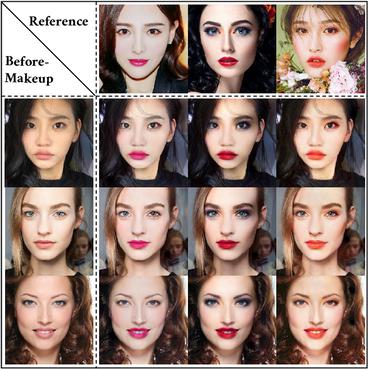

Image-to-Image Translation is a task in computer vision and machine learning where the goal is to learn a mapping from an input image to a corresponding output image. The learned mapping can then be applied to tasks such as style transfer, data augmentation, or image restoration.

(Image credit: Unpaired Image-to-Image Translation using Cycle-Consistent Adversarial Networks)
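The credited CycleGAN paper learns such a mapping without paired examples. It trains two translators, G from domain X to Y and F from Y back to X, and penalizes round trips that fail to return the original image. The following is a minimal NumPy sketch of that cycle-consistency term, not the paper's code. The brightness-shift "translators" are hypothetical stand-ins for trained networks. The sketch also uses a per-pixel mean absolute error, a normalized form of the paper's L1 penalty.

```python
import numpy as np

def cycle_consistency_loss(x, y, G, F):
    """L1-style cycle-consistency loss in the spirit of CycleGAN.

    G maps domain X -> Y and F maps Y -> X. Translating an image to the
    other domain and back should recover the original image.
    """
    forward_cycle = np.mean(np.abs(F(G(x)) - x))   # x -> G(x) -> F(G(x)) should equal x
    backward_cycle = np.mean(np.abs(G(F(y)) - y))  # y -> F(y) -> G(F(y)) should equal y
    return forward_cycle + backward_cycle

# Hypothetical toy translators: a brightness shift and its exact inverse.
G = lambda img: img + 0.2
F = lambda img: img - 0.2
F_bad = lambda img: img  # hypothetical translator that does NOT undo G

rng = np.random.default_rng(0)
x = rng.random((4, 8, 8, 3))  # batch of four 8x8 RGB images from domain X
y = rng.random((4, 8, 8, 3))  # batch of four 8x8 RGB images from domain Y

good = cycle_consistency_loss(x, y, G, F)      # near zero: round trips are exact
bad = cycle_consistency_loss(x, y, G, F_bad)   # about 0.2 per direction
print(good, bad)
```

In full training this term is weighted and added to the adversarial losses. On its own it only rewards invertibility, which the toy shift satisfies trivially.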


Most implemented papers

Domain Adaptation for Structured Output via Discriminative Patch Representations

Predicting structured outputs such as semantic segmentation relies on expensive per-pixel annotations to learn supervised models like convolutional neural networks.

Attention-Guided Generative Adversarial Networks for Unsupervised Image-to-Image Translation

The paper proposes a novel Attention-Guided Generative Adversarial Network (AGGAN), which can detect the most discriminative semantic object and minimize changes to unwanted parts in semantic manipulation problems, without using extra data or models.
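The excerpt does not spell out AGGAN's architecture. The sketch below assumes one common way that attention-guided translators leave unwanted regions unchanged. In that formulation, the generator outputs both a content image and a single-channel attention mask in [0, 1], and the two are blended with the input pixel by pixel. The mask and content arrays are hypothetical placeholders, not outputs of a trained model.

```python
import numpy as np

def attention_blend(x, content, attention):
    """Compose a translated image from generator content and an attention mask.

    attention lies in [0, 1]: 1 means "use the generated content",
    0 means "keep the input pixel unchanged".
    """
    return attention * content + (1.0 - attention) * x

rng = np.random.default_rng(0)
x = rng.random((8, 8, 3))        # input image, shape (H, W, C)
content = rng.random((8, 8, 3))  # hypothetical generator content output
attention = np.zeros((8, 8, 1))  # single-channel mask, broadcast over RGB
attention[2:6, 2:6] = 1.0        # pretend the object of interest is in the center

y = attention_blend(x, content, attention)
```

Wherever the mask is 0, the input passes through untouched. This is how such a formulation keeps changes confined to the attended object.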

GANimation: Anatomically-aware Facial Animation from a Single Image

Recent advances in Generative Adversarial Networks (GANs) have shown impressive results for the task of facial expression synthesis.

Diverse Image-to-Image Translation via Disentangled Representations

Our model takes the encoded content features extracted from a given input and the attribute vectors sampled from the attribute space to produce diverse outputs at test time.
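The sentence above describes how sampling attribute vectors at test time produces diverse outputs. The following sketch illustrates that idea under stated assumptions. Random linear layers stand in for the trained content encoder and generator; `encode_content` and `decode` are hypothetical. The attribute space is assumed to have a standard normal prior. The content features are computed once, and each freshly sampled attribute vector yields a different output.

```python
import numpy as np

rng = np.random.default_rng(0)

CONTENT_DIM, ATTR_DIM, OUT_DIM = 16, 8, 32

# Hypothetical random linear layers standing in for trained networks.
W_content = rng.normal(size=(CONTENT_DIM, 64))
W_decode = rng.normal(size=(OUT_DIM, CONTENT_DIM + ATTR_DIM))

def encode_content(x):
    """Toy content encoder: maps a flattened 64-d input to content features."""
    return np.tanh(W_content @ x)

def decode(content, attribute):
    """Toy generator: combines content features with an attribute vector."""
    return np.tanh(W_decode @ np.concatenate([content, attribute]))

x = rng.random(64)     # a flattened 8x8 grayscale input
c = encode_content(x)  # content features are computed once

# At test time, sample several attribute vectors from the assumed N(0, I) prior.
outputs = [decode(c, rng.standard_normal(ATTR_DIM)) for _ in range(3)]
```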

Unsupervised Cross-Domain Image Generation

We study the problem of transferring a sample in one domain to an analog sample in another domain.

Invertible Conditional GANs for image editing

Generative Adversarial Networks (GANs) have recently been shown to successfully approximate complex data distributions.

DualGAN: Unsupervised Dual Learning for Image-to-Image Translation

Depending on the task complexity, thousands to millions of labeled image pairs are needed to train a conditional GAN.

Virtual to Real Reinforcement Learning for Autonomous Driving

To our knowledge, this is the first successful case of a driving policy trained by reinforcement learning that can adapt to real-world driving data.

Toward Multimodal Image-to-Image Translation

Our proposed method encourages bijective consistency between the latent encoding and output modes.
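One ingredient associated with this kind of bijective consistency is a latent regression term. The term samples a latent code z, generates an output, encodes that output back into latent space, and penalizes the L1 distance between the recovered and sampled codes. The sketch below is a simplified illustration, not the paper's implementation. It assumes toy `generate` and `encode` functions (hypothetical, built so the encoder exactly inverts the z-dependent part of the output). The loss vanishes when z is recoverable from the output and grows when it is not.

```python
import numpy as np

rng = np.random.default_rng(0)
A_DIM, Z_DIM = 4, 8

# Hypothetical square mixing matrix (invertible with probability 1).
M = rng.normal(size=(Z_DIM, Z_DIM))

def generate(a, z):
    """Toy generator: input content followed by a z-dependent 'style' component."""
    return np.concatenate([a, M @ z])

def encode(b):
    """Toy encoder: recovers z from the style component of an output."""
    return np.linalg.solve(M, b[A_DIM:])

def latent_regression_loss(a, z, generate, encode):
    """Mean L1 distance between the sampled code and the code recovered from the output."""
    z_hat = encode(generate(a, z))
    return np.mean(np.abs(z_hat - z))

a = rng.random(A_DIM)           # conditioning input
z = rng.standard_normal(Z_DIM)  # sampled latent code
loss_inverse = latent_regression_loss(a, z, generate, encode)
loss_blind = latent_regression_loss(a, z, generate, lambda b: np.zeros(Z_DIM))
```

A generator that ignores z would make every encoder "blind" like the second case. Penalizing this loss therefore pushes different codes toward different outputs.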

CartoonGAN: Generative Adversarial Networks for Photo Cartoonization

Two novel losses suitable for cartoonization are proposed: (1) a semantic content loss, which is formulated as a sparse regularization in the high-level feature maps of the VGG network to cope with substantial style variation between photos and cartoons, and (2) an edge-promoting adversarial loss for preserving clear edges.
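The two losses can be sketched as functions over precomputed quantities. This is a minimal illustration, not CartoonGAN's implementation. The VGG feature extractor, generator, and discriminator are omitted, so the feature maps and discriminator probabilities are stand-in arrays. The content loss uses an L1 distance, which is what makes the regularization sparse. The discriminator loss follows the edge-promoting idea described above: real cartoons count as real, while edge-smoothed cartoons and generated images both count as fake.

```python
import numpy as np

def semantic_content_loss(feat_generated, feat_photo):
    """Mean L1 distance between high-level feature maps of G(p) and photo p.

    In CartoonGAN these would be VGG feature maps; here they are plain arrays.
    """
    return np.mean(np.abs(feat_generated - feat_photo))

def edge_promoting_d_loss(d_cartoon, d_edge_smoothed, d_generated, eps=1e-12):
    """Discriminator loss to minimize, given D's probabilities of 'real cartoon'.

    Real cartoons should score 1; edge-smoothed cartoons and generated
    images should both score 0. eps guards against log(0).
    """
    return -(np.mean(np.log(d_cartoon + eps))
             + np.mean(np.log(1.0 - d_edge_smoothed + eps))
             + np.mean(np.log(1.0 - d_generated + eps)))

rng = np.random.default_rng(0)
feat_p = rng.random((8, 4, 4))  # hypothetical feature maps for a photo p
feat_g = feat_p + 0.1           # hypothetical features of G(p), uniformly shifted
content = semantic_content_loss(feat_g, feat_p)   # about 0.1

half = np.full(4, 0.5)          # an undecided discriminator
d_loss = edge_promoting_d_loss(half, half, half)  # about 3 * ln 2
```

Training smoothed-edge cartoons as negatives means the discriminator cannot accept blurry outlines. The generator is then pushed toward producing clear edges.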

Datasets

Cityscapes

KITTI

ADE20K

CelebA-HQ

SYNTHIA

GTA5

DeepFashion

Perceptual Similarity

AFHQ

COCO-Stuff