Epipolar Transformers

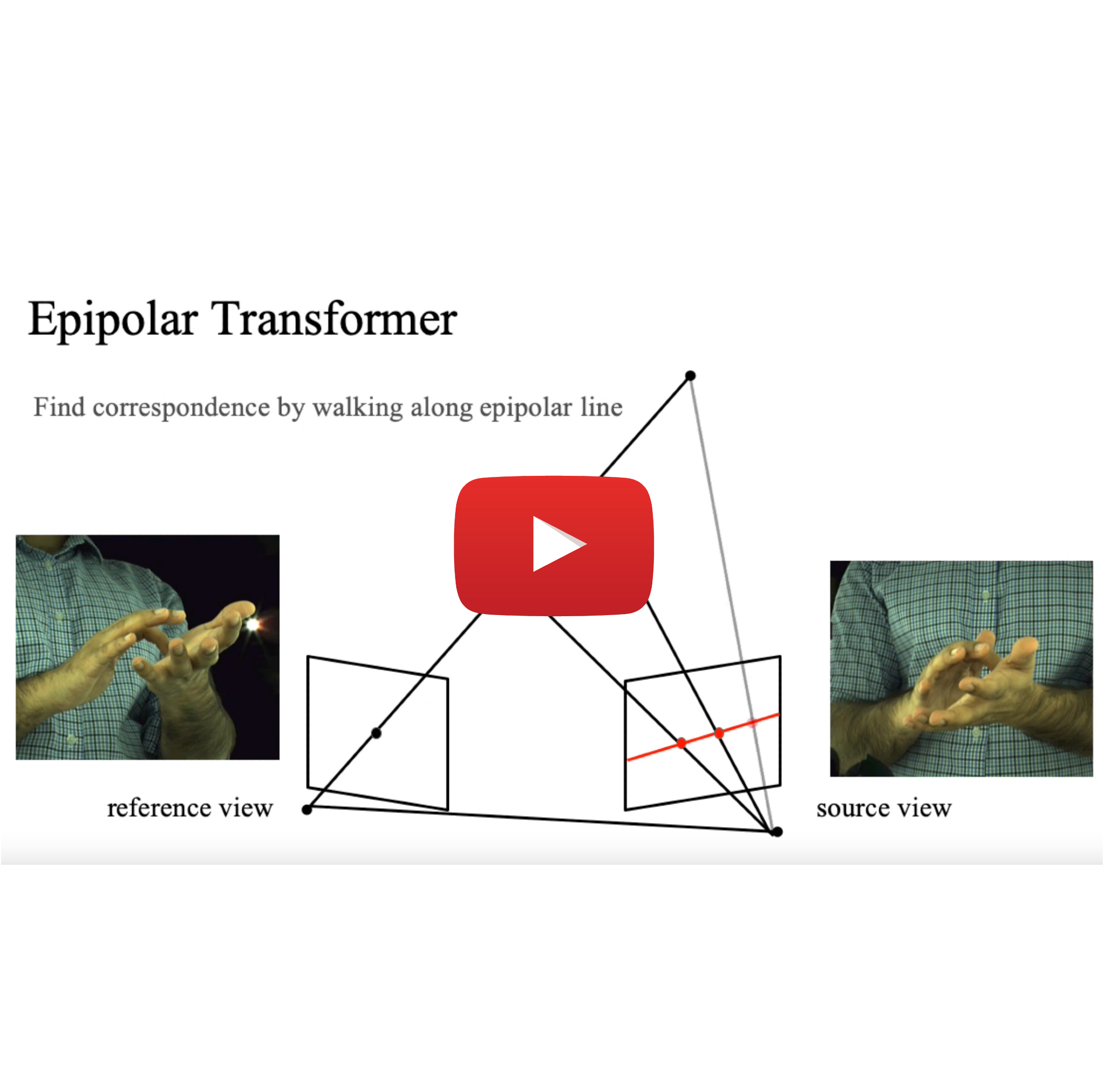

A common approach to localizing 3D human joints in a synchronized and calibrated multi-view setup consists of two steps: (1) apply a 2D detector separately to each view to localize joints in 2D, and (2) perform robust triangulation on the 2D detections from each view to obtain the 3D joint locations. However, in step 1 the 2D detector must resolve challenging cases, such as occlusions and oblique viewing angles, purely in 2D, even though they could potentially be better resolved with 3D information. Therefore, we propose the differentiable "epipolar transformer", which enables the 2D detector to leverage 3D-aware features to improve 2D pose estimation. The intuition is as follows: given a 2D location p in the current view, we first find its corresponding point p' in a neighboring view, and then combine the features at p' with the features at p, yielding a 3D-aware feature at p. Inspired by stereo matching, the epipolar transformer leverages epipolar constraints and feature matching to approximate the features at p'. Experiments on InterHand and Human3.6M show that our approach consistently improves over the baselines. Specifically, when no external data is used, our Human3.6M model trained with a ResNet-50 backbone and image size 256 × 256 outperforms the state of the art by 4.23 mm and achieves an MPJPE of 26.9 mm.
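The intuition in the abstract (look along the epipolar line in the neighboring view, match features, then combine) can be sketched in a few lines of plain Python. This is a minimal illustration and not the authors' implementation. It assumes a known fundamental matrix `F` between the two views, feature maps stored as nested lists indexed `[row][col][channel]`, nearest-pixel sampling, dot-product similarity with a softmax, and fusion by simple addition. The function names `epipolar_line`, `sample_line`, and `epipolar_fuse` are hypothetical.

```python
import math


def epipolar_line(F, p):
    """Return (a, b, c) with a*x + b*y + c = 0: the epipolar line in the
    neighboring view for pixel p = (x, y) in the current view (l = F p)."""
    x, y = p
    return tuple(row[0] * x + row[1] * y + row[2] for row in F)


def sample_line(line, width, height, num_samples):
    """Sample nearest-pixel locations (u, v) on the line, keeping only
    those that fall inside a width x height image."""
    if num_samples < 2:
        raise ValueError("num_samples must be at least 2")
    a, b, c = line
    if a == 0 and b == 0:  # degenerate line, nothing to sample
        return []
    points = []
    for i in range(num_samples):
        t = i / (num_samples - 1)
        if abs(b) >= abs(a):  # closer to horizontal: step along x
            u = t * (width - 1)
            v = -(a * u + c) / b
        else:  # closer to vertical: step along y
            v = t * (height - 1)
            u = -(b * v + c) / a
        u, v = round(u), round(v)
        if 0 <= u < width and 0 <= v < height:
            points.append((u, v))
    return points


def epipolar_fuse(feat_cur, feat_nbr, F, p, num_samples=64):
    """Approximate the feature at p' by a softmax-weighted sum of neighbor
    features along the epipolar line, then add it to the feature at p.
    Addition is an assumed fusion rule, chosen for simplicity."""
    x, y = p
    query = feat_cur[y][x]
    height, width = len(feat_nbr), len(feat_nbr[0])
    candidates = sample_line(epipolar_line(F, p), width, height, num_samples)
    if not candidates:  # line misses the neighbor image: keep the 2D feature
        return list(query)
    keys = [feat_nbr[v][u] for (u, v) in candidates]
    # Dot-product similarity between the query and each candidate feature.
    scores = [sum(q * k for q, k in zip(query, key)) for key in keys]
    top = max(scores)  # subtract the max for a numerically stable softmax
    weights = [math.exp(s - top) for s in scores]
    total = sum(weights)
    weights = [w / total for w in weights]
    matched = [
        sum(w * key[ch] for w, key in zip(weights, keys))
        for ch in range(len(query))
    ]
    return [q + m for q, m in zip(query, matched)]
```

For a rectified stereo pair the epipolar line is the image row through p, so `F = [[0, 0, 0], [0, 0, -1], [0, 1, 0]]` makes `epipolar_line(F, (1, 2))` describe the row `y = 2`. Because every step is a differentiable weighted sum, the same idea carries over to a tensor framework, where bilinear sampling would typically replace the nearest-pixel lookup used here.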

CVPR 2020

| Task | Dataset | Model | Metric Name | Metric Value | Global Rank |
|---|---|---|---|---|---|
| 3D Human Pose Estimation | Human3.6M | Epipolar Transformer+R152 384×384 | Average MPJPE (mm) | 19.0 | # 7 |
| 3D Human Pose Estimation | Human3.6M | Epipolar Transformer+R152 384×384 | Using 2D ground-truth joints | No | # 2 |
| 3D Human Pose Estimation | Human3.6M | Epipolar Transformer+R152 384×384 | Multi-View or Monocular | Multi-View | # 1 |
| 3D Human Pose Estimation | Human3.6M | Epipolar Transformer+R50 256×256+RPSM | Average MPJPE (mm) | 26.9 | # 23 |
| 3D Human Pose Estimation | Human3.6M | Epipolar Transformer+R50 256×256+RPSM | Using 2D ground-truth joints | No | # 2 |
| 3D Human Pose Estimation | Human3.6M | Epipolar Transformer+R50 256×256+RPSM | Multi-View or Monocular | Multi-View | # 1 |
| 3D Hand Pose Estimation | InterHand2.6M | Epipolar Transformers | MPJPE | 4.91 | # 1 |
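The MPJPE values reported here are mean per-joint position errors, that is, the average Euclidean distance between predicted and ground-truth joint locations. A minimal sketch of the metric follows. The function name `mpjpe` is hypothetical, and any alignment step applied before measuring (for example root-joint alignment) is left out because the evaluation protocol is not stated here.

```python
import math


def mpjpe(pred, gt):
    """Mean Euclidean distance between corresponding joints.

    pred and gt are equal-length sequences of (x, y, z) coordinates;
    the result is in the same unit as the inputs (e.g. mm).
    """
    if len(pred) != len(gt) or not pred:
        raise ValueError("pred and gt must be non-empty and equally long")
    return sum(math.dist(p, g) for p, g in zip(pred, gt)) / len(pred)
```

For example, with two joints where one prediction is off by 5 mm and the other is exact, `mpjpe([(0, 0, 0), (1, 0, 0)], [(3, 4, 0), (1, 0, 0)])` averages the two distances 5 and 0.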

Datasets: MS COCO, Human3.6M, MPII, InterHand2.6M