Towards an Effective and Efficient Transformer for Rain-by-snow Weather Removal

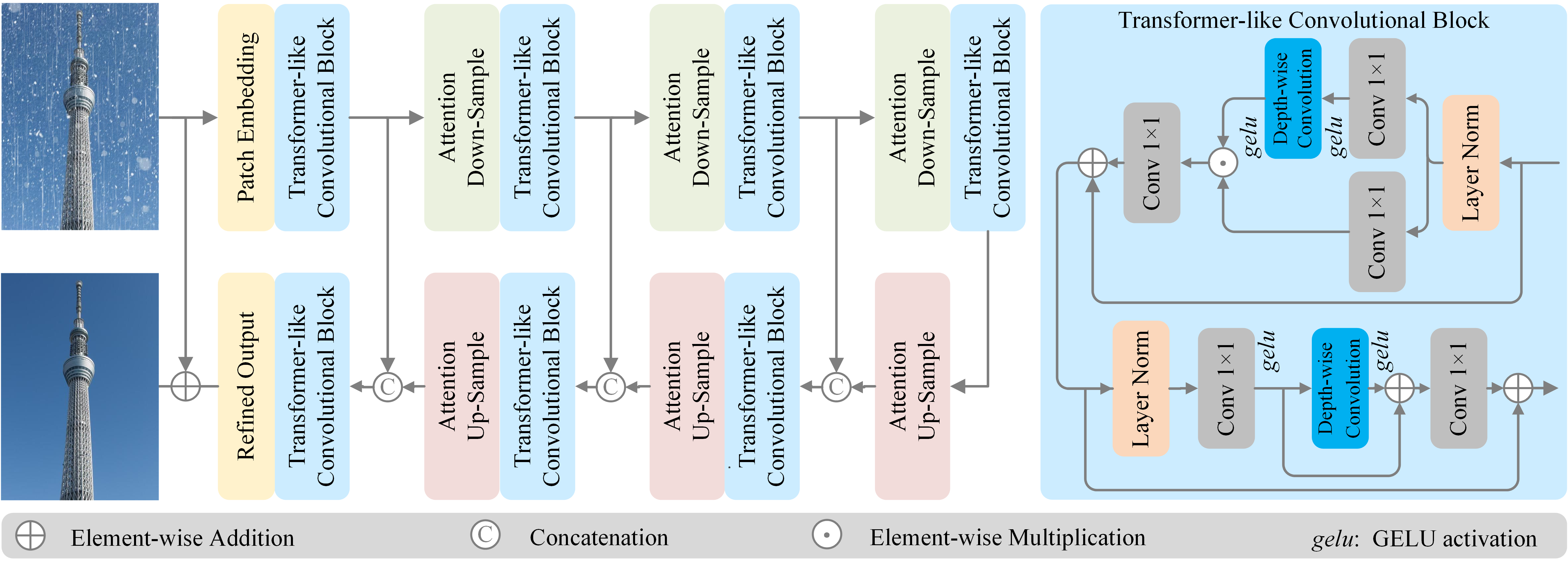

Rain-by-snow weather removal is a specialized task in weather-degraded image restoration that aims to eliminate coexisting rain streaks and snow particles. In this paper, we propose RSFormer, an efficient and effective Transformer that addresses this challenge. We first examine how closely convolutional networks (ConvNets) and vision Transformers (ViTs) match in hierarchical architectures, and find experimentally that they perform comparably at intra-stage feature learning. On this basis, we use a Transformer-like convolution block (TCB) that replaces the computationally expensive self-attention while preserving the attention characteristic of adapting to input content. We also demonstrate that cross-stage progression is critical for performance improvement, and propose a global-local self-attention sampling mechanism (GLASM) that down-/up-samples features while capturing both global and local dependencies. Finally, we synthesize two novel rain-by-snow datasets, RSCityScape and RS100K, to evaluate the proposed RSFormer. Extensive experiments verify that RSFormer achieves the best trade-off between performance and time consumption compared to other restoration methods. For instance, it outperforms Restormer while using 1.53% fewer parameters and 15.6% less inference time. Datasets, source code and pre-trained models are available at https://github.com/chdwyb/RSFormer.
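
The parameter and inference-time figures above are relative reductions measured against a baseline (here, Restormer). As a minimal sketch of how such a percentage is computed, the Python helper below uses placeholder inputs; the function name `relative_reduction` and the sample numbers are illustrative assumptions, not measurements from the paper.

```python
def relative_reduction(baseline: float, candidate: float) -> float:
    """Return the percentage by which `candidate` is smaller than `baseline`."""
    if baseline <= 0:
        raise ValueError("baseline must be positive")
    return (baseline - candidate) / baseline * 100.0


# Hypothetical values for illustration only (not taken from the paper).
example = relative_reduction(baseline=200.0, candidate=150.0)
print(f"{example:.2f}% reduction")
```

The same helper applies to any scalar cost, such as a parameter count or a per-image inference time, provided both values are measured under the same conditions.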
