Vaxformer: Antigenicity-controlled Transformer for Vaccine Design Against SARS-CoV-2

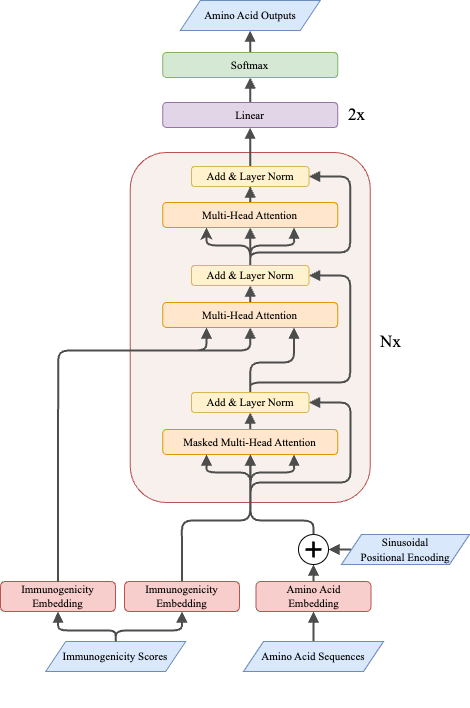

The SARS-CoV-2 pandemic has emphasised the importance of developing a universal vaccine that can protect against current and future variants of the virus. The present study proposes a novel conditional protein language model architecture, called Vaxformer, which is designed to produce natural-looking, antigenicity-controlled SARS-CoV-2 spike proteins. We evaluate the protein sequences generated by Vaxformer using the DDGun protein stability measure, the netMHCpan antigenicity score, and a structure fidelity score computed with AlphaFold to gauge their viability for vaccine development. Our results show that Vaxformer outperforms the existing state-of-the-art Conditional Variational Autoencoder model at generating antigenicity-controlled SARS-CoV-2 spike proteins. These findings suggest promising opportunities for conditional Transformer models to expand our understanding of vaccine design and their role in mitigating global health challenges. The code used in this study is available at https://github.com/aryopg/vaxformer.
