Search Results for author: Hossein Talebi

Found 21 papers, 7 papers with code

Fast Multi-Layer Laplacian Enhancement

no code implementations • 23 Jun 2016 • Hossein Talebi, Peyman Milanfar

A novel, fast and practical way of enhancing images is introduced in this paper.

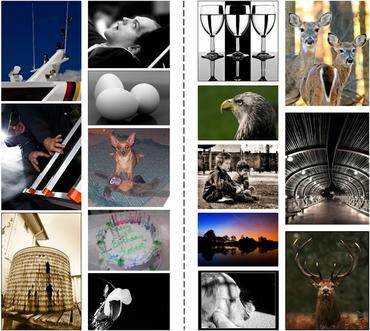

NIMA: Neural Image Assessment

12 code implementations • 15 Sep 2017 • Hossein Talebi, Peyman Milanfar

Automatically learned quality assessment for images has recently become a hot topic due to its usefulness in a wide variety of applications such as evaluating image capture pipelines, storage techniques and sharing media.

Ranked #4 on Aesthetics Quality Assessment on AVA

Learned Perceptual Image Enhancement

no code implementations • 7 Dec 2017 • Hossein Talebi, Peyman Milanfar

Learning a typical image enhancement pipeline involves minimization of a loss function between enhanced and reference images.

Better Compression with Deep Pre-Editing

no code implementations • 1 Feb 2020 • Hossein Talebi, Damien Kelly, Xiyang Luo, Ignacio Garcia Dorado, Feng Yang, Peyman Milanfar, Michael Elad

In this work we aim to break the unholy connection between bit-rate and image quality, and propose a way to circumvent compression artifacts by pre-editing the incoming image and modifying its content to fit the given bits.

Super-Resolving Commercial Satellite Imagery Using Realistic Training Data

no code implementations • 26 Feb 2020 • Xiang Zhu, Hossein Talebi, Xinwei Shi, Feng Yang, Peyman Milanfar

We propose a realistic training data generation model for commercial satellite imagery products, which includes not only the imaging process on satellites but also the post-process on the ground.

The Rate-Distortion-Accuracy Tradeoff: JPEG Case Study

no code implementations • 3 Aug 2020 • Xiyang Luo, Hossein Talebi, Feng Yang, Michael Elad, Peyman Milanfar

As a case study, we focus on the design of the quantization tables in the JPEG compression standard.

Rank-smoothed Pairwise Learning In Perceptual Quality Assessment

no code implementations • 21 Nov 2020 • Hossein Talebi, Ehsan Amid, Peyman Milanfar, Manfred K. Warmuth

Training a model on these pairwise preferences is a common deep learning approach.

Projected Distribution Loss for Image Enhancement

2 code implementations • 16 Dec 2020 • Mauricio Delbracio, Hossein Talebi, Peyman Milanfar

More explicitly, we show that in imaging applications such as denoising, super-resolution, demosaicing, deblurring and JPEG artifact removal, the proposed learning loss outperforms the current state-of-the-art on reference-based perceptual losses.

Deep Perceptual Image Quality Assessment for Compression

no code implementations • 1 Mar 2021 • Juan Carlos Mier, Eddie Huang, Hossein Talebi, Feng Yang, Peyman Milanfar

In this paper we propose the largest image compression quality dataset to date with human perceptual preferences, enabling the use of deep learning, and we develop a full reference perceptual quality assessment metric for lossy image compression that outperforms the existing state-of-the-art methods.

Learning to Resize Images for Computer Vision Tasks

4 code implementations • ICCV 2021 • Hossein Talebi, Peyman Milanfar

Moreover, we show that the proposed resizer can also be useful for fine-tuning the classification baselines for other vision tasks.

Exponentiated Gradient Reweighting for Robust Training Under Label Noise and Beyond

no code implementations • 3 Apr 2021 • Negin Majidi, Ehsan Amid, Hossein Talebi, Manfred K. Warmuth

Many learning tasks in machine learning can be viewed as taking a gradient step towards minimizing the average loss of a batch of examples in each training iteration.

Rich Features for Perceptual Quality Assessment of UGC Videos

no code implementations • CVPR 2021 • Yilin Wang, Junjie Ke, Hossein Talebi, Joong Gon Yim, Neil Birkbeck, Balu Adsumilli, Peyman Milanfar, Feng Yang

Besides the subjective ratings and content labels of the dataset, we also propose a DNN-based framework to thoroughly analyze importance of content, technical quality, and compression level in perceptual quality.

Deblurring via Stochastic Refinement

no code implementations • CVPR 2022 • Jay Whang, Mauricio Delbracio, Hossein Talebi, Chitwan Saharia, Alexandros G. Dimakis, Peyman Milanfar

Unlike existing techniques, we train a stochastic sampler that refines the output of a deterministic predictor and is capable of producing a diverse set of plausible reconstructions for a given input.

MAXIM: Multi-Axis MLP for Image Processing

1 code implementation • CVPR 2022 • Zhengzhong Tu, Hossein Talebi, Han Zhang, Feng Yang, Peyman Milanfar, Alan Bovik, Yinxiao Li

In this work, we present a multi-axis MLP based architecture called MAXIM, that can serve as an efficient and flexible general-purpose vision backbone for image processing tasks.

Ranked #1 on Deblurring on HIDE (trained on GOPRO)

MaxViT: Multi-Axis Vision Transformer

14 code implementations • 4 Apr 2022 • Zhengzhong Tu, Hossein Talebi, Han Zhang, Feng Yang, Peyman Milanfar, Alan Bovik, Yinxiao Li

We also show that our proposed model expresses strong generative modeling capability on ImageNet, demonstrating the superior potential of MaxViT blocks as a universal vision module.

Ranked #1 on Object Detection on COCO 2017

Soft Diffusion: Score Matching for General Corruptions

no code implementations • 12 Sep 2022 • Giannis Daras, Mauricio Delbracio, Hossein Talebi, Alexandros G. Dimakis, Peyman Milanfar

To reverse these general diffusions, we propose a new objective called Soft Score Matching that provably learns the score function for any linear corruption process and yields state of the art results for CelebA.

Ranked #7 on Image Generation on CelebA 64x64

Multiscale Structure Guided Diffusion for Image Deblurring

no code implementations • ICCV 2023 • Mengwei Ren, Mauricio Delbracio, Hossein Talebi, Guido Gerig, Peyman Milanfar

We evaluate a single-dataset trained model on diverse datasets and demonstrate more robust deblurring results with fewer artifacts on unseen data.

MULLER: Multilayer Laplacian Resizer for Vision

1 code implementation • ICCV 2023 • Zhengzhong Tu, Peyman Milanfar, Hossein Talebi

Specifically, we select a state-of-the-art vision Transformer, MaxViT, as the baseline, and show that, if trained with MULLER, MaxViT gains up to 0.6% top-1 accuracy, and meanwhile enjoys 36% inference cost saving to achieve similar top-1 accuracy on ImageNet-1k, as compared to the standard training scheme.

CoDi: Conditional Diffusion Distillation for Higher-Fidelity and Faster Image Generation

1 code implementation • 2 Oct 2023 • Kangfu Mei, Mauricio Delbracio, Hossein Talebi, Zhengzhong Tu, Vishal M. Patel, Peyman Milanfar

Our conditional-task learning and distillation approach outperforms previous distillation methods, achieving a new state-of-the-art in producing high-quality images with very few steps (e.g., 1-4) across multiple tasks, including super-resolution, text-guided image editing, and depth-to-image generation.

TIP: Text-Driven Image Processing with Semantic and Restoration Instructions

no code implementations • 18 Dec 2023 • Chenyang Qi, Zhengzhong Tu, Keren Ye, Mauricio Delbracio, Peyman Milanfar, Qifeng Chen, Hossein Talebi

Text-driven diffusion models have become increasingly popular for various image editing tasks, including inpainting, stylization, and object replacement.

Bigger is not Always Better: Scaling Properties of Latent Diffusion Models

no code implementations • 1 Apr 2024 • Kangfu Mei, Zhengzhong Tu, Mauricio Delbracio, Hossein Talebi, Vishal M. Patel, Peyman Milanfar

We study the scaling properties of latent diffusion models (LDMs) with an emphasis on their sampling efficiency.