Image-to-Image Translation

491 papers with code • 37 benchmarks • 29 datasets

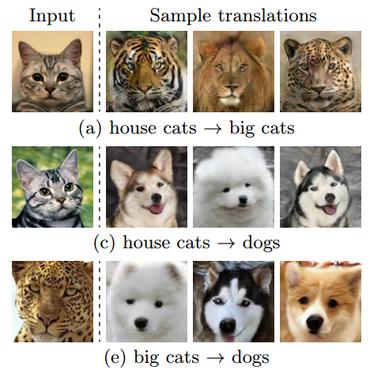

Image-to-Image Translation is a computer vision task in which a model learns a mapping from an input image to an output image. Typical applications include style transfer, data augmentation, and image restoration.

(Image credit: Unpaired Image-to-Image Translation using Cycle-Consistent Adversarial Networks)
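To make "learning a mapping between an input image and an output image" concrete, here is a minimal toy sketch in plain Python. It assumes paired grayscale images stored as nested lists and fits a single linear intensity mapping `y = a * x + b` by least squares. The function names `fit_intensity_mapping` and `translate` are hypothetical, and the linear model is a deliberately simple stand-in, far simpler than the adversarial or diffusion-based models in the papers listed on this page.

```python
# Toy paired image-to-image translation (illustrative assumption, not a real method
# from any listed paper): learn y ≈ a * x + b from (input, target) image pairs.

def fit_intensity_mapping(inputs, targets):
    """Closed-form least-squares fit of y = a * x + b over all paired pixels."""
    xs = [p for img in inputs for row in img for p in row]
    ys = [p for img in targets for row in img for p in row]
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    var_x = sum((x - mean_x) ** 2 for x in xs)
    cov_xy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    a = cov_xy / var_x if var_x else 0.0  # fall back to a constant mapping
    b = mean_y - a * mean_x
    return a, b


def translate(image, a, b):
    """Apply the learned mapping to every pixel of a new image."""
    return [[a * p + b for p in row] for row in image]


# Synthetic paired data: each target is its input under y = 0.5 * x + 0.1.
source_images = [[[0.0, 0.2], [0.4, 0.6]], [[0.8, 1.0], [0.1, 0.3]]]
target_images = [[[0.5 * p + 0.1 for p in row] for row in img]
                 for img in source_images]

a, b = fit_intensity_mapping(source_images, target_images)
translated = translate([[0.2, 0.9]], a, b)
```

On this synthetic data the fit should recover the generating coefficients up to floating-point error. Real translation models replace the two scalars with a neural network and the squared error with richer objectives, but the structure (fit on example pairs, then apply to unseen inputs) is the same for the paired setting.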

Libraries

Use these libraries to find Image-to-Image Translation models and implementations

Subtasks

Latest papers

Radio-astronomical Image Reconstruction with Conditional Denoising Diffusion Model

Current techniques, such as CLEAN and PyBDSF, often fail to detect faint sources, highlighting the need for more accurate methods.

BlenDA: Domain Adaptive Object Detection through diffusion-based blending

Unsupervised domain adaptation (UDA) aims to transfer a model learned using labeled data from the source domain to unlabeled data in the target domain.

Contrastive Learning-Based Framework for Sim-to-Real Mapping of Lidar Point Clouds in Autonomous Driving Systems

Motivated by this potential, this paper focuses on sim-to-real mapping of Lidar point clouds, a widely used perception sensor in automated driving systems.

PhenDiff: Revealing Invisible Phenotypes with Conditional Diffusion Models

Furthermore, inverting a real image into the latent space of a trained GAN is not robust, which prevents flexible editing of real images.

Open-DDVM: A Reproduction and Extension of Diffusion Model for Optical Flow Estimation

Recently, Google proposed DDVM, which demonstrated for the first time that a general diffusion model for image-to-image translation works impressively well on optical flow estimation without any task-specific designs such as those in RAFT.

STEREOFOG -- Computational DeFogging via Image-to-Image Translation on a real-world Dataset

Image-to-Image translation (I2I) is a subtype of Machine Learning (ML) with tremendous potential in applications where two image domains exist and translation between them is needed, such as the removal of fog.

Towards Unsupervised Representation Learning: Learning, Evaluating and Transferring Visual Representations

Unsupervised representation learning aims at finding methods that learn representations from data without annotation-based signals.

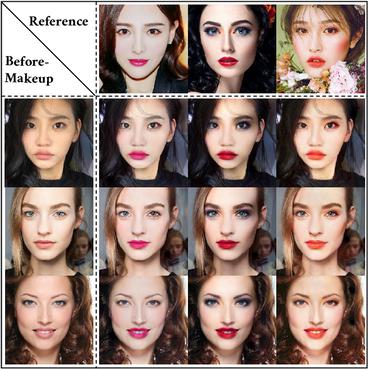

MicroGlam: Microscopic Skin Image Dataset with Cosmetics

We repeated the process for the same skin patch under three cosmetic products.

Fine-grained Appearance Transfer with Diffusion Models

A pivotal aspect of our approach is the strategic use of the predicted $x_0$ space by diffusion models within the latent space of diffusion processes.

A deep learning approach for marine snow synthesis and removal

Marine snow, the floating particles in underwater images, severely degrades the visibility and performance of human and machine vision systems.

Datasets

Cityscapes

KITTI

ADE20K

CelebA-HQ

SYNTHIA

GTA5

DeepFashion

Perceptual Similarity

AFHQ

COCO-Stuff