Search Results for author: Gernot Riegler

Found 12 papers, 6 papers with code

A Deep Primal-Dual Network for Guided Depth Super-Resolution

no code implementations • 28 Jul 2016 • Gernot Riegler, David Ferstl, Matthias Rüther, Horst Bischof

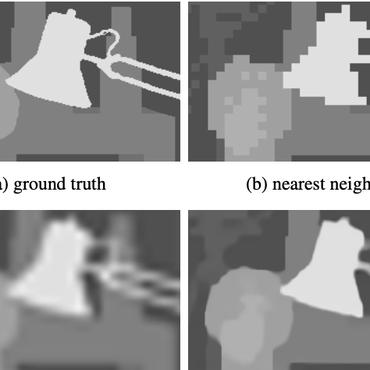

In this paper we present a novel method to increase the spatial resolution of depth images.

ATGV-Net: Accurate Depth Super-Resolution

no code implementations • 27 Jul 2016 • Gernot Riegler, Matthias Rüther, Horst Bischof

We demonstrate that it is feasible to train our method solely on synthetic data that we generate in large quantities for this task.

Filament and Flare Detection in Hα image sequences

no code implementations • 26 Apr 2013 • Gernot Riegler, Thomas Pock, Werner Pötzi, Astrid Veronig

The information produced by our method can be used for near real-time alerts and the statistical analysis of existing data by solar physicists.

Conditioned Regression Models for Non-Blind Single Image Super-Resolution

no code implementations • ICCV 2015 • Gernot Riegler, Samuel Schulter, Matthias Rüther, Horst Bischof

However, this setting is not realistic for practical applications, because the blur is typically different for each test image.

Connecting the Dots: Learning Representations for Active Monocular Depth Estimation

no code implementations • CVPR 2019 • Gernot Riegler, Yiyi Liao, Simon Donné, Vladlen Koltun, Andreas Geiger

We propose a technique for depth estimation with a monocular structured-light camera, i.e., a calibrated stereo set-up with one camera and one laser projector.

αSurf: Implicit Surface Reconstruction for Semi-Transparent and Thin Objects with Decoupled Geometry and Opacity

no code implementations • 17 Mar 2023 • Tianhao Wu, Hanxue Liang, Fangcheng Zhong, Gernot Riegler, Shimon Vainer, Cengiz Oztireli

While neural radiance field (NeRF) based methods can model semi-transparency and achieve photo-realistic quality in synthesized novel views, their volumetric geometry representation tightly couples geometry and opacity, and therefore cannot be easily converted into surfaces without introducing artifacts.

Efficiently Creating 3D Training Data for Fine Hand Pose Estimation

1 code implementation • CVPR 2016 • Markus Oberweger, Gernot Riegler, Paul Wohlhart, Vincent Lepetit

While many recent hand pose estimation methods critically rely on a training set of labelled frames, the creation of such a dataset is a challenging task that has been overlooked so far.

OctNetFusion: Learning Depth Fusion from Data

1 code implementation • 4 Apr 2017 • Gernot Riegler, Ali Osman Ulusoy, Horst Bischof, Andreas Geiger

In this paper, we present a learning-based approach to depth fusion, i.e., dense 3D reconstruction from multiple depth images.

Stable View Synthesis

3 code implementations • CVPR 2021 • Gernot Riegler, Vladlen Koltun

The core of SVS is view-dependent on-surface feature aggregation, in which directional feature vectors at each 3D point are processed to produce a new feature vector for a ray that maps this point into the new target view.

Free View Synthesis

1 code implementation • ECCV 2020 • Gernot Riegler, Vladlen Koltun

We present a method for novel view synthesis from input images that are freely distributed around a scene.

OctNet: Learning Deep 3D Representations at High Resolutions

1 code implementation • CVPR 2017 • Gernot Riegler, Ali Osman Ulusoy, Andreas Geiger

We present OctNet, a representation for deep learning with sparse 3D data.

NeRF++: Analyzing and Improving Neural Radiance Fields

5 code implementations • 15 Oct 2020 • Kai Zhang, Gernot Riegler, Noah Snavely, Vladlen Koltun

Neural Radiance Fields (NeRF) achieve impressive view synthesis results for a variety of capture settings, including 360 capture of bounded scenes and forward-facing capture of bounded and unbounded scenes.