EViT: An Eagle Vision Transformer with Bi-Fovea Self-Attention

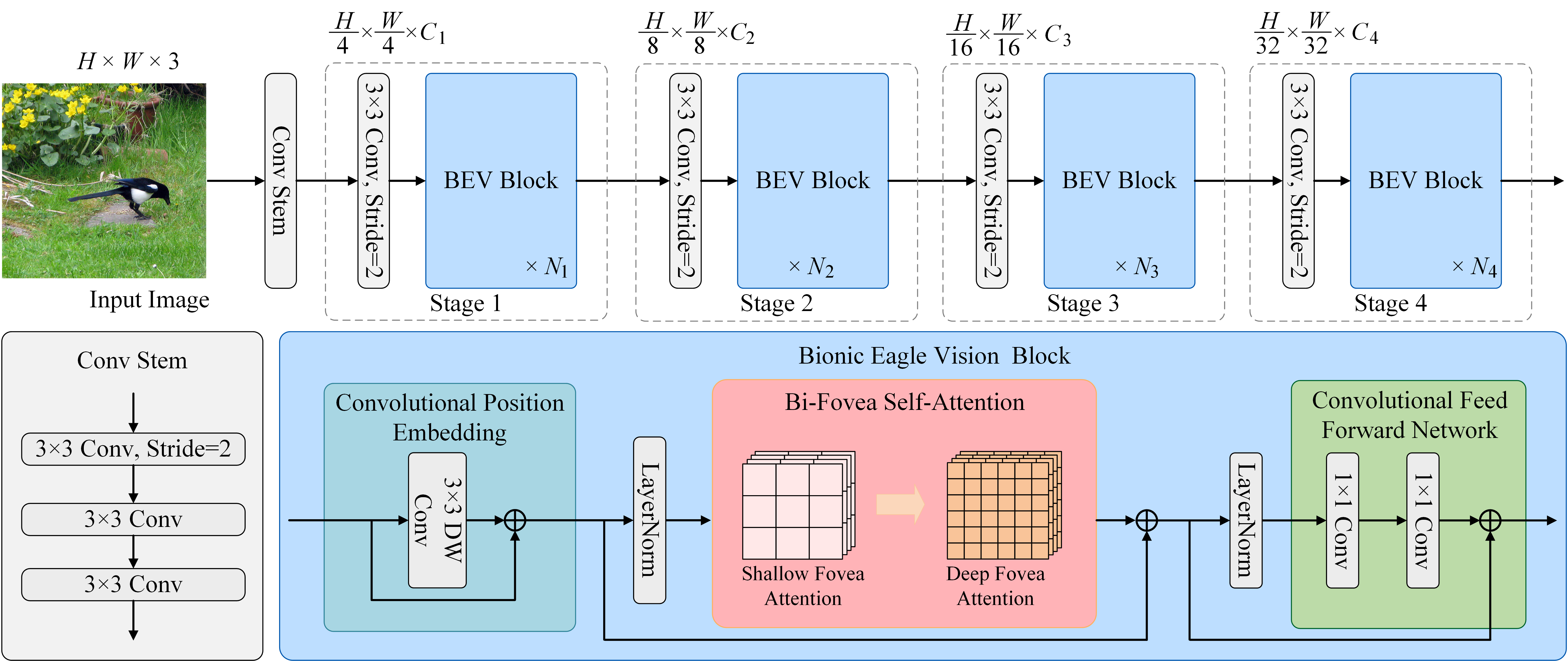

Thanks to the advancement of deep learning technology, vision transformers have demonstrated competitive performance in various computer vision tasks. Unfortunately, vision transformers still face challenges such as high computational complexity and the absence of desirable inductive bias. To alleviate these issues, we propose a novel Bi-Fovea Self-Attention (BFSA) inspired by the physiological structure and visual properties of eagle eyes. BFSA simulates the shallow and deep fovea of eagle vision, prompting the network to learn feature representations of targets from coarse to fine. Additionally, we design a Bionic Eagle Vision (BEV) block based on BFSA. It combines the advantages of convolution and introduces a novel Bi-Fovea Feedforward Network (BFFN) to mimic the way the biological visual cortex processes information hierarchically and in parallel. Furthermore, we develop a unified and efficient pyramid backbone network family called Eagle Vision Transformers (EViTs) by stacking BEV blocks. Experimental results show that EViTs exhibit highly competitive performance in various computer vision tasks such as image classification, object detection and semantic segmentation. In particular, EViTs show significant advantages over their counterparts in both performance and computational efficiency. Code is available at https://github.com/nkusyl/EViT.
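The abstract does not specify how BFSA is computed, so the following is only a rough illustration of the general coarse-to-fine idea, not the authors' method. It assumes a generic two-stage scheme: tokens first attend to a pooled (coarse) summary of the sequence, then attend to each other at full resolution. The function names, the `pool` parameter, the mean pooling, and the absence of learned projections are all hypothetical simplifications; see the linked repository for the actual implementation.

```python
import numpy as np

def softmax(x, axis=-1):
    # Numerically stable softmax along the given axis.
    x = x - x.max(axis=axis, keepdims=True)
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)

def attention(q, k, v):
    # Plain scaled dot-product attention (no learned projections).
    scores = q @ k.T / np.sqrt(q.shape[-1])
    return softmax(scores) @ v

def coarse_to_fine_attention(tokens, pool=4):
    # Hypothetical two-stage attention, NOT the BFSA from the paper.
    n, d = tokens.shape
    assert n % pool == 0, "token count must be divisible by pool"
    # Coarse stage: average groups of `pool` tokens, then let every
    # token attend to the n // pool pooled summaries.
    coarse = tokens.reshape(n // pool, pool, d).mean(axis=1)
    x = tokens + attention(tokens, coarse, coarse)
    # Fine stage: full-resolution self-attention over the refined tokens.
    x = x + attention(x, x, x)
    return x

rng = np.random.default_rng(0)
tokens = rng.standard_normal((16, 8))  # 16 tokens, 8 channels
out = coarse_to_fine_attention(tokens)
print(out.shape)  # (16, 8)
```

In this sketch the coarse stage costs O(n · n/pool) rather than O(n²), which hints at how a pooled attention stage can reduce computation; whether EViT obtains its efficiency this way is not stated in the abstract.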

CIFAR-10

MS COCO

CIFAR-100

Oxford 102 Flower

ADE20K
