Search Results for author: Nian Liu

Found 48 papers, 31 papers with code

FiDeLiS: Faithful Reasoning in Large Language Model for Knowledge Graph Question Answering

no code implementations • 22 May 2024 • Yuan Sui, Yufei He, Nian Liu, Xiaoxin He, Kun Wang, Bryan Hooi

Furthermore, we propose to leverage deductive reasoning capabilities of LLMs as a better criterion to automatically guide the reasoning process in a stepwise and generalizable manner.

Unsupervised Pre-training with Language-Vision Prompts for Low-Data Instance Segmentation

1 code implementation • 22 May 2024 • Dingwen Zhang, Hao Li, Diqi He, Nian Liu, Lechao Cheng, Jingdong Wang, Junwei Han

Experimental evaluations conducted on MS COCO, Cityscapes, and CTW1500 datasets indicate that the QEIS models' performance can be significantly improved when pre-trained with our method.

Advancing Graph Convolutional Networks via General Spectral Wavelets

1 code implementation • 22 May 2024 • Nian Liu, Xiaoxin He, Thomas Laurent, Francesco Di Giovanni, Michael M. Bronstein, Xavier Bresson

Spectral graph convolution, an important tool of data filtering on graphs, relies on two essential decisions: selecting spectral bases for signal transformation and parameterizing the kernel for frequency analysis.

Large Language Model-aided Edge Learning in Distribution System State Estimation

no code implementations • 11 May 2024 • Renyou Xie, Xin Yin, Chaojie Li, Nian Liu, Bo Zhao, ZhaoYang Dong

Distribution system state estimation (DSSE) plays a crucial role in the real-time monitoring, control, and operation of distribution networks.

Learning Social Graph for Inactive User Recommendation

1 code implementation • 8 May 2024 • Nian Liu, Shen Fan, Ting Bai, Peng Wang, Mingwei Sun, Yanhu Mo, Xiaoxiao Xu, Hong Liu, Chuan Shi

In this paper, we propose a novel social recommendation method called LSIR (Learning Social Graph for Inactive User Recommendation) that learns an optimal social graph structure for social recommendation, especially for inactive users.

AnySkill: Learning Open-Vocabulary Physical Skill for Interactive Agents

no code implementations • 19 Mar 2024 • Jieming Cui, Tengyu Liu, Nian Liu, Yaodong Yang, Yixin Zhu, Siyuan Huang

Traditional approaches in physics-based motion generation, centered around imitation learning and reward shaping, often struggle to adapt to new scenarios.

Learning Invariant Representations of Graph Neural Networks via Cluster Generalization

1 code implementation • NeurIPS 2023 • Donglin Xia, Xiao Wang, Nian Liu, Chuan Shi

To address this challenge, we propose the Cluster Information Transfer (CIT) mechanism (Code available at https://github.com/BUPT-GAMMA/CITGNN), which can learn invariant representations for GNNs, thereby improving their generalization ability to various and unknown test graphs with structure shift.

TransGOP: Transformer-Based Gaze Object Prediction

1 code implementation • 21 Feb 2024 • Binglu Wang, Chenxi Guo, Yang Jin, Haisheng Xia, Nian Liu

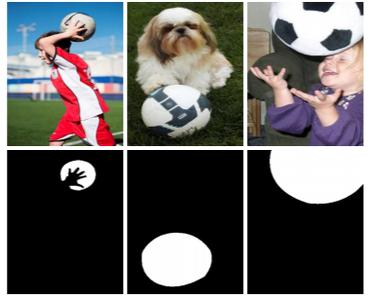

Gaze object prediction aims to predict the location and category of the object that is watched by a human.

V2VSSC: A 3D Semantic Scene Completion Benchmark for Perception with Vehicle to Vehicle Communication

no code implementations • 7 Feb 2024 • Yuanfang Zhang, Junxuan Li, Kaiqing Luo, Yiying Yang, Jiayi Han, Nian Liu, Denghui Qin, Peng Han, Chengpei Xu

Extensive experiments demonstrate that by leveraging V2V communication, the SSC performance can be increased by 8.3% on geometric metric IoU and 6.0% mIOU.

CivRealm: A Learning and Reasoning Odyssey in Civilization for Decision-Making Agents

1 code implementation • 19 Jan 2024 • Siyuan Qi, Shuo Chen, Yexin Li, Xiangyu Kong, Junqi Wang, Bangcheng Yang, Pring Wong, Yifan Zhong, Xiaoyuan Zhang, Zhaowei Zhang, Nian Liu, Wei Wang, Yaodong Yang, Song-Chun Zhu

Within CivRealm, we provide interfaces for two typical agent types: tensor-based agents that focus on learning, and language-based agents that emphasize reasoning.

VSCode: General Visual Salient and Camouflaged Object Detection with 2D Prompt Learning

1 code implementation • 25 Nov 2023 • Ziyang Luo, Nian Liu, Wangbo Zhao, Xuguang Yang, Dingwen Zhang, Deng-Ping Fan, Fahad Khan, Junwei Han

Salient object detection (SOD) and camouflaged object detection (COD) are related yet distinct binary mapping tasks.

GP-NeRF: Generalized Perception NeRF for Context-Aware 3D Scene Understanding

no code implementations • 20 Nov 2023 • Hao Li, Dingwen Zhang, Yalun Dai, Nian Liu, Lechao Cheng, Jingfeng Li, Jingdong Wang, Junwei Han

Applying NeRF to downstream perception tasks for scene understanding and representation is becoming increasingly popular.

VST++: Efficient and Stronger Visual Saliency Transformer

no code implementations • 18 Oct 2023 • Nian Liu, Ziyang Luo, Ni Zhang, Junwei Han

Our previous work, the Visual Saliency Transformer (VST), addressed this constraint from a transformer-based sequence-to-sequence perspective, to unify RGB and RGB-D SOD.

Provable Training for Graph Contrastive Learning

1 code implementation • NeurIPS 2023 • Yue Yu, Xiao Wang, Mengmei Zhang, Nian Liu, Chuan Shi

To this end, we propose the PrOvable Training (POT) for GCL, which regularizes the training of GCL to encode node embeddings that follow the GCL principle better.

Multi-grained Temporal Prototype Learning for Few-shot Video Object Segmentation

1 code implementation • ICCV 2023 • Nian Liu, Kepan Nan, Wangbo Zhao, Yuanwei Liu, Xiwen Yao, Salman Khan, Hisham Cholakkal, Rao Muhammad Anwer, Junwei Han, Fahad Shahbaz Khan

We decompose the query video information into a clip prototype and a memory prototype for capturing local and long-term internal temporal guidance, respectively.

Towards Instance-adaptive Inference for Federated Learning

1 code implementation • ICCV 2023 • Chun-Mei Feng, Kai Yu, Nian Liu, Xinxing Xu, Salman Khan, WangMeng Zuo

However, the performance of the global model is often hampered by non-i.i.d.

CalibNet: Dual-branch Cross-modal Calibration for RGB-D Salient Instance Segmentation

1 code implementation • 16 Jul 2023 • Jialun Pei, Tao Jiang, He Tang, Nian Liu, Yueming Jin, Deng-Ping Fan, Pheng-Ann Heng

We propose a novel approach for RGB-D salient instance segmentation using a dual-branch cross-modal feature calibration architecture called CalibNet.

Heterogeneous Value Alignment Evaluation for Large Language Models

2 code implementations • 26 May 2023 • Zhaowei Zhang, Ceyao Zhang, Nian Liu, Siyuan Qi, Ziqi Rong, Song-Chun Zhu, Shuguang Cui, Yaodong Yang

We conduct evaluations with a new auto-metric, value rationality, to represent the ability of LLMs to align with specific values.

Discriminative Co-Saliency and Background Mining Transformer for Co-Salient Object Detection

1 code implementation • CVPR 2023 • Long Li, Junwei Han, Ni Zhang, Nian Liu, Salman Khan, Hisham Cholakkal, Rao Muhammad Anwer, Fahad Shahbaz Khan

Then, we use two types of pre-defined tokens to mine co-saliency and background information via our proposed contrast-induced pixel-to-token correlation and co-saliency token-to-token correlation modules.

Ranked #1 on Co-Salient Object Detection on CoSal2015

Hierarchical Contrastive Learning Enhanced Heterogeneous Graph Neural Network

no code implementations • 24 Apr 2023 • Nian Liu, Xiao Wang, Hui Han, Chuan Shi

Specifically, two views of a HIN (network schema and meta-path views) are proposed to learn node embeddings, so as to capture both local and high-order structures simultaneously.

A Latent Fingerprint in the Wild Database

no code implementations • 3 Apr 2023 • Xinwei Liu, Kiran Raja, Renfang Wang, Hong Qiu, Hucheng Wu, Dechao Sun, Qiguang Zheng, Nian Liu, Xiaoxia Wang, Gehang Huang, Raghavendra Ramachandra, Christoph Busch

Further, existing databases for latent fingerprint recognition do not have a large number of unique subjects/fingerprint instances or do not provide ground truth/reference fingerprint images to conduct a cross-comparison against the latent.

Boosting Low-Data Instance Segmentation by Unsupervised Pre-training with Saliency Prompt

no code implementations • CVPR 2023 • Hao Li, Dingwen Zhang, Nian Liu, Lechao Cheng, Yalun Dai, Chao Zhang, Xinggang Wang, Junwei Han

Inspired by the recent success of the Prompting technique, we introduce a new pre-training method that boosts QEIS models by giving Saliency Prompt for queries/kernels.

A Deep Learning Approach to Generating Photospheric Vector Magnetograms of Solar Active Regions for SOHO/MDI Using SDO/HMI and BBSO Data

no code implementations • 4 Nov 2022 • Haodi Jiang, Qin Li, Zhihang Hu, Nian Liu, Yasser Abduallah, Ju Jing, Genwei Zhang, Yan Xu, Wynne Hsu, Jason T. L. Wang, Haimin Wang

We propose a new deep learning method, named MagNet, to learn from combined LOS magnetograms, Bx and By taken by SDO/HMI along with H-alpha observations collected by the Big Bear Solar Observatory (BBSO), and to generate vector components Bx' and By', which would form vector magnetograms with observed LOS data.

Intermediate Prototype Mining Transformer for Few-Shot Semantic Segmentation

1 code implementation • 13 Oct 2022 • Yuanwei Liu, Nian Liu, Xiwen Yao, Junwei Han

To solve this problem, we are the first to introduce an intermediate prototype for mining both deterministic category information from the support and adaptive category knowledge from the query.

Ranked #29 on Few-Shot Semantic Segmentation on PASCAL-5i (1-Shot)

Revisiting Graph Contrastive Learning from the Perspective of Graph Spectrum

1 code implementation • 5 Oct 2022 • Nian Liu, Xiao Wang, Deyu Bo, Chuan Shi, Jian Pei

Then we theoretically prove, via the contrastive invariance theorem, that GCL is able to learn the invariance information. Together with our GAME rule, we uncover for the first time that the representations learned by GCL essentially encode the low-frequency information, which explains why GCL works.

Structured Attention Composition for Temporal Action Localization

2 code implementations • 20 May 2022 • Le Yang, Junwei Han, Tao Zhao, Nian Liu, Dingwen Zhang

To tackle this issue, we make an early effort to study temporal action localization from the perspective of multi-modality feature learning, based on the observation that different actions exhibit specific preferences to appearance or motion modality.

Learning Non-target Knowledge for Few-shot Semantic Segmentation

1 code implementation • CVPR 2022 • Yuanwei Liu, Nian Liu, Qinglong Cao, Xiwen Yao, Junwei Han, Ling Shao

Then, a BG Eliminating Module and a DO Eliminating Module are proposed to successively filter out the BG and DO information from the query feature, based on which we can obtain a BG and DO-free target object segmentation result.

Debiased Graph Neural Networks with Agnostic Label Selection Bias

no code implementations • 19 Jan 2022 • Shaohua Fan, Xiao Wang, Chuan Shi, Kun Kuang, Nian Liu, Bai Wang

Then to remove the bias in GNN estimation, we propose a novel Debiased Graph Neural Networks (DGNN) with a differentiated decorrelation regularizer.

Compact Graph Structure Learning via Mutual Information Compression

2 code implementations • 14 Jan 2022 • Nian Liu, Xiao Wang, Lingfei Wu, Yu Chen, Xiaojie Guo, Chuan Shi

Furthermore, we maintain the performance of estimated views and the final view and reduce the mutual information of every two views.

Light Field Saliency Detection with Dual Local Graph Learning and Reciprocative Guidance

1 code implementation • 2 Oct 2021 • Nian Liu, Wangbo Zhao, Dingwen Zhang, Junwei Han, Ling Shao

On the other hand, instead of processing the two kinds of data separately, we build a novel dual graph model to guide the focal stack fusion process using all-focus patterns.

Summarize and Search: Learning Consensus-aware Dynamic Convolution for Co-Saliency Detection

1 code implementation • ICCV 2021 • Ni Zhang, Junwei Han, Nian Liu, Ling Shao

In this paper, we propose a novel consensus-aware dynamic convolution model to explicitly and effectively perform the "summarize and search" process.

Ranked #3 on Co-Salient Object Detection on CoSal2015

Instance-Level Relative Saliency Ranking with Graph Reasoning

no code implementations • 8 Jul 2021 • Nian Liu, Long Li, Wangbo Zhao, Junwei Han, Ling Shao

Conventional salient object detection models cannot differentiate the importance of different salient objects.

Context-aware Cross-level Fusion Network for Camouflaged Object Detection

2 code implementations • 26 May 2021 • Yujia Sun, Geng Chen, Tao Zhou, Yi Zhang, Nian Liu

Camouflaged object detection (COD) is a challenging task due to the low boundary contrast between the object and its surroundings.

Self-supervised Heterogeneous Graph Neural Network with Co-contrastive Learning

3 code implementations • 19 May 2021 • Xiao Wang, Nian Liu, Hui Han, Chuan Shi

Then the cross-view contrastive learning, as well as a view mask mechanism, is proposed, which is able to extract the positive and negative embeddings from two views.

Visual Saliency Transformer

1 code implementation • ICCV 2021 • Nian Liu, Ni Zhang, Kaiyuan Wan, Ling Shao, Junwei Han

We also develop a token-based multi-task decoder to simultaneously perform saliency and boundary detection by introducing task-related tokens and a novel patch-task-attention mechanism.

Ranked #1 on RGB-D Salient Object Detection on NJUD

Lorentzian Graph Convolutional Networks

no code implementations • 15 Apr 2021 • Yiding Zhang, Xiao Wang, Chuan Shi, Nian Liu, Guojie Song

We also find that the performance of some hyperbolic GCNs can be improved by simply replacing the graph operations with those we defined in this paper.

Weakly Supervised Video Salient Object Detection

1 code implementation • CVPR 2021 • Wangbo Zhao, Jing Zhang, Long Li, Nick Barnes, Nian Liu, Junwei Han

Significant performance improvement has been achieved for fully-supervised video salient object detection with the pixel-wise labeled training datasets, which are time-consuming and expensive to obtain.

Salient Object Detection via Integrity Learning

3 code implementations • 19 Jan 2021 • Mingchen Zhuge, Deng-Ping Fan, Nian Liu, Dingwen Zhang, Dong Xu, Ling Shao

We define the concept of integrity at both a micro and macro level.

Light Field Saliency Detection With Dual Local Graph Learning and Reciprocative Guidance

no code implementations • ICCV 2021 • Nian Liu, Wangbo Zhao, Dingwen Zhang, Junwei Han, Ling Shao

In this paper, we model the information fusion within focal stack via graph networks.

Learning Selective Mutual Attention and Contrast for RGB-D Saliency Detection

1 code implementation • 12 Oct 2020 • Nian Liu, Ni Zhang, Ling Shao, Junwei Han

Early fusion and result fusion schemes fuse RGB and depth information at the input and output stages, respectively, and hence incur the problem of distribution gap or information loss.

Learning Selective Self-Mutual Attention for RGB-D Saliency Detection

1 code implementation • CVPR 2020 • Nian Liu, Ni Zhang, Junwei Han

Considering the reliability of the other modality's attention, we further propose a selection attention to weight the newly added attention term.

Ranked #19 on RGB-D Salient Object Detection on NJU2K

Online Multi-Object Tracking with Dual Matching Attention Networks

1 code implementation • ECCV 2018 • Ji Zhu, Hua Yang, Nian Liu, Minyoung Kim, Wenjun Zhang, Ming-Hsuan Yang

In this paper, we propose an online Multi-Object Tracking (MOT) approach which integrates the merits of single object tracking and data association methods in a unified framework to handle noisy detections and frequent interactions between targets.

Ranked #5 on Online Multi-Object Tracking on MOT16

PiCANet: Pixel-wise Contextual Attention Learning for Accurate Saliency Detection

2 code implementations • 15 Dec 2018 • Nian Liu, Junwei Han, Ming-Hsuan Yang

We propose three specific formulations of the PiCANet by embedding the pixel-wise contextual attention mechanism into the pooling and convolution operations, attending to global or local contexts.

PiCANet: Learning Pixel-wise Contextual Attention for Saliency Detection

2 code implementations • CVPR 2018 • Nian Liu, Junwei Han, Ming-Hsuan Yang

We formulate the proposed PiCANet in both global and local forms to attend to global and local contexts, respectively.

Ranked #7 on RGB Salient Object Detection on SOC

A Deep Spatial Contextual Long-term Recurrent Convolutional Network for Saliency Detection

2 code implementations • 6 Oct 2016 • Nian Liu, Junwei Han

Furthermore, the proposed DSCLSTM model can significantly boost the saliency detection performance by incorporating both global spatial interconnections and scene context modulation, which may uncover novel inspirations for studies on them in computational saliency models.

DHSNet: Deep Hierarchical Saliency Network for Salient Object Detection

no code implementations • CVPR 2016 • Nian Liu, Junwei Han

Then a novel hierarchical recurrent convolutional neural network (HRCNN) is adopted to further hierarchically and progressively refine the details of saliency maps step by step via integrating local context information.

Ranked #17 on RGB Salient Object Detection on DUTS-TE (max F-measure metric)

Predicting Eye Fixations Using Convolutional Neural Networks

no code implementations • CVPR 2015 • Nian Liu, Junwei Han, Dingwen Zhang, Shifeng Wen, Tianming Liu

It is believed that eye movements in free-viewing of natural scenes are directed by both bottom-up visual saliency and top-down visual factors.