Search Results for author: Raviteja Vemulapalli

Found 30 papers, 4 papers with code

Weight subcloning: direct initialization of transformers using larger pretrained ones

no code implementations • 14 Dec 2023 • Mohammad Samragh, Mehrdad Farajtabar, Sachin Mehta, Raviteja Vemulapalli, Fartash Faghri, Devang Naik, Oncel Tuzel, Mohammad Rastegari

The usual practice of transfer learning overcomes this challenge by initializing the model with weights of a pretrained model of the same size and specification to increase the convergence and training speed.

Knowledge Transfer from Vision Foundation Models for Efficient Training of Small Task-specific Models

no code implementations • 30 Nov 2023 • Raviteja Vemulapalli, Hadi Pouransari, Fartash Faghri, Sachin Mehta, Mehrdad Farajtabar, Mohammad Rastegari, Oncel Tuzel

Motivated by this, we ask the following important question, "How can we leverage the knowledge from a large VFM to train a small task-specific model for a new target task with limited labeled training data?"

Probabilistic Speech-Driven 3D Facial Motion Synthesis: New Benchmarks, Methods, and Applications

no code implementations • 30 Nov 2023 • Karren D. Yang, Anurag Ranjan, Jen-Hao Rick Chang, Raviteja Vemulapalli, Oncel Tuzel

While these models can achieve high-quality lip articulation for speakers in the training set, they are unable to capture the full and diverse distribution of 3D facial motions that accompany speech in the real world.

MobileCLIP: Fast Image-Text Models through Multi-Modal Reinforced Training

1 code implementation • 28 Nov 2023 • Pavan Kumar Anasosalu Vasu, Hadi Pouransari, Fartash Faghri, Raviteja Vemulapalli, Oncel Tuzel

We further demonstrate the effectiveness of our multi-modal reinforced training by training a CLIP model based on ViT-B/16 image backbone and achieving +2.9% average performance improvement on 38 evaluation benchmarks compared to the previous best.

TiC-CLIP: Continual Training of CLIP Models

1 code implementation • 24 Oct 2023 • Saurabh Garg, Mehrdad Farajtabar, Hadi Pouransari, Raviteja Vemulapalli, Sachin Mehta, Oncel Tuzel, Vaishaal Shankar, Fartash Faghri

We introduce the first set of web-scale Time-Continual (TiC) benchmarks for training vision-language models: TiC-DataComp, TiC-YFCC, and TiC-Redcaps.

SAM-CLIP: Merging Vision Foundation Models towards Semantic and Spatial Understanding

no code implementations • 23 Oct 2023 • Haoxiang Wang, Pavan Kumar Anasosalu Vasu, Fartash Faghri, Raviteja Vemulapalli, Mehrdad Farajtabar, Sachin Mehta, Mohammad Rastegari, Oncel Tuzel, Hadi Pouransari

By applying our method to SAM and CLIP, we obtain SAM-CLIP: a unified model that combines the capabilities of SAM and CLIP into a single vision transformer.

CLIP meets Model Zoo Experts: Pseudo-Supervision for Visual Enhancement

no code implementations • 21 Oct 2023 • Mohammadreza Salehi, Mehrdad Farajtabar, Maxwell Horton, Fartash Faghri, Hadi Pouransari, Raviteja Vemulapalli, Oncel Tuzel, Ali Farhadi, Mohammad Rastegari, Sachin Mehta

While CLIP is scalable, promptable, and robust to distribution shifts on image classification tasks, it lacks object localization capabilities.

Corpus Synthesis for Zero-shot ASR domain Adaptation using Large Language Models

no code implementations • 18 Sep 2023 • Hsuan Su, Ting-yao Hu, Hema Swetha Koppula, Raviteja Vemulapalli, Jen-Hao Rick Chang, Karren Yang, Gautam Varma Mantena, Oncel Tuzel

In this paper, we propose a new strategy for adapting ASR models to new target domains without any text or speech from those domains.

Automatic Speech Recognition

Automatic Speech Recognition (ASR)

Towards Federated Learning Under Resource Constraints via Layer-wise Training and Depth Dropout

no code implementations • 11 Sep 2023 • Pengfei Guo, Warren Richard Morningstar, Raviteja Vemulapalli, Karan Singhal, Vishal M. Patel, Philip Andrew Mansfield

To mitigate this issue and facilitate training of large models on edge devices, we introduce a simple yet effective strategy, Federated Layer-wise Learning, to simultaneously reduce per-client memory, computation, and communication costs.

Federated Training of Dual Encoding Models on Small Non-IID Client Datasets

no code implementations • 30 Sep 2022 • Raviteja Vemulapalli, Warren Richard Morningstar, Philip Andrew Mansfield, Hubert Eichner, Karan Singhal, Arash Afkanpour, Bradley Green

In this work, we focus on federated training of dual encoding models on decentralized data composed of many small, non-IID (independent and identically distributed) client datasets.

Less data is more: Selecting informative and diverse subsets with balancing constraints

no code implementations • 29 Sep 2021 • Srikumar Ramalingam, Daniel Glasner, Kaushal Patel, Raviteja Vemulapalli, Sadeep Jayasumana, Sanjiv Kumar

Deep learning has yielded extraordinary results in vision and natural language processing, but this achievement comes at a cost.

Less is more: Selecting informative and diverse subsets with balancing constraints

no code implementations • 26 Apr 2021 • Srikumar Ramalingam, Daniel Glasner, Kaushal Patel, Raviteja Vemulapalli, Sadeep Jayasumana, Sanjiv Kumar

Deep learning has yielded extraordinary results in vision and natural language processing, but this achievement comes at a cost.

Camera View Adjustment Prediction for Improving Image Composition

no code implementations • 15 Apr 2021 • Yu-Chuan Su, Raviteja Vemulapalli, Ben Weiss, Chun-Te Chu, Philip Andrew Mansfield, Lior Shapira, Colvin Pitts

To address this issue, we propose a deep learning-based approach that provides suggestions to the photographer on how to adjust the camera view before capturing.

Contrastive Learning for Label Efficient Semantic Segmentation

no code implementations • ICCV 2021 • Xiangyun Zhao, Raviteja Vemulapalli, Philip Andrew Mansfield, Boqing Gong, Bradley Green, Lior Shapira, Ying Wu

While recent Convolutional Neural Network (CNN) based semantic segmentation approaches have achieved impressive results by using large amounts of labeled training data, their performance drops significantly as the amount of labeled data decreases.

Global Self-Attention Networks

no code implementations • 1 Jan 2021 • Zhuoran Shen, Irwan Bello, Raviteja Vemulapalli, Xuhui Jia, Ching-Hui Chen

Based on the proposed GSA module, we introduce new standalone global attention-based deep networks that use GSA modules instead of convolutions to model pixel interactions.

Contrastive Learning for Label-Efficient Semantic Segmentation

no code implementations • 13 Dec 2020 • Xiangyun Zhao, Raviteja Vemulapalli, Philip Mansfield, Boqing Gong, Bradley Green, Lior Shapira, Ying Wu

While recent Convolutional Neural Network (CNN) based semantic segmentation approaches have achieved impressive results by using large amounts of labeled training data, their performance drops significantly as the amount of labeled data decreases.

Boosting Image-based Mutual Gaze Detection using Pseudo 3D Gaze

no code implementations • 15 Oct 2020 • Bardia Doosti, Ching-Hui Chen, Raviteja Vemulapalli, Xuhui Jia, Yukun Zhu, Bradley Green

In this work, we focus on the task of image-based mutual gaze detection, and propose a simple and effective approach to boost the performance by using an auxiliary 3D gaze estimation task during the training phase.

Global Self-Attention Networks for Image Recognition

no code implementations • 6 Oct 2020 • Zhuoran Shen, Irwan Bello, Raviteja Vemulapalli, Xuhui Jia, Ching-Hui Chen

Based on the proposed GSA module, we introduce new standalone global attention-based deep networks that use GSA modules instead of convolutions to model pixel interactions.

Search to Distill: Pearls are Everywhere but not the Eyes

no code implementations • CVPR 2020 • Yu Liu, Xuhui Jia, Mingxing Tan, Raviteja Vemulapalli, Yukun Zhu, Bradley Green, Xiaogang Wang

Standard Knowledge Distillation (KD) approaches distill the knowledge of a cumbersome teacher model into the parameters of a student model with a pre-defined architecture.

A Compact Embedding for Facial Expression Similarity

2 code implementations • CVPR 2019 • Raviteja Vemulapalli, Aseem Agarwala

Most of the existing work on automatic facial expression analysis focuses on discrete emotion recognition, or facial action unit detection.

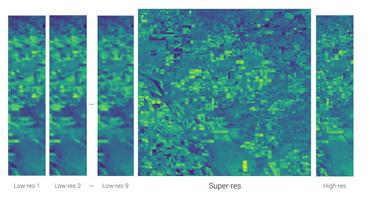

Frame-Recurrent Video Super-Resolution

no code implementations • CVPR 2018 • Mehdi S. M. Sajjadi, Raviteja Vemulapalli, Matthew Brown

Recent advances in video super-resolution have shown that convolutional neural networks combined with motion compensation are able to merge information from multiple low-resolution (LR) frames to generate high-quality images.

Ranked #6 on Video Super-Resolution on MSU Video Upscalers: Quality Enhancement (VMAF metric)

Designing Deep Convolutional Neural Networks for Continuous Object Orientation Estimation

no code implementations • 6 Feb 2017 • Kota Hara, Raviteja Vemulapalli, Rama Chellappa

The third method works by first converting the continuous orientation estimation task into a set of discrete orientation estimation tasks and then converting the discrete orientation outputs back to the continuous orientation using a mean-shift algorithm.

Rolling Rotations for Recognizing Human Actions from 3D Skeletal Data

no code implementations • 2016 IEEE Conference on Computer Vision and Pattern Recognition (CVPR) 2016 • Raviteja Vemulapalli, Rama Chellappa

Then, using this representation, we model human actions as curves in this Lie group.

Ranked #4 on Skeleton Based Action Recognition on Gaming 3D (G3D)

Gaussian Conditional Random Field Network for Semantic Segmentation

no code implementations • CVPR 2016 • Raviteja Vemulapalli, Oncel Tuzel, Ming-Yu Liu, Rama Chellappa

In contrast to the existing approaches that use discrete Conditional Random Field (CRF) models, we propose to use a Gaussian CRF model for the task of semantic segmentation.

Unsupervised Cross-Modal Synthesis of Subject-Specific Scans

no code implementations • ICCV 2015 • Raviteja Vemulapalli, Hien Van Nguyen, Shaohua Kevin Zhou

Our experiments on generating T1-MRI brain scans from T2-MRI and vice versa demonstrate that the synthesis capability of the proposed unsupervised approach is comparable to various state-of-the-art supervised approaches in the literature.

Deep Gaussian Conditional Random Field Network: A Model-based Deep Network for Discriminative Denoising

no code implementations • CVPR 2016 • Raviteja Vemulapalli, Oncel Tuzel, Ming-Yu Liu

We propose a novel deep network architecture for image denoising based on a Gaussian Conditional Random Field (GCRF) model.

Riemannian Metric Learning for Symmetric Positive Definite Matrices

no code implementations • 10 Jan 2015 • Raviteja Vemulapalli, David W. Jacobs

In this work, we focus on the log-Euclidean Riemannian geometry and propose a data-driven approach for learning Riemannian metrics/geodesic distances for SPD matrices.

MKL-RT: Multiple Kernel Learning for Ratio-trace Problems via Convex Optimization

no code implementations • 16 Oct 2014 • Raviteja Vemulapalli, Vinay Praneeth Boda, Rama Chellappa

We experimentally demonstrate that the proposed MKL approach, which we refer to as MKL-RT, can be successfully used to select features for discriminative dimensionality reduction and cross-modal retrieval.

Human Action Recognition by Representing 3D Skeletons as Points in a Lie Group

1 code implementation • 2014 IEEE Conference on Computer Vision and Pattern Recognition (CVPR) 2014 • Raviteja Vemulapalli, Felipe Arrate, Rama Chellappa

Recently introduced cost-effective depth sensors coupled with the real-time skeleton estimation algorithm of Shotton et al. have generated a renewed interest in skeleton-based human action recognition.

Ranked #6 on Skeleton Based Action Recognition on UT-Kinect

Kernel Learning for Extrinsic Classification of Manifold Features

no code implementations • CVPR 2013 • Raviteja Vemulapalli, Jaishanker K. Pillai, Rama Chellappa

In this paper, we address the issue of kernel selection for the classification of features that lie on Riemannian manifolds using the kernel learning approach.