Object Detection

3733 papers with code • 91 benchmarks • 262 datasets

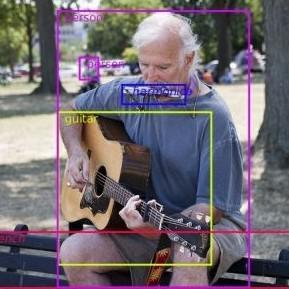

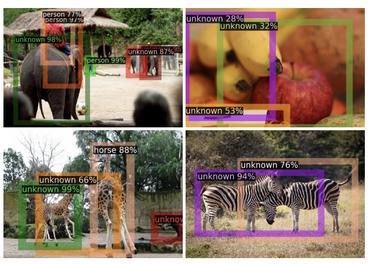

Object Detection is a computer vision task in which the goal is to detect and locate objects of interest in an image or video. The task involves identifying the position and boundaries of objects in an image, and classifying the objects into different categories. It forms a crucial part of vision recognition, alongside image classification and retrieval.

The state-of-the-art methods can be categorized into two main types: one-stage methods and two stage-methods:

-

One-stage methods prioritize inference speed, and example models include YOLO, SSD and RetinaNet.

-

Two-stage methods prioritize detection accuracy, and example models include Faster R-CNN, Mask R-CNN and Cascade R-CNN.

The most popular benchmark is the MSCOCO dataset. Models are typically evaluated according to a Mean Average Precision metric.

( Image credit: Detectron )

Libraries

Use these libraries to find Object Detection models and implementationsDatasets

Subtasks

-

3D Object Detection

3D Object Detection

-

Real-Time Object Detection

Real-Time Object Detection

-

RGB Salient Object Detection

RGB Salient Object Detection

-

Few-Shot Object Detection

Few-Shot Object Detection

-

Few-Shot Object Detection

Few-Shot Object Detection

-

Video Object Detection

Video Object Detection

-

RGB-D Salient Object Detection

RGB-D Salient Object Detection

-

Open Vocabulary Object Detection

Open Vocabulary Object Detection

-

Object Detection In Aerial Images

Object Detection In Aerial Images

-

Weakly Supervised Object Detection

Weakly Supervised Object Detection

-

Small Object Detection

Small Object Detection

-

Robust Object Detection

Robust Object Detection

-

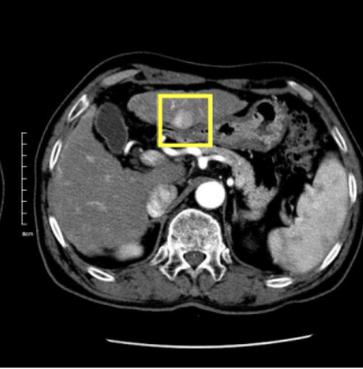

Medical Object Detection

Medical Object Detection

-

Zero-Shot Object Detection

Zero-Shot Object Detection

-

Open World Object Detection

Open World Object Detection

-

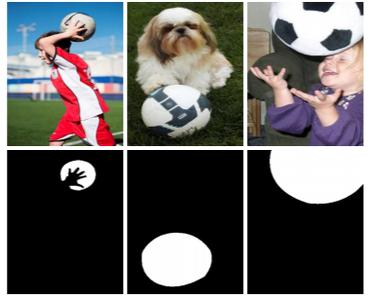

Co-Salient Object Detection

Co-Salient Object Detection

-

Dense Object Detection

Dense Object Detection

-

Object Proposal Generation

Object Proposal Generation

-

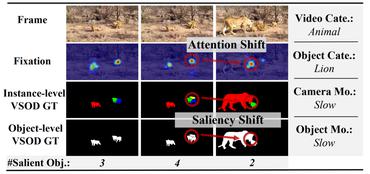

Video Salient Object Detection

Video Salient Object Detection

-

Camouflaged Object Segmentation

Camouflaged Object Segmentation

-

License Plate Detection

License Plate Detection

-

Head Detection

Head Detection

-

Multiview Detection

Multiview Detection

-

3D Object Detection From Monocular Images

3D Object Detection From Monocular Images

-

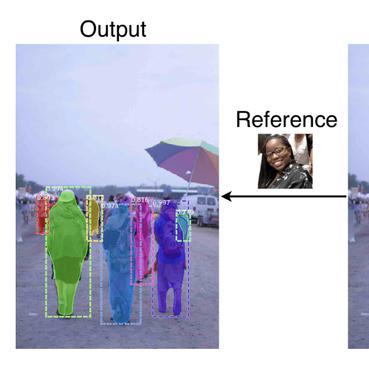

One-Shot Object Detection

One-Shot Object Detection

-

Moving Object Detection

Moving Object Detection

-

Surgical tool detection

Surgical tool detection

-

Described Object Detection

Described Object Detection

-

Body Detection

Body Detection

-

Pupil Detection

Pupil Detection

-

Object Detection In Indoor Scenes

Object Detection In Indoor Scenes

-

Class-agnostic Object Detection

Class-agnostic Object Detection

-

Semantic Part Detection

Semantic Part Detection

-

Object Skeleton Detection

Object Skeleton Detection

-

Fish Detection

Fish Detection

-

Multiple Affordance Detection

Multiple Affordance Detection

-

Weakly Supervised 3D Detection

Weakly Supervised 3D Detection

Latest papers with no code

Reviewing Intelligent Cinematography: AI research for camera-based video production

The main discussion categorizes work by four production types: General Production, Virtual Production, Live Production and Aerial Production.

A New Dataset and Comparative Study for Aphid Cluster Detection and Segmentation in Sorghum Fields

In this study, we trained and evaluated four real-time semantic segmentation models and three object detection models specifically for aphid cluster segmentation and detection.

DriveWorld: 4D Pre-trained Scene Understanding via World Models for Autonomous Driving

In this paper, we address this challenge by introducing a world model-based autonomous driving 4D representation learning framework, dubbed \emph{DriveWorld}, which is capable of pre-training from multi-camera driving videos in a spatio-temporal fashion.

ViewFormer: Exploring Spatiotemporal Modeling for Multi-View 3D Occupancy Perception via View-Guided Transformers

Leveraging the proposed view attention as well as an additional multi-frame streaming temporal attention, we introduce ViewFormer, a vision-centric transformer-based framework for spatiotemporal feature aggregation.

Deep Event-based Object Detection in Autonomous Driving: A Survey

Object detection plays a critical role in autonomous driving, where accurately and efficiently detecting objects in fast-moving scenes is crucial.

A Novel Wide-Area Multiobject Detection System with High-Probability Region Searching

To address these challenges, this paper presents a hybrid system that incorporates a wide-angle camera, a high-speed search camera, and a galvano-mirror.

Low-light Object Detection

In this competition we employed a model fusion approach to achieve object detection results close to those of real images.

Salient Object Detection From Arbitrary Modalities

The most prominent characteristics of AM SOD are that the modality types and modality numbers will be arbitrary or dynamically changed.

Modality Prompts for Arbitrary Modality Salient Object Detection

A novel modality-adaptive Transformer (MAT) will be proposed to investigate two fundamental challenges of AM SOD, ie more diverse modality discrepancies caused by varying modality types that need to be processed, and dynamic fusion design caused by an uncertain number of modalities present in the inputs of multimodal fusion strategy.

BadFusion: 2D-Oriented Backdoor Attacks against 3D Object Detection

To tackle this issue, we propose an innovative 2D-oriented backdoor attack against LiDAR-camera fusion methods for 3D object detection, named BadFusion, for preserving trigger effectiveness throughout the entire fusion process.

MS COCO

MS COCO

KITTI

KITTI

nuScenes

nuScenes

Visual Genome

Visual Genome

LVIS

LVIS

SUN RGB-D

SUN RGB-D

Waymo Open Dataset

Waymo Open Dataset

BDD100K

BDD100K

MVTecAD

MVTecAD

Manga109

Manga109