Search Results for author: Phillip Isola

Found 81 papers, 54 papers with code

Understanding Contrastive Representation Learning through Geometry on the Hypersphere

1 code implementation • ICML 2020 • Tongzhou Wang, Phillip Isola

Contrastive representation learning has been outstandingly successful in practice.

Scalable Optimization in the Modular Norm

1 code implementation • 23 May 2024 • Tim Large, Yang Liu, Minyoung Huh, Hyojin Bahng, Phillip Isola, Jeremy Bernstein

When ramping up the width of a single layer, graceful scaling of training has been linked to the need to normalize the weights and their updates in the "natural norm" particular to that layer.

The Platonic Representation Hypothesis

1 code implementation • 13 May 2024 • Minyoung Huh, Brian Cheung, Tongzhou Wang, Phillip Isola

We argue that representations in AI models, particularly deep networks, are converging.

Training Neural Networks from Scratch with Parallel Low-Rank Adapters

no code implementations • 26 Feb 2024 • Minyoung Huh, Brian Cheung, Jeremy Bernstein, Phillip Isola, Pulkit Agrawal

The scalability of deep learning models is fundamentally limited by computing resources, memory, and communication.

A Vision Check-up for Language Models

no code implementations • 3 Jan 2024 • Pratyusha Sharma, Tamar Rott Shaham, Manel Baradad, Stephanie Fu, Adrian Rodriguez-Munoz, Shivam Duggal, Phillip Isola, Antonio Torralba

Although LLM-generated images do not look like natural images, results on image generation and the ability of models to correct these generated images indicate that precise modeling of strings can teach language models about numerous aspects of the visual world.

Learning Vision from Models Rivals Learning Vision from Data

1 code implementation • 28 Dec 2023 • Yonglong Tian, Lijie Fan, KaiFeng Chen, Dina Katabi, Dilip Krishnan, Phillip Isola

We introduce SynCLR, a novel approach for learning visual representations exclusively from synthetic images and synthetic captions, without any real data.

Scaling Laws of Synthetic Images for Model Training ... for Now

1 code implementation • 7 Dec 2023 • Lijie Fan, KaiFeng Chen, Dilip Krishnan, Dina Katabi, Phillip Isola, Yonglong Tian

Our findings also suggest that scaling synthetic data can be particularly effective in scenarios such as: (1) when there is a limited supply of real images for a supervised problem (e.g., fewer than 0.5 million images in ImageNet), (2) when the evaluation dataset diverges significantly from the training data, indicating the out-of-distribution scenario, or (3) when synthetic data is used in conjunction with real images, as demonstrated in the training of CLIP models.

The NeurIPS 2022 Neural MMO Challenge: A Massively Multiagent Competition with Specialization and Trade

1 code implementation • 7 Nov 2023 • Enhong Liu, Joseph Suarez, Chenhui You, Bo Wu, BingCheng Chen, Jun Hu, Jiaxin Chen, Xiaolong Zhu, Clare Zhu, Julian Togelius, Sharada Mohanty, Weijun Hong, Rui Du, Yibing Zhang, Qinwen Wang, Xinhang Li, Zheng Yuan, Xiang Li, Yuejia Huang, Kun Zhang, Hanhui Yang, Shiqi Tang, Phillip Isola

In this paper, we present the results of the NeurIPS-2022 Neural MMO Challenge, which attracted 500 participants and received over 1,600 submissions.

LangNav: Language as a Perceptual Representation for Navigation

no code implementations • 11 Oct 2023 • Bowen Pan, Rameswar Panda, SouYoung Jin, Rogerio Feris, Aude Oliva, Phillip Isola, Yoon Kim

We explore the use of language as a perceptual representation for vision-and-language navigation (VLN), with a focus on low-data settings.

Benchmarking Robustness and Generalization in Multi-Agent Systems: A Case Study on Neural MMO

no code implementations • 30 Aug 2023 • Yangkun Chen, Joseph Suarez, Junjie Zhang, Chenghui Yu, Bo Wu, HanMo Chen, Hengman Zhu, Rui Du, Shanliang Qian, Shuai Liu, Weijun Hong, Jinke He, Yibing Zhang, Liang Zhao, Clare Zhu, Julian Togelius, Sharada Mohanty, Jiaxin Chen, Xiu Li, Xiaolong Zhu, Phillip Isola

We present the results of the second Neural MMO challenge, hosted at IJCAI 2022, which received 1600+ submissions.

Distilled Feature Fields Enable Few-Shot Language-Guided Manipulation

1 code implementation • 27 Jul 2023 • William Shen, Ge Yang, Alan Yu, Jansen Wong, Leslie Pack Kaelbling, Phillip Isola

Self-supervised and language-supervised image models contain rich knowledge of the world that is important for generalization.

DreamSim: Learning New Dimensions of Human Visual Similarity using Synthetic Data

1 code implementation • NeurIPS 2023 • Stephanie Fu, Netanel Tamir, Shobhita Sundaram, Lucy Chai, Richard Zhang, Tali Dekel, Phillip Isola

Furthermore, our metric outperforms both prior learned metrics and recent large vision models on these tasks.

MultiEarth 2023 -- Multimodal Learning for Earth and Environment Workshop and Challenge

1 code implementation • 7 Jun 2023 • Miriam Cha, Gregory Angelides, Mark Hamilton, Andy Soszynski, Brandon Swenson, Nathaniel Maidel, Phillip Isola, Taylor Perron, Bill Freeman

The Multimodal Learning for Earth and Environment Workshop (MultiEarth 2023) is the second annual CVPR workshop aimed at the monitoring and analysis of the health of Earth ecosystems by leveraging the vast amount of remote sensing data that is continuously being collected.

StableRep: Synthetic Images from Text-to-Image Models Make Strong Visual Representation Learners

2 code implementations • NeurIPS 2023 • Yonglong Tian, Lijie Fan, Phillip Isola, Huiwen Chang, Dilip Krishnan

We investigate the potential of learning visual representations using synthetic images generated by text-to-image models.

Improving CLIP Training with Language Rewrites

1 code implementation • NeurIPS 2023 • Lijie Fan, Dilip Krishnan, Phillip Isola, Dina Katabi, Yonglong Tian

During training, LaCLIP randomly selects either the original texts or the rewritten versions as text augmentations for each image.

Straightening Out the Straight-Through Estimator: Overcoming Optimization Challenges in Vector Quantized Networks

no code implementations • 15 May 2023 • Minyoung Huh, Brian Cheung, Pulkit Agrawal, Phillip Isola

We identify the factors that contribute to this issue, including the codebook gradient sparsity and the asymmetric nature of the commitment loss, which leads to misaligned code-vector assignments.

Optimal Goal-Reaching Reinforcement Learning via Quasimetric Learning

1 code implementation • 3 Apr 2023 • Tongzhou Wang, Antonio Torralba, Phillip Isola, Amy Zhang

In goal-reaching reinforcement learning (RL), the optimal value function has a particular geometry, called quasimetric structure.

Persistent Nature: A Generative Model of Unbounded 3D Worlds

1 code implementation • CVPR 2023 • Lucy Chai, Richard Tucker, Zhengqi Li, Phillip Isola, Noah Snavely

Despite increasingly realistic image quality, recent 3D image generative models often operate on 3D volumes of fixed extent with limited camera motions.

Ranked #2 on Scene Generation on GoogleEarth

Steerable Equivariant Representation Learning

no code implementations • 22 Feb 2023 • Sangnie Bhardwaj, Willie McClinton, Tongzhou Wang, Guillaume Lajoie, Chen Sun, Phillip Isola, Dilip Krishnan

In this paper, we propose a method of learning representations that are instead equivariant to data augmentations.

Procedural Image Programs for Representation Learning

1 code implementation • 29 Nov 2022 • Manel Baradad, Chun-Fu Chen, Jonas Wulff, Tongzhou Wang, Rogerio Feris, Antonio Torralba, Phillip Isola

Learning image representations using synthetic data allows training neural networks without some of the concerns associated with real images, such as privacy and bias.

Improved Representation of Asymmetrical Distances with Interval Quasimetric Embeddings

1 code implementation • 28 Nov 2022 • Tongzhou Wang, Phillip Isola

Asymmetrical distance structures (quasimetrics) are ubiquitous in our lives and are gaining more attention in machine learning applications.

Powderworld: A Platform for Understanding Generalization via Rich Task Distributions

no code implementations • 23 Nov 2022 • Kevin Frans, Phillip Isola

Within Powderworld, two motivating challenge distributions are presented, one for world-modelling and one for reinforcement learning.

Totems: Physical Objects for Verifying Visual Integrity

no code implementations • 26 Sep 2022 • Jingwei Ma, Lucy Chai, Minyoung Huh, Tongzhou Wang, Ser-Nam Lim, Phillip Isola, Antonio Torralba

We introduce a new approach to image forensics: placing physical refractive objects, which we call totems, into a scene so as to protect any photograph taken of that scene.

Semantic uncertainty intervals for disentangled latent spaces

1 code implementation • 20 Jul 2022 • Swami Sankaranarayanan, Anastasios N. Angelopoulos, Stephen Bates, Yaniv Romano, Phillip Isola

Meaningful uncertainty quantification in computer vision requires reasoning about semantic information -- say, the hair color of the person in a photo or the location of a car on the street.

Developing a Series of AI Challenges for the United States Department of the Air Force

1 code implementation • 14 Jul 2022 • Vijay Gadepally, Gregory Angelides, Andrei Barbu, Andrew Bowne, Laura J. Brattain, Tamara Broderick, Armando Cabrera, Glenn Carl, Ronisha Carter, Miriam Cha, Emilie Cowen, Jesse Cummings, Bill Freeman, James Glass, Sam Goldberg, Mark Hamilton, Thomas Heldt, Kuan Wei Huang, Phillip Isola, Boris Katz, Jamie Koerner, Yen-Chen Lin, David Mayo, Kyle McAlpin, Taylor Perron, Jean Piou, Hrishikesh M. Rao, Hayley Reynolds, Kaira Samuel, Siddharth Samsi, Morgan Schmidt, Leslie Shing, Olga Simek, Brandon Swenson, Vivienne Sze, Jonathan Taylor, Paul Tylkin, Mark Veillette, Matthew L Weiss, Allan Wollaber, Sophia Yuditskaya, Jeremy Kepner

Through a series of federal initiatives and orders, the U.S. Government has been making a concerted effort to ensure American leadership in AI.

On the Learning and Learnability of Quasimetrics

2 code implementations • 30 Jun 2022 • Tongzhou Wang, Phillip Isola

In contrast, our proposed Poisson Quasimetric Embedding (PQE) is the first quasimetric learning formulation that both is learnable with gradient-based optimization and enjoys strong performance guarantees.

Denoised MDPs: Learning World Models Better Than the World Itself

1 code implementation • 30 Jun 2022 • Tongzhou Wang, Simon S. Du, Antonio Torralba, Phillip Isola, Amy Zhang, Yuandong Tian

The ability to separate signal from noise, and reason with clean abstractions, is critical to intelligence.

MultiEarth 2022 -- Multimodal Learning for Earth and Environment Workshop and Challenge

no code implementations • 15 Apr 2022 • Miriam Cha, Kuan Wei Huang, Morgan Schmidt, Gregory Angelides, Mark Hamilton, Sam Goldberg, Armando Cabrera, Phillip Isola, Taylor Perron, Bill Freeman, Yen-Chen Lin, Brandon Swenson, Jean Piou

The Multimodal Learning for Earth and Environment Challenge (MultiEarth 2022) will be the first competition aimed at the monitoring and analysis of deforestation in the Amazon rainforest at any time and in any weather conditions.

Any-resolution Training for High-resolution Image Synthesis

1 code implementation • 14 Apr 2022 • Lucy Chai, Michael Gharbi, Eli Shechtman, Phillip Isola, Richard Zhang

To take advantage of varied-size data, we introduce continuous-scale training, a process that samples patches at random scales to train a new generator with variable output resolutions.

Exploring Visual Prompts for Adapting Large-Scale Models

1 code implementation • 31 Mar 2022 • Hyojin Bahng, Ali Jahanian, Swami Sankaranarayanan, Phillip Isola

The surprising effectiveness of visual prompting provides a new perspective on adapting pre-trained models in vision.

Learning to generate line drawings that convey geometry and semantics

2 code implementations • CVPR 2022 • Caroline Chan, Fredo Durand, Phillip Isola

We introduce a geometry loss which predicts depth information from the image features of a line drawing, and a semantic loss which matches the CLIP features of a line drawing with its corresponding photograph.

NeRF-Supervision: Learning Dense Object Descriptors from Neural Radiance Fields

no code implementations • 3 Mar 2022 • Lin Yen-Chen, Pete Florence, Jonathan T. Barron, Tsung-Yi Lin, Alberto Rodriguez, Phillip Isola

In particular, we demonstrate that a NeRF representation of a scene can be used to train dense object descriptors.

Contrastive Feature Loss for Image Prediction

1 code implementation • 12 Nov 2021 • Alex Andonian, Taesung Park, Bryan Russell, Phillip Isola, Jun-Yan Zhu, Richard Zhang

Training supervised image synthesis models requires a critic to compare two images: the ground truth to the result.

Learning to Ground Multi-Agent Communication with Autoencoders

no code implementations • NeurIPS 2021 • Toru Lin, Minyoung Huh, Chris Stauffer, Ser-Nam Lim, Phillip Isola

Communication requires having a common language, a lingua franca, between agents.

The Neural MMO Platform for Massively Multiagent Research

no code implementations • 14 Oct 2021 • Joseph Suarez, Yilun Du, Clare Zhu, Igor Mordatch, Phillip Isola

Neural MMO is a computationally accessible research platform that combines large agent populations, long time horizons, open-ended tasks, and modular game systems.

OPEn: An Open-ended Physics Environment for Learning Without a Task

1 code implementation • 13 Oct 2021 • Chuang Gan, Abhishek Bhandwaldar, Antonio Torralba, Joshua B. Tenenbaum, Phillip Isola

We test several existing RL-based exploration methods on this benchmark and find that an agent using unsupervised contrastive learning for representation learning, and impact-driven learning for exploration, achieved the best results.

On the Learning of Quasimetrics

no code implementations • ICLR 2022 • Tongzhou Wang, Phillip Isola

Our world is full of asymmetries.

Adaptable Agent Populations via a Generative Model of Policies

1 code implementation • NeurIPS 2021 • Kenneth Derek, Phillip Isola

To this end, we introduce a generative model of policies, which maps a low-dimensional latent space to an agent policy space.

Learning to See before Learning to Act: Visual Pre-training for Manipulation

no code implementations • 1 Jul 2021 • Lin Yen-Chen, Andy Zeng, Shuran Song, Phillip Isola, Tsung-Yi Lin

With just a small amount of robotic experience, we can further fine-tune the affordance model to achieve better results.

Learning to See by Looking at Noise

1 code implementation • NeurIPS 2021 • Manel Baradad, Jonas Wulff, Tongzhou Wang, Phillip Isola, Antonio Torralba

We investigate a suite of image generation models that produce images from simple random processes.

Generative Models as a Data Source for Multiview Representation Learning

1 code implementation • ICLR 2022 • Ali Jahanian, Xavier Puig, Yonglong Tian, Phillip Isola

We investigate this question in the setting of learning general-purpose visual representations from a black-box generative model rather than directly from data.

Curious Representation Learning for Embodied Intelligence

1 code implementation • ICCV 2021 • Yilun Du, Chuang Gan, Phillip Isola

Instead, it must explore its environment to acquire the data it will learn from.

Ensembling with Deep Generative Views

1 code implementation • CVPR 2021 • Lucy Chai, Jun-Yan Zhu, Eli Shechtman, Phillip Isola, Richard Zhang

Here, we investigate whether such views can be applied to real images to benefit downstream analysis tasks such as image classification.

Explaining in Style: Training a GAN to explain a classifier in StyleSpace

2 code implementations • ICCV 2021 • Oran Lang, Yossi Gandelsman, Michal Yarom, Yoav Wald, Gal Elidan, Avinatan Hassidim, William T. Freeman, Phillip Isola, Amir Globerson, Michal Irani, Inbar Mosseri

A natural source for such attributes is the StyleSpace of StyleGAN, which is known to generate semantically meaningful dimensions in the image.

The Low-Rank Simplicity Bias in Deep Networks

1 code implementation • 18 Mar 2021 • Minyoung Huh, Hossein Mobahi, Richard Zhang, Brian Cheung, Pulkit Agrawal, Phillip Isola

We show empirically that our claim holds true on finite width linear and non-linear models on practical learning paradigms and show that on natural data, these are often the solutions that generalize well.

Using latent space regression to analyze and leverage compositionality in GANs

1 code implementation • ICLR 2021 • Lucy Chai, Jonas Wulff, Phillip Isola

In this work, we investigate regression into the latent space as a probe to understand the compositional properties of GANs.

INeRF: Inverting Neural Radiance Fields for Pose Estimation

1 code implementation • 10 Dec 2020 • Lin Yen-Chen, Pete Florence, Jonathan T. Barron, Alberto Rodriguez, Phillip Isola, Tsung-Yi Lin

We then show that for complex real-world scenes from the LLFF dataset, iNeRF can improve NeRF by estimating the camera poses of novel images and using these images as additional training data for NeRF.

What makes fake images detectable? Understanding properties that generalize

1 code implementation • ECCV 2020 • Lucy Chai, David Bau, Ser-Nam Lim, Phillip Isola

The quality of image generation and manipulation is reaching impressive levels, making it increasingly difficult for a human to distinguish between what is real and what is fake.

Noisy Agents: Self-supervised Exploration by Predicting Auditory Events

no code implementations • 27 Jul 2020 • Chuang Gan, Xiaoyu Chen, Phillip Isola, Antonio Torralba, Joshua B. Tenenbaum

Humans integrate multiple sensory modalities (e.g., visual and audio) to build a causal understanding of the physical world.

What Makes for Good Views for Contrastive Learning?

1 code implementation • NeurIPS 2020 • Yonglong Tian, Chen Sun, Ben Poole, Dilip Krishnan, Cordelia Schmid, Phillip Isola

Contrastive learning between multiple views of the data has recently achieved state of the art performance in the field of self-supervised representation learning.

Ranked #2 on Contrastive Learning on imagenet-1k

Understanding Contrastive Representation Learning through Alignment and Uniformity on the Hypersphere

2 code implementations • 20 May 2020 • Tongzhou Wang, Phillip Isola

Contrastive representation learning has been outstandingly successful in practice.

Supervised Contrastive Learning

23 code implementations • NeurIPS 2020 • Prannay Khosla, Piotr Teterwak, Chen Wang, Aaron Sarna, Yonglong Tian, Phillip Isola, Aaron Maschinot, Ce Liu, Dilip Krishnan

Contrastive learning applied to self-supervised representation learning has seen a resurgence in recent years, leading to state of the art performance in the unsupervised training of deep image models.

Ranked #2 on Class Incremental Learning on cifar100

Rethinking Few-Shot Image Classification: a Good Embedding Is All You Need?

3 code implementations • ECCV 2020 • Yonglong Tian, Yue Wang, Dilip Krishnan, Joshua B. Tenenbaum, Phillip Isola

The focus of recent meta-learning research has been on the development of learning algorithms that can quickly adapt to test time tasks with limited data and low computational cost.

Neural MMO v1.3: A Massively Multiagent Game Environment for Training and Evaluating Neural Networks

no code implementations • 31 Jan 2020 • Joseph Suarez, Yilun Du, Igor Mordatch, Phillip Isola

We present Neural MMO, a massively multiagent game environment inspired by MMOs and discuss our progress on two more general challenges in multiagent systems engineering for AI research: distributed infrastructure and game IO.

Experience-Embedded Visual Foresight

no code implementations • 12 Nov 2019 • Lin Yen-Chen, Maria Bauza, Phillip Isola

In this paper, we tackle the generalization problem via fast adaptation, where we train a prediction model to quickly adapt to the observed visual dynamics of a novel object.

Contrastive Representation Distillation

3 code implementations • ICLR 2020 • Yonglong Tian, Dilip Krishnan, Phillip Isola

We demonstrate that this objective ignores important structural knowledge of the teacher network.

Ranked #13 on Knowledge Distillation on CIFAR-100

Omnipush: accurate, diverse, real-world dataset of pushing dynamics with RGB-D video

no code implementations • 1 Oct 2019 • Maria Bauza, Ferran Alet, Yen-Chen Lin, Tomas Lozano-Perez, Leslie P. Kaelbling, Phillip Isola, Alberto Rodriguez

Such models, however, are approximate, which limits their applicability.

Learning Good Policies By Learning Good Perceptual Models

no code implementations • 25 Sep 2019 • Yilun Du, Phillip Isola

Reinforcement learning (RL) has led to increasingly complex looking behavior in recent years.

On the "steerability" of generative adversarial networks

2 code implementations • 16 Jul 2019 • Ali Jahanian, Lucy Chai, Phillip Isola

We hypothesize that the degree of distributional shift is related to the breadth of the training data distribution.

GANalyze: Toward Visual Definitions of Cognitive Image Properties

1 code implementation • ICCV 2019 • Lore Goetschalckx, Alex Andonian, Aude Oliva, Phillip Isola

We introduce a framework that uses Generative Adversarial Networks (GANs) to study cognitive properties like memorability, aesthetics, and emotional valence.

Contrastive Multiview Coding

8 code implementations • ECCV 2020 • Yonglong Tian, Dilip Krishnan, Phillip Isola

We analyze key properties of the approach that make it work, finding that the contrastive loss outperforms a popular alternative based on cross-view prediction, and that the more views we learn from, the better the resulting representation captures underlying scene semantics.

Ranked #48 on Self-Supervised Action Recognition on UCF101

Neural MMO: A massively multiplayer game environment for intelligent agents

no code implementations • ICLR 2019 • Joseph Suarez, Yilun Du, Phillip Isola, Igor Mordatch

We demonstrate how this platform can be used to study behavior and learning in large populations of neural agents.

Neural MMO: A Massively Multiagent Game Environment for Training and Evaluating Intelligent Agents

1 code implementation • 2 Mar 2019 • Joseph Suarez, Yilun Du, Phillip Isola, Igor Mordatch

The emergence of complex life on Earth is often attributed to the arms race that ensued from a huge number of organisms all competing for finite resources.

Learning to Control Self-Assembling Morphologies: A Study of Generalization via Modularity

1 code implementation • NeurIPS 2019 • Deepak Pathak, Chris Lu, Trevor Darrell, Phillip Isola, Alexei A. Efros

We evaluate the performance of these dynamic and modular agents in simulated environments.

InGAN: Capturing and Remapping the "DNA" of a Natural Image

1 code implementation • 1 Dec 2018 • Assaf Shocher, Shai Bagon, Phillip Isola, Michal Irani

In this paper we propose an "Internal GAN" (InGAN) - an image-specific GAN - which trains on a single input image and learns its internal distribution of patches.

Evolved Policy Gradients

3 code implementations • NeurIPS 2018 • Rein Houthooft, Richard Y. Chen, Phillip Isola, Bradly C. Stadie, Filip Wolski, Jonathan Ho, Pieter Abbeel

We propose a metalearning approach for learning gradient-based reinforcement learning (RL) algorithms.

The Unreasonable Effectiveness of Deep Features as a Perceptual Metric

24 code implementations • CVPR 2018 • Richard Zhang, Phillip Isola, Alexei A. Efros, Eli Shechtman, Oliver Wang

We systematically evaluate deep features across different architectures and tasks and compare them with classic metrics.

Ranked #19 on Video Quality Assessment on MSU FR VQA Database

CyCADA: Cycle-Consistent Adversarial Domain Adaptation

3 code implementations • ICML 2018 • Judy Hoffman, Eric Tzeng, Taesung Park, Jun-Yan Zhu, Phillip Isola, Kate Saenko, Alexei A. Efros, Trevor Darrell

Domain adaptation is critical for success in new, unseen environments.

3D Sketching using Multi-View Deep Volumetric Prediction

no code implementations • 26 Jul 2017 • Johanna Delanoy, Mathieu Aubry, Phillip Isola, Alexei A. Efros, Adrien Bousseau

The main strengths of our approach are its robustness to freehand bitmap drawings, its ability to adapt to different object categories, and the continuum it offers between single-view and multi-view sketch-based modeling.

Real-Time User-Guided Image Colorization with Learned Deep Priors

3 code implementations • 8 May 2017 • Richard Zhang, Jun-Yan Zhu, Phillip Isola, Xinyang Geng, Angela S. Lin, Tianhe Yu, Alexei A. Efros

The system directly maps a grayscale image, along with sparse, local user "hints" to an output colorization with a Convolutional Neural Network (CNN).

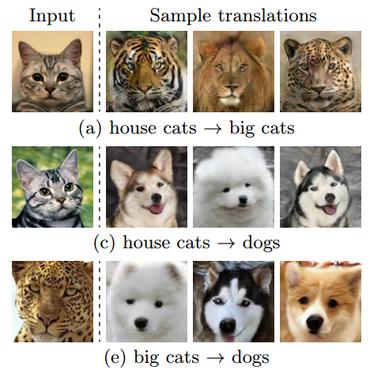

Unpaired Image-to-Image Translation using Cycle-Consistent Adversarial Networks

187 code implementations • ICCV 2017 • Jun-Yan Zhu, Taesung Park, Phillip Isola, Alexei A. Efros

Image-to-image translation is a class of vision and graphics problems where the goal is to learn the mapping between an input image and an output image using a training set of aligned image pairs.

Ranked #1 on Image-to-Image Translation on zebra2horse (Frechet Inception Distance metric)

Multimodal Unsupervised Image-To-Image Translation • Style Transfer • +2

Combining Self-Supervised Learning and Imitation for Vision-Based Rope Manipulation

no code implementations • 6 Mar 2017 • Ashvin Nair, Dian Chen, Pulkit Agrawal, Phillip Isola, Pieter Abbeel, Jitendra Malik, Sergey Levine

Manipulation of deformable objects, such as ropes and cloth, is an important but challenging problem in robotics.

Split-Brain Autoencoders: Unsupervised Learning by Cross-Channel Prediction

2 code implementations • CVPR 2017 • Richard Zhang, Phillip Isola, Alexei A. Efros

We propose split-brain autoencoders, a straightforward modification of the traditional autoencoder architecture, for unsupervised representation learning.

Ranked #127 on Self-Supervised Image Classification on ImageNet

Representation Learning • Self-Supervised Image Classification • +1

Image-to-Image Translation with Conditional Adversarial Networks

176 code implementations • CVPR 2017 • Phillip Isola, Jun-Yan Zhu, Tinghui Zhou, Alexei A. Efros

We investigate conditional adversarial networks as a general-purpose solution to image-to-image translation problems.

Colorful Image Colorization

39 code implementations • 28 Mar 2016 • Richard Zhang, Phillip Isola, Alexei A. Efros

We embrace the underlying uncertainty of the problem by posing it as a classification task and use class-rebalancing at training time to increase the diversity of colors in the result.

Ranked #128 on Self-Supervised Image Classification on ImageNet

Visually Indicated Sounds

no code implementations • CVPR 2016 • Andrew Owens, Phillip Isola, Josh McDermott, Antonio Torralba, Edward H. Adelson, William T. Freeman

Objects make distinctive sounds when they are hit or scratched.

Learning Ordinal Relationships for Mid-Level Vision

no code implementations • ICCV 2015 • Daniel Zoran, Phillip Isola, Dilip Krishnan, William T. Freeman

We demonstrate that this framework works well on two important mid-level vision tasks: intrinsic image decomposition and depth from an RGB image.

Learning visual groups from co-occurrences in space and time

2 code implementations • 21 Nov 2015 • Phillip Isola, Daniel Zoran, Dilip Krishnan, Edward H. Adelson

We propose a self-supervised framework that learns to group visual entities based on their rate of co-occurrence in space and time.

Discovering States and Transformations in Image Collections

no code implementations • CVPR 2015 • Phillip Isola, Joseph J. Lim, Edward H. Adelson

Our system works by generalizing across object classes: states and transformations learned on one set of objects are used to interpret the image collection for an entirely new object class.

Sparkle Vision: Seeing the World through Random Specular Microfacets

no code implementations • 26 Dec 2014 • Zhengdong Zhang, Phillip Isola, Edward H. Adelson

In this paper, we study the problem of reproducing the world lighting from a single image of an object covered with random specular microfacets on the surface.

Understanding the Intrinsic Memorability of Images

no code implementations • NeurIPS 2011 • Phillip Isola, Devi Parikh, Antonio Torralba, Aude Oliva

Artists, advertisers, and photographers are routinely presented with the task of creating an image that a viewer will remember.