Search Results for author: Jianqiang Huang

Found 64 papers, 30 papers with code

Momentum Batch Normalization for Deep Learning with Small Batch Size

no code implementations • ECCV 2020 • Hongwei Yong, Jianqiang Huang, Deyu Meng, Xian-Sheng Hua, Lei Zhang

To gain a deeper understanding of BN, in this work we prove that BN actually introduces a certain level of noise into the sample mean and variance during the training process, while the noise level depends only on the batch size.

BERP: A Blind Estimator of Room Acoustic and Physical Parameters for Single-Channel Noisy Speech Signals

1 code implementation • 7 May 2024 • Lijun Wang, Yixian Lu, Ziyan Gao, Kai Li, Jianqiang Huang, Yuntao Kong, Shogo Okada

On the other hand, in this paper, we propose a novel universal blind estimation framework called the blind estimator of room acoustical and physical parameters (BERP), by introducing a new stochastic room impulse response (RIR) model, namely, the sparse stochastic impulse response (SSIR) model, and endowing the BERP with a unified encoder and multiple separate predictors to estimate RPPs and SSIR parameters in parallel.

ARFA: An Asymmetric Receptive Field Autoencoder Model for Spatiotemporal Prediction

no code implementations • 1 Sep 2023 • Wenxuan Zhang, Xuechao Zou, Li Wu, Xiaoying Wang, Jianqiang Huang, Junliang Xing

Additionally, we construct the RainBench, a large-scale radar echo dataset for precipitation prediction, to address the scarcity of meteorological data in the domain.

Structural and Statistical Texture Knowledge Distillation for Semantic Segmentation

no code implementations • CVPR 2022 • Deyi Ji, Haoran Wang, Mingyuan Tao, Jianqiang Huang, Xian-Sheng Hua, Hongtao Lu

Existing knowledge distillation works for semantic segmentation mainly focus on transferring high-level contextual knowledge from teacher to student.

Attention-based Class Activation Diffusion for Weakly-Supervised Semantic Segmentation

no code implementations • 20 Nov 2022 • Jianqiang Huang, Jian Wang, Qianru Sun, Hanwang Zhang

An intuitive solution is "coupling" the CAM with the long-range attention matrix of visual transformers (ViT). We find that the direct "coupling", e.g., pixel-wise multiplication of attention and activation, achieves a more global coverage (on the foreground), but unfortunately goes with a great increase of false positives, i.e., background pixels are mistakenly included.

Weakly supervised Semantic Segmentation

Weakly-Supervised Semantic Segmentation

On Mitigating Hard Clusters for Face Clustering

1 code implementation • 25 Jul 2022 • Yingjie Chen, Huasong Zhong, Chong Chen, Chen Shen, Jianqiang Huang, Tao Wang, Yun Liang, Qianru Sun

Face clustering is a promising way to scale up face recognition systems using large-scale unlabeled face images.

Rethinking IoU-based Optimization for Single-stage 3D Object Detection

1 code implementation • 19 Jul 2022 • Hualian Sheng, Sijia Cai, Na Zhao, Bing Deng, Jianqiang Huang, Xian-Sheng Hua, Min-Jian Zhao, Gim Hee Lee

Since Intersection-over-Union (IoU) based optimization maintains the consistency of the final IoU prediction metric and losses, it has been widely used in both regression and classification branches of single-stage 2D object detectors.

Spatial Likelihood Voting with Self-Knowledge Distillation for Weakly Supervised Object Detection

no code implementations • 14 Apr 2022 • Ze Chen, Zhihang Fu, Jianqiang Huang, Mingyuan Tao, Rongxin Jiang, Xiang Tian, Yaowu Chen, Xian-Sheng Hua

The likelihood maps generated by the SLV module are used to supervise the feature learning of the backbone network, encouraging the network to attend to wider and more diverse areas of the image.

Online Convolutional Re-parameterization

1 code implementation • CVPR 2022 • Mu Hu, Junyi Feng, Jiashen Hua, Baisheng Lai, Jianqiang Huang, Xiaojin Gong, Xiansheng Hua

Structural re-parameterization has drawn increasing attention in various computer vision tasks.

Homography Loss for Monocular 3D Object Detection

1 code implementation • CVPR 2022 • Jiaqi Gu, Bojian Wu, Lubin Fan, Jianqiang Huang, Shen Cao, Zhiyu Xiang, Xian-Sheng Hua

Monocular 3D object detection is an essential task in autonomous driving.

Dynamic Supervisor for Cross-dataset Object Detection

no code implementations • 1 Apr 2022 • Ze Chen, Zhihang Fu, Jianqiang Huang, Mingyuan Tao, Shengyu Li, Rongxin Jiang, Xiang Tian, Yaowu Chen, Xian-Sheng Hua

The application of cross-dataset training in object detection tasks is complicated because the inconsistency in the category range across datasets transforms fully supervised learning into semi-supervised learning.

Continual Learning for CTR Prediction: A Hybrid Approach

no code implementations • 18 Jan 2022 • Ke Hu, Yi Qi, Jianqiang Huang, Jia Cheng, Jun Lei

To address this problem, we formulate CTR prediction as a continual learning task and propose COLF, a hybrid COntinual Learning Framework for CTR prediction, which has a memory-based modular architecture that is designed to adapt, learn and give predictions continuously when faced with non-stationary drifting click data streams.

MPC: Multi-View Probabilistic Clustering

no code implementations • CVPR 2022 • Junjie Liu, Junlong Liu, Shaotian Yan, Rongxin Jiang, Xiang Tian, Boxuan Gu, Yaowu Chen, Chen Shen, Jianqiang Huang

Despite the promising progress that has been made, two challenges of multi-view clustering (MVC) still await better solutions: (i) most existing methods are either not qualified or require additional steps for incomplete multi-view clustering, and (ii) noise or outliers might significantly degrade the overall clustering performance.

Meta Convolutional Neural Networks for Single Domain Generalization

no code implementations • CVPR 2022 • Chaoqun Wan, Xu Shen, Yonggang Zhang, Zhiheng Yin, Xinmei Tian, Feng Gao, Jianqiang Huang, Xian-Sheng Hua

Taking meta features as reference, we propose compositional operations to eliminate irrelevant features of local convolutional features by an addressing process and then to reformulate the convolutional feature maps as a composition of related meta features.

Ranked #4 on Single-Source Domain Generalization on Digits-five

Balanced and Hierarchical Relation Learning for One-Shot Object Detection

1 code implementation • CVPR 2022 • Hanqing Yang, Sijia Cai, Hualian Sheng, Bing Deng, Jianqiang Huang, Xian-Sheng Hua, Yong Tang, Yu Zhang

In this paper, we introduce balanced and hierarchical learning for our detector.

Deconfounded Visual Grounding

no code implementations • 31 Dec 2021 • Jianqiang Huang, Yu Qin, Jiaxin Qi, Qianru Sun, Hanwang Zhang

We focus on the confounding bias between language and location in the visual grounding pipeline, where we find that the bias is the major visual reasoning bottleneck.

AutoHEnsGNN: Winning Solution to AutoGraph Challenge for KDD Cup 2020

1 code implementation • 25 Nov 2021 • Jin Xu, Mingjian Chen, Jianqiang Huang, Xingyuan Tang, Ke Hu, Jian Li, Jia Cheng, Jun Lei

Graph Neural Networks (GNNs) have become increasingly popular and achieved impressive results in many graph-based applications.

Meta Clustering Learning for Large-scale Unsupervised Person Re-identification

no code implementations • 19 Nov 2021 • Xin Jin, Tianyu He, Xu Shen, Tongliang Liu, Xinchao Wang, Jianqiang Huang, Zhibo Chen, Xian-Sheng Hua

Unsupervised Person Re-identification (U-ReID) with pseudo labeling has recently reached competitive performance compared to fully-supervised ReID methods, based on modern clustering algorithms.

Self-Supervised Learning Disentangled Group Representation as Feature

1 code implementation • NeurIPS 2021 • Tan Wang, Zhongqi Yue, Jianqiang Huang, Qianru Sun, Hanwang Zhang

A good visual representation is an inference map from observations (images) to features (vectors) that faithfully reflects the hidden modularized generative factors (semantics).

Density-Based Clustering with Kernel Diffusion

no code implementations • 11 Oct 2021 • Chao Zheng, Yingjie Chen, Chong Chen, Jianqiang Huang, Xian-Sheng Hua

Finding a suitable density function is essential for density-based clustering algorithms such as DBSCAN and DPC.

Networked Time Series Prediction with Incomplete Data via Generative Adversarial Network

no code implementations • 5 Oct 2021 • Yichen Zhu, Bo Jiang, Haiming Jin, Mengtian Zhang, Feng Gao, Jianqiang Huang, Tao Lin, Xinbing Wang

An important task in such applications is to predict the future values of a NETS based on its historical values and the underlying graph.

Unleash the Potential of Adaptation Models via Dynamic Domain Labels

no code implementations • 29 Sep 2021 • Xin Jin, Tianyu He, Xu Shen, Songhua Wu, Tongliang Liu, Xinchao Wang, Jianqiang Huang, Zhibo Chen, Xian-Sheng Hua

In this paper, we propose an embarrassingly simple yet highly effective adversarial domain adaptation (ADA) method for training models for alignment.

AutoSmart: An Efficient and Automatic Machine Learning framework for Temporal Relational Data

1 code implementation • 9 Sep 2021 • Zhipeng Luo, Zhixing He, Jin Wang, Manqing Dong, Jianqiang Huang, Mingjian Chen, Bohang Zheng

Temporal relational data, perhaps the most commonly used data type in industrial machine learning applications, needs labor-intensive feature engineering and data analysis to give precise model predictions.

Improving 3D Object Detection with Channel-wise Transformer

1 code implementation • ICCV 2021 • Hualian Sheng, Sijia Cai, YuAn Liu, Bing Deng, Jianqiang Huang, Xian-Sheng Hua, Min-Jian Zhao

Though 3D object detection from point clouds has achieved rapid progress in recent years, the lack of flexible and high-performance proposal refinement remains a great hurdle for existing state-of-the-art two-stage detectors.

Aug3D-RPN: Improving Monocular 3D Object Detection by Synthetic Images with Virtual Depth

no code implementations • 28 Jul 2021 • Chenhang He, Jianqiang Huang, Xian-Sheng Hua, Lei Zhang

Current geometry-based monocular 3D object detection models can efficiently detect objects by leveraging perspective geometry, but their performance is limited due to the absence of accurate depth information.

Revisiting Knowledge Distillation: An Inheritance and Exploration Framework

1 code implementation • CVPR 2021 • Zhen Huang, Xu Shen, Jun Xing, Tongliang Liu, Xinmei Tian, Houqiang Li, Bing Deng, Jianqiang Huang, Xian-Sheng Hua

The inheritance part is learned with a similarity loss to transfer the existing learned knowledge from the teacher model to the student model, while the exploration part is encouraged to learn representations different from the inherited ones with a dis-similarity loss.

Partial Person Re-Identification With Part-Part Correspondence Learning

no code implementations • CVPR 2021 • Tianyu He, Xu Shen, Jianqiang Huang, Zhibo Chen, Xian-Sheng Hua

Driven by the success of deep learning, the last decade has seen rapid advances in person re-identification (re-ID).

Deep Position-wise Interaction Network for CTR Prediction

1 code implementation • 10 Jun 2021 • Jianqiang Huang, Ke Hu, Qingtao Tang, Mingjian Chen, Yi Qi, Jia Cheng, Jun Lei

Click-through rate (CTR) prediction plays an important role in online advertising and recommender systems.

Criterion-based Heterogeneous Collaborative Filtering for Multi-behavior Implicit Recommendation

1 code implementation • 25 May 2021 • Xiao Luo, Daqing Wu, Yiyang Gu, Chong Chen, Luchen Liu, Jinwen Ma, Ming Zhang, Minghua Deng, Jianqiang Huang, Xian-Sheng Hua

Besides, CHCF integrates criterion learning and user preference learning into a unified framework, which can be trained jointly for the interaction prediction of the target behavior.

Discriminative-Generative Dual Memory Video Anomaly Detection

no code implementations • 29 Apr 2021 • Xin Guo, Zhongming Jin, Chong Chen, Helei Nie, Jianqiang Huang, Deng Cai, Xiaofei He, Xiansheng Hua

In this paper, we propose a DiscRiminative-gEnerative duAl Memory (DREAM) anomaly detection model to take advantage of a few anomalies and solve data imbalance.

Graph Contrastive Clustering

1 code implementation • ICCV 2021 • Huasong Zhong, Jianlong Wu, Chong Chen, Jianqiang Huang, Minghua Deng, Liqiang Nie, Zhouchen Lin, Xian-Sheng Hua

On the other hand, a novel graph-based contrastive learning strategy is proposed to learn more compact clustering assignments.

Half-Real Half-Fake Distillation for Class-Incremental Semantic Segmentation

no code implementations • 2 Apr 2021 • Zilong Huang, Wentian Hao, Xinggang Wang, Mingyuan Tao, Jianqiang Huang, Wenyu Liu, Xian-Sheng Hua

Despite their success for semantic segmentation, convolutional neural networks are ill-equipped for incremental learning, i.e., adapting the original segmentation model as new classes are available but the initial training data is not retained.

Class-Incremental Semantic Segmentation

Incremental Learning

Cloth-Changing Person Re-identification from A Single Image with Gait Prediction and Regularization

1 code implementation • CVPR 2022 • Xin Jin, Tianyu He, Kecheng Zheng, Zhiheng Yin, Xu Shen, Zhen Huang, Ruoyu Feng, Jianqiang Huang, Xian-Sheng Hua, Zhibo Chen

Specifically, we introduce gait recognition as an auxiliary task to drive the image ReID model to learn cloth-agnostic representations by leveraging personal unique and cloth-independent gait information; we name this framework GI-ReID.

Ranked #5 on Person Re-Identification on PRCC

Cloth-Changing Person Re-Identification

Computational Efficiency

The Blessings of Unlabeled Background in Untrimmed Videos

1 code implementation • CVPR 2021 • YuAn Liu, Jingyuan Chen, Zhenfang Chen, Bing Deng, Jianqiang Huang, Hanwang Zhang

The key challenge is how to distinguish the action-of-interest segments from the background, which is unlabelled even at the video level.

Weakly-supervised Temporal Action Localization

Weakly Supervised Temporal Action Localization

Dense Interaction Learning for Video-based Person Re-identification

no code implementations • ICCV 2021 • Tianyu He, Xin Jin, Xu Shen, Jianqiang Huang, Zhibo Chen, Xian-Sheng Hua

The CNN encoder is responsible for efficiently extracting discriminative spatial features while the DI decoder is designed to densely model spatial-temporal inherent interaction across frames.

Ranked #1 on Person Re-Identification on DukeMTMC-reID

Video Object Segmentation With Dynamic Memory Networks and Adaptive Object Alignment

1 code implementation • ICCV 2021 • Shuxian Liang, Xu Shen, Jianqiang Huang, Xian-Sheng Hua

In this paper, we propose a novel solution for object-matching based semi-supervised video object segmentation, where the target object masks in the first frame are provided.

3D Local Convolutional Neural Networks for Gait Recognition

1 code implementation • ICCV 2021 • Zhen Huang, Dixiu Xue, Xu Shen, Xinmei Tian, Houqiang Li, Jianqiang Huang, Xian-Sheng Hua

Second, different body parts possess different scales, and even the same part in different frames can appear at different locations and scales.

Ranked #2 on Gait Recognition on OUMVLP

VideoFlow: A Framework for Building Visual Analysis Pipelines

no code implementations • 1 Jan 2021 • Yue Wu, Jianqiang Huang, Jiangjie Zhen, Guokun Wang, Chen Shen, Chang Zhou, Xian-Sheng Hua

The past years have witnessed an explosion of deep learning frameworks like PyTorch and TensorFlow since the success of deep neural networks.

Camera-aware Proxies for Unsupervised Person Re-Identification

1 code implementation • 19 Dec 2020 • Menglin Wang, Baisheng Lai, Jianqiang Huang, Xiaojin Gong, Xian-Sheng Hua

These camera-aware proxies enable us to deal with large intra-ID variance and generate more reliable pseudo labels for learning.

Learning to Generate Content-Aware Dynamic Detectors

no code implementations • 8 Dec 2020 • Junyi Feng, Jiashen Hua, Baisheng Lai, Jianqiang Huang, Xi Li, Xian-Sheng Hua

To the best of our knowledge, our CADDet is the first work to introduce a dynamic routing mechanism in object detection.

Spatio-Temporal Inception Graph Convolutional Networks for Skeleton-Based Action Recognition

1 code implementation • 26 Nov 2020 • Zhen Huang, Xu Shen, Xinmei Tian, Houqiang Li, Jianqiang Huang, Xian-Sheng Hua

The topology of the adjacency graph is a key factor for modeling the correlations of the input skeletons.

DCT-Mask: Discrete Cosine Transform Mask Representation for Instance Segmentation

1 code implementation • CVPR 2021 • Xing Shen, Jirui Yang, Chunbo Wei, Bing Deng, Jianqiang Huang, Xiansheng Hua, Xiaoliang Cheng, Kewei Liang

Generally, a low-resolution grid is not sufficient to capture the details, while a high-resolution grid dramatically increases the training complexity.

Tracklets Predicting Based Adaptive Graph Tracking

no code implementations • 18 Oct 2020 • Chaobing Shan, Chunbo Wei, Bing Deng, Jianqiang Huang, Xian-Sheng Hua, Xiaoliang Cheng, Kewei Liang

It re-extracts the features of the tracklets in the current frame based on motion prediction, which is the key to solving the problem of feature inconsistency.

CIMON: Towards High-quality Hash Codes

no code implementations • 15 Oct 2020 • Xiao Luo, Daqing Wu, Zeyu Ma, Chong Chen, Minghua Deng, Jinwen Ma, Zhongming Jin, Jianqiang Huang, Xian-Sheng Hua

However, due to the inefficient representation ability of the pre-trained model, many false positives and negatives in local semantic similarity will be introduced and lead to error propagation during the hash code learning.

Long-Tailed Classification by Keeping the Good and Removing the Bad Momentum Causal Effect

2 code implementations • NeurIPS 2020 • Kaihua Tang, Jianqiang Huang, Hanwang Zhang

On one hand, it has a harmful causal effect that misleads the tail prediction biased towards the head.

Ranked #36 on Long-tail Learning on CIFAR-10-LT (ρ=10)

PCPL: Predicate-Correlation Perception Learning for Unbiased Scene Graph Generation

1 code implementation • 2 Sep 2020 • Shaotian Yan, Chen Shen, Zhongming Jin, Jianqiang Huang, Rongxin Jiang, Yaowu Chen, Xian-Sheng Hua

Today, the scene graph generation (SGG) task is largely limited in realistic scenarios, mainly due to the extremely long-tailed bias of the predicate annotation distribution.

Ranked #4 on Unbiased Scene Graph Generation on Visual Genome

Apparel-invariant Feature Learning for Apparel-changed Person Re-identification

no code implementations • 14 Aug 2020 • Zhengxu Yu, Yilun Zhao, Bin Hong, Zhongming Jin, Jianqiang Huang, Deng Cai, Xiaofei He, Xian-Sheng Hua

Therefore, it is critical to learn an apparel-invariant person representation under cases like cloth changing or several persons wearing similar clothes.

Out-of-distribution Generalization via Partial Feature Decorrelation

no code implementations • 30 Jul 2020 • Xin Guo, Zhengxu Yu, Chao Xiang, Zhongming Jin, Jianqiang Huang, Deng Cai, Xiaofei He, Xian-Sheng Hua

Most deep-learning-based image classification methods assume that all samples are generated under an independent and identically distributed (IID) setting.

Joint Auction-Coalition Formation Framework for Communication-Efficient Federated Learning in UAV-Enabled Internet of Vehicles

no code implementations • 13 Jul 2020 • Jer Shyuan Ng, Wei Yang Bryan Lim, Hong-Ning Dai, Zehui Xiong, Jianqiang Huang, Dusit Niyato, Xian-Sheng Hua, Cyril Leung, Chunyan Miao

The simulation results show that the grand coalition, where all UAVs join a single coalition, is not always stable due to the profit-maximizing behavior of the UAVs.

Networking and Internet Architecture • Signal Processing

Towards Federated Learning in UAV-Enabled Internet of Vehicles: A Multi-Dimensional Contract-Matching Approach

no code implementations • 8 Apr 2020 • Wei Yang Bryan Lim, Jianqiang Huang, Zehui Xiong, Jiawen Kang, Dusit Niyato, Xian-Sheng Hua, Cyril Leung, Chunyan Miao

Coupled with the rise of Deep Learning, the wealth of data and enhanced computation capabilities of Internet of Vehicles (IoV) components enable effective Artificial Intelligence (AI) based models to be built.

Signal Processing • Networking and Internet Architecture

Gradient Centralization: A New Optimization Technique for Deep Neural Networks

7 code implementations • ECCV 2020 • Hongwei Yong, Jianqiang Huang, Xian-Sheng Hua, Lei Zhang

It has been shown that using the first and second order statistics (e.g., mean and variance) to perform Z-score standardization on network activations or weight vectors, such as batch normalization (BN) and weight standardization (WS), can improve the training performance.

A Survey on Deep Hashing Methods

no code implementations • 4 Mar 2020 • Xiao Luo, Haixin Wang, Daqing Wu, Chong Chen, Minghua Deng, Jianqiang Huang, Xian-Sheng Hua

Nearest neighbor search aims to retrieve the samples in the database with the smallest distances to the queries, which is a basic task in a range of fields, including computer vision and data mining.

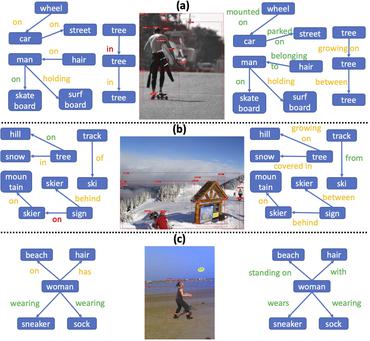

Unbiased Scene Graph Generation from Biased Training

6 code implementations • CVPR 2020 • Kaihua Tang, Yulei Niu, Jianqiang Huang, Jiaxin Shi, Hanwang Zhang

Today's scene graph generation (SGG) task is still far from practical, mainly due to the severe training bias, e.g., collapsing diverse "human walk on / sit on / lay on beach" into "human on beach".

Ranked #3 on Scene Graph Generation on Visual Genome

Visual Commonsense R-CNN

1 code implementation • CVPR 2020 • Tan Wang, Jianqiang Huang, Hanwang Zhang, Qianru Sun

We present a novel unsupervised feature representation learning method, Visual Commonsense Region-based Convolutional Neural Network (VC R-CNN), to serve as an improved visual region encoder for high-level tasks such as captioning and VQA.

Ranked #23 on Image Captioning on COCO Captions

Towards Precise Intra-camera Supervised Person Re-identification

no code implementations • 12 Feb 2020 • Menglin Wang, Baisheng Lai, Haokun Chen, Jianqiang Huang, Xiaojin Gong, Xian-Sheng Hua

Our approach even performs comparably to state-of-the-art fully supervised methods on two of the datasets.

Two Causal Principles for Improving Visual Dialog

1 code implementation • CVPR 2020 • Jiaxin Qi, Yulei Niu, Jianqiang Huang, Hanwang Zhang

This paper unravels the design tricks adopted by us, the champion team MReaL-BDAI, for Visual Dialog Challenge 2019: two causal principles for improving Visual Dialog (VisDial).

Quantization Networks

1 code implementation • CVPR 2019 • Jiwei Yang, Xu Shen, Jun Xing, Xinmei Tian, Houqiang Li, Bing Deng, Jianqiang Huang, Xian-Sheng Hua

The proposed quantization function can be learned in a lossless and end-to-end manner and works for any weights and activations of neural networks in a simple and uniform way.

Neural News Recommendation with Heterogeneous User Behavior

no code implementations • IJCNLP 2019 • Chuhan Wu, Fangzhao Wu, Mingxiao An, Tao Qi, Jianqiang Huang, Yongfeng Huang, Xing Xie

In the user representation module, we propose an attentive multi-view learning framework to learn unified representations of users from their heterogeneous behaviors such as search queries, clicked news and browsed webpages.

Progressive Transfer Learning

1 code implementation • 7 Aug 2019 • Zhengxu Yu, Dong Shen, Zhongming Jin, Jianqiang Huang, Deng Cai, Xian-Sheng Hua

Model fine-tuning is a widely used transfer learning approach in person Re-identification (ReID) applications, which fine-tunes a pre-trained feature extraction model for the target scenario instead of training a model from scratch.

NPA: Neural News Recommendation with Personalized Attention

no code implementations • 12 Jul 2019 • Chuhan Wu, Fangzhao Wu, Mingxiao An, Jianqiang Huang, Yongfeng Huang, Xing Xie

Since different words and different news articles may have different informativeness for representing news and users, we propose to apply both word- and news-level attention mechanisms to help our model attend to important words and news articles.

Neural News Recommendation with Attentive Multi-View Learning

5 code implementations • 12 Jul 2019 • Chuhan Wu, Fangzhao Wu, Mingxiao An, Jianqiang Huang, Yongfeng Huang, Xing Xie

In the user encoder, we learn the representations of users based on their browsed news and apply an attention mechanism to select informative news for user representation learning.

Ranked #6 on News Recommendation on MIND

Deep Active Learning for Video-based Person Re-identification

no code implementations • 14 Dec 2018 • Menglin Wang, Baisheng Lai, Zhongming Jin, Xiaojin Gong, Jianqiang Huang, Xian-Sheng Hua

With the gained annotations of the actively selected candidates, the tracklets' pseudo labels are updated by label merging and further used to re-train our re-ID model.

Dynamic Spatio-temporal Graph-based CNNs for Traffic Prediction

no code implementations • 5 Dec 2018 • Ken Chen, Fei Chen, Baisheng Lai, Zhongming Jin, Yong Liu, Kai Li, Long Wei, Pengfei Wang, Yandong Tang, Jianqiang Huang, Xian-Sheng Hua

To capture the graph dynamics, we use the graph prediction stream to predict the dynamic graph structures, and the predicted structures are fed into the flow prediction stream.

Homocentric Hypersphere Feature Embedding for Person Re-identification

no code implementations • 24 Apr 2018 • Wangmeng Xiang, Jianqiang Huang, Xianbiao Qi, Xian-Sheng Hua, Lei Zhang

Person re-identification (Person ReID) is a challenging task due to the large variations in camera viewpoint, lighting, resolution, and human pose.