Search Results for author: Ming-Hsuan Yang

Found 353 papers, 176 papers with code

Visual Tracking via Locality Sensitive Histograms

no code implementations • CVPR 2013 • Shengfeng He, Qingxiong Yang, Rynson W. H. Lau, Jiang Wang, Ming-Hsuan Yang

A robust tracking framework based on locality sensitive histograms is proposed, which consists of two main components: a new feature for tracking that is robust to illumination changes and a novel multi-region tracking algorithm that runs in real time even with hundreds of regions.

Online Object Tracking: A Benchmark

no code implementations • CVPR 2013 • Yi Wu, Jongwoo Lim, Ming-Hsuan Yang

Object tracking is one of the most important components in numerous applications of computer vision.

Structured Face Hallucination

no code implementations • CVPR 2013 • Chih-Yuan Yang, Sifei Liu, Ming-Hsuan Yang

Each face image is represented in terms of facial components, contours and smooth regions.

Saliency Detection via Graph-Based Manifold Ranking

no code implementations • CVPR 2013 • Chuan Yang, Lihe Zhang, Huchuan Lu, Xiang Ruan, Ming-Hsuan Yang

The saliency of the image elements is defined based on their relevance to the given seeds or queries.

Least Soft-Threshold Squares Tracking

no code implementations • CVPR 2013 • Dong Wang, Huchuan Lu, Ming-Hsuan Yang

In this paper, we propose a generative tracking method based on a novel robust linear regression algorithm.

Parallel Coordinate Descent Newton Method for Efficient $\ell_1$-Regularized Minimization

1 code implementation • 18 Jun 2013 • An Bian, Xiong Li, Yuncai Liu, Ming-Hsuan Yang

We show that: (1) PCDN is guaranteed to converge globally despite increasing parallelism; (2) PCDN converges to the specified accuracy $\epsilon$ within the limited iteration number of $T_\epsilon$, and $T_\epsilon$ decreases with increasing parallelism (bundle size $P$).

Fast Tracking via Spatio-Temporal Context Learning

no code implementations • 8 Nov 2013 • Kaihua Zhang, Lei Zhang, Ming-Hsuan Yang, David Zhang

In this paper, we present a simple yet fast and robust algorithm which exploits the spatio-temporal context for visual tracking.

Max-Margin Boltzmann Machines for Object Segmentation

no code implementations • CVPR 2014 • Jimei Yang, Simon Safar, Ming-Hsuan Yang

We present Max-Margin Boltzmann Machines (MMBMs) for object segmentation.

Deblurring Text Images via L0-Regularized Intensity and Gradient Prior

no code implementations • CVPR 2014 • Jinshan Pan, Zhe Hu, Zhixun Su, Ming-Hsuan Yang

We propose a simple yet effective $L_0$-regularized prior based on intensity and gradient for text image deblurring.

Joint Depth Estimation and Camera Shake Removal from Single Blurry Image

no code implementations • CVPR 2014 • Zhe Hu, Li Xu, Ming-Hsuan Yang

The non-uniform blur effect is not only caused by the camera motion, but also the depth variation of the scene.

Context Driven Scene Parsing with Attention to Rare Classes

no code implementations • CVPR 2014 • Jimei Yang, Brian Price, Scott Cohen, Ming-Hsuan Yang

This paper presents a scalable scene parsing algorithm based on image retrieval and superpixel matching.

Deblurring Low-light Images with Light Streaks

no code implementations • CVPR 2014 • Zhe Hu, Sunghyun Cho, Jue Wang, Ming-Hsuan Yang

Images taken in low-light conditions with handheld cameras are often blurry due to the required long exposure time.

Ranked #11 on Deblurring on RealBlur-R (trained on GoPro)

Robust Visual Tracking via Convolutional Networks

no code implementations • 19 Jan 2015 • Kaihua Zhang, Qingshan Liu, Yi Wu, Ming-Hsuan Yang

In this paper, we show that, even without offline training with a large amount of auxiliary data, simple two-layer convolutional networks can be powerful enough to develop a robust representation for visual tracking.

Multi-Objective Convolutional Learning for Face Labeling

no code implementations • CVPR 2015 • Sifei Liu, Jimei Yang, Chang Huang, Ming-Hsuan Yang

This paper formulates face labeling as a conditional random field with unary and pairwise classifiers.

Multi-Instance Object Segmentation With Occlusion Handling

no code implementations • CVPR 2015 • Yi-Ting Chen, Xiaokai Liu, Ming-Hsuan Yang

We present a multi-instance object segmentation algorithm to tackle occlusions.

Adaptive Region Pooling for Object Detection

no code implementations • CVPR 2015 • Yi-Hsuan Tsai, Onur C. Hamsici, Ming-Hsuan Yang

Learning models for object detection is a challenging problem due to the large intra-class variability of objects in appearance, viewpoints, and rigidity.

PatchCut: Data-Driven Object Segmentation via Local Shape Transfer

no code implementations • CVPR 2015 • Jimei Yang, Brian Price, Scott Cohen, Zhe Lin, Ming-Hsuan Yang

The transferred local shape masks constitute a patch-level segmentation solution space and we thus develop a novel cascade algorithm, PatchCut, for coarse-to-fine object segmentation.

Long-Term Correlation Tracking

no code implementations • CVPR 2015 • Chao Ma, Xiaokang Yang, Chongyang Zhang, Ming-Hsuan Yang

In this paper, we address the problem of long-term visual tracking where the target objects undergo significant appearance variation due to deformation, abrupt motion, heavy occlusion, and moving out of view.

Deep Networks for Saliency Detection via Local Estimation and Global Search

no code implementations • CVPR 2015 • Lijun Wang, Huchuan Lu, Xiang Ruan, Ming-Hsuan Yang

In the global search stage, the local saliency map together with global contrast and geometric information are used as global features to describe a set of object candidate regions.

Structural Sparse Tracking

no code implementations • CVPR 2015 • Tianzhu Zhang, Si Liu, Changsheng Xu, Shuicheng Yan, Bernard Ghanem, Narendra Ahuja, Ming-Hsuan Yang

Sparse representation has been applied to visual tracking by finding the best target candidate with minimal reconstruction error by use of target templates.

JOTS: Joint Online Tracking and Segmentation

no code implementations • CVPR 2015 • Longyin Wen, Dawei Du, Zhen Lei, Stan Z. Li, Ming-Hsuan Yang

We present a novel Joint Online Tracking and Segmentation (JOTS) algorithm which integrates the multi-part tracking and segmentation into a unified energy optimization framework to handle the video segmentation task.

Salient Object Detection via Bootstrap Learning

no code implementations • CVPR 2015 • Na Tong, Huchuan Lu, Xiang Ruan, Ming-Hsuan Yang

Furthermore, we show that the proposed bootstrap learning approach can be easily applied to other bottom-up saliency models for significant improvement.

To Know Where We Are: Vision-Based Positioning in Outdoor Environments

no code implementations • 19 Jun 2015 • Kuan-Wen Chen, Chun-Hsin Wang, Xiao Wei, Qiao Liang, Ming-Hsuan Yang, Chu-Song Chen, Yi-Ping Hung

Augmented reality (AR) displays have become increasingly popular recently because they are highly intuitive for humans and because high-quality head-mounted displays have developed rapidly.

DeepSaliency: Multi-Task Deep Neural Network Model for Salient Object Detection

no code implementations • 19 Oct 2015 • Xi Li, Liming Zhao, Lina Wei, Ming-Hsuan Yang, Fei Wu, Yueting Zhuang, Haibin Ling, Jingdong Wang

A key problem in salient object detection is how to effectively model the semantic properties of salient objects in a data-driven manner.

UA-DETRAC: A New Benchmark and Protocol for Multi-Object Detection and Tracking

no code implementations • 13 Nov 2015 • Longyin Wen, Dawei Du, Zhaowei Cai, Zhen Lei, Ming-Ching Chang, Honggang Qi, Jongwoo Lim, Ming-Hsuan Yang, Siwei Lyu

In this work, we perform a comprehensive quantitative study of the effect of object detection accuracy on overall MOT performance, using the new large-scale University at Albany DETection and tRACking (UA-DETRAC) benchmark dataset.

Fast and Accurate Head Pose Estimation via Random Projection Forests

no code implementations • ICCV 2015 • Donghoon Lee, Ming-Hsuan Yang, Songhwai Oh

In this paper, we consider the problem of estimating the gaze direction of a person from a low-resolution image.

What Makes an Object Memorable?

no code implementations • ICCV 2015 • Rachit Dubey, Joshua Peterson, Aditya Khosla, Ming-Hsuan Yang, Bernard Ghanem

We augment both the images and object segmentations from the PASCAL-S dataset with ground truth memorability scores and shed light on the various factors and properties that make an object memorable (or forgettable) to humans.

Hierarchical Convolutional Features for Visual Tracking

no code implementations • ICCV 2015 • Chao Ma, Jia-Bin Huang, Xiaokang Yang, Ming-Hsuan Yang

The outputs of the last convolutional layers encode the semantic information of targets and such representations are robust to significant appearance variations.

Weakly-supervised Disentangling with Recurrent Transformations for 3D View Synthesis

no code implementations • NeurIPS 2015 • Jimei Yang, Scott Reed, Ming-Hsuan Yang, Honglak Lee

An important problem for both graphics and vision is to synthesize novel views of a 3D object from a single image.

Learning Support Correlation Filters for Visual Tracking

no code implementations • 22 Jan 2016 • Wangmeng Zuo, Xiaohe Wu, Liang Lin, Lei Zhang, Ming-Hsuan Yang

Sampling and budgeting training examples are two essential factors in tracking algorithms based on support vector machines (SVMs) as a trade-off between accuracy and efficiency.

Object Contour Detection with a Fully Convolutional Encoder-Decoder Network

3 code implementations • CVPR 2016 • Jimei Yang, Brian Price, Scott Cohen, Honglak Lee, Ming-Hsuan Yang

We develop a deep learning algorithm for contour detection with a fully convolutional encoder-decoder network.

Superpixel Hierarchy

1 code implementation • 20 May 2016 • Xing Wei, Qingxiong Yang, Yihong Gong, Ming-Hsuan Yang, Narendra Ahuja

Quantitative and qualitative evaluation on a number of computer vision applications was conducted, demonstrating that the proposed method is the top performer.

Soft-Segmentation Guided Object Motion Deblurring

no code implementations • CVPR 2016 • Jinshan Pan, Zhe Hu, Zhixun Su, Hsin-Ying Lee, Ming-Hsuan Yang

To address these problems, we propose a novel model for object motion deblurring.

Online Multi-Object Tracking via Structural Constraint Event Aggregation

no code implementations • CVPR 2016 • Ju Hong Yoon, Chang-Ryeol Lee, Ming-Hsuan Yang, Kuk-Jin Yoon

In addition, to further improve the robustness of data association against mis-detections and clutter, a novel event aggregation approach is developed to integrate structural constraints in assignment costs for online MOT.

Ranked #21 on Multiple Object Tracking on KITTI Tracking test

Object Tracking via Dual Linear Structured SVM and Explicit Feature Map

no code implementations • CVPR 2016 • Jifeng Ning, Jimei Yang, Shaojie Jiang, Lei Zhang, Ming-Hsuan Yang

Structured support vector machine (SSVM) based methods have demonstrated encouraging performance in recent object tracking benchmarks.

Hedged Deep Tracking

no code implementations • CVPR 2016 • Yuankai Qi, Shengping Zhang, Lei Qin, Hongxun Yao, Qingming Huang, Jongwoo Lim, Ming-Hsuan Yang

In recent years, several methods have been developed to utilize hierarchical features learned from a deep convolutional neural network (CNN) for visual tracking.

Image Deblurring Using Smartphone Inertial Sensors

no code implementations • CVPR 2016 • Zhe Hu, Lu Yuan, Stephen Lin, Ming-Hsuan Yang

Removing image blur caused by camera shake is an ill-posed problem, as both the latent image and the point spread function (PSF) are unknown.

Blind Image Deblurring Using Dark Channel Prior

no code implementations • CVPR 2016 • Jinshan Pan, Deqing Sun, Hanspeter Pfister, Ming-Hsuan Yang

Therefore, enforcing the sparsity of the dark channel helps blind deblurring on various scenarios, including natural, face, text, and low-illumination images.
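As a rough illustration of the quantity mentioned above, the sketch below computes a dark channel (the minimum over color channels within a local patch) and a simple sparsity measure for it. The function names, the nested-list image layout, the patch radius, and the use of a non-zero count as the sparsity measure are all assumptions made for this example, not details taken from the listing.

```python
def dark_channel(image, patch_radius=1):
    """Return the dark channel of an image.

    `image` is a list of rows, each row a list of (r, g, b) tuples.
    The dark channel at a pixel is the minimum intensity over all
    channels and all pixels in a square patch around it (the patch is
    clipped at the image border).
    """
    height, width = len(image), len(image[0])
    # Minimum over the color channels at every pixel.
    pixel_min = [[min(pixel) for pixel in row] for row in image]
    dark = []
    for y in range(height):
        y0, y1 = max(0, y - patch_radius), min(height, y + patch_radius + 1)
        row = []
        for x in range(width):
            x0, x1 = max(0, x - patch_radius), min(width, x + patch_radius + 1)
            # Minimum over the local patch.
            row.append(min(pixel_min[j][i]
                           for j in range(y0, y1)
                           for i in range(x0, x1)))
        dark.append(row)
    return dark


def count_nonzero(dark, tol=1e-6):
    """Count dark-channel entries above `tol` (hypothetical sparsity measure)."""
    return sum(1 for row in dark for value in row if abs(value) > tol)


# A 3x3 toy image: every pixel is fairly bright except the center,
# which has one channel at zero.
bright = (0.5, 0.6, 0.7)
toy_image = [[bright, bright, bright],
             [bright, (0.0, 0.9, 0.9), bright],
             [bright, bright, bright]]

dark_r1 = dark_channel(toy_image, patch_radius=1)
dark_r0 = dark_channel(toy_image, patch_radius=0)
```

With a patch radius of 1, every patch in the toy image contains the center pixel, so the dark channel is zero everywhere; with radius 0 only the center entry is zero. A lower non-zero count means a sparser dark channel.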

Ranked #10 on Deblurring on RealBlur-R (trained on GoPro)

A Comparative Study for Single Image Blind Deblurring

no code implementations • CVPR 2016 • Wei-Sheng Lai, Jia-Bin Huang, Zhe Hu, Narendra Ahuja, Ming-Hsuan Yang

Using these datasets, we conduct a large-scale user study to quantify the performance of several representative state-of-the-art blind deblurring algorithms.

Weakly Supervised Object Localization With Progressive Domain Adaptation

no code implementations • CVPR 2016 • Dong Li, Jia-Bin Huang, Ya-Li Li, Shengjin Wang, Ming-Hsuan Yang

In this paper, we address this problem by progressive domain adaptation with two main steps: classification adaptation and detection adaptation.

Video Segmentation via Object Flow

no code implementations • CVPR 2016 • Yi-Hsuan Tsai, Ming-Hsuan Yang, Michael J. Black

Video object segmentation is challenging due to fast moving objects, deforming shapes, and cluttered backgrounds.

Ranked #74 on Semi-Supervised Video Object Segmentation on DAVIS 2016 (using extra training data)

Robust Kernel Estimation With Outliers Handling for Image Deblurring

no code implementations • CVPR 2016 • Jinshan Pan, Zhouchen Lin, Zhixun Su, Ming-Hsuan Yang

Estimating blur kernels from real world images is a challenging problem as the linear image formation assumption does not hold when significant outliers, such as saturated pixels and non-Gaussian noise, are present.

Visual Tracking via Boolean Map Representations

no code implementations • 30 Oct 2016 • Kaihua Zhang, Qingshan Liu, Ming-Hsuan Yang

In this paper, we present a simple yet effective Boolean map based representation that exploits connectivity cues for visual tracking.

Learning Fully Convolutional Networks for Iterative Non-blind Deconvolution

no code implementations • CVPR 2017 • Jiawei Zhang, Jinshan Pan, Wei-Sheng Lai, Rynson Lau, Ming-Hsuan Yang

In this paper, we propose a fully convolutional network for iterative non-blind deconvolution. We decompose the non-blind deconvolution problem into image denoising and image deconvolution.

Learning a No-Reference Quality Metric for Single-Image Super-Resolution

2 code implementations • 18 Dec 2016 • Chao Ma, Chih-Yuan Yang, Xiaokang Yang, Ming-Hsuan Yang

Numerous single-image super-resolution algorithms have been proposed in the literature, but few studies address the problem of performance evaluation based on visual perception.

Ranked #7 on Video Quality Assessment on MSU SR-QA Dataset

Dual Deep Network for Visual Tracking

1 code implementation • 19 Dec 2016 • Zhizhen Chi, Hongyang Li, Huchuan Lu, Ming-Hsuan Yang

In this paper, we propose a dual network to better utilize features among layers for visual tracking.

Online multi-object tracking via robust collaborative model and sample selection

1 code implementation • Computer Vision and Image Understanding 2017 • Mohamed A. Naiel, M. Omair Ahmad, M.N.S. Swamy, Jongwoo Lim, Ming-Hsuan Yang

For each frame, we construct an association between detections and trackers, and treat each detected image region as a key sample, for online update, if it is associated to a tracker.

Ranked #1 on Online Multi-Object Tracking on Oxford Town Center

Deep Image Harmonization

2 code implementations • CVPR 2017 • Yi-Hsuan Tsai, Xiaohui Shen, Zhe Lin, Kalyan Sunkavalli, Xin Lu, Ming-Hsuan Yang

Compositing is one of the most common operations in photo editing.

Diversified Texture Synthesis with Feed-forward Networks

no code implementations • CVPR 2017 • Yijun Li, Chen Fang, Jimei Yang, Zhaowen Wang, Xin Lu, Ming-Hsuan Yang

Recent progress in deep discriminative and generative modeling has shown promising results on texture synthesis.

Unsupervised Holistic Image Generation from Key Local Patches

1 code implementation • ECCV 2018 • Donghoon Lee, Sangdoo Yun, Sungjoon Choi, Hwiyeon Yoo, Ming-Hsuan Yang, Songhwai Oh

We introduce a new problem of generating an image based on a small number of key local patches without any geometric prior.

Semantic-driven Generation of Hyperlapse from $360^\circ$ Video

no code implementations • 31 Mar 2017 • Wei-Sheng Lai, Yujia Huang, Neel Joshi, Chris Buehler, Ming-Hsuan Yang, Sing Bing Kang

We present a system for converting a fully panoramic ($360^\circ$) video into a normal field-of-view (NFOV) hyperlapse for an optimal viewing experience.

Deep Laplacian Pyramid Networks for Fast and Accurate Super-Resolution

1 code implementation • CVPR 2017 • Wei-Sheng Lai, Jia-Bin Huang, Narendra Ahuja, Ming-Hsuan Yang

Convolutional neural networks have recently demonstrated high-quality reconstruction for single-image super-resolution.

Ranked #40 on Image Super-Resolution on BSD100 - 4x upscaling

Generative Face Completion

2 code implementations • CVPR 2017 • Yijun Li, Sifei Liu, Jimei Yang, Ming-Hsuan Yang

In this paper, we propose an effective face completion algorithm using a deep generative model.

Universal Style Transfer via Feature Transforms

15 code implementations • NeurIPS 2017 • Yijun Li, Chen Fang, Jimei Yang, Zhaowen Wang, Xin Lu, Ming-Hsuan Yang

The whitening and coloring transforms directly match the feature covariance of the content image to that of a given style image, which is similar in spirit to optimizing the Gram-matrix-based cost in neural style transfer.
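The covariance matching described above can be sketched with NumPy on generic feature matrices. This is a minimal illustration under stated assumptions, not the authors' implementation: the function name, the `(channels, positions)` layout, the `eps` regularizer, and the random toy inputs are all invented for the example, and the network that would produce and decode such features is omitted.

```python
import numpy as np


def whiten_and_color(content, style, eps=1e-5):
    """Match the channel covariance of `content` features to that of `style`.

    Both inputs have shape (channels, positions). `eps` is an assumed
    regularizer that keeps the covariance matrices invertible.
    """
    c_mean = content.mean(axis=1, keepdims=True)
    s_mean = style.mean(axis=1, keepdims=True)
    fc = content - c_mean
    fs = style - s_mean

    eye = np.eye(content.shape[0])
    cov_c = fc @ fc.T / (fc.shape[1] - 1) + eps * eye
    cov_s = fs @ fs.T / (fs.shape[1] - 1) + eps * eye

    # Eigendecompositions of the symmetric covariance matrices.
    wc, vc = np.linalg.eigh(cov_c)
    ws, vs = np.linalg.eigh(cov_s)

    # Whitening: remove the content correlations (covariance close to identity).
    whitened = vc @ np.diag(wc ** -0.5) @ vc.T @ fc
    # Coloring: impose the style correlations, then add the style mean back.
    colored = vs @ np.diag(ws ** 0.5) @ vs.T @ whitened
    return colored + s_mean


# Toy features: 3 channels, different numbers of positions.
rng = np.random.default_rng(0)
content = rng.normal(size=(3, 500))
mixing = np.array([[2.0, 0.5, 0.0],
                   [0.0, 1.0, 0.3],
                   [0.0, 0.0, 0.5]])
style = mixing @ rng.normal(size=(3, 400)) + 4.0

stylized = whiten_and_color(content, style)
```

After the transform, `np.cov(stylized)` should agree with `np.cov(style)` up to the small `eps` regularization, while the spatial layout of `stylized` still comes from `content`.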

Learning Structured Semantic Embeddings for Visual Recognition

no code implementations • 5 Jun 2017 • Dong Li, Hsin-Ying Lee, Jia-Bin Huang, Shengjin Wang, Ming-Hsuan Yang

First, we exploit the discriminative constraints to capture the intra- and inter-class relationships of image embeddings.

Learning Spatial-Aware Regressions for Visual Tracking

1 code implementation • CVPR 2018 • Chong Sun, Dong Wang, Huchuan Lu, Ming-Hsuan Yang

Second, we propose a fully convolutional neural network with spatially regularized kernels, through which the filter kernel corresponding to each output channel is forced to focus on a specific region of the target.

Ranked #12 on Visual Object Tracking on VOT2017/18

Multi-Task Correlation Particle Filter for Robust Object Tracking

no code implementations • CVPR 2017 • Tianzhu Zhang, Changsheng Xu, Ming-Hsuan Yang

In this paper, we propose a multi-task correlation particle filter (MCPF) for robust visual tracking.

Adaptive Correlation Filters with Long-Term and Short-Term Memory for Object Tracking

1 code implementation • 7 Jul 2017 • Chao Ma, Jia-Bin Huang, Xiaokang Yang, Ming-Hsuan Yang

Second, we learn a correlation filter over a feature pyramid centered at the estimated target position for predicting scale changes.

Robust Visual Tracking via Hierarchical Convolutional Features

1 code implementation • 12 Jul 2017 • Chao Ma, Jia-Bin Huang, Xiaokang Yang, Ming-Hsuan Yang

Specifically, we learn adaptive correlation filters on the outputs from each convolutional layer to encode the target appearance.

CREST: Convolutional Residual Learning for Visual Tracking

no code implementations • ICCV 2017 • Yibing Song, Chao Ma, Lijun Gong, Jiawei Zhang, Rynson Lau, Ming-Hsuan Yang

Our method integrates feature extraction, response map generation, and model update into the neural network for end-to-end training.

Unsupervised Representation Learning by Sorting Sequences

1 code implementation • ICCV 2017 • Hsin-Ying Lee, Jia-Bin Huang, Maneesh Singh, Ming-Hsuan Yang

We present an unsupervised representation learning approach using videos without semantic labels.

Ranked #46 on Self-Supervised Action Recognition on HMDB51

Face Parsing via Recurrent Propagation

no code implementations • 6 Aug 2017 • Sifei Liu, Jianping Shi, Ji Liang, Ming-Hsuan Yang

Face parsing is an important problem in computer vision that finds numerous applications including recognition and editing.

Unsupervised Domain Adaptation for Face Recognition in Unlabeled Videos

no code implementations • ICCV 2017 • Kihyuk Sohn, Sifei Liu, Guangyu Zhong, Xiang Yu, Ming-Hsuan Yang, Manmohan Chandraker

Despite rapid advances in face recognition, there remains a clear gap between the performance of still image-based face recognition and video-based face recognition, due to the vast difference in visual quality between the domains and the difficulty of curating diverse large-scale video datasets.

Video Deblurring via Semantic Segmentation and Pixel-Wise Non-Linear Kernel

no code implementations • ICCV 2017 • Wenqi Ren, Jinshan Pan, Xiaochun Cao, Ming-Hsuan Yang

We analyze the relationship between motion blur trajectory and optical flow, and present a novel pixel-wise non-linear kernel model to account for motion blur.

PiCANet: Learning Pixel-wise Contextual Attention for Saliency Detection

2 code implementations • CVPR 2018 • Nian Liu, Junwei Han, Ming-Hsuan Yang

We formulate the proposed PiCANet in both global and local forms to attend to global and local contexts, respectively.

Ranked #7 on RGB Salient Object Detection on SOC

Stylizing Face Images via Multiple Exemplars

no code implementations • 28 Aug 2017 • Yibing Song, Linchao Bao, Shengfeng He, Qingxiong Yang, Ming-Hsuan Yang

We address the problem of transferring the style of a headshot photo to face images.

Learning to Segment Instances in Videos with Spatial Propagation Network

no code implementations • 14 Sep 2017 • Jingchun Cheng, Sifei Liu, Yi-Hsuan Tsai, Wei-Chih Hung, Shalini De Mello, Jinwei Gu, Jan Kautz, Shengjin Wang, Ming-Hsuan Yang

In addition, we apply a filter on the refined score map that aims to recognize the best connected region using spatial and temporal consistencies in the video.

SegFlow: Joint Learning for Video Object Segmentation and Optical Flow

1 code implementation • ICCV 2017 • Jingchun Cheng, Yi-Hsuan Tsai, Shengjin Wang, Ming-Hsuan Yang

This paper proposes an end-to-end trainable network, SegFlow, for simultaneously predicting pixel-wise object segmentation and optical flow in videos.

Ranked #67 on Semi-Supervised Video Object Segmentation on DAVIS 2016

Referring Expression Generation and Comprehension via Attributes

no code implementations • ICCV 2017 • Jingyu Liu, Liang Wang, Ming-Hsuan Yang

In this paper, we explore the role of attributes by incorporating them into both referring expression generation and comprehension.

Blind Image Deblurring With Outlier Handling

no code implementations • ICCV 2017 • Jiangxin Dong, Jinshan Pan, Zhixun Su, Ming-Hsuan Yang

We analyze the relationship between the proposed algorithm and other blind deblurring methods with outlier handling and show how to estimate intermediate latent images for blur kernel estimation in a principled way.

Learning Discriminative Data Fitting Functions for Blind Image Deblurring

no code implementations • ICCV 2017 • Jinshan Pan, Jiangxin Dong, Yu-Wing Tai, Zhixun Su, Ming-Hsuan Yang

Solving blind image deblurring usually requires defining a data fitting function and image priors.

Learning to Super-Resolve Blurry Face and Text Images

no code implementations • ICCV 2017 • Xiangyu Xu, Deqing Sun, Jinshan Pan, Yu-Jin Zhang, Hanspeter Pfister, Ming-Hsuan Yang

We present an algorithm to directly restore a clear high-resolution image from a blurry low-resolution input.

Learning Affinity via Spatial Propagation Networks

no code implementations • NeurIPS 2017 • Sifei Liu, Shalini De Mello, Jinwei Gu, Guangyu Zhong, Ming-Hsuan Yang, Jan Kautz

Specifically, we develop a three-way connection for the linear propagation model, which (a) formulates a sparse transformation matrix, where all elements can be the output from a deep CNN, but (b) results in a dense affinity matrix that effectively models any task-specific pairwise similarity matrix.

Learning Affinity via Spatial Propagation Network

no code implementations • 3 Oct 2017 • Sifei Liu, Shalini De Mello, Jinwei Gu, Guangyu Zhong, Ming-Hsuan Yang, Jan Kautz

Specifically, we develop a three-way connection for the linear propagation model, which (a) formulates a sparse transformation matrix, where all elements can be the output from a deep CNN, but (b) results in a dense affinity matrix that effectively models any task-specific pairwise similarity matrix.

Visual Tracking via Dynamic Graph Learning

no code implementations • 4 Oct 2017 • Chenglong Li, Liang Lin, WangMeng Zuo, Jin Tang, Ming-Hsuan Yang

First, the graph is initialized by assigning binary weights of some image patches to indicate the object and background patches according to the predicted bounding box.

Fast and Accurate Image Super-Resolution with Deep Laplacian Pyramid Networks

7 code implementations • 4 Oct 2017 • Wei-Sheng Lai, Jia-Bin Huang, Narendra Ahuja, Ming-Hsuan Yang

However, existing methods often require a large number of network parameters and entail heavy computational loads at runtime for generating high-accuracy super-resolution results.

Tracking Persons-of-Interest via Unsupervised Representation Adaptation

2 code implementations • 5 Oct 2017 • Shun Zhang, Jia-Bin Huang, Jongwoo Lim, Yihong Gong, Jinjun Wang, Narendra Ahuja, Ming-Hsuan Yang

Multi-face tracking in unconstrained videos is a challenging problem as faces of one person often appear drastically different in multiple shots due to significant variations in scale, pose, expression, illumination, and make-up.

Integrating Boundary and Center Correlation Filters for Visual Tracking with Aspect Ratio Variation

1 code implementation • 5 Oct 2017 • Feng Li, Yingjie Yao, Peihua Li, David Zhang, WangMeng Zuo, Ming-Hsuan Yang

The aspect ratio variation frequently appears in visual tracking and has a severe influence on performance.

Joint Image Filtering with Deep Convolutional Networks

no code implementations • 11 Oct 2017 • Yijun Li, Jia-Bin Huang, Narendra Ahuja, Ming-Hsuan Yang

In contrast to existing methods that consider only the guidance image, the proposed algorithm can selectively transfer salient structures that are consistent with both guidance and target images.

Progressive Representation Adaptation for Weakly Supervised Object Localization

1 code implementation • 12 Oct 2017 • Dong Li, Jia-Bin Huang, Ya-Li Li, Shengjin Wang, Ming-Hsuan Yang

In classification adaptation, we transfer a pre-trained network to a multi-label classification task for recognizing the presence of a certain object in an image.

Scene Parsing with Global Context Embedding

1 code implementation • ICCV 2017 • Wei-Chih Hung, Yi-Hsuan Tsai, Xiaohui Shen, Zhe Lin, Kalyan Sunkavalli, Xin Lu, Ming-Hsuan Yang

We present a scene parsing method that utilizes global context information based on both parametric and non-parametric models.

Super SloMo: High Quality Estimation of Multiple Intermediate Frames for Video Interpolation

5 code implementations • CVPR 2018 • Huaizu Jiang, Deqing Sun, Varun Jampani, Ming-Hsuan Yang, Erik Learned-Miller, Jan Kautz

Finally, the two input images are warped and linearly fused to form each intermediate frame.
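The final fusion step can be pictured with a small sketch. It assumes the two inputs have already been warped toward the intermediate time `t` and blends them with weights `(1 - t)` and `t`; these weights, the function name, and the nested-list image layout are assumptions for illustration, and the warping itself is not shown.

```python
def fuse_linearly(warped_first, warped_second, t):
    """Blend two already-warped grayscale frames for a time t in [0, 1].

    Each frame is a list of rows of floats. At t = 0 the result equals
    the first frame, at t = 1 the second (an assumed weighting).
    """
    if not 0.0 <= t <= 1.0:
        raise ValueError("t must lie in [0, 1]")
    return [[(1.0 - t) * a + t * b for a, b in zip(row_a, row_b)]
            for row_a, row_b in zip(warped_first, warped_second)]


frame_a = [[0.0, 0.0]]
frame_b = [[10.0, 20.0]]
# Several intermediate frames between the two inputs.
intermediate = [fuse_linearly(frame_a, frame_b, t) for t in (0.25, 0.5, 0.75)]
```

Producing several intermediate frames amounts to repeating the blend for different values of `t`.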

Semi-Supervised Learning for Optical Flow with Generative Adversarial Networks

no code implementations • NeurIPS 2017 • Wei-Sheng Lai, Jia-Bin Huang, Ming-Hsuan Yang

Convolutional neural networks (CNNs) have recently been applied to the optical flow estimation problem.

Learning Binary Residual Representations for Domain-specific Video Streaming

no code implementations • 14 Dec 2017 • Yi-Hsuan Tsai, Ming-Yu Liu, Deqing Sun, Ming-Hsuan Yang, Jan Kautz

Specifically, we target a streaming setting where the videos to be streamed from a server to a client are all in the same domain and they have to be compressed to a small size for low-latency transmission.

Generative Single Image Reflection Separation

no code implementations • 12 Jan 2018 • Donghoon Lee, Ming-Hsuan Yang, Songhwai Oh

Single image reflection separation is an ill-posed problem since two scenes, a transmitted scene and a reflected scene, need to be inferred from a single observation.

Learning Video-Story Composition via Recurrent Neural Network

no code implementations • 31 Jan 2018 • Guangyu Zhong, Yi-Hsuan Tsai, Sifei Liu, Zhixun Su, Ming-Hsuan Yang

In this paper, we propose a learning-based method to compose a video-story from a group of video clips that describe an activity or experience.

A Closed-form Solution to Photorealistic Image Stylization

12 code implementations • ECCV 2018 • Yijun Li, Ming-Yu Liu, Xueting Li, Ming-Hsuan Yang, Jan Kautz

Photorealistic image stylization concerns transferring style of a reference photo to a content photo with the constraint that the stylized photo should remain photorealistic.

SPLATNet: Sparse Lattice Networks for Point Cloud Processing

2 code implementations • CVPR 2018 • Hang Su, Varun Jampani, Deqing Sun, Subhransu Maji, Evangelos Kalogerakis, Ming-Hsuan Yang, Jan Kautz

We present a network architecture for processing point clouds that directly operates on a collection of points represented as a sparse set of samples in a high-dimensional lattice.

Ranked #30 on Semantic Segmentation on ScanNet

Adversarial Learning for Semi-Supervised Semantic Segmentation

13 code implementations • ICLR 2018 • Wei-Chih Hung, Yi-Hsuan Tsai, Yan-Ting Liou, Yen-Yu Lin, Ming-Hsuan Yang

We propose a method for semi-supervised semantic segmentation using an adversarial network.

Learning to Adapt Structured Output Space for Semantic Segmentation

12 code implementations • CVPR 2018 • Yi-Hsuan Tsai, Wei-Chih Hung, Samuel Schulter, Kihyuk Sohn, Ming-Hsuan Yang, Manmohan Chandraker

In this paper, we propose an adversarial learning method for domain adaptation in the context of semantic segmentation.

Ranked #3 on Domain Adaptation on Synscapes-to-Cityscapes

Learning a Discriminative Prior for Blind Image Deblurring

no code implementations • CVPR 2018 • Lerenhan Li, Jinshan Pan, Wei-Sheng Lai, Changxin Gao, Nong Sang, Ming-Hsuan Yang

We present an effective blind image deblurring method based on a data-driven discriminative prior. Our work is motivated by the fact that a good image prior should favor clear images over blurred images. In this work, we formulate the image prior as a binary classifier, which can be realized by a deep convolutional neural network (CNN). The learned prior is able to distinguish whether an input image is clear or not. Embedded into the maximum a posteriori (MAP) framework, it helps blind deblurring in various scenarios, including natural, face, text, and low-illumination images. However, it is difficult to optimize the deblurring method with the learned image prior as it involves a non-linear CNN. Therefore, we develop an efficient numerical approach based on the half-quadratic splitting method and the gradient descent algorithm to solve the proposed model. Furthermore, the proposed model can be easily extended to non-uniform deblurring. Both qualitative and quantitative experimental results show that our method performs favorably against state-of-the-art algorithms as well as domain-specific image deblurring approaches.

Deep Semantic Face Deblurring

no code implementations • CVPR 2018 • Ziyi Shen, Wei-Sheng Lai, Tingfa Xu, Jan Kautz, Ming-Hsuan Yang

In this paper, we present an effective and efficient face deblurring algorithm by exploiting semantic cues via deep convolutional neural networks (CNNs).

Learning to Localize Sound Source in Visual Scenes

no code implementations • CVPR 2018 • Arda Senocak, Tae-Hyun Oh, Junsik Kim, Ming-Hsuan Yang, In So Kweon

We show that even with a small amount of supervision, false conclusions can be corrected and the source of sound in a visual scene can be localized effectively.

Learning Spatial-Temporal Regularized Correlation Filters for Visual Tracking

1 code implementation • CVPR 2018 • Feng Li, Cheng Tian, WangMeng Zuo, Lei Zhang, Ming-Hsuan Yang

Compared with SRDCF, STRCF with hand-crafted features provides a 5 times speedup and achieves a gain of 5.4% and 3.6% AUC score on OTB-2015 and Temple-Color, respectively.

Ranked #9 on

Visual Object Tracking

on VOT2017/18

Gated Fusion Network for Single Image Dehazing

no code implementations • CVPR 2018 • Wenqi Ren, Lin Ma, Jiawei Zhang, Jinshan Pan, Xiaochun Cao, Wei Liu, Ming-Hsuan Yang

The proposed algorithm hinges on an end-to-end trainable neural network that consists of an encoder and a decoder.

Ranked #23 on

Image Dehazing

on SOTS Outdoor

VITAL: VIsual Tracking via Adversarial Learning

no code implementations • CVPR 2018 • Yibing Song, Chao Ma, Xiaohe Wu, Lijun Gong, Linchao Bao, WangMeng Zuo, Chunhua Shen, Rynson Lau, Ming-Hsuan Yang

To augment positive samples, we use a generative network to randomly generate masks, which are applied to adaptively drop out input features to capture a variety of appearance changes.

Simultaneous Fidelity and Regularization Learning for Image Restoration

1 code implementation • 12 Apr 2018 • Dongwei Ren, WangMeng Zuo, David Zhang, Lei Zhang, Ming-Hsuan Yang

For blind deconvolution, as estimation error of blur kernel is usually introduced, the subsequent non-blind deconvolution process does not restore the latent image well.

Switchable Temporal Propagation Network

1 code implementation • ECCV 2018 • Sifei Liu, Guangyu Zhong, Shalini De Mello, Jinwei Gu, Varun Jampani, Ming-Hsuan Yang, Jan Kautz

Our approach is based on a temporal propagation network (TPN), which models the transition-related affinity between a pair of frames in a purely data-driven manner.

Correlation Tracking via Joint Discrimination and Reliability Learning

1 code implementation • CVPR 2018 • Chong Sun, Dong Wang, Huchuan Lu, Ming-Hsuan Yang

To address this issue, we propose a novel CF-based optimization problem to jointly model the discrimination and reliability information.

Learning Dual Convolutional Neural Networks for Low-Level Vision

no code implementations • CVPR 2018 • Jinshan Pan, Sifei Liu, Deqing Sun, Jiawei Zhang, Yang Liu, Jimmy Ren, Zechao Li, Jinhui Tang, Huchuan Lu, Yu-Wing Tai, Ming-Hsuan Yang

These problems usually involve the estimation of two components of the target signals: structures and details.

Learning to Deblur Images with Exemplars

no code implementations • 15 May 2018 • Jinshan Pan, Wenqi Ren, Zhe Hu, Ming-Hsuan Yang

However, existing methods are less effective as only a few edges can be restored from blurry face images for kernel estimation.

Weakly Supervised Coupled Networks for Visual Sentiment Analysis

1 code implementation • CVPR 2018 • Jufeng Yang, Dongyu She, Yu-Kun Lai, Paul L. Rosin, Ming-Hsuan Yang

The second branch utilizes both the holistic and localized information by coupling the sentiment map with deep features for robust classification.

Learning Superpixels With Segmentation-Aware Affinity Loss

no code implementations • CVPR 2018 • Wei-Chih Tu, Ming-Yu Liu, Varun Jampani, Deqing Sun, Shao-Yi Chien, Ming-Hsuan Yang, Jan Kautz

Specifically, we propose a new loss function that takes the segmentation error into account for affinity learning.

Dynamic Scene Deblurring Using Spatially Variant Recurrent Neural Networks

1 code implementation • CVPR 2018 • Jiawei Zhang, Jinshan Pan, Jimmy Ren, Yibing Song, Linchao Bao, Rynson W. H. Lau, Ming-Hsuan Yang

The proposed network is composed of three deep convolutional neural networks (CNNs) and a recurrent neural network (RNN).

Ranked #10 on

Deblurring

on RealBlur-R (trained on GoPro)

(SSIM (sRGB) metric)

Flow-Grounded Spatial-Temporal Video Prediction from Still Images

1 code implementation • ECCV 2018 • Yijun Li, Chen Fang, Jimei Yang, Zhaowen Wang, Xin Lu, Ming-Hsuan Yang

Existing video prediction methods mainly rely on observing multiple historical frames or focus on predicting the next one-frame.

Superpixel Sampling Networks

2 code implementations • ECCV 2018 • Varun Jampani, Deqing Sun, Ming-Yu Liu, Ming-Hsuan Yang, Jan Kautz

Superpixels provide an efficient low/mid-level representation of image data, which greatly reduces the number of image primitives for subsequent vision tasks.

Gated Fusion Network for Joint Image Deblurring and Super-Resolution

2 code implementations • 27 Jul 2018 • Xinyi Zhang, Hang Dong, Zhe Hu, Wei-Sheng Lai, Fei Wang, Ming-Hsuan Yang

Single-image super-resolution is a fundamental task for vision applications to enhance the image quality with respect to spatial resolution.

Learning Blind Video Temporal Consistency

1 code implementation • ECCV 2018 • Wei-Sheng Lai, Jia-Bin Huang, Oliver Wang, Eli Shechtman, Ersin Yumer, Ming-Hsuan Yang

Our method takes the original unprocessed and per-frame processed videos as inputs to produce a temporally consistent video.

Physics-Based Generative Adversarial Models for Image Restoration and Beyond

no code implementations • 2 Aug 2018 • Jinshan Pan, Jiangxin Dong, Yang Liu, Jiawei Zhang, Jimmy Ren, Jinhui Tang, Yu-Wing Tai, Ming-Hsuan Yang

We present an algorithm to directly solve numerous image restoration problems (e.g., image deblurring, image dehazing, image deraining, etc.).

Diverse Image-to-Image Translation via Disentangled Representations

7 code implementations • ECCV 2018 • Hsin-Ying Lee, Hung-Yu Tseng, Jia-Bin Huang, Maneesh Kumar Singh, Ming-Hsuan Yang

Our model takes the encoded content features extracted from a given input and the attribute vectors sampled from the attribute space to produce diverse outputs at test time.

Learning Linear Transformations for Fast Arbitrary Style Transfer

1 code implementation • 14 Aug 2018 • Xueting Li, Sifei Liu, Jan Kautz, Ming-Hsuan Yang

Recent arbitrary style transfer methods transfer second order statistics from reference image onto content image via a multiplication between content image features and a transformation matrix, which is computed from features with a pre-determined algorithm.

Deep Regression Tracking with Shrinkage Loss

1 code implementation • ECCV 2018 • Xiankai Lu, Chao Ma, Bingbing Ni, Xiaokang Yang, Ian Reid, Ming-Hsuan Yang

Regression trackers directly learn a mapping from regularly dense samples of target objects to soft labels, which are usually generated by a Gaussian function, to estimate target positions.
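The Gaussian soft labels mentioned above can be illustrated with a minimal sketch. It assumes a 2D response map centered on the target position; the function name `gaussian_soft_labels` and the `sigma` parameter are hypothetical and do not come from the paper.

```python
import math


def gaussian_soft_labels(height, width, center, sigma):
    """Build a height x width map of soft labels in (0, 1].

    The label is 1.0 at the target center and decays with squared
    distance from it, following an isotropic Gaussian (assumed form).
    """
    cy, cx = center
    return [
        [
            math.exp(-((y - cy) ** 2 + (x - cx) ** 2) / (2.0 * sigma ** 2))
            for x in range(width)
        ]
        for y in range(height)
    ]


labels = gaussian_soft_labels(5, 5, center=(2, 2), sigma=1.0)
```

A regression tracker of this kind would then fit its response map to `labels` for each densely sampled location, so samples near the target center receive labels close to 1 and distant samples receive labels close to 0.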

Learning Data Terms for Non-blind Deblurring

no code implementations • ECCV 2018 • Jiangxin Dong, Jinshan Pan, Deqing Sun, Zhixun Su, Ming-Hsuan Yang

We propose a simple and effective discriminative framework to learn data terms that can adaptively handle blurred images in the presence of severe noise and outliers.

Sub-GAN: An Unsupervised Generative Model via Subspaces

no code implementations • ECCV 2018 • Jie Liang, Jufeng Yang, Hsin-Ying Lee, Kai Wang, Ming-Hsuan Yang

The recent years have witnessed significant growth in constructing robust generative models to capture informative distributions of natural data.

DFT-based Transformation Invariant Pooling Layer for Visual Classification

no code implementations • ECCV 2018 • Jongbin Ryu, Ming-Hsuan Yang, Jongwoo Lim

The proposed methods are extensively evaluated on various classification tasks using the ImageNet, CUB 2010-2011, MIT Indoors, Caltech 101, FMD and DTD datasets.

Rendering Portraitures from Monocular Camera and Beyond

no code implementations • ECCV 2018 • Xiangyu Xu, Deqing Sun, Sifei Liu, Wenqi Ren, Yu-Jin Zhang, Ming-Hsuan Yang, Jian Sun

Specifically, we first exploit Convolutional Neural Networks to estimate the relative depth and portrait segmentation maps from a single input image.

Learning to Blend Photos

1 code implementation • ECCV 2018 • Wei-Chih Hung, Jianming Zhang, Xiaohui Shen, Zhe Lin, Joon-Young Lee, Ming-Hsuan Yang

Specifically, given a foreground image and a background image, our proposed method automatically generates a set of blending photos with scores that indicate the aesthetics quality with the proposed quality network and policy network.

Deep Attentive Tracking via Reciprocative Learning

no code implementations • NeurIPS 2018 • Shi Pu, Yibing Song, Chao Ma, Honggang Zhang, Ming-Hsuan Yang

Visual attention, derived from cognitive neuroscience, facilitates human perception on the most pertinent subset of the sensory data.

MEMC-Net: Motion Estimation and Motion Compensation Driven Neural Network for Video Interpolation and Enhancement

1 code implementation • 20 Oct 2018 • Wenbo Bao, Wei-Sheng Lai, Xiaoyun Zhang, Zhiyong Gao, Ming-Hsuan Yang

Recently, a number of data-driven frame interpolation methods based on convolutional neural networks have been proposed.

Ranked #22 on

Video Frame Interpolation

on Vimeo90K

MEMC-Net: Motion Estimation and Motion Compensation Driven Neural Network for Video Frame Interpolation and Enhancement

1 code implementation • arXiv 2018 • Wenbo Bao, Wei-Sheng Lai, Xiaoyun Zhang, Zhiyong Gao, Ming-Hsuan Yang

In this work, we propose a motion estimation and motion compensation driven neural network for video frame interpolation.

Ranked #6 on

Video Frame Interpolation

on Middlebury

A Fusion Approach for Multi-Frame Optical Flow Estimation

2 code implementations • 23 Oct 2018 • Zhile Ren, Orazio Gallo, Deqing Sun, Ming-Hsuan Yang, Erik B. Sudderth, Jan Kautz

To date, top-performing optical flow estimation methods only take pairs of consecutive frames into account.

Joint Face Hallucination and Deblurring via Structure Generation and Detail Enhancement

no code implementations • 22 Nov 2018 • Yibing Song, Jiawei Zhang, Lijun Gong, Shengfeng He, Linchao Bao, Jinshan Pan, Qingxiong Yang, Ming-Hsuan Yang

We first propose a facial component guided deep Convolutional Neural Network (CNN) to restore a coarse face image, which is denoted as the base image where the facial component is automatically generated from the input face image.

Deep Non-Blind Deconvolution via Generalized Low-Rank Approximation

no code implementations • NeurIPS 2018 • Wenqi Ren, Jiawei Zhang, Lin Ma, Jinshan Pan, Xiaochun Cao, WangMeng Zuo, Wei Liu, Ming-Hsuan Yang

In this paper, we present a deep convolutional neural network to capture the inherent properties of image degradation, which can handle different kernels and saturated pixels in a unified framework.

Context-Aware Synthesis and Placement of Object Instances

2 code implementations • NeurIPS 2018 • Donghoon Lee, Sifei Liu, Jinwei Gu, Ming-Yu Liu, Ming-Hsuan Yang, Jan Kautz

Learning to insert an object instance into an image in a semantically coherent manner is a challenging and interesting problem.

PiCANet: Pixel-wise Contextual Attention Learning for Accurate Saliency Detection

2 code implementations • 15 Dec 2018 • Nian Liu, Junwei Han, Ming-Hsuan Yang

We propose three specific formulations of the PiCANet via embedding the pixel-wise contextual attention mechanism into the pooling and convolution operations with attending to global or local contexts.

Unseen Object Segmentation in Videos via Transferable Representations

no code implementations • 8 Jan 2019 • Yi-Wen Chen, Yi-Hsuan Tsai, Chu-Ya Yang, Yen-Yu Lin, Ming-Hsuan Yang

The entire process is decomposed into two tasks: 1) solving a submodular function for selecting object-like segments, and 2) learning a CNN model with a transferable module for adapting seen categories in the source domain to the unseen target video.

Online Multi-Object Tracking with Dual Matching Attention Networks

1 code implementation • ECCV 2018 • Ji Zhu, Hua Yang, Nian Liu, Minyoung Kim, Wenjun Zhang, Ming-Hsuan Yang

In this paper, we propose an online Multi-Object Tracking (MOT) approach which integrates the merits of single object tracking and data association methods in a unified framework to handle noisy detections and frequent interactions between targets.

Ranked #5 on

Online Multi-Object Tracking

on MOT16

Putting Humans in a Scene: Learning Affordance in 3D Indoor Environments

no code implementations • CVPR 2019 • Xueting Li, Sifei Liu, Kihwan Kim, Xiaolong Wang, Ming-Hsuan Yang, Jan Kautz

In order to predict valid affordances and learn possible 3D human poses in indoor scenes, we need to understand the semantic and geometric structure of a scene as well as its potential interactions with a human.

Mode Seeking Generative Adversarial Networks for Diverse Image Synthesis

2 code implementations • CVPR 2019 • Qi Mao, Hsin-Ying Lee, Hung-Yu Tseng, Siwei Ma, Ming-Hsuan Yang

In this work, we propose a simple yet effective regularization term to address the mode collapse issue for cGANs.

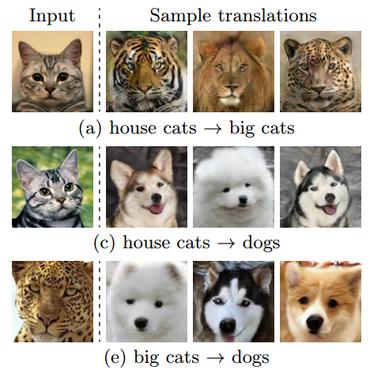

Multimodal Unsupervised Image-To-Image Translation

Multimodal Unsupervised Image-To-Image Translation

Translation

Translation

Inserting Videos into Videos

no code implementations • CVPR 2019 • Donghoon Lee, Tomas Pfister, Ming-Hsuan Yang

To synthesize a realistic video, the network renders each frame based on the current input and previous frames.

Im2Pencil: Controllable Pencil Illustration from Photographs

1 code implementation • CVPR 2019 • Yijun Li, Chen Fang, Aaron Hertzmann, Eli Shechtman, Ming-Hsuan Yang

We propose a high-quality photo-to-pencil translation method with fine-grained control over the drawing style.

Depth-Aware Video Frame Interpolation

5 code implementations • CVPR 2019 • Wenbo Bao, Wei-Sheng Lai, Chao Ma, Xiaoyun Zhang, Zhiyong Gao, Ming-Hsuan Yang

The proposed model then warps the input frames, depth maps, and contextual features based on the optical flow and local interpolation kernels for synthesizing the output frame.

Ranked #5 on

Video Frame Interpolation

on Middlebury

Res2Net: A New Multi-scale Backbone Architecture

32 code implementations • 2 Apr 2019 • Shang-Hua Gao, Ming-Ming Cheng, Kai Zhao, Xin-Yu Zhang, Ming-Hsuan Yang, Philip Torr

We evaluate the Res2Net block on all these models and demonstrate consistent performance gains over baseline models on widely-used datasets, e.g., CIFAR-100 and ImageNet.

Ranked #2 on

Image Classification

on GasHisSDB

Target-Aware Deep Tracking

no code implementations • CVPR 2019 • Xin Li, Chao Ma, Baoyuan Wu, Zhenyu He, Ming-Hsuan Yang

Despite demonstrated successes for numerous vision tasks, the contributions of using pre-trained deep features for visual tracking are not as significant as that for object recognition.

Eidetic 3D LSTM: A Model for Video Prediction and Beyond

3 code implementations • ICLR 2019 • Yunbo Wang, Lu Jiang, Ming-Hsuan Yang, Li-Jia Li, Mingsheng Long, Li Fei-Fei

We first evaluate the E3D-LSTM network on widely-used future video prediction datasets and achieve the state-of-the-art performance.

Ranked #1 on

Video Prediction

on KTH

(Cond metric)

DRIT++: Diverse Image-to-Image Translation via Disentangled Representations

4 code implementations • 2 May 2019 • Hsin-Ying Lee, Hung-Yu Tseng, Qi Mao, Jia-Bin Huang, Yu-Ding Lu, Maneesh Singh, Ming-Hsuan Yang

In this work, we present an approach based on disentangled representation for generating diverse outputs without paired training images.

SCOPS: Self-Supervised Co-Part Segmentation

1 code implementation • CVPR 2019 • Wei-Chih Hung, Varun Jampani, Sifei Liu, Pavlo Molchanov, Ming-Hsuan Yang, Jan Kautz

Parts provide a good intermediate representation of objects that is robust with respect to the camera, pose and appearance variations.

Ranked #4 on

Unsupervised Keypoint Estimation

on CUB

Few-Shot Viewpoint Estimation

no code implementations • 13 May 2019 • Hung-Yu Tseng, Shalini De Mello, Jonathan Tremblay, Sifei Liu, Stan Birchfield, Ming-Hsuan Yang, Jan Kautz

Through extensive experimentation on the ObjectNet3D and Pascal3D+ benchmark datasets, we demonstrate that our framework, which we call MetaView, significantly outperforms fine-tuning the state-of-the-art models with few examples, and that the specific architectural innovations of our method are crucial to achieving good performance.

Weakly-supervised Caricature Face Parsing through Domain Adaptation

1 code implementation • 13 May 2019 • Wenqing Chu, Wei-Chih Hung, Yi-Hsuan Tsai, Deng Cai, Ming-Hsuan Yang

However, current state-of-the-art face parsing methods require large amounts of labeled data at the pixel level, and such a labeling process for caricatures is tedious and labor-intensive.

Self-supervised Audio Spatialization with Correspondence Classifier

no code implementations • 14 May 2019 • Yu-Ding Lu, Hsin-Ying Lee, Hung-Yu Tseng, Ming-Hsuan Yang

Spatial audio is an essential medium to audiences for 3D visual and auditory experience.

An Adaptive Random Path Selection Approach for Incremental Learning

1 code implementation • 3 Jun 2019 • Jathushan Rajasegaran, Munawar Hayat, Salman Khan, Fahad Shahbaz Khan, Ling Shao, Ming-Hsuan Yang

In a conventional supervised learning setting, a machine learning model has access to examples of all object classes that are desired to be recognized during the inference stage.

Detection and Tracking of Multiple Mice Using Part Proposal Networks

no code implementations • 6 Jun 2019 • Zheheng Jiang, Zhihua Liu, Long Chen, Lei Tong, Xiangrong Zhang, Xiangyuan Lan, Danny Crookes, Ming-Hsuan Yang, Huiyu Zhou

The study of mouse social behaviours has been increasingly undertaken in neuroscience research.

Show, Match and Segment: Joint Weakly Supervised Learning of Semantic Matching and Object Co-segmentation

1 code implementation • 13 Jun 2019 • Yun-Chun Chen, Yen-Yu Lin, Ming-Hsuan Yang, Jia-Bin Huang

In contrast to existing algorithms that tackle the tasks of semantic matching and object co-segmentation in isolation, our method exploits the complementary nature of the two tasks.

Video Stitching for Linear Camera Arrays

no code implementations • 31 Jul 2019 • Wei-Sheng Lai, Orazio Gallo, Jinwei Gu, Deqing Sun, Ming-Hsuan Yang, Jan Kautz

Despite the long history of image and video stitching research, existing academic and commercial solutions still produce strong artifacts.

Joint-task Self-supervised Learning for Temporal Correspondence

2 code implementations • NeurIPS 2019 • Xueting Li, Sifei Liu, Shalini De Mello, Xiaolong Wang, Jan Kautz, Ming-Hsuan Yang

Our learning process integrates two highly related tasks: tracking large image regions and establishing fine-grained pixel-level associations between consecutive video frames.

Referring Expression Object Segmentation with Caption-Aware Consistency

1 code implementation • 10 Oct 2019 • Yi-Wen Chen, Yi-Hsuan Tsai, Tiantian Wang, Yen-Yu Lin, Ming-Hsuan Yang

To this end, we propose an end-to-end trainable comprehension network that consists of the language and visual encoders to extract feature representations from both domains.

Ranked #19 on

Referring Expression Segmentation

on RefCOCO testB

Progressive Domain Adaptation for Object Detection

1 code implementation • 24 Oct 2019 • Han-Kai Hsu, Chun-Han Yao, Yi-Hsuan Tsai, Wei-Chih Hung, Hung-Yu Tseng, Maneesh Singh, Ming-Hsuan Yang

This intermediate domain is constructed by translating the source images to mimic the ones in the target domain.

Quadratic video interpolation

1 code implementation • NeurIPS 2019 • Xiangyu Xu, Li Si-Yao, Wenxiu Sun, Qian Yin, Ming-Hsuan Yang

Video interpolation is an important problem in computer vision, which helps overcome the temporal limitation of camera sensors.

Dancing to Music

2 code implementations • NeurIPS 2019 • Hsin-Ying Lee, Xiaodong Yang, Ming-Yu Liu, Ting-Chun Wang, Yu-Ding Lu, Ming-Hsuan Yang, Jan Kautz

In the analysis phase, we decompose a dance into a series of basic dance units, through which the model learns how to move.

Ranked #3 on

Motion Synthesis

on BRACE

Learning to Localize Sound Sources in Visual Scenes: Analysis and Applications

1 code implementation • 20 Nov 2019 • Arda Senocak, Tae-Hyun Oh, Junsik Kim, Ming-Hsuan Yang, In So Kweon

Visual events are usually accompanied by sounds in our daily lives.

Adversarial Learning of Privacy-Preserving and Task-Oriented Representations

no code implementations • 22 Nov 2019 • Taihong Xiao, Yi-Hsuan Tsai, Kihyuk Sohn, Manmohan Chandraker, Ming-Hsuan Yang

For instance, there could be a potential privacy risk of machine learning systems via the model inversion attack, whose goal is to reconstruct the input data from the latent representation of deep networks.

Neural Design Network: Graphic Layout Generation with Constraints

no code implementations • ECCV 2020 • Hsin-Ying Lee, Lu Jiang, Irfan Essa, Phuong B Le, Haifeng Gong, Ming-Hsuan Yang, Weilong Yang

The first module predicts a graph with complete relations from a graph with user-specified relations.

Controllable and Progressive Image Extrapolation

no code implementations • 25 Dec 2019 • Yijun Li, Lu Jiang, Ming-Hsuan Yang

Image extrapolation aims at expanding the narrow field of view of a given image patch.

RC-DARTS: Resource Constrained Differentiable Architecture Search

no code implementations • 30 Dec 2019 • Xiaojie Jin, Jiang Wang, Joshua Slocum, Ming-Hsuan Yang, Shengyang Dai, Shuicheng Yan, Jiashi Feng

In this paper, we propose the resource constrained differentiable architecture search (RC-DARTS) method to learn architectures that are significantly smaller and faster while achieving comparable accuracy.

CrDoCo: Pixel-level Domain Transfer with Cross-Domain Consistency

no code implementations • CVPR 2019 • Yun-Chun Chen, Yen-Yu Lin, Ming-Hsuan Yang, Jia-Bin Huang

Unsupervised domain adaptation algorithms aim to transfer the knowledge learned from one domain to another (e.g., synthetic to real images).

Visual Question Answering on 360° Images

no code implementations • 10 Jan 2020 • Shih-Han Chou, Wei-Lun Chao, Wei-Sheng Lai, Min Sun, Ming-Hsuan Yang

We then study two different VQA models on VQA 360, including one conventional model that takes an equirectangular image (with intrinsic distortion) as input and one dedicated model that first projects a 360 image onto cubemaps and subsequently aggregates the information from multiple spatial resolutions.

Exploiting Semantics for Face Image Deblurring

no code implementations • 19 Jan 2020 • Ziyi Shen, Wei-Sheng Lai, Tingfa Xu, Jan Kautz, Ming-Hsuan Yang

Specifically, we first use a coarse deblurring network to reduce the motion blur on the input face image.

Cross-Domain Few-Shot Classification via Learned Feature-Wise Transformation

1 code implementation • ICLR 2020 • Hung-Yu Tseng, Hsin-Ying Lee, Jia-Bin Huang, Ming-Hsuan Yang

Few-shot classification aims to recognize novel categories with only a few labeled images in each class.

Ranked #6 on

Cross-Domain Few-Shot

on CUB

Weakly-Supervised Semantic Segmentation by Iterative Affinity Learning

no code implementations • 19 Feb 2020 • Xiang Wang, Sifei Liu, Huimin Ma, Ming-Hsuan Yang

In this paper, we propose an iterative algorithm to learn such pairwise relations, which consists of two branches, a unary segmentation network which learns the label probabilities for each pixel, and a pairwise affinity network which learns affinity matrix and refines the probability map generated from the unary network.

Structured Sparsification with Joint Optimization of Group Convolution and Channel Shuffle

1 code implementation • 19 Feb 2020 • Xin-Yu Zhang, Kai Zhao, Taihong Xiao, Ming-Ming Cheng, Ming-Hsuan Yang

Recent advances in convolutional neural networks (CNNs) usually come at the expense of excessive computational overhead and memory footprint.

Gated Fusion Network for Degraded Image Super Resolution

1 code implementation • 2 Mar 2020 • Xinyi Zhang, Hang Dong, Zhe Hu, Wei-Sheng Lai, Fei Wang, Ming-Hsuan Yang

To address this problem, we propose a dual-branch convolutional neural network to extract base features and recovered features separately.

Self-supervised Single-view 3D Reconstruction via Semantic Consistency

1 code implementation • ECCV 2020 • Xueting Li, Sifei Liu, Kihwan Kim, Shalini De Mello, Varun Jampani, Ming-Hsuan Yang, Jan Kautz

To the best of our knowledge, we are the first to try and solve the single-view reconstruction problem without a category-specific template mesh or semantic keypoints.

Learning Enriched Features for Real Image Restoration and Enhancement

12 code implementations • ECCV 2020 • Syed Waqas Zamir, Aditya Arora, Salman Khan, Munawar Hayat, Fahad Shahbaz Khan, Ming-Hsuan Yang, Ling Shao

With the goal of recovering high-quality image content from its degraded version, image restoration enjoys numerous applications, such as in surveillance, computational photography, medical imaging, and remote sensing.

Ranked #5 on

Spectral Reconstruction

on ARAD-1K

CycleISP: Real Image Restoration via Improved Data Synthesis

8 code implementations • CVPR 2020 • Syed Waqas Zamir, Aditya Arora, Salman Khan, Munawar Hayat, Fahad Shahbaz Khan, Ming-Hsuan Yang, Ling Shao

This is mainly because the AWGN is not adequate for modeling the real camera noise which is signal-dependent and heavily transformed by the camera imaging pipeline.

Ranked #10 on

Image Denoising

on DND

(using extra training data)

Collaborative Distillation for Ultra-Resolution Universal Style Transfer

1 code implementation • CVPR 2020 • Huan Wang, Yijun Li, Yuehai Wang, Haoji Hu, Ming-Hsuan Yang

In this work, we present a new knowledge distillation method (named Collaborative Distillation) for encoder-decoder based neural style transfer to reduce the convolutional filters.

Rethinking Class-Balanced Methods for Long-Tailed Visual Recognition from a Domain Adaptation Perspective

1 code implementation • CVPR 2020 • Muhammad Abdullah Jamal, Matthew Brown, Ming-Hsuan Yang, Liqiang Wang, Boqing Gong

Object frequency in the real world often follows a power law, leading to a mismatch between datasets with long-tailed class distributions seen by a machine learning model and our expectation of the model to perform well on all classes.

Ranked #27 on

Long-tail Learning

on Places-LT

TapLab: A Fast Framework for Semantic Video Segmentation Tapping into Compressed-Domain Knowledge

1 code implementation • 30 Mar 2020 • Junyi Feng, Songyuan Li, Xi Li, Fei Wu, Qi Tian, Ming-Hsuan Yang, Haibin Ling

Real-time semantic video segmentation is a challenging task due to the strict requirements of inference speed.

Deep Semantic Matching with Foreground Detection and Cycle-Consistency

no code implementations • 31 Mar 2020 • Yun-Chun Chen, Po-Hsiang Huang, Li-Yu Yu, Jia-Bin Huang, Ming-Hsuan Yang, Yen-Yu Lin

Establishing dense semantic correspondences between object instances remains a challenging problem due to background clutter, significant scale and pose differences, and large intra-class variations.

Learning to See Through Obstructions

1 code implementation • CVPR 2020 • Yu-Lun Liu, Wei-Sheng Lai, Ming-Hsuan Yang, Yung-Yu Chuang, Jia-Bin Huang

We present a learning-based approach for removing unwanted obstructions, such as window reflections, fence occlusions or raindrops, from a short sequence of images captured by a moving camera.

Single-Image HDR Reconstruction by Learning to Reverse the Camera Pipeline

1 code implementation • CVPR 2020 • Yu-Lun Liu, Wei-Sheng Lai, Yu-Sheng Chen, Yi-Lung Kao, Ming-Hsuan Yang, Yung-Yu Chuang, Jia-Bin Huang

We model the HDR-to-LDR image formation pipeline as the (1) dynamic range clipping, (2) non-linear mapping from a camera response function, and (3) quantization.
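The three-stage forward pipeline can be sketched as follows. This is only an illustration of the image formation model, not the paper's reconstruction method: the function name `hdr_to_ldr` is hypothetical, and a simple gamma curve is assumed as a stand-in for the camera response function, which in real cameras varies by device.

```python
def hdr_to_ldr(radiance, exposure=1.0, gamma=2.2, bits=8):
    """Map linear HDR values to quantized LDR integer codes.

    radiance: iterable of non-negative linear scene values.
    gamma:    assumed stand-in for the camera response function.
    """
    levels = (1 << bits) - 1  # 255 for 8-bit output
    ldr = []
    for value in radiance:
        # (1) Dynamic range clipping: values outside [0, 1] are lost.
        clipped = min(max(value * exposure, 0.0), 1.0)
        # (2) Non-linear mapping (gamma curve as an assumed response function).
        mapped = clipped ** (1.0 / gamma)
        # (3) Quantization to integer codes.
        ldr.append(round(mapped * levels))
    return ldr


codes = hdr_to_ldr([0.0, 0.25, 1.0, 5.0])
```

In this sketch the inputs 1.0 and 5.0 map to the same code, which shows why clipping destroys information that single-image HDR reconstruction has to recover.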

Regularizing Meta-Learning via Gradient Dropout

1 code implementation • 13 Apr 2020 • Hung-Yu Tseng, Yi-Wen Chen, Yi-Hsuan Tsai, Sifei Liu, Yen-Yu Lin, Ming-Hsuan Yang

With the growing attention on learning-to-learn new tasks using only a few examples, meta-learning has been widely used in numerous problems such as few-shot classification, reinforcement learning, and domain generalization.

Multi-Scale Boosted Dehazing Network with Dense Feature Fusion

1 code implementation • CVPR 2020 • Hang Dong, Jinshan Pan, Lei Xiang, Zhe Hu, Xinyi Zhang, Fei Wang, Ming-Hsuan Yang

To address the issue of preserving spatial information in the U-Net architecture, we design a dense feature fusion module using the back-projection feedback scheme.

Ranked #9 on

Image Dehazing

on Haze4k

Generalized Convolutional Forest Networks for Domain Generalization and Visual Recognition

no code implementations • ICLR 2020 • Jongbin Ryu, Gitaek Kwon, Ming-Hsuan Yang, Jongwoo Lim

When constructing random forests, it is of prime importance to ensure high accuracy and low correlation of individual tree classifiers for good performance.

WW-Nets: Dual Neural Networks for Object Detection

no code implementations • 15 May 2020 • Mohammad K. Ebrahimpour, J. Ben Falandays, Samuel Spevack, Ming-Hsuan Yang, David C. Noelle

Inspired by this structure, we have proposed an object detection framework involving the integration of a "What Network" and a "Where Network".

Ventral-Dorsal Neural Networks: Object Detection via Selective Attention

no code implementations • 15 May 2020 • Mohammad K. Ebrahimpour, Jiayun Li, Yen-Yun Yu, Jackson L. Reese, Azadeh Moghtaderi, Ming-Hsuan Yang, David C. Noelle

The coarse functional distinction between these streams is between object recognition -- the "what" of the signal -- and extracting location related information -- the "where" of the signal.

Semi-Supervised Learning with Meta-Gradient

1 code implementation • 8 Jul 2020 • Xin-Yu Zhang, Taihong Xiao, HaoLin Jia, Ming-Ming Cheng, Ming-Hsuan Yang

In this work, we propose a simple yet effective meta-learning algorithm in semi-supervised learning.

Modeling Artistic Workflows for Image Generation and Editing

1 code implementation • ECCV 2020 • Hung-Yu Tseng, Matthew Fisher, Jingwan Lu, Yijun Li, Vladimir Kim, Ming-Hsuan Yang

People often create art by following an artistic workflow involving multiple stages that inform the overall design.

Controllable Image Synthesis via SegVAE

no code implementations • ECCV 2020 • Yen-Chi Cheng, Hsin-Ying Lee, Min Sun, Ming-Hsuan Yang

We also apply an off-the-shelf image-to-image translation model to generate realistic RGB images to better understand the quality of the synthesized semantic maps.

RetrieveGAN: Image Synthesis via Differentiable Patch Retrieval

no code implementations • ECCV 2020 • Hung-Yu Tseng, Hsin-Ying Lee, Lu Jiang, Ming-Hsuan Yang, Weilong Yang

Image generation from scene description is a cornerstone technique for the controlled generation, which is beneficial to applications such as content creation and image editing.

Learnable Cost Volume Using the Cayley Representation

1 code implementation • ECCV 2020 • Taihong Xiao, Jinwei Yuan, Deqing Sun, Qifei Wang, Xin-Yu Zhang, Kehan Xu, Ming-Hsuan Yang

Cost volume is an essential component of recent deep models for optical flow estimation and is usually constructed by calculating the inner product between two feature vectors.
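
As a rough illustration of the inner-product construction mentioned in this excerpt, the sketch below builds a small cost volume with NumPy. It is not the paper's learnable variant; the function name `inner_product_cost_volume`, the `(C, H, W)` feature layout, the zero padding, and the `max_disp` search radius are all assumptions made for this example.

```python
import numpy as np


def inner_product_cost_volume(feat1, feat2, max_disp=1):
    """Plain inner-product cost volume (illustrative sketch only).

    feat1, feat2: feature maps of shape (C, H, W) for two frames.
    Returns an array of shape (D, H, W) with D = (2 * max_disp + 1) ** 2,
    one channel per candidate displacement (dy, dx).
    """
    _, h, w = feat1.shape
    # Zero-pad the second map so every displaced window stays in bounds.
    padded = np.pad(feat2, ((0, 0), (max_disp, max_disp), (max_disp, max_disp)))
    costs = []
    for dy in range(-max_disp, max_disp + 1):
        for dx in range(-max_disp, max_disp + 1):
            # shifted[:, y, x] equals feat2[:, y + dy, x + dx] (zero outside).
            shifted = padded[:, max_disp + dy:max_disp + dy + h,
                             max_disp + dx:max_disp + dx + w]
            # Inner product over the channel axis at every pixel.
            costs.append((feat1 * shifted).sum(axis=0))
    return np.stack(costs)


rng = np.random.default_rng(0)
features = rng.standard_normal((3, 4, 5))
volume = inner_product_cost_volume(features, features, max_disp=1)
print(volume.shape)
```

With `max_disp=1` the volume has nine channels, and the middle one (zero displacement) is simply the per-pixel squared norm of the features when both inputs are the same map.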

Mixup-CAM: Weakly-supervised Semantic Segmentation via Uncertainty Regularization

no code implementations • 3 Aug 2020 • Yu-Ting Chang, Qiaosong Wang, Wei-Chih Hung, Robinson Piramuthu, Yi-Hsuan Tsai, Ming-Hsuan Yang

Obtaining object response maps is one important step to achieve weakly-supervised semantic segmentation using image-level labels.

Weakly-Supervised Semantic Segmentation via Sub-category Exploration

1 code implementation • CVPR 2020 • Yu-Ting Chang, Qiaosong Wang, Wei-Chih Hung, Robinson Piramuthu, Yi-Hsuan Tsai, Ming-Hsuan Yang

Existing weakly-supervised semantic segmentation methods using image-level annotations typically rely on initial responses to locate object regions.

Spatiotemporal Contrastive Video Representation Learning

4 code implementations • CVPR 2021 • Rui Qian, Tianjian Meng, Boqing Gong, Ming-Hsuan Yang, Huisheng Wang, Serge Belongie, Yin Cui

Our representations are learned using a contrastive loss, where two augmented clips from the same short video are pulled together in the embedding space, while clips from different videos are pushed away.
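
The pull-together and push-apart behaviour described in this excerpt can be sketched with a generic InfoNCE-style loss. The excerpt only says "a contrastive loss", so the exact form below, the function name `info_nce_loss`, and the `temperature` value are assumptions for illustration, not the authors' implementation.

```python
import numpy as np


def info_nce_loss(z_a, z_b, temperature=0.1):
    """InfoNCE-style contrastive loss (illustrative sketch only).

    z_a[i] and z_b[i] are embeddings of two augmented clips from video i,
    each of shape (N, D). Clips from other videos in the batch act as negatives.
    """
    # L2-normalise so the dot product is a cosine similarity.
    z_a = z_a / np.linalg.norm(z_a, axis=1, keepdims=True)
    z_b = z_b / np.linalg.norm(z_b, axis=1, keepdims=True)
    logits = z_a @ z_b.T / temperature  # (N, N) similarity matrix
    # Subtract the row maximum for a numerically stable log-softmax.
    logits = logits - logits.max(axis=1, keepdims=True)
    log_prob = logits - np.log(np.exp(logits).sum(axis=1, keepdims=True))
    # Positive pairs sit on the diagonal; maximise their log-probability.
    return -np.mean(np.diag(log_prob))


rng = np.random.default_rng(0)
clips = rng.standard_normal((8, 16))
aligned = info_nce_loss(clips, clips)
mismatched = info_nce_loss(clips, np.roll(clips, 1, axis=0))
print(aligned < mismatched)
```

The loss is low when each clip is most similar to its partner from the same video and high when the pairing is scrambled, which is the behaviour the excerpt describes.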

Ranked #1 on Self-Supervised Action Recognition on Kinetics-600

Learning to See Through Obstructions with Layered Decomposition

1 code implementation • 11 Aug 2020 • Yu-Lun Liu, Wei-Sheng Lai, Ming-Hsuan Yang, Yung-Yu Chuang, Jia-Bin Huang

We present a learning-based approach for removing unwanted obstructions, such as window reflections, fence occlusions, or adherent raindrops, from a short sequence of images captured by a moving camera.

Learning to Caricature via Semantic Shape Transform

1 code implementation • 12 Aug 2020 • Wenqing Chu, Wei-Chih Hung, Yi-Hsuan Tsai, Yu-Ting Chang, Yijun Li, Deng Cai, Ming-Hsuan Yang

Caricature is an artistic drawing created to abstract or exaggerate facial features of a person.

SoDA: Multi-Object Tracking with Soft Data Association

no code implementations • 18 Aug 2020 • Wei-Chih Hung, Henrik Kretzschmar, Tsung-Yi Lin, Yuning Chai, Ruichi Yu, Ming-Hsuan Yang, Dragomir Anguelov

Robust multi-object tracking (MOT) is a prerequisite for a safe deployment of self-driving cars.

Every Pixel Matters: Center-aware Feature Alignment for Domain Adaptive Object Detector

1 code implementation • ECCV 2020 • Cheng-Chun Hsu, Yi-Hsuan Tsai, Yen-Yu Lin, Ming-Hsuan Yang

A domain adaptive object detector aims to adapt itself to unseen domains that may contain variations of object appearance, viewpoints or backgrounds.

Multi-path Neural Networks for On-device Multi-domain Visual Classification

no code implementations • 10 Oct 2020 • Qifei Wang, Junjie Ke, Joshua Greaves, Grace Chu, Gabriel Bender, Luciano Sbaiz, Alec Go, Andrew Howard, Feng Yang, Ming-Hsuan Yang, Jeff Gilbert, Peyman Milanfar

This approach effectively reduces the total number of parameters and FLOPS, encouraging positive knowledge transfer while mitigating negative interference across domains.

Unsupervised Domain Adaptation for Spatio-Temporal Action Localization

no code implementations • 19 Oct 2020 • Nakul Agarwal, Yi-Ting Chen, Behzad Dariush, Ming-Hsuan Yang

Spatio-temporal action localization is an important problem in computer vision that involves detecting where and when activities occur, and therefore requires modeling of both spatial and temporal features.

Continuous and Diverse Image-to-Image Translation via Signed Attribute Vectors

1 code implementation • 2 Nov 2020 • Qi Mao, Hung-Yu Tseng, Hsin-Ying Lee, Jia-Bin Huang, Siwei Ma, Ming-Hsuan Yang

Generating a smooth sequence of intermediate results bridges the gap between two different domains, facilitating the morphing effect across domains.

Shaping Deep Feature Space towards Gaussian Mixture for Visual Classification

no code implementations • 18 Nov 2020 • Weitao Wan, Jiansheng Chen, Cheng Yu, Tong Wu, Yuanyi Zhong, Ming-Hsuan Yang

In this work, we propose a Gaussian mixture (GM) loss function for deep neural networks for visual classification.

Unsupervised Discovery of Disentangled Manifolds in GANs

1 code implementation • 24 Nov 2020 • Yu-Ding Lu, Hsin-Ying Lee, Hung-Yu Tseng, Ming-Hsuan Yang

An interpretable generation process is beneficial to various image editing applications.

Online Adaptation for Consistent Mesh Reconstruction in the Wild

no code implementations • NeurIPS 2020 • Xueting Li, Sifei Liu, Shalini De Mello, Kihwan Kim, Xiaolong Wang, Ming-Hsuan Yang, Jan Kautz

This paper presents an algorithm to reconstruct temporally consistent 3D meshes of deformable object instances from videos in the wild.

D2-Net: Weakly-Supervised Action Localization via Discriminative Embeddings and Denoised Activations

1 code implementation • ICCV 2021 • Sanath Narayan, Hisham Cholakkal, Munawar Hayat, Fahad Shahbaz Khan, Ming-Hsuan Yang, Ling Shao

The proposed formulation comprises a discriminative and a denoising loss term for enhancing temporal action localization.

Ranked #3 on Weakly Supervised Action Localization on THUMOS’14

Benchmarking Ultra-High-Definition Image Super-Resolution

no code implementations • ICCV 2021 • Kaihao Zhang, Dongxu Li, Wenhan Luo, Wenqi Ren, Bjorn Stenger, Wei Liu, Hongdong Li, Ming-Hsuan Yang

Increasingly, modern mobile devices allow capturing images at Ultra-High-Definition (UHD) resolution, which includes 4K and 8K images.

Video Matting via Consistency-Regularized Graph Neural Networks

no code implementations • ICCV 2021 • Tiantian Wang, Sifei Liu, Yapeng Tian, Kai Li, Ming-Hsuan Yang

In this paper, we propose to enhance the temporal coherence by Consistency-Regularized Graph Neural Networks (CRGNN) with the aid of a synthesized video matting dataset.

Low Light Image Enhancement via Global and Local Context Modeling

no code implementations • 4 Jan 2021 • Aditya Arora, Muhammad Haris, Syed Waqas Zamir, Munawar Hayat, Fahad Shahbaz Khan, Ling Shao, Ming-Hsuan Yang

These contexts can be crucial for several image enhancement tasks, e.g., local and global contrast, brightness, and color corrections, which require cues from both local and global spatial extents.

GAN Inversion: A Survey

1 code implementation • 14 Jan 2021 • Weihao Xia, Yulun Zhang, Yujiu Yang, Jing-Hao Xue, Bolei Zhou, Ming-Hsuan Yang

GAN inversion aims to invert a given image back into the latent space of a pretrained GAN model, for the image to be faithfully reconstructed from the inverted code by the generator.

Learning Spatial and Spatio-Temporal Pixel Aggregations for Image and Video Denoising

3 code implementations • 26 Jan 2021 • Xiangyu Xu, Muchen Li, Wenxiu Sun, Ming-Hsuan Yang

We present a spatial pixel aggregation network and learn the pixel sampling and averaging strategies for image denoising.