Search Results for author: Yu Zhang

Found 522 papers, 161 papers with code

Worst-Case Linear Discriminant Analysis

no code implementations • NeurIPS 2010 • Yu Zhang, Dit-yan Yeung

In this paper, we first analyze the scatter measures used in the conventional linear discriminant analysis (LDA) model and note that the formulation is based on the average-case view.

Probabilistic Multi-Task Feature Selection

no code implementations • NeurIPS 2010 • Yu Zhang, Dit-yan Yeung, Qian Xu

In this paper, we unify the $l_{1, 2}$ and $l_{1,\infty}$ norms by considering a family of $l_{1, q}$ norms for $1 < q\le\infty$ and study the problem of determining the most appropriate sparsity enforcing norm to use in the context of multi-task feature selection.

A Convex Formulation for Learning Task Relationships in Multi-Task Learning

no code implementations • 15 Mar 2012 • Yu Zhang, Dit-yan Yeung

In this paper, we propose a regularization formulation for learning the relationships between tasks in multi-task learning.

Group Component Analysis for Multiblock Data: Common and Individual Feature Extraction

no code implementations • 17 Dec 2012 • Guoxu Zhou, Andrzej Cichocki, Yu Zhang, Danilo Mandic

Very often, the data we encounter in practice is a collection of matrices rather than a single matrix.

Ensemble of Distributed Learners for Online Classification of Dynamic Data Streams

no code implementations • 24 Aug 2013 • Luca Canzian, Yu Zhang, Mihaela van der Schaar

We present an efficient distributed online learning scheme to classify data captured from distributed, heterogeneous, and dynamic data sources.

Frequency Recognition in SSVEP-based BCI using Multiset Canonical Correlation Analysis

no code implementations • 26 Aug 2013 • Yu Zhang, Guoxu Zhou, Jing Jin, Xingyu Wang, Andrzej Cichocki

Canonical correlation analysis (CCA) has been one of the most popular methods for frequency recognition in steady-state visual evoked potential (SSVEP)-based brain-computer interfaces (BCIs).

Electricity Market Forecasting via Low-Rank Multi-Kernel Learning

no code implementations • 2 Oct 2013 • Vassilis Kekatos, Yu Zhang, Georgios B. Giannakis

The smart grid vision entails advanced information technology and data analytics to enhance the efficiency, sustainability, and economics of the power grid infrastructure.

Heterogeneous-Neighborhood-based Multi-Task Local Learning Algorithms

no code implementations • NeurIPS 2013 • Yu Zhang

In this paper, different from existing methods, we propose local learning methods for multi-task classification and regression problems based on heterogeneous neighborhood which is defined on data points from all tasks.

An Active Learning Approach for Jointly Estimating Worker Performance and Annotation Reliability with Crowdsourced Data

no code implementations • 16 Jan 2014 • Liyue Zhao, Yu Zhang, Gita Sukthankar

Crowdsourcing platforms offer a practical solution to the problem of affordably annotating large datasets for training supervised classifiers.

A Formal Analysis of Required Cooperation in Multi-agent Planning

no code implementations • 22 Apr 2014 • Yu Zhang, Subbarao Kambhampati

Then, by dividing the problems that require cooperation (referred to as RC problems) into two classes, problems with heterogeneous agents and problems with homogeneous agents, we aim to identify all the conditions that can cause RC in these two classes.

Towards Good Practices for Action Video Encoding

no code implementations • CVPR 2014 • Jianxin Wu, Yu Zhang, Weiyao Lin

High dimensional representations such as VLAD or FV have shown excellent accuracy in action recognition.

Compact Representation for Image Classification: To Choose or to Compress?

no code implementations • CVPR 2014 • Yu Zhang, Jianxin Wu, Jianfei Cai

In spite of the popularity of various feature compression methods, this paper argues that feature selection is a better choice than feature compression.

Learning of Agent Capability Models with Applications in Multi-agent Planning

no code implementations • 4 Nov 2014 • Yu Zhang, Subbarao Kambhampati

Thus far, there are two common representations of agent models, MDP-based and action-based, both of which rely on action modeling.

Plan or not: Remote Human-robot Teaming with Incomplete Task Information

no code implementations • 9 Dec 2014 • Vignesh Narayanan, Yu Zhang, Nathaniel Mendoza, Subbarao Kambhampati

While information asymmetry can sometimes be desirable, it may also lead the robot to choose improper actions that negatively affect teaming performance.

Weakly Supervised Fine-Grained Image Categorization

no code implementations • 20 Apr 2015 • Yu Zhang, Xiu-Shen Wei, Jianxin Wu, Jianfei Cai, Jiangbo Lu, Viet-Anh Nguyen, Minh N. Do

Most existing works heavily rely on object / part detectors to build the correspondence between object parts by using object or object part annotations inside training images.

Exploit Bounding Box Annotations for Multi-label Object Recognition

no code implementations • CVPR 2016 • Hao Yang, Joey Tianyi Zhou, Yu Zhang, Bin-Bin Gao, Jianxin Wu, Jianfei Cai

With strong labels, our framework is able to achieve state-of-the-art results in both datasets.

Ranked #16 on Multi-Label Classification on PASCAL VOC 2007


Semantic Object Segmentation via Detection in Weakly Labeled Video

no code implementations • CVPR 2015 • Yu Zhang, Xiaowu Chen, Jia Li, Chen Wang, Changqun Xia

Semantic object segmentation in video is an important step for large-scale multimedia analysis.

3D Reconstruction in the Presence of Glasses by Acoustic and Stereo Fusion

no code implementations • CVPR 2015 • Mao Ye, Yu Zhang, Ruigang Yang, Dinesh Manocha

We present a novel sensor fusion algorithm that first segments the depth map into different categories such as opaque/transparent/infinity (e.g., too far to measure) and then updates the depth map based on the segmentation outcome.

Linked Component Analysis from Matrices to High Order Tensors: Applications to Biomedical Data

no code implementations • 29 Aug 2015 • Guoxu Zhou, Qibin Zhao, Yu Zhang, Tülay Adalı, Shengli Xie, Andrzej Cichocki

With the increasing availability of various sensor technologies, we now have access to large amounts of multi-block (also called multi-set, multi-relational, or multi-view) data that need to be jointly analyzed to explore their latent connections.

Prediction-Adaptation-Correction Recurrent Neural Networks for Low-Resource Language Speech Recognition

no code implementations • 30 Oct 2015 • Yu Zhang, Ekapol Chuangsuwanich, James Glass, Dong Yu

In this paper, we investigate the use of prediction-adaptation-correction recurrent neural networks (PAC-RNNs) for low-resource speech recognition.

Highway Long Short-Term Memory RNNs for Distant Speech Recognition

no code implementations • 30 Oct 2015 • Yu Zhang, Guoguo Chen, Dong Yu, Kaisheng Yao, Sanjeev Khudanpur, James Glass

In this paper, we extend the deep long short-term memory (DLSTM) recurrent neural networks by introducing gated direct connections between memory cells in adjacent layers.

Plan Explicability and Predictability for Robot Task Planning

no code implementations • 25 Nov 2015 • Yu Zhang, Sarath Sreedharan, Anagha Kulkarni, Tathagata Chakraborti, Hankz Hankui Zhuo, Subbarao Kambhampati

Hence, for such agents to be helpful, one important requirement is for them to synthesize plans that can be easily understood by humans.

On Training Bi-directional Neural Network Language Model with Noise Contrastive Estimation

1 code implementation • 19 Feb 2016 • Tianxing He, Yu Zhang, Jasha Droppo, Kai Yu

We propose to train a bi-directional neural network language model (NNLM) with noise contrastive estimation (NCE).

Storm Detection by Visual Learning Using Satellite Images

no code implementations • 1 Mar 2016 • Yu Zhang, Stephen Wistar, Jia Li, Michael Steinberg, James Z. Wang

In our system, we extract and summarize important visual storm evidence from satellite image sequences in the way that meteorologists interpret the images.

Recurrent Neural Network Encoder with Attention for Community Question Answering

no code implementations • 23 Mar 2016 • Wei-Ning Hsu, Yu Zhang, James Glass

We apply a general recurrent neural network (RNN) encoder framework to community question answering (cQA) tasks.

A Deep Neural Network for Chinese Zero Pronoun Resolution

no code implementations • 20 Apr 2016 • Qingyu Yin, Wei-Nan Zhang, Yu Zhang, Ting Liu

This is because zero pronouns have no descriptive information, which makes it difficult to explicitly capture their semantic similarities with antecedents.

Neural Recovery Machine for Chinese Dropped Pronoun

no code implementations • 7 May 2016 • Wei-Nan Zhang, Ting Liu, Qingyu Yin, Yu Zhang

Dropped pronouns (DPs) are ubiquitous in pro-drop languages such as Chinese and Japanese.

Proactive Decision Support using Automated Planning

no code implementations • 24 Jun 2016 • Satya Gautam Vadlamudi, Tathagata Chakraborti, Yu Zhang, Subbarao Kambhampati

Proactive decision support (PDS) helps improve the decision-making experience of human decision makers in human-in-the-loop planning environments.

Lie-X: Depth Image Based Articulated Object Pose Estimation, Tracking, and Action Recognition on Lie Groups

no code implementations • 13 Sep 2016 • Chi Xu, Lakshmi Narasimhan Govindarajan, Yu Zhang, Li Cheng

Pose estimation, tracking, and action recognition of articulated objects from depth images are important and challenging problems, which are normally considered separately.

Latent Sequence Decompositions

no code implementations • 10 Oct 2016 • William Chan, Yu Zhang, Quoc Le, Navdeep Jaitly

We present the Latent Sequence Decompositions (LSD) framework.

Personalizing a Dialogue System with Transfer Reinforcement Learning

no code implementations • 10 Oct 2016 • Kaixiang Mo, Shuangyin Li, Yu Zhang, Jiajun Li, Qiang Yang

One way to solve this problem is to consider a collection of multiple users' data as a source domain and an individual user's data as a target domain, and to perform transfer learning from the source to the target domain.

Very Deep Convolutional Networks for End-to-End Speech Recognition

2 code implementations • 10 Oct 2016 • Yu Zhang, William Chan, Navdeep Jaitly

Sequence-to-sequence models have shown success in end-to-end speech recognition.

Explicability as Minimizing Distance from Expected Behavior

no code implementations • 16 Nov 2016 • Anagha Kulkarni, Yantian Zha, Tathagata Chakraborti, Satya Gautam Vadlamudi, Yu Zhang, Subbarao Kambhampati

In order to have effective human-AI collaboration, it is necessary to address how the AI agent's behavior is being perceived by the humans-in-the-loop.

Neural Attention for Learning to Rank Questions in Community Question Answering

no code implementations • COLING 2016 • Salvatore Romeo, Giovanni Da San Martino, Alberto Barrón-Cedeño, Alessandro Moschitti, Yonatan Belinkov, Wei-Ning Hsu, Yu Zhang, Mitra Mohtarami, James Glass

In real-world data, e.g., from Web forums, text is often contaminated with redundant or irrelevant content, which introduces noise into machine learning algorithms.

Learning to Search on Manifolds for 3D Pose Estimation of Articulated Objects

no code implementations • 2 Dec 2016 • Yu Zhang, Chi Xu, Li Cheng

This paper focuses on the challenging problem of 3D pose estimation of a diverse spectrum of articulated objects from single depth images.

Visual Compiler: Synthesizing a Scene-Specific Pedestrian Detector and Pose Estimator

no code implementations • 15 Dec 2016 • Namhoon Lee, Xinshuo Weng, Vishnu Naresh Boddeti, Yu Zhang, Fares Beainy, Kris Kitani, Takeo Kanade

We introduce the concept of a Visual Compiler that generates a scene specific pedestrian detector and pose estimator without any pedestrian observations.

Multivariate Regression with Grossly Corrupted Observations: A Robust Approach and its Applications

no code implementations • 11 Jan 2017 • Xiaowei Zhang, Chi Xu, Yu Zhang, Tingshao Zhu, Li Cheng

The implementation of our approach and comparison methods as well as the involved datasets are made publicly available in support of the open-source and reproducible research initiatives.

Plan Explanations as Model Reconciliation: Moving Beyond Explanation as Soliloquy

no code implementations • 28 Jan 2017 • Tathagata Chakraborti, Sarath Sreedharan, Yu Zhang, Subbarao Kambhampati

When AI systems interact with humans in the loop, they are often called on to provide explanations for their plans and behavior.

Sequence-based Multimodal Apprenticeship Learning For Robot Perception and Decision Making

no code implementations • 24 Feb 2017 • Fei Han, Xue Yang, Yu Zhang, Hao Zhang

Apprenticeship learning has recently attracted wide attention due to its capability of allowing robots to learn physical tasks directly from demonstrations provided by human experts.

Simultaneous Feature and Body-Part Learning for Real-Time Robot Awareness of Human Behaviors

no code implementations • 24 Feb 2017 • Fei Han, Xue Yang, Christopher Reardon, Yu Zhang, Hao Zhang

We formulate FABL as a regression-like optimization problem with structured sparsity-inducing norms to model interrelationships of body parts and features.

Learning Latent Representations for Speech Generation and Transformation

no code implementations • 13 Apr 2017 • Wei-Ning Hsu, Yu Zhang, James Glass

In this paper, we apply a convolutional VAE to model the generative process of natural speech.

Causes and Corrections for Bimodal Multipath Scanning with Structured Light

no code implementations • 8 Jun 2017 • Yu Zhang, Daniel L. Lau, Ying Yu

Structured light illumination is an active 3-D scanning technique based on projecting/capturing a set of striped patterns and measuring the warping of the patterns as they reflect off a target object's surface.

Advances in Joint CTC-Attention based End-to-End Speech Recognition with a Deep CNN Encoder and RNN-LM

6 code implementations • 8 Jun 2017 • Takaaki Hori, Shinji Watanabe, Yu Zhang, William Chan

The CTC network sits on top of the encoder and is jointly trained with the attention-based decoder.

Automatic Speech Recognition


Structured Light Phase Measuring Profilometry Pattern Design for Binary Spatial Light Modulators

no code implementations • 8 Jun 2017 • Daniel L. Lau, Yu Zhang, Kai Liu

In the case of phase measuring profilometry (PMP), the projected patterns are composed of a rolling sinusoidal wave, but as a set of time-multiplexed patterns, PMP requires the target surface to remain motionless or for scanning to be performed at such high rates that any movement is small.

What Is and What Is Not a Salient Object? Learning Salient Object Detector by Ensembling Linear Exemplar Regressors

no code implementations • CVPR 2017 • Changqun Xia, Jia Li, Xiaowu Chen, Anlin Zheng, Yu Zhang

Finding what is and what is not a salient object can be helpful in developing better features and models in salient object detection (SOD).

AI Challenges in Human-Robot Cognitive Teaming

no code implementations • 15 Jul 2017 • Tathagata Chakraborti, Subbarao Kambhampati, Matthias Scheutz, Yu Zhang

Among the many anticipated roles for robots in the future is that of being a human teammate.

Unsupervised Domain Adaptation for Robust Speech Recognition via Variational Autoencoder-Based Data Augmentation

no code implementations • 19 Jul 2017 • Wei-Ning Hsu, Yu Zhang, James Glass

Research on robust speech recognition can be regarded as trying to overcome this domain mismatch issue.

Automatic Speech Recognition


A Survey on Multi-Task Learning

1 code implementation • 25 Jul 2017 • Yu Zhang, Qiang Yang

Multi-Task Learning (MTL) is a learning paradigm in machine learning and its aim is to leverage useful information contained in multiple related tasks to help improve the generalization performance of all the tasks.

SCIR-QA at SemEval-2017 Task 3: CNN Model Based on Similar and Dissimilar Information between Keywords for Question Similarity

no code implementations • SEMEVAL 2017 • Le Qi, Yu Zhang, Ting Liu

We describe a method of calculating the similarity of questions in community QA.

Learning to Transfer

no code implementations • 18 Aug 2017 • Ying Wei, Yu Zhang, Qiang Yang

We establish the L2T framework in two stages: 1) we first learn a reflection function encrypting transfer learning skills from experiences; and 2) we infer what and how to transfer for a newly arrived pair of domains by optimizing the reflection function.

Chinese Zero Pronoun Resolution with Deep Memory Network

no code implementations • EMNLP 2017 • Qingyu Yin, Yu Zhang, Wei-Nan Zhang, Ting Liu

Existing approaches for Chinese zero pronoun resolution typically utilize only syntactical and lexical features while ignoring semantic information.

Simple Recurrent Units for Highly Parallelizable Recurrence

11 code implementations • EMNLP 2018 • Tao Lei, Yu Zhang, Sida I. Wang, Hui Dai, Yoav Artzi

Common recurrent neural architectures scale poorly due to the intrinsic difficulty in parallelizing their state computations.

Ranked #32 on Question Answering on SQuAD1.1 dev


Flexible End-to-End Dialogue System for Knowledge Grounded Conversation

no code implementations • 13 Sep 2017 • Wenya Zhu, Kaixiang Mo, Yu Zhang, Zhangbin Zhu, Xuezheng Peng, Qiang Yang

Although existing generative question answering (QA) systems can be applied to knowledge grounded conversation, they either have at most one entity in a response or cannot deal with out-of-vocabulary entities.

Unsupervised Learning of Disentangled and Interpretable Representations from Sequential Data

3 code implementations • NeurIPS 2017 • Wei-Ning Hsu, Yu Zhang, James Glass

We present a factorized hierarchical variational autoencoder, which learns disentangled and interpretable representations from sequential data without supervision.

Automatic Speech Recognition


Supervision by Fusion: Towards Unsupervised Learning of Deep Salient Object Detector

no code implementations • ICCV 2017 • Dingwen Zhang, Junwei Han, Yu Zhang

Based on this insight, we combine an intra-image fusion stream and an inter-image fusion stream in the proposed framework to generate the learning curriculum and pseudo ground-truth for supervising the training of the deep salient object detector.

Learning Graphical Models from a Distributed Stream

no code implementations • 5 Oct 2017 • Yu Zhang, Srikanta Tirthapura, Graham Cormode

We study Bayesian networks, the workhorse of graphical models, and present a communication-efficient method for continuously learning and maintaining a Bayesian network model over data that is arriving as a distributed stream partitioned across multiple processors.

Weakly-supervised Relation Extraction by Pattern-enhanced Embedding Learning

no code implementations • 9 Nov 2017 • Meng Qu, Xiang Ren, Yu Zhang, Jiawei Han

We propose a novel co-training framework with a distributional module and a pattern module.

Integrating User and Agent Models: A Deep Task-Oriented Dialogue System

no code implementations • 10 Nov 2017 • Weiyan Wang, Yuxiang Wu, Yu Zhang, Zhongqi Lu, Kaixiang Mo, Qiang Yang

Then the built user model is used as leverage to train the agent model by deep reinforcement learning.

Fine Grained Knowledge Transfer for Personalized Task-oriented Dialogue Systems

no code implementations • 11 Nov 2017 • Kaixiang Mo, Yu Zhang, Qiang Yang, Pascale Fung

Training a personalized dialogue system requires a lot of data, and the data collected for a single user is usually insufficient.

Image Matters: Visually modeling user behaviors using Advanced Model Server

no code implementations • 17 Nov 2017 • Tiezheng Ge, Liqin Zhao, Guorui Zhou, Keyu Chen, Shuying Liu, Huimin Yi, Zelin Hu, Bochao Liu, Peng Sun, Haoyu Liu, Pengtao Yi, Sui Huang, Zhiqiang Zhang, Xiaoqiang Zhu, Yu Zhang, Kun Gai

So we propose to model user preference jointly with user behavior ID features and behavior images.

Natural TTS Synthesis by Conditioning WaveNet on Mel Spectrogram Predictions

30 code implementations • 16 Dec 2017 • Jonathan Shen, Ruoming Pang, Ron J. Weiss, Mike Schuster, Navdeep Jaitly, Zongheng Yang, Zhifeng Chen, Yu Zhang, Yuxuan Wang, RJ Skerry-Ryan, Rif A. Saurous, Yannis Agiomyrgiannakis, Yonghui Wu

This paper describes Tacotron 2, a neural network architecture for speech synthesis directly from text.

Ranked #2 on Speech Synthesis on North American English


Training RNNs as Fast as CNNs

1 code implementation • ICLR 2018 • Tao Lei, Yu Zhang, Yoav Artzi

Common recurrent neural network architectures scale poorly due to the intrinsic difficulty in parallelizing their state computations.

Cross-type Biomedical Named Entity Recognition with Deep Multi-Task Learning

2 code implementations • 30 Jan 2018 • Xuan Wang, Yu Zhang, Xiang Ren, Yuhao Zhang, Marinka Zitnik, Jingbo Shang, Curtis Langlotz, Jiawei Han

Motivation: State-of-the-art biomedical named entity recognition (BioNER) systems often require handcrafted features specific to each entity type, such as genes, chemicals and diseases.

Style Tokens: Unsupervised Style Modeling, Control and Transfer in End-to-End Speech Synthesis

11 code implementations • ICML 2018 • Yuxuan Wang, Daisy Stanton, Yu Zhang, RJ Skerry-Ryan, Eric Battenberg, Joel Shor, Ying Xiao, Fei Ren, Ye Jia, Rif A. Saurous

In this work, we propose "global style tokens" (GSTs), a bank of embeddings that are jointly trained within Tacotron, a state-of-the-art end-to-end speech synthesis system.

LCMR: Local and Centralized Memories for Collaborative Filtering with Unstructured Text

no code implementations • 17 Apr 2018 • GuangNeng Hu, Yu Zhang, Qiang Yang

By modeling content information as local memories, LCMR attentively learns what to exploit with the guidance of user-item interaction.

CoNet: Collaborative Cross Networks for Cross-Domain Recommendation

1 code implementation • 18 Apr 2018 • Guang-Neng Hu, Yu Zhang, Qiang Yang

CoNet enables dual knowledge transfer across domains by introducing cross connections from one base network to another and vice versa.

Cross-domain Dialogue Policy Transfer via Simultaneous Speech-act and Slot Alignment

no code implementations • 20 Apr 2018 • Kaixiang Mo, Yu Zhang, Qiang Yang, Pascale Fung

Also, they depend on either common slots or slot entropy, which are not available when the source and target slots are totally disjoint and no database is available to calculate the slot entropy.

Expert Finding in Community Question Answering: A Review

no code implementations • 21 Apr 2018 • Sha Yuan, Yu Zhang, Jie Tang, Juan Bautista Cabotà

Moreover, we use innovative diagrams to clarify several important concepts of ensemble learning, and find that ensemble models built from several specific single models can further boost performance.

Parameter Transfer Unit for Deep Neural Networks

no code implementations • 23 Apr 2018 • Yinghua Zhang, Yu Zhang, Qiang Yang

Unfortunately, transferability is usually defined as a set of discrete states, and it differs across domains and network architectures.

Hierarchical Attention Transfer Network for Cross-Domain Sentiment Classification

1 code implementation • Thirty-Second AAAI Conference on Artificial Intelligence 2018 • Zheng Li, Ying WEI, Yu Zhang, Qiang Yang

Existing cross-domain sentiment classification methods cannot automatically capture non-pivots, i.e., the domain-specific sentiment words, and pivots, i.e., the domain-shared sentiment words, simultaneously.

Integrating Local Context and Global Cohesiveness for Open Information Extraction

1 code implementation • 26 Apr 2018 • Qi Zhu, Xiang Ren, Jingbo Shang, Yu Zhang, Ahmed El-Kishky, Jiawei Han

However, current Open IE systems focus on modeling local context information in a sentence to extract relation tuples, while ignoring the fact that global statistics in a large corpus can be collectively leveraged to identify high-quality sentence-level extractions.

Variable-fidelity expected improvement method for efficient global optimization of expensive functions

no code implementations • Structural and Multidisciplinary Optimization 2018 • Yu Zhang, Zhong-Hua Han, Ke-Shi Zhang

The efficient global optimization method (EGO) based on kriging surrogate model and expected improvement (EI) has received much attention for optimization of high-fidelity, expensive functions.

Image Co-segmentation via Multi-scale Local Shape Transfer

no code implementations • 15 May 2018 • Wei Teng, Yu Zhang, Xiaowu Chen, Jia Li, Zhiqiang He

Image co-segmentation is a challenging task in computer vision that aims to segment all pixels of the objects from a predefined semantic category.

Learning to Multitask

no code implementations • NeurIPS 2018 • Yu Zhang, Ying WEI, Qiang Yang

Based on such training set, L2MT first uses a proposed layerwise graph neural network to learn task embeddings for all the tasks in a multitask problem and then learns an estimation function to estimate the relative test error based on task embeddings and the representation of the multitask model based on a unified formulation.

Deep Reinforcement Learning for Chinese Zero pronoun Resolution

1 code implementation • ACL 2018 • Qingyu Yin, Yu Zhang, Wei-Nan Zhang, Ting Liu, William Yang Wang

In this study, we show how to integrate local and global decision-making by exploiting deep reinforcement learning models.

Transfer Learning from Speaker Verification to Multispeaker Text-To-Speech Synthesis

11 code implementations • NeurIPS 2018 • Ye Jia, Yu Zhang, Ron J. Weiss, Quan Wang, Jonathan Shen, Fei Ren, Zhifeng Chen, Patrick Nguyen, Ruoming Pang, Ignacio Lopez Moreno, Yonghui Wu

Clone a voice in 5 seconds to generate arbitrary speech in real-time

Transfer Learning via Learning to Transfer

no code implementations • ICML 2018 • Ying WEI, Yu Zhang, Junzhou Huang, Qiang Yang

In transfer learning, what and how to transfer are two primary issues to be addressed, as different transfer learning algorithms applied between a source and a target domain result in different knowledge being transferred and thereby different performance improvements in the target domain.

Back-Translation-Style Data Augmentation for End-to-End ASR

no code implementations • 28 Jul 2018 • Tomoki Hayashi, Shinji Watanabe, Yu Zhang, Tomoki Toda, Takaaki Hori, Ramon Astudillo, Kazuya Takeda

In this paper we propose a novel data augmentation method for attention-based end-to-end automatic speech recognition (E2E-ASR), utilizing a large amount of text which is not paired with speech signals.

Automatic Speech Recognition


Zero Pronoun Resolution with Attention-based Neural Network

1 code implementation • COLING 2018 • Qingyu Yin, Yu Zhang, Wei-Nan Zhang, Ting Liu, William Yang Wang

Recent neural network methods for zero pronoun resolution explore multiple models for generating representation vectors for zero pronouns and their candidate antecedents.

Semi-Supervised Training for Improving Data Efficiency in End-to-End Speech Synthesis

no code implementations • 30 Aug 2018 • Yu-An Chung, Yuxuan Wang, Wei-Ning Hsu, Yu Zhang, RJ Skerry-Ryan

We demonstrate that the proposed framework enables Tacotron to generate intelligible speech using less than half an hour of paired training data.

Human activity recognition based on time series analysis using U-Net

no code implementations • 20 Sep 2018 • Yong Zhang, Yu Zhang, Zhao Zhang, Jie Bao, Yunpeng Song

Traditional human activity recognition (HAR) based on time series adopts the sliding window analysis method.

Cross-lingual Knowledge Graph Alignment via Graph Convolutional Networks

1 code implementation • EMNLP 2018 • Zhichun Wang, Qingsong Lv, Xiaohan Lan, Yu Zhang

Embeddings can be learned from both the structural and attribute information of entities, and the results of structure embedding and attribute embedding are combined to get accurate alignments.

Ranked #5 on Entity Alignment on YAGO-WIKI50K


Top-K Influential Nodes in Social Networks: A Game Perspective

no code implementations • 14 Oct 2018 • Yu Zhang, Yan Zhang

In this paper, we study influence maximization from a game perspective.

Hierarchical Generative Modeling for Controllable Speech Synthesis

2 code implementations • ICLR 2019 • Wei-Ning Hsu, Yu Zhang, Ron J. Weiss, Heiga Zen, Yonghui Wu, Yuxuan Wang, Yuan Cao, Ye Jia, Zhifeng Chen, Jonathan Shen, Patrick Nguyen, Ruoming Pang

This paper proposes a neural sequence-to-sequence text-to-speech (TTS) model which can control latent attributes in the generated speech that are rarely annotated in the training data, such as speaking style, accent, background noise, and recording conditions.

Cycle-consistency training for end-to-end speech recognition

no code implementations • 2 Nov 2018 • Takaaki Hori, Ramon Astudillo, Tomoki Hayashi, Yu Zhang, Shinji Watanabe, Jonathan Le Roux

To solve this problem, this work presents a loss that is based on the speech encoder state sequence instead of the raw speech signal.

Automatic Speech Recognition


Modeling and Predicting Popularity Dynamics via Deep Learning Attention Mechanism

no code implementations • 6 Nov 2018 • Sha Yuan, Yu Zhang, Jie Tang, Hua-Wei Shen, Xingxing Wei

Here we propose a deep learning attention mechanism to model the process through which individual items gain their popularity.

Modeling and Predicting Citation Count via Recurrent Neural Network with Long Short-Term Memory

no code implementations • 6 Nov 2018 • Sha Yuan, Jie Tang, Yu Zhang, Yifan Wang, Tong Xiao

The rapid evolution of scientific research has been creating a huge volume of publications every year.

Digital Libraries, Physics and Society

RGB-D SLAM in Dynamic Environments Using Point Correlations

no code implementations • 8 Nov 2018 • Weichen Dai, Yu Zhang, Ping Li, Zheng Fang, Sebastian Scherer

This method utilizes the correlation between map points to separate points that are part of the static scene and points that are part of different moving objects into different groups.

Model-guided Multi-path Knowledge Aggregation for Aerial Saliency Prediction

no code implementations • 14 Nov 2018 • Kui Fu, Jia Li, Yu Zhang, Hongze Shen, Yonghong Tian

After that, the visual saliency knowledge encoded in the most representative paths is selected and aggregated to improve the capability of MM-Net in predicting spatial saliency in aerial scenarios.

Exploiting Coarse-to-Fine Task Transfer for Aspect-level Sentiment Classification

1 code implementation • AAAI 2019 • Zheng Li, Ying WEI, Yu Zhang, Xiang Zhang, Xin Li, Qiang Yang

Aspect-level sentiment classification (ASC) aims at identifying sentiment polarities towards aspects in a sentence, where the aspect can behave as a general Aspect Category (AC) or a specific Aspect Term (AT).

Bytes are All You Need: End-to-End Multilingual Speech Recognition and Synthesis with Bytes

no code implementations • 22 Nov 2018 • Bo Li, Yu Zhang, Tara Sainath, Yonghui Wu, William Chan

We present two end-to-end models: Audio-to-Byte (A2B) and Byte-to-Audio (B2A), for multilingual speech recognition and synthesis.

Complementary Segmentation of Primary Video Objects with Reversible Flows

no code implementations • 23 Nov 2018 • Jia Li, Junjie Wu, Anlin Zheng, Yafei Song, Yu Zhang, Xiaowu Chen

Segmenting primary objects in a video is an important yet challenging problem in computer vision, as it exhibits various levels of foreground/background ambiguities.

FLEET: Butterfly Estimation from a Bipartite Graph Stream

1 code implementation • 8 Dec 2018 • Seyed-Vahid Sanei-Mehri, Yu Zhang, Ahmet Erdem Sariyuce, Srikanta Tirthapura

We consider space-efficient single-pass estimation of the number of butterflies, a fundamental bipartite graph motif, from a massive bipartite graph stream where each edge represents a connection between entities in two different partitions.

Data Structures and Algorithms
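
A butterfly, as referenced in the FLEET snippet, is a 2×2 biclique: two left vertices that both connect to the same two right vertices. The sketch below makes the motif concrete with an exact, in-memory count. It is not FLEET's single-pass estimator; it only shows the quantity being estimated. The function name is hypothetical.

```python
from collections import defaultdict
from itertools import combinations
from math import comb
from typing import Iterable, Tuple


def count_butterflies(edges: Iterable[Tuple[str, str]]) -> int:
    """Exactly count butterflies (2x2 bicliques) in a bipartite graph.

    Each edge is (left_vertex, right_vertex). Two left vertices that share
    c right neighbours form C(c, 2) butterflies together.
    """
    neighbours = defaultdict(set)
    for left, right in edges:
        neighbours[left].add(right)  # sets also discard duplicate edges
    total = 0
    for u, w in combinations(neighbours, 2):
        shared = len(neighbours[u] & neighbours[w])
        total += comb(shared, 2)
    return total
```

This pairwise loop is quadratic in the number of left vertices and needs the whole graph in memory. A space-efficient, single-pass streaming estimator is designed to avoid exactly that cost.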

Fault Location in Power Distribution Systems via Deep Graph Convolutional Networks

1 code implementation • 22 Dec 2018 • Kunjin Chen, Jun Hu, Yu Zhang, Zhanqing Yu, Jinliang He

This paper develops a novel graph convolutional network (GCN) framework for fault location in power distribution networks.
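
The snippet does not say which graph-convolution layer the framework uses. The plain-Python sketch below shows the widely used propagation rule of Kipf and Welling, H' = ReLU(D^-1/2 (A + I) D^-1/2 H W). That the paper uses exactly this rule is an assumption. Treating nodes as buses that carry measurement features would also be an assumption. The helper names are hypothetical.

```python
import math
from typing import List

Matrix = List[List[float]]


def matmul(a: Matrix, b: Matrix) -> Matrix:
    """Plain nested-list matrix product."""
    return [[sum(a[i][k] * b[k][j] for k in range(len(b)))
             for j in range(len(b[0]))] for i in range(len(a))]


def normalized_adjacency(adj: Matrix) -> Matrix:
    """Return D^-1/2 (A + I) D^-1/2 for an undirected graph."""
    n = len(adj)
    a_hat = [[adj[i][j] + (1.0 if i == j else 0.0) for j in range(n)]
             for i in range(n)]  # add self-loops
    deg = [sum(row) for row in a_hat]
    return [[a_hat[i][j] / math.sqrt(deg[i] * deg[j]) for j in range(n)]
            for i in range(n)]


def gcn_layer(adj: Matrix, features: Matrix, weights: Matrix) -> Matrix:
    """One graph-convolution layer: ReLU(A_norm @ H @ W)."""
    propagated = matmul(matmul(normalized_adjacency(adj), features), weights)
    return [[max(0.0, x) for x in row] for row in propagated]
```

Each node's output mixes its own features with its neighbours' features, weighted by degree. Stacking such layers lets information from more distant nodes reach each node.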

Selectivity or Invariance: Boundary-aware Salient Object Detection

no code implementations • ICCV 2019 • Jinming Su, Jia Li, Yu Zhang, Changqun Xia, Yonghong Tian

In this network, the feature selectivity at boundaries is enhanced by incorporating a boundary localization stream, while the feature invariance at interiors is guaranteed with a complex interior perception stream.

The height problem in first passage percolation

no code implementations • 27 Dec 2018 • Yu Zhang

We consider the first passage percolation model in $\mathbb{Z}^2$ with a distribution $F$ for $0 < F(0) < p_c$.

Probability

Interactive Plan Explicability in Human-Robot Teaming

no code implementations • 17 Jan 2019 • Mehrdad Zakershahrak, Yu Zhang

Being aware of the human teammates' expectations leads to robot behaviors that better align with those expectations, thus facilitating more efficient and potentially safer teams.

Transfer Meets Hybrid: A Synthetic Approach for Cross-Domain Collaborative Filtering with Text

no code implementations • 22 Jan 2019 • Guang-Neng Hu, Yu Zhang, Qiang Yang

Another thread is to transfer knowledge from other source domains such as improving the movie recommendation with the knowledge from the book domain, leading to transfer learning methods.

Progressive Explanation Generation for Human-robot Teaming

no code implementations • 2 Feb 2019 • Yu Zhang, Mehrdad Zakershahrak

A progressive explanation improves understanding by limiting the cognitive effort required at each step of making the explanation.

Lingvo: a Modular and Scalable Framework for Sequence-to-Sequence Modeling

2 code implementations • 21 Feb 2019 • Jonathan Shen, Patrick Nguyen, Yonghui Wu, Zhifeng Chen, Mia X. Chen, Ye Jia, Anjuli Kannan, Tara Sainath, Yuan Cao, Chung-Cheng Chiu, Yanzhang He, Jan Chorowski, Smit Hinsu, Stella Laurenzo, James Qin, Orhan Firat, Wolfgang Macherey, Suyog Gupta, Ankur Bapna, Shuyuan Zhang, Ruoming Pang, Ron J. Weiss, Rohit Prabhavalkar, Qiao Liang, Benoit Jacob, Bowen Liang, HyoukJoong Lee, Ciprian Chelba, Sébastien Jean, Bo Li, Melvin Johnson, Rohan Anil, Rajat Tibrewal, Xiaobing Liu, Akiko Eriguchi, Navdeep Jaitly, Naveen Ari, Colin Cherry, Parisa Haghani, Otavio Good, Youlong Cheng, Raziel Alvarez, Isaac Caswell, Wei-Ning Hsu, Zongheng Yang, Kuan-Chieh Wang, Ekaterina Gonina, Katrin Tomanek, Ben Vanik, Zelin Wu, Llion Jones, Mike Schuster, Yanping Huang, Dehao Chen, Kazuki Irie, George Foster, John Richardson, Klaus Macherey, Antoine Bruguier, Heiga Zen, Colin Raffel, Shankar Kumar, Kanishka Rao, David Rybach, Matthew Murray, Vijayaditya Peddinti, Maxim Krikun, Michiel A. U. Bacchiani, Thomas B. Jablin, Rob Suderman, Ian Williams, Benjamin Lee, Deepti Bhatia, Justin Carlson, Semih Yavuz, Yu Zhang, Ian McGraw, Max Galkin, Qi Ge, Golan Pundak, Chad Whipkey, Todd Wang, Uri Alon, Dmitry Lepikhin, Ye Tian, Sara Sabour, William Chan, Shubham Toshniwal, Baohua Liao, Michael Nirschl, Pat Rondon

Lingvo is a TensorFlow framework offering a complete solution for collaborative deep learning research, with a particular focus on sequence-to-sequence models.

Integrating neural networks into the blind deblurring framework to compete with the end-to-end learning-based methods

no code implementations • 7 Mar 2019 • Junde Wu, Xiaoguang Di, Jiehao Huang, Yu Zhang

Recently, end-to-end learning-based methods based on deep neural networks (DNNs) have been proven effective for blind deblurring.

HLT@SUDA at SemEval 2019 Task 1: UCCA Graph Parsing as Constituent Tree Parsing

no code implementations • 11 Mar 2019 • Wei Jiang, Zhenghua Li, Yu Zhang, Min Zhang

The key idea is to convert a UCCA semantic graph into a constituent tree, in which extra labels are deliberately designed to mark remote edges and discontinuous nodes for future recovery.

Online Explanation Generation for Human-Robot Teaming

no code implementations • 15 Mar 2019 • Mehrdad Zakershahrak, Ze Gong, Nikhillesh Sadassivam, Yu Zhang

The new explanation generation methods are based on a model reconciliation setting introduced in our prior work.

LibriTTS: A Corpus Derived from LibriSpeech for Text-to-Speech

5 code implementations • 5 Apr 2019 • Heiga Zen, Viet Dang, Rob Clark, Yu Zhang, Ron J. Weiss, Ye Jia, Zhifeng Chen, Yonghui Wu

This paper introduces a new speech corpus called "LibriTTS" designed for text-to-speech use.

Sound • Audio and Speech Processing

Image Quality Assessment for Omnidirectional Cross-reference Stitching

no code implementations • 10 Apr 2019 • Kaiwen Yu, Jia Li, Yu Zhang, Yifan Zhao, Long Xu

Along with the development of virtual reality (VR), omnidirectional images play an important role in producing multimedia content with immersive experience.

Interpretable Classification from Skin Cancer Histology Slides Using Deep Learning: A Retrospective Multicenter Study

no code implementations • 12 Apr 2019 • Peizhen Xie, Ke Zuo, Yu Zhang, Fangfang Li, Mingzhu Yin, Kai Lu

To make the classifications reasonable, the CNN representations are further visualized to distinguish melanoma cells from nevus cells.

A Light Dual-Task Neural Network for Haze Removal

no code implementations • 12 Apr 2019 • Yu Zhang, Xinchao Wang, Xiaojun Bi, DaCheng Tao

In LDTNet, the haze-free image and the transmission map are produced simultaneously.

SpecAugment: A Simple Data Augmentation Method for Automatic Speech Recognition

29 code implementations • 18 Apr 2019 • Daniel S. Park, William Chan, Yu Zhang, Chung-Cheng Chiu, Barret Zoph, Ekin D. Cubuk, Quoc V. Le

On LibriSpeech, we achieve 6.8% WER on test-other without the use of a language model, and 5.8% WER with shallow fusion with a language model.

Ranked #1 on Speech Recognition on Hub5'00 SwitchBoard

Automatic Speech Recognition

Automatic Speech Recognition (ASR)
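
SpecAugment acts directly on the input spectrogram. The minimal sketch below covers two of its operations, frequency masking and time masking. The function name, the parameter names, and filling masked cells with 0.0 (which assumes mean-normalized features) are all assumptions. Time warping, the third SpecAugment operation, is left out.

```python
import random
from typing import List, Optional

Spectrogram = List[List[float]]  # indexed as [time_step][frequency_bin]


def spec_augment(spec: Spectrogram, freq_mask_param: int,
                 time_mask_param: int, num_freq_masks: int = 1,
                 num_time_masks: int = 1,
                 rng: Optional[random.Random] = None) -> Spectrogram:
    """Return a copy of `spec` with frequency and time masks applied.

    Each mask width is drawn uniformly from [0, param]; masked cells are
    set to 0.0, assuming mean-normalized input. No time warping is done.
    """
    if rng is None:
        rng = random.Random()
    out = [row[:] for row in spec]  # never mutate the caller's data
    num_steps, num_bins = len(out), len(out[0])
    for _ in range(num_freq_masks):
        width = rng.randint(0, min(freq_mask_param, num_bins))
        start = rng.randint(0, num_bins - width)
        for row in out:
            for f in range(start, start + width):
                row[f] = 0.0  # blank a band of frequency bins
    for _ in range(num_time_masks):
        width = rng.randint(0, min(time_mask_param, num_steps))
        start = rng.randint(0, num_steps - width)
        for t in range(start, start + width):
            out[t] = [0.0] * num_bins  # blank a run of time steps
    return out
```

Passing a seeded `random.Random` makes the masks reproducible. A fresh draw per utterance and epoch is what makes the operation an augmentation.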

From Abstractions to Grounded Languages for Robust Coordination of Task Planning Robots

no code implementations • 1 May 2019 • Yu Zhang

In this paper, we consider a first step to bridge a gap in coordinating task planning robots.

A Survey on Deep Learning-based Non-Invasive Brain Signals: Recent Advances and New Frontiers

no code implementations • 10 May 2019 • Xiang Zhang, Lina Yao, Xianzhi Wang, Jessica Monaghan, David Mcalpine, Yu Zhang

Brain-Computer Interface (BCI) bridges the human's neural world and the outer physical world by decoding individuals' brain signals into commands recognizable by computer devices.

HLT@SUDA at SemEval-2019 Task 1: UCCA Graph Parsing as Constituent Tree Parsing

no code implementations • SEMEVAL 2019 • Wei Jiang, Zhenghua Li, Yu Zhang, Min Zhang

The key idea is to convert a UCCA semantic graph into a constituent tree, in which extra labels are deliberately designed to mark remote edges and discontinuous nodes for future recovery.

Ranked #1 on UCCA Parsing on SemEval 2019 Task 1

Causes and Corrections for Bimodal Multi-Path Scanning With Structured Light

no code implementations • CVPR 2019 • Yu Zhang, Daniel L. Lau, Ying Yu

Structured light illumination is an active 3D scanning technique based on projecting/capturing a set of striped patterns and measuring the warping of the patterns as they reflect off a target object's surface.
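
The snippet describes measuring how projected stripe patterns warp on the target surface. One common way to quantify that warp is N-step phase shifting; it is assumed here and not taken from the snippet. The projector shows N sinusoidal patterns shifted by 2π/N, and the per-pixel phase is recovered from the N captured intensities. The sketch covers only this wrapped-phase step. It does not model the bimodal multi-path interference the paper addresses, and `wrapped_phase` is a hypothetical name.

```python
import math
from typing import Sequence


def wrapped_phase(intensities: Sequence[float]) -> float:
    """Recover the wrapped phase from N >= 3 equally phase-shifted samples.

    Assumes the n-th captured intensity follows
    I_n = A + B * cos(phi - 2*pi*n/N) and returns phi in [-pi, pi].
    """
    n_steps = len(intensities)
    if n_steps < 3:
        raise ValueError("need at least three phase-shifted patterns")
    # The offset A cancels in both sums; each sum is (N/2) * B * sin/cos(phi).
    num = sum(i * math.sin(2 * math.pi * n / n_steps)
              for n, i in enumerate(intensities))
    den = sum(i * math.cos(2 * math.pi * n / n_steps)
              for n, i in enumerate(intensities))
    return math.atan2(num, den)
```

Phase unwrapping and the triangulation from phase to depth are omitted. Under the single-path model assumed here, any N of at least 3 recovers the phase exactly from noise-free samples.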

Structure-Preserving Stereoscopic View Synthesis With Multi-Scale Adversarial Correlation Matching

no code implementations • CVPR 2019 • Yu Zhang, Dongqing Zou, Jimmy S. Ren, Zhe Jiang, Xiaohao Chen

This paper addresses stereoscopic view synthesis from a single image.

Evidence for $Z_{c}^{\pm}$ decays into the $\rho^{\pm} \eta_{c}$ final state

no code implementations • 3 Jun 2019 • M. Ablikim, M. N. Achasov, S. Ahmed, M. Albrecht, M. Alekseev, A. Amoroso, F. F. An, Q. An, Y. Bai, O. Bakina, R. Baldini Ferroli, Y. Ban, K. Begzsuren, D. W. Bennett, J. V. Bennett, N. Berger, M. Bertani, D. Bettoni, F. Bianchi, E. Boger, I. Boyko, R. A. Briere, H. Cai, X. Cai, A. Calcaterra, G. F. Cao, S. A. Cetin, J. Chai, J. F. Chang, W. L. Chang, G. Chelkov, G. Chen, H. S. Chen, J. C. Chen, M. L. Chen, P. L. Chen, S. J. Chen, X. R. Chen, Y. B. Chen, W. Cheng, X. K. Chu, G. Cibinetto, F. Cossio, H. L. Dai, J. P. Dai, A. Dbeyssi, D. Dedovich, Z. Y. Deng, A. Denig, I. Denysenko, M. Destefanis, F. DeMori, Y. Ding, C. Dong, J. Dong, L. Y. Dong, M. Y. Dong, Z. L. Dou, S. X. Du, P. F. Duan, J. Fang, S. S. Fang, Y. Fang, R. Farinelli, L. Fava, F. Feldbauer, G. Felici, C. Q. Feng, M. Fritsch, C. D. Fu, Q. Gao, X. L. Gao, Y. Gao, Y. G. Gao, Z. Gao, B. Garillon, I. Garzia, A. Gilman, K. Goetzen, L. Gong, W. X. Gong, W. Gradl, M. Greco, L. M. Gu, M. H. Gu, Y. T. Gu, A. Q. Guo, L. B. Guo, R. P. Guo, Y. P. Guo, A. Guskov, Z. Haddadi, S. Han, X. Q. Hao, F. A. Harris, K. L. He, F. H. Heinsius, T. Held, Y. K. Heng, Z. L. Hou, H. M. Hu, J. F. Hu, T. Hu, Y. Hu, G. S. Huang, J. S. Huang, X. T. Huang, X. Z. Huang, Z. L. Huang, T. Hussain, W. Ikegami Andersson, M. Irshad, Q. Ji, Q. P. Ji, X. B. Ji, X. L. Ji, H. L. Jiang, X. S. Jiang, X. Y. Jiang, J. B. Jiao, Z. Jiao, D. P. Jin, S. Jin, Y. Jin, T. Johansson, A. Julin, N. Kalantar-Nayestanaki, X. S. Kang, M. Kavatsyuk, B. C. Ke, I. K. Keshk, T. Khan, A. Khoukaz, P. Kiese, R. Kiuchi, R. Kliemt, L. Koch, O. B. Kolcu, B. Kopf, M. Kuemmel, M. Kuessner, A. Kupsc, M. Kurth, W. Kühn, J. S. Lange, P. Larin, L. Lavezzi, S. Leiber, H. Leithoff, C. Li, Cheng Li, D. M. Li, F. Li, F. Y. Li, G. Li, H. B. Li, H. J. Li, J. C. Li, J. W. Li, K. J. Li, Kang Li, Ke Li, Lei LI, P. L. Li, P. R. Li, Q. Y. Li, T. Li, W. D. Li, W. G. Li, X. L. Li, X. N. Li, X. Q. Li, Z. B. Li, H. Liang, Y. F. Liang, Y. T. Liang, G. R. Liao, L. Z. Liao, J. Libby, C. X. Lin, D. X. Lin, B. Liu, B. J. Liu, C. X. Liu, D. Liu, D. Y. Liu, F. H. Liu, Fang Liu, Feng Liu, H. B. Liu, H. L. Liu, H. M. Liu, Huanhuan Liu, Huihui Liu, J. B. Liu, J. Y. Liu, K. Y. Liu, Ke Liu, L. D. Liu, Q. Liu, S. B. Liu, X. Liu, Y. B. Liu, Z. A. Liu, Zhiqing Liu, Y. F. Long, X. C. Lou, H. J. Lu, J. G. Lu, Y. Lu, Y. P. Lu, C. L. Luo, M. X. Luo, P. W. Luo, T. Luo, X. L. Luo, S. Lusso, X. R. Lyu, F. C. Ma, H. L. Ma, L. L. Ma, M. M. Ma, Q. M. Ma, X. N. Ma, X. Y. Ma, Y. M. Ma, F. E. Maas, M. Maggiora, S. Maldaner, Q. A. Malik, A. Mangoni, Y. J. Mao, Z. P. Mao, S. Marcello, Z. X. Meng, J. G. Messchendorp, G. Mezzadri, J. Min, T. J. Min, R. E. Mitchell, X. H. Mo, Y. J. Mo, C. Morales Morales, N. Yu. Muchnoi, H. Muramatsu, A. Mustafa, S. Nakhoul, Y. Nefedov, F. Nerling, I. B. Nikolaev, Z. Ning, S. Nisar, S. L. Niu, X. Y. Niu, S. L. Olsen, Q. Ouyang, S. Pacetti, Y. Pan, M. Papenbrock, P. Patteri, M. Pelizaeus, J. Pellegrino, H. P. Peng, Z. Y. Peng, K. Peters, J. Pettersson, J. L. Ping, R. G. Ping, A. Pitka, R. Poling, V. Prasad, H. R. Qi, M. Qi, T. Y. Qi, S. Qian, C. F. Qiao, N. Qin, X. S. Qin, Z. H. Qin, J. F. Qiu, S. Q. Qu, K. H. Rashid, C. F. Redmer, M. Richter, M. Ripka, A. Rivetti, M. Rolo, G. Rong, Ch. Rosner, A. Sarantsev, M. Savrié, K. Schoenning, W. Shan, X. Y. Shan, M. Shao, C. P. Shen, P. X. Shen, X. Y. Shen, H. Y. Sheng, X. Shi, J. J. Song, W. M. Song, X. Y. Song, S. Sosio, C. Sowa, S. Spataro, F. F. Sui, G. X. Sun, J. F. Sun, L. Sun, S. S. Sun, X. H. Sun, Y. J. Sun, Y. K Sun, Y. Z. Sun, Z. J. Sun, Z. T. Sun, Y. T Tan, C. J. Tang, G. Y. Tang, X. Tang, M. Tiemens, B. Tsednee, I. Uman, B. Wang, B. L. Wang, C. W. Wang, D. Wang, D. Y. Wang, Dan Wang, H. H. Wang, K. Wang, L. L. Wang, L. S. Wang, M. Wang, Meng Wang, P. Wang, P. L. Wang, W. P. Wang, X. F. Wang, Y. Wang, Y. F. Wang, Z. Wang, Z. G. Wang, Z. Y. Wang, Zongyuan Wang, T. Weber, D. H. Wei, P. Weidenkaff, S. P. Wen, U. Wiedner, M. Wolke, L. H. Wu, L. J. Wu, Z. Wu, L. Xia, X. Xia, Y. Xia, D. Xiao, Y. J. Xiao, Z. J. Xiao, Y. G. Xie, Y. H. Xie, X. A. Xiong, Q. L. Xiu, G. F. Xu, J. J. Xu, L. Xu, Q. J. Xu, X. P. Xu, F. Yan, L. Yan, W. B. Yan, W. C. Yan, Y. H. Yan, H. J. Yang, H. X. Yang, L. Yang, R. X. Yang, S. L. Yang, Y. H. Yang, Y. X. Yang, Yifan Yang, Z. Q. Yang, M. Ye, M. H. Ye, J. H. Yin, Z. Y. You, B. X. Yu, C. X. Yu, J. S. Yu, C. Z. Yuan, Y. Yuan, A. Yuncu, A. A. Zafar, Y. Zeng, B. X. Zhang, B. Y. Zhang, C. C. Zhang, D. H. Zhang, H. H. Zhang, H. Y. Zhang, J. Zhang, J. L. Zhang, J. Q. Zhang, J. W. Zhang, J. Y. Zhang, J. Z. Zhang, K. Zhang, L. Zhang, S. F. Zhang, T. J. Zhang, X. Y. Zhang, Y. Zhang, Y. H. Zhang, Y. T. Zhang, Yang Zhang, Yao Zhang, Yu Zhang, Z. H. Zhang, Z. P. Zhang, Z. Y. Zhang, G. Zhao, J. W. Zhao, J. Y. Zhao, J. Z. Zhao, Lei Zhao, Ling Zhao, M. G. Zhao, Q. Zhao, S. J. Zhao, T. C. Zhao, Y. B. Zhao, Z. G. Zhao, A. Zhemchugov, B. Zheng, J. P. Zheng, W. J. Zheng, Y. H. Zheng, B. Zhong, L. Zhou, Q. Zhou, X. Zhou, X. K. Zhou, X. R. Zhou, X. Y. Zhou, Xiaoyu Zhou, Xu Zhou, A. N. Zhu, J. Zhu, K. Zhu, K. J. Zhu, S. Zhu, S. H. Zhu, X. L. Zhu, Y. C. Zhu, Y. S. Zhu, Z. A. Zhu, J. Zhuang, B. S. Zou, J. H. Zou

We study $e^{+}e^{-}$ collisions with a $\pi^{+}\pi^{-}\pi^{0}\eta_{c}$ final state using data samples collected with the BESIII detector at center-of-mass energies $\sqrt{s}=4.226$, $4.258$, $4.358$, $4.416$, and $4.600$ GeV.

High Energy Physics - Experiment

Federated Hierarchical Hybrid Networks for Clickbait Detection

no code implementations • 3 Jun 2019 • Feng Liao, Hankz Hankui Zhuo, Xiaoling Huang, Yu Zhang

Online media outlets adopt clickbait techniques to lure readers to click on articles in a bid to expand their reach and subsequently increase revenue through ad monetization.

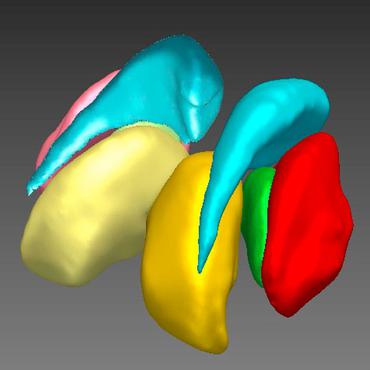

Brain Network Construction and Classification Toolbox (BrainNetClass)

1 code implementation • 17 Jun 2019 • Zhen Zhou, Xiaobo Chen, Yu Zhang, Lishan Qiao, Renping Yu, Gang Pan, Han Zhang, Dinggang Shen

The goal of this work is to introduce a toolbox namely "Brain Network Construction and Classification" (BrainNetClass) to the field to promote more advanced brain network construction methods.

Expected Sarsa($\lambda$) with Control Variate for Variance Reduction

no code implementations • 25 Jun 2019 • Long Yang, Yu Zhang, Jun Wen, Qian Zheng, Pengfei Li, Gang Pan

In this paper, to reduce the variance, we introduce the control variate technique to $\mathtt{Expected}$ $\mathtt{Sarsa}$($\lambda$) and propose a tabular $\mathtt{ES}$($\lambda$)-$\mathtt{CV}$ algorithm.

Policy Optimization with Stochastic Mirror Descent

no code implementations • 25 Jun 2019 • Long Yang, Yu Zhang, Gang Zheng, Qian Zheng, Pengfei Li, Jianhang Huang, Jun Wen, Gang Pan

Improving sample efficiency has been a longstanding goal in reinforcement learning.

Learning to Speak Fluently in a Foreign Language: Multilingual Speech Synthesis and Cross-Language Voice Cloning

4 code implementations • 9 Jul 2019 • Yu Zhang, Ron J. Weiss, Heiga Zen, Yonghui Wu, Zhifeng Chen, RJ Skerry-Ryan, Ye Jia, Andrew Rosenberg, Bhuvana Ramabhadran

We present a multispeaker, multilingual text-to-speech (TTS) synthesis model based on Tacotron that is able to produce high quality speech in multiple languages.

Discriminative Topic Mining via Category-Name Guided Text Embedding

1 code implementation • 20 Aug 2019 • Yu Meng, Jiaxin Huang, Guangyuan Wang, Zihan Wang, Chao Zhang, Yu Zhang, Jiawei Han

We propose a new task, discriminative topic mining, which leverages a set of user-provided category names to mine discriminative topics from text corpora.

Multi-Spectral Visual Odometry without Explicit Stereo Matching

no code implementations • 23 Aug 2019 • Weichen Dai, Yu Zhang, Donglei Sun, Naira Hovakimyan, Ping Li

Moreover, the proposed method can also provide a metric 3D reconstruction in semi-dense density with multi-spectral information, which is not available from existing multi-spectral methods.

Heterogeneous Domain Adaptation via Soft Transfer Network

no code implementations • 28 Aug 2019 • Yuan Yao, Yu Zhang, Xutao Li, Yunming Ye

Heterogeneous domain adaptation (HDA) aims to facilitate the learning task in a target domain by borrowing knowledge from a heterogeneous source domain.

Gradient Q$(\sigma, \lambda)$: A Unified Algorithm with Function Approximation for Reinforcement Learning

no code implementations • 6 Sep 2019 • Long Yang, Yu Zhang, Qian Zheng, Pengfei Li, Gang Pan

To address the above problem, we propose GQ$(\sigma,\lambda)$, which extends tabular Q$(\sigma,\lambda)$ with linear function approximation.
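
Tabular Q($\sigma$, $\lambda$) builds on the one-step Q($\sigma$) target, which uses $\sigma$ to interpolate between a sampled (Sarsa) backup and an expected (Expected Sarsa) backup. The minimal sketch below computes that one-step target with a linear action-value function q(s, a) = w · φ(s, a) and applies a plain semi-gradient update. It leaves out the eligibility traces ($\lambda$) and whatever gradient-TD correction the paper's GQ($\sigma$, $\lambda$) uses, so it illustrates the target only. The function names and the dictionary-based feature layout are assumptions.

```python
from typing import Dict, List, Sequence

Features = Sequence[float]


def q_value(weights: Sequence[float], features: Features) -> float:
    """Linear action-value estimate q(s, a) = w . phi(s, a)."""
    return sum(w * x for w, x in zip(weights, features))


def q_sigma_target(reward: float, gamma: float, sigma: float,
                   weights: Sequence[float],
                   next_features: Dict[str, Features],
                   next_action: str,
                   policy_probs: Dict[str, float]) -> float:
    """One-step Q(sigma) target.

    sigma = 1 gives the Sarsa (sampled) target; sigma = 0 gives the
    Expected Sarsa target under the target policy `policy_probs`.
    """
    sampled = q_value(weights, next_features[next_action])
    expected = sum(p * q_value(weights, next_features[a])
                   for a, p in policy_probs.items())
    return reward + gamma * (sigma * sampled + (1.0 - sigma) * expected)


def semi_gradient_update(weights: List[float], features: Features,
                         target: float, step_size: float) -> List[float]:
    """Linear semi-gradient TD step: w <- w + alpha * (target - q) * phi."""
    error = target - q_value(weights, features)
    return [w + step_size * error * x for w, x in zip(weights, features)]
```

Intermediate values of `sigma` trade the lower variance of the expected backup against the cheaper sampled one.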

Functional Annotation of Human Cognitive States using Graph Convolution Networks

no code implementations • NeurIPS Workshop Neuro_AI 2019 • Yu Zhang, Pierre Bellec

In this project, we applied graph convolutional networks (GCN) to decode brain activity over short time windows in a task fMRI dataset, i.e., to associate a given window of fMRI time series with the task used.

Adversarial Representation Learning for Robust Patient-Independent Epileptic Seizure Detection

1 code implementation • 18 Sep 2019 • Xiang Zhang, Lina Yao, Manqing Dong, Zhe Liu, Yu Zhang, Yong Li

Furthermore, to enhance the explainability, we develop an attention mechanism to automatically learn the importance of each EEG channel in the seizure diagnosis procedure.

Speech Recognition with Augmented Synthesized Speech

no code implementations • 25 Sep 2019 • Andrew Rosenberg, Yu Zhang, Bhuvana Ramabhadran, Ye Jia, Pedro Moreno, Yonghui Wu, Zelin Wu

Recent success of the Tacotron speech synthesis architecture and its variants in producing natural sounding multi-speaker synthesized speech has raised the exciting possibility of replacing expensive, manually transcribed, domain-specific, human speech that is used to train speech recognizers.

Knowledge Distillation from Internal Representations

no code implementations • 8 Oct 2019 • Gustavo Aguilar, Yuan Ling, Yu Zhang, Benjamin Yao, Xing Fan, Chenlei Guo

In this paper, we propose to distill the internal representations of a large model such as BERT into a simplified version of it.

End-to-End Multi-View Fusion for 3D Object Detection in LiDAR Point Clouds

no code implementations • 15 Oct 2019 • Yin Zhou, Pei Sun, Yu Zhang, Dragomir Anguelov, Jiyang Gao, Tom Ouyang, James Guo, Jiquan Ngiam, Vijay Vasudevan

In this paper, we aim to synergize the bird's-eye view and the perspective view and propose a novel end-to-end multi-view fusion (MVF) algorithm, which can effectively learn to utilize the complementary information from both.

Deep Learning for Massive MIMO with 1-Bit ADCs: When More Antennas Need Fewer Pilots

1 code implementation • 15 Oct 2019 • Yu Zhang, Muhammad Alrabeiah, Ahmed Alkhateeb

This leads to the interesting, and counter-intuitive, observation that when more antennas are employed by the massive MIMO base station, our proposed deep learning approach achieves better channel estimation performance, for the same pilot sequence length.

Information Theory • Signal Processing

HiGitClass: Keyword-Driven Hierarchical Classification of GitHub Repositories

2 code implementations • 16 Oct 2019 • Yu Zhang, Frank F. Xu, Sha Li, Yu Meng, Xuan Wang, Qi Li, Jiawei Han

With the massive number of repositories available, there is a pressing need for topic-based search.

ESPnet-TTS: Unified, Reproducible, and Integratable Open Source End-to-End Text-to-Speech Toolkit

3 code implementations • 24 Oct 2019 • Tomoki Hayashi, Ryuichi Yamamoto, Katsuki Inoue, Takenori Yoshimura, Shinji Watanabe, Tomoki Toda, Kazuya Takeda, Yu Zhang, Xu Tan

Furthermore, the unified design enables the integration of ASR functions with TTS, e.g., ASR-based objective evaluation and semi-supervised learning with both ASR and TTS models.

Automatic Speech Recognition

Automatic Speech Recognition (ASR)

Solving Optimization Problems through Fully Convolutional Networks: an Application to the Travelling Salesman Problem

no code implementations • 27 Oct 2019 • Zhengxuan Ling, Xinyu Tao, Yu Zhang, Xi Chen

Based on samples of a 10-city TSP, a fully convolutional network (FCN) is used to learn the mapping from a feasible region to an optimal solution.

Transferable End-to-End Aspect-based Sentiment Analysis with Selective Adversarial Learning

1 code implementation • IJCNLP 2019 • Zheng Li, Xin Li, Ying WEI, Lidong Bing, Yu Zhang, Qiang Yang

Joint extraction of aspects and sentiments can be effectively formulated as a sequence labeling problem.

Aspect-Based Sentiment Analysis

Aspect-Based Sentiment Analysis (ABSA)

ASVspoof 2019: A large-scale public database of synthesized, converted and replayed speech

no code implementations • 5 Nov 2019 • Xin Wang, Junichi Yamagishi, Massimiliano Todisco, Hector Delgado, Andreas Nautsch, Nicholas Evans, Md Sahidullah, Ville Vestman, Tomi Kinnunen, Kong Aik Lee, Lauri Juvela, Paavo Alku, Yu-Huai Peng, Hsin-Te Hwang, Yu Tsao, Hsin-Min Wang, Sebastien Le Maguer, Markus Becker, Fergus Henderson, Rob Clark, Yu Zhang, Quan Wang, Ye Jia, Kai Onuma, Koji Mushika, Takashi Kaneda, Yuan Jiang, Li-Juan Liu, Yi-Chiao Wu, Wen-Chin Huang, Tomoki Toda, Kou Tanaka, Hirokazu Kameoka, Ingmar Steiner, Driss Matrouf, Jean-Francois Bonastre, Avashna Govender, Srikanth Ronanki, Jing-Xuan Zhang, Zhen-Hua Ling

Spoofing attacks within a logical access (LA) scenario are generated with the latest speech synthesis and voice conversion technologies, including state-of-the-art neural acoustic and waveform model techniques.

A comparison of end-to-end models for long-form speech recognition

no code implementations • 6 Nov 2019 • Chung-Cheng Chiu, Wei Han, Yu Zhang, Ruoming Pang, Sergey Kishchenko, Patrick Nguyen, Arun Narayanan, Hank Liao, Shuyuan Zhang, Anjuli Kannan, Rohit Prabhavalkar, Zhifeng Chen, Tara Sainath, Yonghui Wu

In this paper, we both investigate and improve the performance of end-to-end models on long-form transcription.

Automatic Speech Recognition

Automatic Speech Recognition (ASR)

Scale- and Context-Aware Convolutional Non-intrusive Load Monitoring

no code implementations • 17 Nov 2019 • Kunjin Chen, Yu Zhang, Qin Wang, Jun Hu, Hang Fan, Jinliang He

Non-intrusive load monitoring addresses the challenging task of decomposing the aggregate signal of a household's electricity consumption into appliance-level data without installing dedicated meters.

FeCaffe: FPGA-enabled Caffe with OpenCL for Deep Learning Training and Inference on Intel Stratix 10

no code implementations • 18 Nov 2019 • Ke He, Bo Liu, Yu Zhang, Andrew Ling, Dian Gu

In this paper, we first propose FeCaffe, i.e., FPGA-enabled Caffe, a hierarchical software and hardware design methodology based on Caffe that enables FPGAs to support mainline deep learning development features, e.g., training and inference with Caffe.

Speech Sentiment Analysis via Pre-trained Features from End-to-end ASR Models

no code implementations • 21 Nov 2019 • Zhiyun Lu, Liangliang Cao, Yu Zhang, Chung-Cheng Chiu, James Fan

In this paper, we propose to use pre-trained features from end-to-end ASR models to solve speech sentiment analysis as a down-stream task.

Scalability in Perception for Autonomous Driving: Waymo Open Dataset

8 code implementations • CVPR 2020 • Pei Sun, Henrik Kretzschmar, Xerxes Dotiwalla, Aurelien Chouard, Vijaysai Patnaik, Paul Tsui, James Guo, Yin Zhou, Yuning Chai, Benjamin Caine, Vijay Vasudevan, Wei Han, Jiquan Ngiam, Hang Zhao, Aleksei Timofeev, Scott Ettinger, Maxim Krivokon, Amy Gao, Aditya Joshi, Sheng Zhao, Shuyang Cheng, Yu Zhang, Jonathon Shlens, Zhifeng Chen, Dragomir Anguelov

In an effort to help align the research community's contributions with real-world self-driving problems, we introduce a new large scale, high quality, diverse dataset.

SpecAugment on Large Scale Datasets

no code implementations • 11 Dec 2019 • Daniel S. Park, Yu Zhang, Chung-Cheng Chiu, Youzheng Chen, Bo Li, William Chan, Quoc V. Le, Yonghui Wu

Recently, SpecAugment, an augmentation scheme for automatic speech recognition that acts directly on the spectrogram of input utterances, has been shown to be highly effective in enhancing the performance of end-to-end networks on public datasets.

Automatic Speech Recognition

Automatic Speech Recognition (ASR)

Fully-hierarchical fine-grained prosody modeling for interpretable speech synthesis

no code implementations • 6 Feb 2020 • Guangzhi Sun, Yu Zhang, Ron J. Weiss, Yuan Cao, Heiga Zen, Yonghui Wu

This paper proposes a hierarchical, fine-grained and interpretable latent variable model for prosody based on the Tacotron 2 text-to-speech model.

Generating diverse and natural text-to-speech samples using a quantized fine-grained VAE and auto-regressive prosody prior

no code implementations • 6 Feb 2020 • Guangzhi Sun, Yu Zhang, Ron J. Weiss, Yuan Cao, Heiga Zen, Andrew Rosenberg, Bhuvana Ramabhadran, Yonghui Wu

Recent neural text-to-speech (TTS) models with fine-grained latent features enable precise control of the prosody of synthesized speech.

Automatic Speech Recognition

Automatic Speech Recognition (ASR)

MDLdroid: a ChainSGD-reduce Approach to Mobile Deep Learning for Personal Mobile Sensing

no code implementations • 7 Feb 2020 • Yu Zhang, Tao Gu, Xi Zhang

Towards pushing deep learning on devices, we present MDLdroid, a novel decentralized mobile deep learning framework to enable resource-aware on-device collaborative learning for personal mobile sensing applications.

Deep Multi-Task Augmented Feature Learning via Hierarchical Graph Neural Network

1 code implementation • 12 Feb 2020 • Pengxin Guo, Chang Deng, Linjie Xu, Xiaonan Huang, Yu Zhang

The proposed feature augmentation strategy can be used in many deep multi-task learning models.

Deep Multi-Task Learning via Generalized Tensor Trace Norm

no code implementations • 12 Feb 2020 • Yi Zhang, Yu Zhang, Wei Wang

The GTTN is defined as a convex combination of matrix trace norms of all possible tensor flattenings and hence it can discover all the possible low-rank structures.

A Simple General Approach to Balance Task Difficulty in Multi-Task Learning

no code implementations • 12 Feb 2020 • Sicong Liang, Yu Zhang

In multi-task learning, the difficulty levels of different tasks vary.

High-Order Paired-ASPP Networks for Semantic Segmentation

no code implementations • 18 Feb 2020 • Yu Zhang, Xin Sun, Junyu Dong, Changrui Chen, Yue Shen

The network first introduces a High-Order Representation module to extract the contextual high-order information from all stages of the backbone.

CODAR: A Contextual Duration-Aware Qubit Mapping for Various NISQ Devices

1 code implementation • 24 Feb 2020 • Haowei Deng, Yu Zhang, Quanxi Li

Quantum computing devices in the NISQ era share common features and challenges like limited connectivity between qubits.

Quantum Physics

Learning Beam Codebooks with Neural Networks: Towards Environment-Aware mmWave MIMO

1 code implementation • 25 Feb 2020 • Yu Zhang, Muhammad Alrabeiah, Ahmed Alkhateeb

This leads to high beam training overhead and loss in the achievable beamforming gains.

Information Theory • Signal Processing

Self-supervised Image Enhancement Network: Training with Low Light Images Only

1 code implementation • 26 Feb 2020 • Yu Zhang, Xiaoguang Di, Bin Zhang, Chunhui Wang

To achieve self-supervised learning, our model introduces a constraint that the maximum channel of the reflectance conforms to the maximum channel of the low-light image and that its entropy should be as large as possible.

Defense-PointNet: Protecting PointNet Against Adversarial Attacks

no code implementations • 27 Feb 2020 • Yu Zhang, Gongbo Liang, Tawfiq Salem, Nathan Jacobs

Despite remarkable performance across a broad range of tasks, neural networks have been shown to be vulnerable to adversarial attacks.

Joint 2D-3D Breast Cancer Classification

no code implementations • 27 Feb 2020 • Gongbo Liang, Xiaoqin Wang, Yu Zhang, Xin Xing, Hunter Blanton, Tawfiq Salem, Nathan Jacobs

Breast cancer is the malignant tumor that causes the highest number of cancer deaths in females.

2D Convolutional Neural Networks for 3D Digital Breast Tomosynthesis Classification

no code implementations • 27 Feb 2020 • Yu Zhang, Xiaoqin Wang, Hunter Blanton, Gongbo Liang, Xin Xing, Nathan Jacobs

Automated methods for breast cancer detection have focused on 2D mammography and have largely ignored 3D digital breast tomosynthesis (DBT), which is frequently used in clinical practice.

Attention-guided Chained Context Aggregation for Semantic Segmentation

3 code implementations • 27 Feb 2020 • Quan Tang, Fagui Liu, Tong Zhang, Jun Jiang, Yu Zhang

The way features propagate in Fully Convolutional Networks is critical for capturing the multi-scale contexts needed to obtain precise segmentation masks.

Ranked #23 on Semantic Segmentation on SUN-RGBD (using extra training data)

Unsupervised Domain Adaptation for Mammogram Image Classification: A Promising Tool for Model Generalization

no code implementations • 2 Mar 2020 • Yu Zhang, Gongbo Liang, Nathan Jacobs, Xiaoqin Wang

Generalization is one of the key challenges in the clinical validation and application of deep learning models to medical images.

Is POS Tagging Necessary or Even Helpful for Neural Dependency Parsing?

1 code implementation • 6 Mar 2020 • Houquan Zhou, Yu Zhang, Zhenghua Li, Min Zhang

In the pre-deep-learning era, part-of-speech tags were considered indispensable ingredients for feature engineering in dependency parsing.

Fisher Deep Domain Adaptation

1 code implementation • 12 Mar 2020 • Yinghua Zhang, Yu Zhang, Ying WEI, Kun Bai, Yangqiu Song, Qiang Yang

Though the learned representations are separable in the source domain, they usually have a large variance and samples with different class labels tend to overlap in the target domain, which yields suboptimal adaptation performance.
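
The problem described here, large within-class variance and overlapping classes, is what the classical Fisher criterion measures: compact classes with well-separated means score well. The sketch below computes the traces of the within-class and between-class scatter matrices for labelled feature vectors. It illustrates only these classical quantities. The paper's actual loss, and how it is applied to deep features during adaptation, are not given in the snippet, and all names are hypothetical.

```python
from collections import defaultdict
from typing import Dict, List, Sequence, Tuple


def mean(vectors: Sequence[Sequence[float]]) -> List[float]:
    """Component-wise mean of equal-length vectors."""
    n = len(vectors)
    return [sum(col) / n for col in zip(*vectors)]


def sq_dist(a: Sequence[float], b: Sequence[float]) -> float:
    """Squared Euclidean distance."""
    return sum((x - y) ** 2 for x, y in zip(a, b))


def fisher_scatter(features: Sequence[Sequence[float]],
                   labels: Sequence[int]) -> Tuple[float, float]:
    """Return (trace of within-class scatter, trace of between-class scatter)."""
    by_class: Dict[int, List[Sequence[float]]] = defaultdict(list)
    for x, y in zip(features, labels):
        by_class[y].append(x)
    overall = mean(features)
    within = between = 0.0
    for members in by_class.values():
        centre = mean(members)
        within += sum(sq_dist(x, centre) for x in members)  # spread inside class
        between += len(members) * sq_dist(centre, overall)  # class-mean spread
    return within, between
```

A Fisher-style objective would push `within` down and `between` up, for example by minimizing their ratio. How the two terms are actually weighted is an assumption. The two traces always sum to the total scatter around the overall mean.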

CF2-Net: Coarse-to-Fine Fusion Convolutional Network for Breast Ultrasound Image Segmentation

no code implementations • 23 Mar 2020 • Zhenyuan Ning, Ke Wang, Shengzhou Zhong, Qianjin Feng, Yu Zhang

Breast ultrasound (BUS) image segmentation plays a crucial role in a computer-aided diagnosis system, which is regarded as a useful tool to help increase the accuracy of breast cancer diagnosis.

A Streaming On-Device End-to-End Model Surpassing Server-Side Conventional Model Quality and Latency

no code implementations • 28 Mar 2020 • Tara N. Sainath, Yanzhang He, Bo Li, Arun Narayanan, Ruoming Pang, Antoine Bruguier, Shuo-Yiin Chang, Wei Li, Raziel Alvarez, Zhifeng Chen, Chung-Cheng Chiu, David Garcia, Alex Gruenstein, Ke Hu, Minho Jin, Anjuli Kannan, Qiao Liang, Ian McGraw, Cal Peyser, Rohit Prabhavalkar, Golan Pundak, David Rybach, Yuan Shangguan, Yash Sheth, Trevor Strohman, Mirko Visontai, Yonghui Wu, Yu Zhang, Ding Zhao

Thus far, end-to-end (E2E) models have not been shown to outperform state-of-the-art conventional models with respect to both quality, i.e., word error rate (WER), and latency, i.e., the time the hypothesis is finalized after the user stops speaking.

Heterogeneous Network Representation Learning: A Unified Framework with Survey and Benchmark

1 code implementation • 1 Apr 2020 • Carl Yang, Yuxin Xiao, Yu Zhang, Yizhou Sun, Jiawei Han

Since there is already a broad body of heterogeneous network embedding (HNE) algorithms, as the first contribution of this work, we provide a generic paradigm for the systematic categorization and analysis of the merits of various existing HNE algorithms.

Residual Attention U-Net for Automated Multi-Class Segmentation of COVID-19 Chest CT Images

no code implementations • 12 Apr 2020 • Xiaocong Chen, Lina Yao, Yu Zhang

The novel coronavirus disease 2019 (COVID-19) has spread rapidly around the world and has had a significant impact on public health and the economy.

Learning Event-Based Motion Deblurring

no code implementations • CVPR 2020 • Zhe Jiang, Yu Zhang, Dongqing Zou, Jimmy Ren, Jiancheng Lv, Yebin Liu

Recovering a sharp video sequence from a motion-blurred image is highly ill-posed due to the significant loss of motion information in the blurring process.

Ranked #28 on

Image Deblurring

on GoPro

(using extra training data)

Order Matters: Generating Progressive Explanations for Planning Tasks in Human-Robot Teaming

no code implementations • 16 Apr 2020 • Mehrdad Zakershahrak, Shashank Rao Marpally, Akshay Sharma, Ze Gong, Yu Zhang

Given this sequential process, a formulation based on a goal-based MDP for generating progressive explanations is presented.

Learning an Adaptive Model for Extreme Low-light Raw Image Processing

1 code implementation • 22 Apr 2020 • Qingxu Fu, Xiaoguang Di, Yu Zhang

Furthermore, those tests illustrate that the proposed method is able to adaptively control the global image brightness according to the content of the image scene.

Partially-Typed NER Datasets Integration: Connecting Practice to Theory

no code implementations • 1 May 2020 • Shi Zhi, Liyuan Liu, Yu Zhang, Shiyin Wang, Qi Li, Chao Zhang, Jiawei Han

While typical named entity recognition (NER) models require the training set to be annotated with all target types, each available dataset may cover only a subset of them.

A Large Scale Speech Sentiment Corpus

no code implementations • LREC 2020 • Eric Chen, Zhiyun Lu, Hao Xu, Liangliang Cao, Yu Zhang, James Fan

We present a multimodal corpus for sentiment analysis based on the existing Switchboard-1 Telephone Speech Corpus released by the Linguistic Data Consortium.

Minimally Supervised Categorization of Text with Metadata

1 code implementation • 1 May 2020 • Yu Zhang, Yu Meng, Jiaxin Huang, Frank F. Xu, Xuan Wang, Jiawei Han

Then, based on the same generative process, we synthesize training samples to address the bottleneck of label scarcity.

Efficient Second-Order TreeCRF for Neural Dependency Parsing

2 code implementations • ACL 2020 • Yu Zhang, Zhenghua Li, Min Zhang

Experiments and analysis on 27 datasets from 13 languages clearly show that techniques developed before the DL era, such as structural learning (global TreeCRF loss) and high-order modeling are still useful, and can further boost parsing performance over the state-of-the-art biaffine parser, especially for partially annotated training data.

Ranked #1 on

Dependency Parsing

on CoNLL-2009

ContextNet: Improving Convolutional Neural Networks for Automatic Speech Recognition with Global Context

6 code implementations • 7 May 2020 • Wei Han, Zhengdong Zhang, Yu Zhang, Jiahui Yu, Chung-Cheng Chiu, James Qin, Anmol Gulati, Ruoming Pang, Yonghui Wu

We demonstrate that on the widely used LibriSpeech benchmark, ContextNet achieves a word error rate (WER) of 2.1%/4.6% without an external language model (LM), 1.9%/4.1% with an LM, and 2.9%/7.0% with only 10M parameters on the clean/noisy LibriSpeech test sets.

Ranked #12 on

Speech Recognition

on LibriSpeech test-clean

Automatic Speech Recognition

Automatic Speech Recognition (ASR)

RNN-T Models Fail to Generalize to Out-of-Domain Audio: Causes and Solutions

no code implementations • 7 May 2020 • Chung-Cheng Chiu, Arun Narayanan, Wei Han, Rohit Prabhavalkar, Yu Zhang, Navdeep Jaitly, Ruoming Pang, Tara N. Sainath, Patrick Nguyen, Liangliang Cao, Yonghui Wu

On a long-form YouTube test set, when the non-streaming RNN-T model is trained with shorter segments of data, the proposed combination improves word error rate (WER) from 22.3% to 14.8%; when the streaming RNN-T model is trained on short Search queries, the proposed techniques improve WER on the YouTube set from 67.0% to 25.3%.

Automatic Speech Recognition

Automatic Speech Recognition (ASR)

Conformer: Convolution-augmented Transformer for Speech Recognition

24 code implementations • 16 May 2020 • Anmol Gulati, James Qin, Chung-Cheng Chiu, Niki Parmar, Yu Zhang, Jiahui Yu, Wei Han, Shibo Wang, Zhengdong Zhang, Yonghui Wu, Ruoming Pang

Recently, Transformer- and convolutional neural network (CNN)-based models have shown promising results in automatic speech recognition (ASR), outperforming recurrent neural networks (RNNs).

Ranked #12 on

Speech Recognition

on LibriSpeech test-other

(using extra training data)

Automatic Speech Recognition

Automatic Speech Recognition (ASR)

Improved Noisy Student Training for Automatic Speech Recognition

1 code implementation • 19 May 2020 • Daniel S. Park, Yu Zhang, Ye Jia, Wei Han, Chung-Cheng Chiu, Bo Li, Yonghui Wu, Quoc V. Le

Noisy student training is an iterative self-training method that leverages augmentation to improve network performance.

Ranked #5 on

Speech Recognition

on LibriSpeech test-clean

Automatic Speech Recognition

Automatic Speech Recognition (ASR)

Attention: to Better Stand on the Shoulders of Giants

no code implementations • 27 May 2020 • Sha Yuan, Zhou Shao, Yu Zhang, Xingxing Wei, Tong Xiao, Yifan Wang, Jie Tang

In the progress of science, the previously discovered knowledge principally inspires new scientific ideas, and citation is a reasonably good reflection of this cumulative nature of scientific research.

M2Net: Multi-modal Multi-channel Network for Overall Survival Time Prediction of Brain Tumor Patients

1 code implementation • 1 Jun 2020 • Tao Zhou, Huazhu Fu, Yu Zhang, Changqing Zhang, Xiankai Lu, Jianbing Shen, Ling Shao

Then, we use a modality-specific network to extract implicit and high-level features from different MR scans.

Distant Transfer Learning via Deep Random Walk

no code implementations • 13 Jun 2020 • Qiao Xiao, Yu Zhang

Transfer learning, which aims to improve learning performance in the target domain by leveraging useful knowledge from the source domain, often requires the two domains to be very close, which limits its application scope.

Momentum Contrastive Learning for Few-Shot COVID-19 Diagnosis from Chest CT Images

no code implementations • 16 Jun 2020 • Xiaocong Chen, Lina Yao, Tao Zhou, Jinming Dong, Yu Zhang

Diagnosis from chest CT images is a promising direction.

Neural Networks Based Beam Codebooks: Learning mmWave Massive MIMO Beams that Adapt to Deployment and Hardware

1 code implementation • 25 Jun 2020 • Muhammad Alrabeiah, Yu Zhang, Ahmed Alkhateeb

To overcome these limitations, this paper develops an efficient online machine learning framework that learns how to adapt the codebook beam patterns to the specific deployment, surrounding environment, user distribution, and hardware characteristics.

Offline Handwritten Chinese Text Recognition with Convolutional Neural Networks

1 code implementation • 28 Jun 2020 • Brian Liu, Xianchao Xu, Yu Zhang

Deep learning-based methods have come to dominate text recognition tasks in diverse and multilingual scenarios.

A Multi-spectral Dataset for Evaluating Motion Estimation Systems

1 code implementation • 1 Jul 2020 • Weichen Dai, Yu Zhang, Shenzhou Chen, Donglei Sun, Da Kong

The multi-spectral images, including both color and thermal images at full sensor resolution (640 × 480), are obtained from a standard camera and a long-wave infrared camera at 32 Hz with hardware synchronization.

Allocation of Multi-Robot Tasks with Task Variants

no code implementations • 1 Jul 2020 • Zakk Giacometti, Yu Zhang

We refer to this new problem as the multi-robot task allocation problem with task variants.

Multi-Site Infant Brain Segmentation Algorithms: The iSeg-2019 Challenge

no code implementations • 4 Jul 2020 • Yue Sun, Kun Gao, Zhengwang Wu, Zhihao Lei, Ying WEI, Jun Ma, Xiaoping Yang, Xue Feng, Li Zhao, Trung Le Phan, Jitae Shin, Tao Zhong, Yu Zhang, Lequan Yu, Caizi Li, Ramesh Basnet, M. Omair Ahmad, M. N. S. Swamy, Wenao Ma, Qi Dou, Toan Duc Bui, Camilo Bermudez Noguera, Bennett Landman, Ian H. Gotlib, Kathryn L. Humphreys, Sarah Shultz, Longchuan Li, Sijie Niu, Weili Lin, Valerie Jewells, Gang Li, Dinggang Shen, Li Wang

Deep learning-based methods have achieved state-of-the-art performance; however, one of their major limitations is that they may suffer from the multi-site issue, that is, models trained on a dataset from one site may not be applicable to datasets acquired from other sites with different imaging protocols or scanners.

Hierarchical Topic Mining via Joint Spherical Tree and Text Embedding

1 code implementation • 18 Jul 2020 • Yu Meng, Yunyi Zhang, Jiaxin Huang, Yu Zhang, Chao Zhang, Jiawei Han

Mining a set of meaningful topics organized into a hierarchy is intuitively appealing since topic correlations are ubiquitous in massive text corpora.

Ranked #1 on

Topic Models

on Arxiv HEP-TH citation graph

Deep Image Clustering with Category-Style Representation

1 code implementation • ECCV 2020 • Junjie Zhao, Donghuan Lu, Kai Ma, Yu Zhang, Yefeng Zheng

In this paper, we propose a novel deep image clustering framework to learn a category-style latent representation in which the category information is disentangled from image style and can be directly used as the cluster assignment.

A Study on Evaluation Standard for Automatic Crack Detection Regard the Random Fractal

no code implementations • 23 Jul 2020 • Hongyu Li, Jihe Wang, Yu Zhang, Zi-Rui Wang, Tiejun Wang

In CovEval, a different matching process based on the idea of covering box matching is adopted for this issue.

COMET: Convolutional Dimension Interaction for Collaborative Filtering

no code implementations • 28 Jul 2020 • Zhuoyi Lin, Lei Feng, Xingzhi Guo, Yu Zhang, Rui Yin, Chee Keong Kwoh, Chi Xu

In this paper, we propose a novel representation learning-based model called COMET (COnvolutional diMEnsion inTeraction), which simultaneously models the high-order interaction patterns among historical interactions and embedding dimensions.

Multi-source Heterogeneous Domain Adaptation with Conditional Weighting Adversarial Network

1 code implementation • 6 Aug 2020 • Yuan Yao, Xutao Li, Yu Zhang, Yunming Ye

In reality, however, it is not uncommon to obtain samples from multiple heterogeneous domains.

MiNet: Mixed Interest Network for Cross-Domain Click-Through Rate Prediction

1 code implementation • 7 Aug 2020 • Wentao Ouyang, Xiuwu Zhang, Lei Zhao, Jinmei Luo, Yu Zhang, Heng Zou, Zhaojie Liu, Yanlong Du

Our study is based on UC Toutiao (a news feed service integrated with the UC Browser App, serving hundreds of millions of users daily), where the source domain is the news and the target domain is the ad.

Fast and Accurate Neural CRF Constituency Parsing